Structural Biochemistry/Volume 4

Translational science is scientific research whose foundation is helping and improving people’s lives. The term is used mostly in clinical science, where it refers to work that improves people’s health, such as advances in medical technology or drug development.

Examples of Application

Pathologists have long noticed that cholesterol is present in unhealthy arteries. In the 1960s, epidemiological studies showed a correlation between serum cholesterol and coronary heart disease. In the 1980s, inhibitors of HMG-CoA reductase (statins) came onto the market. These drugs were created using biochemical knowledge of the pathways for cholesterol synthesis and transport. Clinical trials were then performed to collect safety and efficacy data. Once the safety and effectiveness of the drugs were confirmed, physicians and the public were educated about them, and the drugs became widely used. All of this contributed to a reduction in deaths caused by coronary heart disease. Considering that heart disease is the leading cause of death in the world, this example demonstrates how scientific knowledge can be used to create drugs that improve human health and reduce mortality. Other examples of how scientific knowledge has affected the clinical environment are “inhibitors of angiotensin-converting enzyme, inhibitors of oncogenic tyrosine kinases, and insulin derivatives with more favorable pharmacokinetic profiles”.

Glutamine as a Therapeutic Target in Cancer

Another example of translational science in action is the discovery that certain cancers show a considerably high rate of glutamine metabolism. In addition, glutamine has been shown to be integral to metabolic and protein functions in cancer cells. Drugs designed to reduce glutamine uptake in cancer cells could therefore provide new cancer therapeutics. In fact, several drugs have been developed that suppress glutamine uptake. L-γ-glutamyl-p-nitroanilide (GPNA) is one example: it is an SLC1A5 inhibitor that blocks glutamine uptake in glutamine-dependent cancer cells and thereby inhibits activation of the mammalian target of rapamycin complex 1 (mTORC1), which regulates cell growth and protein synthesis. However, more research is being done to develop a drug that specifically targets cancer cells without damaging normal cells. Translational science has also led to an FDA-approved drug, phenylbutyrate (Buphenyl [Ucyclyd Pharma] or Ammonaps [Swedish Orphan]), that leads to a major reduction of glutamine levels in blood plasma. L-asparaginase (Elspar [Merck & Co. Inc.]) has also been shown to decrease glutamine levels, but it is extremely toxic in adults. Because cancer is a major disease that continues to plague our society, this example illustrates the importance of translational science in developing new therapeutics to help patients fight cancer. It also illustrates that ongoing research to improve drug development is a necessary part of science’s effort to better our lives.

Medication for Arthritis Pain

Advances in biochemical knowledge and its complementary technology have also produced a new way to fight arthritis pain. The pain resulting from arthritis is commonly treated with non-steroidal anti-inflammatory drugs (NSAIDs) such as aspirin and ibuprofen (Advil). However, most of these medications can damage the gastrointestinal organs. Side effects of NSAIDs are in fact responsible for up to 100,000 hospitalizations and 16,500 deaths. Fortunately, studying NSAIDs at the molecular level led to a solution to this major problem. Scientists discovered that NSAIDs inhibit two enzymes called “cyclooxygenases,” COX-1 and COX-2. Although COX-1 and COX-2 have similar functions, COX-2 is produced in response to injury and infection. It leads to inflammation and an immune response, which is why NSAID blockade of COX-2 relieves the pain and inflammation of arthritis. COX-1, on the other hand, is responsible for producing prostaglandins that play a role in protecting the stomach lining from acid. When an NSAID inhibits COX-1, it may therefore cause a major problem: ulcers. With this knowledge, biochemists were able to create a medication that reduces pain and inflammation by blocking COX-2 while avoiding the side effects caused by blocking COX-1. Today, this drug is called Celebrex, and it is a great example of how advances in biochemistry and drug development technology can open new paths to better treatments for millions of people suffering from illness.

Imprinting

Imprinting is a naturally occurring process in animal cells that is independent of Mendelian inheritance, and it is an example of epigenetics. In most cases, the two copies of a gene work the same way, but for some mammalian genes only the father’s or the mother’s copy is expressed rather than both being turned on. Imprinting does not depend on the sex of the offspring; it occurs because the genes are imprinted (chemically marked) during the creation of eggs and sperm. For instance, the imprinted gene for insulin-like growth factor 2 (Igf2) plays a role in the growth of the mammalian fetus. For Igf2, only the father’s copy is expressed, while the mother’s copy remains silent throughout the life of the offspring. Interestingly, this selective silencing of imprinted genes seems to take place in all mammals except the platypus, echidna, and marsupials.

The question scientists asked was why evolution would tolerate a process that risks an organism’s survival by expressing only one of the two copies of a gene. The proposed answer is that the mother and the father have different interests, and imprinting results from the competition between them. For example, the father’s main interest is for the offspring to become big and fast, because this increases its survivability, which in turn gives a greater chance of passing his genes on to the next generation. The mother, like the father, desires strong offspring, but because her physical resources during pregnancy are limited, it is wiser for her to divide those resources among all of her offspring instead of giving them to just one. Today, more than 200 imprinted genes have been identified in mammals, and some of them regulate embryonic growth and the allocation of resources. Furthermore, mutations in these genes lead to fatal growth disorders. Scientists are now trying to understand how Igf2 and other imprinted genes stay silent throughout the life of a cell, because this mechanism might be manipulated to treat various mutational diseases.

Medications in Nature

Many substances that can be used as medications and drugs already exist in nature. For example, in the 1980s, Michael Zasloff was working with frogs in a lab at the National Institutes of Health in Bethesda. He noticed that something in the skin of the frogs prevented infection, even in surgical wounds. This observation led to the isolation of a peptide called magainin, which the frog produces in response to injury. The peptide from frog skin has microbe-killing properties, and amphibian skin also carries hundreds of other types of molecules called alkaloids. After careful study, scientists found that one such compound, epibatidine, has painkilling ability. Epibatidine is, however, too toxic to humans to be used as a pain-relieving medication. Now that its chemical structure is known, the goal of making a drug with a similar effect may not be far off.

The Sea and Cancer Treatments

The ocean is a vast resource for anticancer treatments, and its promise stems from the diversity it embodies. About 70% of the Earth’s surface is covered by water, and thousands of species live in the ocean, making it a highly diverse ecosystem. Of the 36 known phyla, the ocean contains 34. Many drugs have been developed from natural substances, and the ocean is a large provider of anticancer treatments. Marine microorganisms are extremely important, but they can be difficult to utilize because of where they live: many microbes live in very specific environments, and it can be difficult to find an adequate supply.

One of the marine organisms used in cancer research is the ascidian Diazona, which is a source of the peptide diazonamide A. Diazonamide A is known to inhibit cell growth. Compared with others such as vinblastine and paclitaxel, diazonamide A gave the best result in inhibiting cell growth after a 24-hour exposure of human ovarian carcinoma cells. After testing done as a joint project at the UCSD Medical Center, diazonamide A proved to be a viable anticancer candidate. The peptide is potently cytotoxic in vitro because it inhibits mitotic cell division. According to in vitro data, diazonamide A is a good inhibitor of cell growth because it attacks the cell’s tubulin assembly. Structurally, the peptide is not similar to any known drug candidate, but it is still considered an “active lead compound”. For a long time, however, its lack of availability made it impractical: without a ready source, it could not advance to the preclinical stage and was not a practical option for drug manufacture and distribution. In 2007, a synthesis of the peptide became available, finally allowing it to go further in development. This is an example of how peptide synthesis is an important step in drug development.

Another organism that can be used for anticancer treatment is the soft coral Eleutherobia. Soft corals are similar to hard corals, but they are soft bodied and have no physical defenses against the environment. Its extract, the organic cytotoxin eleutherobin, shows potency in cytotoxicity of 10nl/mL. Like diazonamide A, eleutherobin is a mitotic inhibitor. It stops the HCT116 colon carcinoma cell line by stabilizing the microtubules. When soluble tubulin is treated with eleutherobin, the microtubules are stabilized in a fashion similar to Taxol. In certain circumstances, eleutherobin competes with Taxol for target sites. Eleutherobin therefore acts almost identically to Taxol, and it is also a leading prospect for cancer research. However, like diazonamide A, it is not a practical lead to follow because there is no supply. Syntheses have been attempted, but none could yield practical results at reasonable cost. Because of those difficulties, it has not advanced to the preclinical stage.

Another source is a fungal strain extracted from the surface of the alga Halimeda. Like the two previous examples, this strain yields a potent anticancer compound, halimide. It works against HCT116 human colon carcinoma. Unlike eleutherobin, it is not similar to Taxol, but it is potent against cell lines resistant to Taxol. Halimide, a natural product, can be converted into the synthetic product NPI-2358, which differs from halimide by a t-butyl group. NPI-2358 has shown more progress than either diazonamide A or eleutherobin in its clinical stages. It has shown strong in vivo results and, since 2006, has been in phase 1 clinical trials. In in vivo experiments with breast adenocarcinoma, NPI-2358 produced significant necrosis in the tumor. In the experiment, the breast adenocarcinoma was grafted onto the dorsal skin of a mouse fitted with a viewing window. After 15 days of treatment, the group treated with NPI-2358 showed a reduction in tumor size in comparison to the control group. Upon closer inspection, NPI-2358 seems to target the tumor vasculature.

An organism that has been found through these sampling methods is the bacterium Salinispora. Salinispora are special in that they require salt for growth, so salt needs to be added directly when cultivating them. They have unique coloring and unique 16S rDNA sequences. More than 2500 rDNA strains from Salinispora have been studied. They are distributed along the Earth’s equator and have been discovered in Hawaii, Guam, the Sea of Cortez, the Bahamas, the Virgin Islands, the Red Sea, and Palau. The extract from the bacteria has shown cytotoxicity against HCT-116 colon carcinoma. To prepare it, Salinispora is cultured in a shake flask for 15 days with XAD adsorbent resin, and the resin is then filtered with methanol. The cytotoxic effect of the extract is strong, and it acts on a broad but very selective range of cancer cell lines. Above 2 µM, it is effective against several cancer types. A compound from the bacteria, salinosporamide A, is very effective at inhibiting the proteasome in vivo. It shows an average IC50 of less than ca. 5 nM against the 60-cell-line panel, and it is also active against several other types of cancer. As of 2006, it is in phase 1 human trials and shows great promise as a future drug against some cancers.
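To make the IC50 figure above more concrete, the following minimal sketch shows how an IC50 relates to the fraction of activity inhibited at a given concentration. It assumes a simple Hill-type dose-response curve with a Hill coefficient of 1; that curve shape, the function name `fraction_inhibited`, and the example concentrations are illustrative assumptions and are not taken from the studies described here.

```python
def fraction_inhibited(concentration_nm: float, ic50_nm: float, hill: float = 1.0) -> float:
    """Fraction of activity inhibited (0 to 1) under an assumed Hill-type curve.

    By definition, the result is 0.5 when the concentration equals the IC50.
    """
    if concentration_nm < 0 or ic50_nm <= 0:
        raise ValueError("concentration must be >= 0 and IC50 must be > 0")
    scaled = concentration_nm ** hill
    return scaled / (ic50_nm ** hill + scaled)


if __name__ == "__main__":
    ic50 = 5.0  # nM, the approximate figure quoted in the text
    for conc in (0.5, 5.0, 50.0):  # hypothetical test concentrations in nM
        print(f"{conc:5.1f} nM -> {fraction_inhibited(conc, ic50):.2f} inhibited")
```

Under this assumed model, a lower IC50 means that less compound is needed to reach half-maximal inhibition, which is why a nanomolar IC50 is read as high potency.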

Like Salinispora, another marine strain, Marinospora, also requires salt. These bacteria were discovered in the Sea of Cortez by deep ocean sampling. Like Salinispora, they have unique 16S rDNA sequences. They are also morphologically identical to Streptomyces. Their extracts show good potency against drug-resistant pathogenic bacteria and cytotoxicity against certain cancer cell lines. Fractionation of the strain’s extract also led to a new macrolide class. Marinomycin A from the strain has been shown to be very potent against melanoma, and it has been advanced to the hollow fiber assay.

The ocean is a diverse place, and the many organisms that it houses have great medicinal potential. Many of these populations were previously unknown, but with deep sea sampling strategies, 15 new genera of bacteria from 6 families were cultured in two years. More strikingly, of the 15 genera cultured, 11 have been shown to be good inhibitors of cell growth and are possible sources of anticancer compounds. This shows the wealth that the ocean has to offer in the battle against dangerous illnesses.

Stem cell

Some cells in the human body are completely generic and have the ability to express an extremely broad array of genes. Simply put, stem cells are able to become all kinds of cells in the body, with seemingly unlimited potential. The cells with the broadest potential exist for a few days after conception and are called embryonic stem cells. After these embryonic stem cells differentiate, cells called adult stem cells remain, and they have a similar ability. These cells are located throughout the body, mostly in bone marrow, brain, muscle, skin, and liver. When tissues are damaged by injury, disease, or age, they can be replaced by these stem cells. Adult stem cells, however, are dormant and remain undifferentiated until the body signals that they are needed. Adult stem cells have the capacity for self-renewal, but they differ from embryonic stem cells in that they exist in small numbers and are not as flexible in differentiating. Adult stem cells play a role in therapies that treat lymphoma and leukemia. Scientists are able to isolate an individual’s stem cells from blood and grow them in the laboratory. After high-dose chemotherapy, the harvested stem cells can be transplanted back into the patient to replace the cells destroyed by the chemotherapy. James A. Thomson of the University of Wisconsin was the first to isolate stem cells from embryos and grow them in the lab. Stem cell research opens possibilities for treating Parkinson’s disease, heart disease, and other diseases that involve otherwise irreplaceable cells.

AIDS Treatment

To develop strategies to combat HIV-1 infection, its biology must first be understood. HIV-1 interacts with host cells by means of its envelope glycoproteins, gp120 and gp41. The host receptors CD4, CCR5, and CXCR4 recognize these envelope proteins, and together they lead to the fusion of the virus and the host cell. Early HIV-1 treatments worked by preventing viral proteins from maturing and by stopping the viral RNA from being copied into DNA. However, since both of these steps happen after the host cell has already been infected, a much more attractive strategy is to stop the virus from fusing with the cell. This line of research led to the discovery of many HIV entry inhibitors.

Since the glycoproteins gp120 and gp41 play an important role in viral infection, their structures were studied. gp120 interacts with CD4 by undergoing a conformational change. This change exposes gp120 to the receptor protein CCR5 or CXCR4. gp41 also undergoes a major conformational change, from a prefusion complex with gp120 into a structure that is able to place the viral and host membranes side by side. Entry inhibitors that prevent this step can prevent the cell from being infected. gp41 has a six-helix bundle (6-HB) made from N-HR and C-HR regions. The N-HR forms a core, and the C-HR packs tightly against it. The formation of stable crystals from these peptides aids the search for a peptide inhibitor of the six-helix bundle. One HIV-1 entry inhibitor is T-20, which is a homolog of the helical C-HR region of gp41.

Experiments done in the absence of a high-resolution structure of gp41 showed that T-20 interacts with the N-HR helical region and acts as an entry inhibitor. When high-resolution gp41 structures became available, T-20 was shown to form a heterocomplex that inhibits formation of the 6-HB hairpin that is required for fusion of the virus and the host cell. This result shows that either the C-HR or the N-HR could act as an entry inhibitor. The N-HR peptides are trihelical and have deep hydrophobic pockets; the complementary pocket-binding domain (PBD) is present on the C-HR peptides. This stable helical structure suggests that one reason for the success of T-20 is its ability to adopt a helical structure as well. In general, to improve inhibition, we can increase the helicity of the C-peptides, increase the interactions of T-20 with the N-peptide trihelices, or make N-peptides that form a soluble, stable triple-helix core. For many entry inhibitors, a good correlation has been seen between the tendency to form a stable helix and inhibitory activity.

Unlike T-20, C-34 has a PBD sequence that can interact with the hydrophobic pockets of the N-HR core. C-34 is therefore effective against strains of HIV that are resistant to T-20. C-34 works by preventing the formation of the 6-HB. T-20, on the other hand, works by interacting with lipids in a way that prevents the membrane fusion pore from forming. If the characteristics of both C-34 and T-20 were incorporated into a single inhibitor, the result would be very potent.

Recent studies have shown that fatty acids and cholesterol may be used to act as peptide fusion inhibitors for gp41. When T-20 binds to liposomes, its LBD plays a role in the fusion process. Fatty acids were shown to have similar binding characteristics, which makes them possible candidates to act as fusion inhibitors along the binding locus. Researchers speculate that this is because (C-16)-DP combats HIV-1 by increasing inhibition around the viral membrane. Similarly, cholesterol helps by targeting C34 to lipid rafts, which also increases inhibitory activity around the membrane. These kinds of applications are extremely useful when combined with drug treatments that have limited local concentration.

N-peptides are another viable route to inhibition because they show properties similar to those of the N-HR of gp41. They include the 5-helix design, which inhibits HIV-1 fusion at nM concentrations. Studies show that the stability of the triple-helical core may be correlated with the effectiveness of HIV treatment. Disulfide bonds have been shown to stabilize the trimeric coiled-coil core, which increases inhibitory activity. When N-HR and C-HR inhibitors are combined, the virus has an increasingly difficult time surviving without mutating further. The downside of N-peptide treatment is the higher molecular weight, which may lead to immunogenicity if not used through injections.

A final possible class of fusion inhibitor was found using D-amino acids. Peptides made of D-amino acids mimic binding to the trimeric core. Additionally, they are highly resistant to protease degradation, which makes them more effective than T-20, which cannot be absorbed through paracellular passages in the intestine. This leads to the possibility of using D-amino acids in topical treatments, which can be applied easily and at lower cost.

Protein and Drugs

Each individual has variations in genetic makeup that lead to differences in general health. Environmental and lifestyle factors are involved as well, but how an individual responds to medications depends largely on variation in the genes that encode the cytochrome P450 proteins. These proteins are in charge of processing many of the drugs the body takes in. Because each individual’s genes are unique, the genes encoding cytochrome P450 vary widely from person to person. This was discovered in the 1950s, when certain patients had unusual side effects from an anesthetic drug, some of which were fatal. Through experiments, scientists realized that genetic variation can cause dangerous side effects when a cytochrome P450 protein is unable to break down the medication in the normal way. Even medicines like Tylenol can sometimes give no relief because of genetic variation. Fortunately, with greater knowledge of this variation, pharmacogenetic scientists can now work toward drugs that are customized to an individual’s genes.

Obstacles and Potential Solutions

Mediating between laboratory science and clinical science is not an easy task. It requires a vast amount of complex and specialized knowledge of many different types, and this creates many problems and obstacles. One research team is not nearly enough to bridge basic and clinical science. One proposal is to create research teams that each specialize in a different step of connecting basic science to the clinical environment. It also seems very practical to train individuals who can mediate between these steps, so that if a research team does not exist at one step, these mediators or translators can find assistance from teams at adjacent steps of the process. These proposed solutions for implementing translational science depend on the cooperation of various types of scientists. The idea is to give translational scientists easy access to the wide array of intricate and specialized knowledge needed to bridge the gap between scientific research and clinical and medical advancements.

Over the years, an increasing emphasis has been placed on translational science. National funding and policies have greatly facilitated the growth of the field, creating opportunities for the advancement of applied clinical research informatics (CRI) and translational bioinformatics (TBI). Examples include the National Cancer Institute’s caBIG program, which engineered a variety of service-oriented data-sharing, data-management, and knowledge-management systems, and the CTSA, which focuses on informatics training, database design and hosting, and execution of complex data analysis. The issue, however, is that such programs, and the funding that accompanies them, are geared toward solving immediate problems while neglecting the foundational CRI and TBI research that is crucial to the growth of the biomedical informatics subdisciplines and that ensures future innovation. Furthermore, the funds are usually allocated to service-oriented research that resolves immediate problems, while the policies restrict the resources of data networks to specific universities or centers. Several resolutions have been proposed in response:

- Rigorous advocacy to ensure that foundational CRI and TBI knowledge and practice are both recognized and supported as a core objective of translational change, which will engage informaticians as equal partners in planning and execution as opposed to mere service providers.

- A community effort to refine and promote a national-scale agenda that focuses on the challenges and opportunities facing CRI and TBI, allowing them to do more than just react to new funding and policies.

- Creation of a forum to ensure that the establishment of policies and funding affecting CRI and TBI is open to researchers in the field and not limited to a few institutions, investigators, or lenders.

National Institutes of Health (NIH) and Clinical and Translational Science Awards (CTSA)

The National Center for Research Resources (NCRR) of the National Institutes of Health (NIH) created the CTSA to encourage translational science and research. The NCRR has used the CTSA to fund infrastructure for translational science in areas such as “biostatistics, translational technologies, study design, community engagement, biomedical informatics, education, ethics and regulatory knowledge, and clinical research units”. The CTSA has six major goals with regard to translational science. The first goal is to train individuals across the entire translational spectrum. This involves training MDs to act as clinical investigators, but it also involves teaching PhDs the fundamentals of the medical world. The expected result is that PhDs would recognize when they have come across something of medical importance and that MDs could understand what the PhDs are trying to say to them. The second goal is to simplify the translational process. This means speeding it up as much as possible without losing regard for safety, and it includes making expertise available to clinical researchers on institutional review boards, FDA regulations, and applications for investigating new drugs. The third goal is to take advantage of advances in informatics, imaging, and data analysis by applying them directly to the research that clinical investigators are doing. By taking advantage of these resources, investigators are more likely to come up with a meaningful study. The fourth goal is to find a way to encourage and protect the careers of translational researchers. One path that promotes this career is the MD/PhD program. These programs could bridge the so-called translational divide by educating people in both the medical and the scientific fields. Their success depends largely on the tuition assistance provided, so that graduates are not burdened with large loans to pay off. The fifth goal is to provide team mentoring, as well as support for junior clinical scientists. This goal can be achieved in part through programs like the K12 awards. The final goal of the CTSA is to catalogue all research resources in order to make them available to everyone who could need them.

References

- Payne, Phillip, Embi, Peter, Niland, Joyce. "Foundational biomedical informatics research in the clinical and translational science era: a call to action." JAMIA, August 2010 vol. 17 no. 6 615-616. Web. <http://jamia.bmj.com/content/17/6/615.full.html#ref-list-1>.

- McClain, Donald. "Bridging the gap between basic and clinical investigation." Trends Biochem Sci. 2010 Apr;35(4):187-8. Epub 2010 Feb 19.

- "The Structures of Life." National Institute of General Medical Sciences. U.S. Department of Health and Human Services, July 2007. Web. <http://www.nigms.nih.gov>.

- Z. Cruz-Monserrate, H. Vervoort, R. Bai, D. Newman, S. Howell, G. Los, J. Mullaney, M. Williams, G. Pettit, W. Fenical and E. Hamel. "Diazonamide A and a Synthetic Structural Analog: Disruptive Effects on Mitosis and Cellular Microtubules and Analysis of Their Interactions with Tubulin." Molecular Pharmacology, June 2003 vol. 63 no. 6 1273-1280. Web. <http://molpharm.aspetjournals.org/content/63/6/1273.full#cited-by>.

- B. Long, J. Carboni, A. Wasserman, L. Cornell, A. Casazza, P. Jensen, T. Lindel, W. Fenical, C. Fairchild. "Eleutherobin, a Novel Cytotoxic Agent That Induces Tubulin Polymerization, Is Similar to Paclitaxel (Taxol)." Cancer Research, March 15, 1998. Web. <http://cancerres.aacrjournals.org/content/58/6/1111.full.pdf>.

- W. Fenical. "The Growing Role of the Ocean in the Treatment of Cancer." Lecture. April 2010.

- "The New Genetics." National Institute of General Medical Sciences. U.S. Department of Health and Human Services, October 2006. Web. <http://www.nigms.nih.gov>.

- "The Chemistry of Health." National Institute of General Medical Sciences. U.S. Department of Health and Human Services, August 2006. Web. <http://www.nigms.nih.gov>.

- F. Naider, J. Anglister. "Peptides in the Treatment of AIDS." Curr Opin Struct Biol. Web. <http://www.ncbi.nlm.nih.gov/pmc/articles/PMC2763535/>.

- "Inside the Cell." National Institute of General Medical Sciences. U.S. Department of Health and Human Services, July 2007. Web. <http://www.nigms.nih.gov>.

INTRODUCTION

Diabetes mellitus is a group of chronic (lifelong) diseases in which high levels of sugar (glucose) exist in the blood. It is the 9th leading cause of death in the world, killing more than 1.2 million people each year. Diabetes is divided into several types: type 1, type 2, gestational, prediabetes, and a few more. The two main types are type 1 and type 2, with type 2 being more common. Some symptoms are specific to one type of diabetes, but in general the types share symptoms such as the following:

Type 1 Symptoms include:

- Frequent urination

- Unusual thirst

- Extreme hunger

- Unusual weight loss

- Extreme fatigue and irritability

Type 2 Symptoms include:

- Any of the type 1 symptoms

- Frequent infections

- Blurred vision

- Cuts/bruises that are slow to heal

- Tingling/numbness in the hands/feet

- Recurring skin, gum, or bladder infections

Diabetes causes many complications. People may experience vision problems that can lead to blindness, numbness in the hands and feet and especially in the legs, hypertension (high blood pressure), mental health problems, hearing loss, and other complications that are gender related. Although there is no cure for type 1 diabetes, maintaining an ideal body weight through an active lifestyle and a healthy diet can help prevent and manage type 2 diabetes.

TYPE 1 DIABETES

In type 1 diabetes, the pancreas produces little or no insulin. Insulin is a hormone produced by beta cells located in the pancreas. It enables sugar (glucose) to move from the blood into cells throughout the body. Glucose travels through the bloodstream and is either stored or used for energy by the body’s organs. When the pancreas fails to produce insulin, the body lacks the amount it needs to function normally. Without enough insulin, sugar accumulates in the bloodstream, raising the blood sugar level; this condition is called hyperglycemia.

There is no cure for type 1 diabetes, and its exact cause is still unknown. Researchers believe it is an autoimmune disorder, a condition in which the immune system mistakenly damages healthy tissue. In this case, the pancreas is attacked, which prevents it from producing insulin. Type 1 diabetes shows a hereditary correlation, meaning the disease can be passed down through families. Adolescents are the group most often diagnosed.

TYPE 2 DIABETES

In type 2 diabetes, fat, liver, and muscle cells become insulin resistant. These cells do not respond correctly to insulin and fail to take up the sugar (glucose) that is being transported in the blood. Because glucose is crucial for the cells’ functions, the pancreas tries to maintain equilibrium: if the cells do not take in glucose, the pancreas produces more insulin to make sure the cells have enough. The sugar that remains in the bloodstream accumulates, resulting in hyperglycemia.

Maintaining a healthy diet is essential in preventing type 2 diabetes. Low activity level, poor diet, and excess body weight increase the risk of type 2 diabetes because increased fat levels reduce the body's ability to use insulin properly.

Treatment

Although there is no known cure for diabetes, it can be managed with certain precautions.

Type 1 & 2 Diabetes Management

People with type 1 or type 2 diabetes who want to maintain a healthy lifestyle should exercise regularly, maintain a healthy weight, eat healthy foods, and, most importantly, monitor their blood sugar levels.

Diabetics should try to keep their blood sugar levels within these ranges:

- Daytime glucose levels: between 80 and 120 mg/dL (4.4 to 6.7 mmol/L)

- Nighttime glucose levels: between 100 and 140 mg/dL (5.6 to 7.8 mmol/L)
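The two units in the ranges above are related through the molar mass of glucose (about 180.16 g/mol), so 1 mmol/L corresponds to roughly 18 mg/dL. The following is a minimal Python sketch of that conversion; the function names are illustrative and do not come from any cited source.

```python
# Molar mass of glucose (C6H12O6) in g/mol.
GLUCOSE_MOLAR_MASS = 180.16


def mgdl_to_mmoll(mg_per_dl: float) -> float:
    """Convert a glucose concentration from mg/dL to mmol/L."""
    # mg/dL -> mg/L (x10), then mg -> mmol (divide by g/mol).
    return mg_per_dl * 10.0 / GLUCOSE_MOLAR_MASS


def mmoll_to_mgdl(mmol_per_l: float) -> float:
    """Convert a glucose concentration from mmol/L to mg/dL."""
    return mmol_per_l * GLUCOSE_MOLAR_MASS / 10.0


# Reproduce the paired values quoted for the daytime and nighttime targets.
for mgdl in (80, 120, 100, 140):
    print(f"{mgdl} mg/dL = {mgdl_to_mmoll(mgdl):.1f} mmol/L")
```

Rounded to one decimal place, the four converted values agree with the mmol/L figures given in parentheses.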

People with type 1 diabetes need insulin to survive; they typically inject themselves with insulin using a fine needle and syringe, an insulin pen, or an insulin pump.

TESTING

Diabetes is diagnosed with one or more of the following tests.

- Fasting Blood Glucose (Type 1, 2) - Diabetes is diagnosed if the result is higher than 126 mg/dL on two occasions

- Random (nonfasting) Blood Glucose (Type 1) - Diabetes is suspected if the result is higher than 200 mg/dL - Must be confirmed with a fasting test

- Oral Glucose Tolerance Test (Type 1, 2) - Diabetes is diagnosed if the blood glucose level is higher than 200 mg/dL after 2 hours

- Hemoglobin A1c (Type 1, 2) - Normal: <5.7% - Pre-diabetes: 5.7%-6.4% - Diabetes: 6.5% or higher

- Ketone (Type 1) - Done with a urine or blood sample when the sugar level is > 240 mg/dL, during illness (e.g., pneumonia, stroke), with nausea or vomiting, or during pregnancy
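The thresholds in the list above can be expressed as simple decision rules. The Python sketch below is illustrative only and is not a diagnostic tool: the function names are hypothetical, and treating an A1c of exactly 6.5% as diabetes is an assumption made here to close the gap between the listed ranges.

```python
def classify_a1c(a1c_percent: float) -> str:
    """Classify a hemoglobin A1c result using the cut-offs listed above."""
    if a1c_percent < 5.7:
        return "normal"
    if a1c_percent < 6.5:
        return "pre-diabetes"
    # Assumption: the boundary value 6.5% is counted as diabetes.
    return "diabetes"


def fasting_test_positive(readings_mg_dl: list[float]) -> bool:
    """Return True if at least two fasting readings exceed 126 mg/dL."""
    high = [r for r in readings_mg_dl if r > 126]
    return len(high) >= 2


print(classify_a1c(5.2))
print(classify_a1c(6.0))
print(classify_a1c(7.1))
print(fasting_test_positive([131.0, 128.0]))
```

Keeping each rule in its own function mirrors the way the tests are listed separately; a real diagnosis combines several tests and clinical judgment.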

STATISTICS

In the United States, 25.8 million children and adults, 8.3% of the population, have diabetes. It is the leading cause of nontraumatic lower-limb amputations, kidney failure, and blindness in the United States and contributes greatly to heart disease and stroke.

CLINICAL RESEARCH

Currently, thousands of laboratories study diabetes: type 1, type 2, and its relationship with heart disease, kidney disease, obesity, and many other conditions.

One such study was carried out by Karolina I. Woroniecka and colleagues at the Albert Einstein College of Medicine of Yeshiva University. It focuses on the relationship between diabetes and kidney failure, known as diabetic kidney disease (DKD), which is the leading cause of kidney failure in the United States. The paper, “Transcriptome Analysis of Human Diabetic Kidney,” was published in September 2011. Its objective was to provide a catalog of gene-expression changes in human diabetic kidney biopsy samples. Gene expression is the process by which information from a gene is transcribed into messenger RNA and then translated into protein. Transcriptome analysis is often used to gain insight into disease pathogenesis, for molecular classification, and to identify biomarkers (measurable indicators of a biological state) that can be used in future studies and treatments. This study was able to catalog gene-expression regulation and to identify genes and pathways that may either play a role in DKD or serve as biomarkers.

The experiment used 44 microdissected human kidney samples, characterized by the donors' race and glomerular filtration rate (25-35 mL/min). A glomerulus is a cluster of capillaries around the end of a kidney tubule. The method used a series of statistical tests to identify transcripts that were differentially expressed between the control and the diseased samples, and algorithms were used to define the regulated pathways.

The human kidneys were obtained from donors and from leftover portions of kidney biopsies. The samples were manually microdissected, and only samples without any RNA degradation were carried forward to RNA amplification. Before further analysis, the raw data were normalized using the RMA16 algorithm, whose purpose is to produce a stabilized data set and reduce inconsistencies between samples. Benjamini-Hochberg multiple-testing correction was then applied at a p value < 0.05. Next, the oPOSSUM software determined the overrepresented transcription factor binding sites (TFBSs) within a catalog of coexpressed genes and compared them with a control set. The differentially expressed transcripts that met the statistical conditions underwent an analysis that uses a ratio to determine the top canonical pathways; the Fisher exact test was used at a p value < 0.05. Immunostaining, the final step of the procedure, is a major component of the visualization. It requires a specific antibody to detect a specific protein in a sample. Primary antibodies against the following proteins were used: C3, CLIC5, and podocin. The Vectastain ABC Elite kit was used for the secondary antibodies, and 3,3'-diaminobenzidine was then applied for visualization. Immunostaining is typically scored on a scale of 0-4, reflecting the amount of staining for that specific protein.
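To illustrate what a Benjamini-Hochberg correction does, the following Python sketch implements the generic step-up procedure for controlling the false discovery rate. It is not the software pipeline used in the study, and the p-values are made-up illustration data.

```python
def benjamini_hochberg(p_values, alpha=0.05):
    """Return a list of booleans marking which hypotheses are rejected.

    Generic Benjamini-Hochberg step-up procedure: sort the p-values,
    find the largest rank k with p_(k) <= (k / m) * alpha, and reject
    every hypothesis whose rank is <= k.
    """
    m = len(p_values)
    # Indices of the p-values in ascending order.
    order = sorted(range(m), key=lambda i: p_values[i])

    # Largest rank that satisfies the BH condition (0 if none does).
    max_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * alpha:
            max_rank = rank

    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= max_rank:
            rejected[idx] = True
    return rejected


# Hypothetical p-values, one per transcript (illustration only).
example_p = [0.001, 0.008, 0.039, 0.041, 0.042, 0.06, 0.074, 0.205]
print(benjamini_hochberg(example_p, alpha=0.05))
```

In this made-up example only the two smallest p-values pass, even though five of the eight are below 0.05 on their own, which is the point of correcting for multiple testing when thousands of probesets are compared.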

The experiment identified 1,700 differentially expressed probesets in DKD glomeruli and 1,831 probesets in diabetic tubuli (kidney tubules); a probeset is a collection of more than two probes designed to measure a single molecular species. There were 330 probesets that were differentially expressed in both compartments. Pathway analysis highlighted the regulation of many genes involved in signaling in DKD glomeruli, including Cdc42, integrin, integrin-linked kinase, and others. The tubulointerstitial compartment showed strong enrichment for inflammation-related pathways. Lastly, the canonical complement signaling pathway was regulated in both the DKD glomeruli and tubuli and was associated with increased glomerulosclerosis.

As research on diabetes-linked diseases continues, the results obtained contribute to the overall understanding of the underlying biochemical processes. Dr. Karolina I. Woroniecka and colleagues are one of the many research teams throughout the world dedicated to saving lives or improving people’s well-being. Their study is one of many that contribute to the understanding of diabetes-related kidney disease. However, many other studies examine conditions related to diabetes, such as obesity and heart attack or heart failure.

Hiroaki Masuzaki and colleagues conducted a project relating obesity and diabetes, “Glucose Metabolism and Insulin Sensitivity in Transgenic Mice Overexpressing Leptin with Lethal Yellow Agouti Mutation.” The article was published in August 1999 from the Department of Medicine and Clinical Sciences, Kyoto University Graduate School of Medicine, Kyoto, Japan. The objective of the project was to determine the usefulness of leptin for the treatment of obesity-related diabetes. Leptin is an adipocyte-derived, blood-borne satiety factor that increases glucose metabolism by decreasing food intake and increasing energy expenditure. Two types of mice were crossed, and the offspring were examined at weeks 6 and 12. The first type was a transgenic "skinny" mouse overexpressing leptin, with allele Tg/+; the second was the lethal yellow KKAy mouse, commonly used as a model for obesity-diabetes syndrome, with allele Ay/+. The metabolic phenotypes of the F1 animals were examined, from body weight to insulin sensitivity and leptin concentrations. The study demonstrated the potential usefulness of leptin, combined with long-term caloric restriction, for the treatment of obesity-related diabetes. It showed that hyperleptinemia, defined as an increased serum leptin level, can delay the onset of impaired glucose metabolism and hasten the recovery from diabetes during caloric restriction in the crossed F1 mice carrying both Tg/+ and Ay/+.

Although leptin was found to be potentially useful in treating diabetes, caloric restriction was also required in this model. Other studies suggest that leptin can stimulate glucose metabolism independently of body weight: leptin stimulates glucose metabolism in normal-weight nondiabetic mice and also improves impaired glucose metabolism in overweight diabetic mice with leptin deficiency. Masuzaki and colleagues had created transgenic mouse models overexpressing leptin (allele Tg/+) that exhibit increased insulin sensitivity and glucose tolerance. A liver-specific promoter controls the overexpression of leptin, and the increased insulin sensitivity is accompanied by activation of signaling in skeletal muscle and liver. In this study, Masuzaki and colleagues genetically crossed the transgenic mice with lethal yellow obese mice. The resulting 4 genotypes are Tg/+:Ay/+, Tg/+, Ay/+, and wild-type +/+. At week 6, all the mice were at normal body weight; by week 12, the mice with the Ay/+ allele had clearly developed obesity. At 9 weeks, +/+, Ay/+, and Tg/+:Ay/+ mice were placed on a 3-week food restriction diet and analyzed at week 12.

The research design and methods included measurements of body weight and cumulative food intake; plasma leptin, glucose, and insulin concentrations; glucose and insulin tolerance tests; and caloric restriction experiments, followed by statistical analysis. Body weights were measured daily from 4 weeks of age, and food intake was measured daily over a 2-week period. Blood was sampled from the retro-orbital sinus of the mice at 9:00 AM. Plasma leptin concentrations were determined using a radioimmunoassay (RIA) for mouse leptin. Plasma glucose concentrations were determined by the glucose oxidase method with a reflectance glucometer, and plasma insulin concentrations were also measured. The glucose tolerance tests (GTT) were done after an 8-hour fast and an injection of 1.0 mg/g glucose. The insulin tolerance tests (ITT) were done after a 2-hour fast and an injection of 0.5 mU/g insulin. Blood was drawn from the tail vein before the injection, to provide a baseline, and at 15, 30, 60, and 90 minutes after the injection. The food restriction experiment was based on the cumulative food intake at week 12: the mice were provided with 60% of the amount of food they had consumed. The same measurements were then repeated: plasma leptin, glucose, and insulin concentrations were determined, and GTT and ITT were performed. Finally, all the data were analyzed and expressed as mean ± SE.
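The arithmetic behind these methods is simple: injection doses scale with body weight, the restricted ration is 60% of measured intake, and results are summarized as mean ± SE. The Python sketch below illustrates these calculations under stated assumptions; all numerical inputs are hypothetical and are not data from the study.

```python
import math
import statistics


def mean_and_se(values):
    """Return (mean, standard error of the mean) for a sample."""
    mean = statistics.mean(values)
    # SE = sample standard deviation / sqrt(n).
    se = statistics.stdev(values) / math.sqrt(len(values))
    return mean, se


def injection_dose(body_weight_g, dose_per_g):
    """Dose for a weight-based injection (e.g. 1.0 mg/g or 0.5 mU/g)."""
    return body_weight_g * dose_per_g


def restricted_ration(food_intake_g, fraction=0.6):
    """Amount of food offered under restriction (60% of intake by default)."""
    return food_intake_g * fraction


# Hypothetical plasma glucose readings (mg/dL) for one genotype.
glucose = [142.0, 150.0, 138.0, 155.0, 145.0]
mean, se = mean_and_se(glucose)
print(f"plasma glucose: {mean:.1f} ± {se:.1f} mg/dL")

# Hypothetical 25 g mouse.
print("GTT glucose dose (mg):", injection_dose(25.0, 1.0))
print("ITT insulin dose (mU):", injection_dose(25.0, 0.5))
print("restricted ration (g):", restricted_ration(4.0))
```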

The results showed large differences in body weight among the four genotypes. At week 4, the mice showed no significant difference in body weight. At 6 weeks of age, Tg/+ mice had gained approximately 20-30% less weight than the control +/+ mice and differed in adiposity from the +/+, Tg/+:Ay/+, and Ay/+ mice. At this time, the control, Tg/+:Ay/+, and Ay/+ mice showed no marked difference in body weight. By 12 weeks of age, however, the mice carrying the Ay/+ allele had developed obesity, and the body weight of Tg/+ mice was about 23% less than that of the controls.

Plasma leptin concentrations in 6-week-old Tg/+ mice were approximately 12 times those of the control +/+ mice; at week 12, they were 9 times higher. The concentrations in Ay/+ and +/+ mice were roughly equivalent. The concentrations in Tg/+:Ay/+ mice were 8 times higher than those in +/+ mice, and at week 12 they were higher than those in Ay/+ mice.

Food intake in Tg/+ mice was significantly lower than in control littermates at 6 and 12 weeks of age. Food intake in Ay/+ mice was 50% higher than in control littermates. Food intake in Tg/+:Ay/+ mice was roughly the same as in +/+ mice, and food intake in Ay/+ and Tg/+:Ay/+ mice was approximately the same.

At week 6, plasma glucose concentrations were approximately the same in all 4 genotypes. At week 12, the glucose levels of Tg/+ and +/+ mice were the same, whereas the glucose levels of Ay/+ and Tg/+:Ay/+ mice were significantly elevated compared with the control but did not differ from each other. Plasma insulin concentrations in Tg/+ mice were much lower than in the control at week 6, while those in Tg/+:Ay/+ mice were higher than in the control. At this point, the Ay/+ mice showed marked hyperinsulinemia compared with the other genotypes. In the GTTs and ITTs, the plasma glucose elevation in Tg/+ mice was significant compared with the control. Thirty minutes after the injection, glucose concentrations had increased greatly in Ay/+ mice compared with the control.

Glucose metabolism in each genotype was examined after food restriction. The mice were given 60% of their total food intake; after 2 weeks, the body weights of Tg/+ mice were 17% lower and those of +/+ mice 12% lower than before, and the body weights of Ay/+ and Tg/+:Ay/+ mice had also decreased. Plasma leptin concentrations were higher in Tg/+ mice than in +/+ mice, and higher in Tg/+:Ay/+ mice than in Ay/+ mice. Leptin concentrations were approximately the same in Tg/+ and Tg/+:Ay/+ mice, and likewise in +/+ and Ay/+ mice. After 3 weeks of food restriction, plasma glucose concentrations in +/+, Ay/+, and Tg/+:Ay/+ mice were similar. Plasma insulin concentrations, however, were higher in Ay/+ mice than in +/+ and Tg/+:Ay/+ mice.

The results indicated that glucose tolerance and insulin sensitivity are increased in Tg/+:Ay/+ mice and that plasma leptin concentrations are higher in Tg/+:Ay/+ mice than in Ay/+ mice. This indicates that overproduction of leptin can delay the onset of impaired glucose metabolism in Tg/+:Ay/+ mice, whereas endogenous leptin cannot do so in Ay/+ mice. Leptin can exert its anti-diabetic effect in normal-weight animals at week 6. By week 12, Tg/+:Ay/+ mice had developed resistance to the anti-diabetic action of leptin. In this study, glucose metabolism improved somewhat in Ay/+ mice after the body weight reduction produced by the 3-week food restriction, and it improved more in Tg/+:Ay/+ mice than in Ay/+ and control mice, which suggests that hyperleptinemia enhances glucose metabolism under food restriction. Persistent hyperleptinemia delays the onset of impaired glucose metabolism and, in combination with food restriction, hastens the recovery from diabetes in Ay/+ mice.

Emilie Vander Haar and her team at the University of Minnesota, Minneapolis, published “Insulin signaling to mTOR mediated by the Akt/PKB substrate PRAS40.” In this study, they identified PRAS40 as a crucial regulator of the insulin sensitivity of the Akt-mTOR metabolic pathway, which may help in targeting treatments for cancers, insulin resistance, and hamartoma syndromes. Insulin activates the protein kinases Akt, also known as PKB, and mammalian target of rapamycin (mTOR), which stimulate protein synthesis and cell growth. The study identified PRAS40 as a unique mTOR binding partner whose binding is induced under conditions that inhibit mTOR signaling. Akt phosphorylates PRAS40, which is crucial for insulin to stimulate mTOR. These findings contribute to clinical studies of insulin-related pathways in type 2 diabetes.

mTOR is a kinase-related protein that is a key mediator of insulin signaling. Inhibition of mTOR in mammals has been shown to reduce insulin resistance and extend lifespan. mTORC1 is a nutrient- and insulin-regulated complex that forms when mTOR interacts with raptor and GβL (G protein β-subunit-like protein). mTOR also forms a second complex, mTORC2, which is involved in cytoskeleton regulation and Akt phosphorylation; however, the interactions and associated proteins that respond to insulin had not been identified. To identify them, Vander Haar and her team used mass spectrometry. mTOR immunoprecipitates were prepared from T-cells with an mTOR antibody, and the proteins bound to mTOR were eluted from the precipitates. The protein mixtures were digested with trypsin, and mass spectra were obtained. The high P scores of the derived peptides indicated that the approach had isolated mTOR-binding proteins. Three sequences were obtained that contributed to the finding that Sin1 is crucial in the formation of the mTOR interaction. The PRAS40 peptide sequence was also identified. To confirm the hypothesis that mTOR binds PRAS40, precipitates from T-cells, which carry PRAS40, were analyzed by western blotting. Compared with the control, PRAS40 was found to bind only to mTOR. PRAS40 was shown to bind specifically to the carboxy-terminal kinase domain of mTOR. Conditions that inhibit mTOR signaling increase the affinity (binding strength) of the PRAS40-mTOR interaction. These conditions include depriving the medium of leucine or glucose and treatment with a glycolytic inhibitor or with mitochondrial metabolic inhibitors. The increase in affinity leads to disruption of the raptor-mTOR interaction, which in turn destabilizes the PRAS40-mTOR interaction. The tighter binding between the proteins under nutrient deprivation suggests the hypothesis that PRAS40 has a negative role in regulating mTOR.

To further understand the role of PRAS40 in mTOR signaling, PRAS40 was downregulated in 3T3-L1 and HepG2 cells, and the phosphorylation of Akt at Ser 473 and of S6K1 (an mTOR substrate) at Thr 389 was studied. PRAS40 silencing led to a significant decrease in Akt phosphorylation in both cell lines, with negative effects on the Akt components. PRAS40 silencing also led to increased levels of S6K1 phosphorylation, which suggests that the mTOR complex in PRAS40-depleted cells is still active in S6K1 phosphorylation. The results indicated that the activated state of mTOR in PRAS40-silenced cells may contribute to Akt inactivation through feedback inhibition. That was the first part of the PRAS40 analysis, PRAS40 silencing. In the second part, PRAS40 was overexpressed. Increasing the level of PRAS40 in cells decreased S6K1 phosphorylation. These results suggest that inhibition of mTOR by PRAS40 is likely to require binding to mTOR and raptor.

After determining the inhibitory function of PRAS40 in mTOR signaling, Vander Haar and her team studied the role PRAS40 plays in the regulation of mTOR by insulin. PRAS40 knockdown in mouse and human cells weakened the ability of insulin to stimulate phosphorylation, and PRAS40 silencing reduced the levels of phosphorylation in both cell types. To further study the response of mTOR to insulin, sample cells were treated with insulin. The data suggest that PRAS40 silencing uncouples mTOR from Akt signals, so PRAS40 plays a crucial role in relaying Akt signaling to mTOR. They also show that Akt phosphorylation of PRAS40 is crucial for mTOR activation by insulin.

Vander Haar and her team next examined whether nutrient starvation has a dominant effect on the PRAS40-mTOR interaction. PRAS40 was hardly released from mTOR under leucine-deprived conditions. In addition, the amount of 14-3-3, a protein whose binding is induced by PRAS40 phosphorylation, bound to mTOR and PRAS40 was significantly reduced under leucine-deprived conditions; in other words, the 14-3-3 interaction was prevented when nutrients were absent. Taken together, these results indicate that PRAS40 is a key mediator of Akt signals to mTOR and a negative effector of mTOR signaling. PRAS40 is a crucial regulator of the insulin sensitivity of mTOR signaling and therefore plays an important role in insulin resistance.

The methods of this study included the use of antibodies in western blotting, plasmid construction and mutagenesis, identification of mTOR-interacting proteins, cell culture and transfection, coimmunoprecipitation, chemical crosslinking, lentiviral preparation, viral infection, and stable cell line generation. Human PRAS40 cDNAs were provided, and mouse PRAS40 cDNA was amplified by PCR and then subcloned into a mammalian expression vector. All cloned samples were confirmed by sequencing. PRAS40 Thr 246 was replaced with alanine, glutamate, or aspartate using a site-directed mutagenesis kit. mTOR immunoprecipitates were prepared with an mTOR antibody from cells cultured in 10% fetal bovine serum. The cells were lysed in a buffer and then incubated with 20 µL of protein G resin and 4 µg of mTOR antibody. The mTOR precipitates were washed with lysis buffer, and the binding proteins were eluted by incubation. The mTOR-binding proteins were diluted with digestion buffer and incubated overnight with trypsin. These samples were analyzed by mass spectrometry; identifications were accepted only when the P score was greater than or equal to 0.95. For chemical crosslinking experiments, T cells were treated with dithiobis and then harvested and lysed in a buffer. The precipitates were then analyzed by SDS-PAGE. To measure cell size, T cells were infected with lentiviruses and then selected in the presence of zeocin. The cells were trypsinized the following day and diluted 10-fold. A ViCell cell-size analyzer measured the cell size in 1.0 mL of the diluted cell culture sample.

Overall, the study of PRAS40 in regulating mTOR insulin signaling may lead to new targets for the treatment of diseases related to type 2 diabetes, cancer, and insulin resistance. The results indicate that the Akt/PKB substrate PRAS40 has a negative effect on mTOR signaling. Its binding suppresses mTOR activation and insulin-receptor substrate-1 (IRS-1) and Akt, thereby uncoupling the response of mTOR to Akt signals. The interaction of PRAS40 with mTOR is induced under certain environmental conditions, such as nutrient, leucine, and serum deprivation. In general, this project identified PRAS40 as a crucial mTOR binding partner that mediates Akt signaling to mTOR.

Endoplasmic Reticulum Stress Stimuli and Beta-Cell Death In Type 2 Diabetes

Obesity is associated with insulin resistance; however, type 2 diabetes, a disease characterized by elevated blood glucose due to insulin resistance in muscle and liver tissue as well as impaired insulin secretion from pancreatic beta cells, develops only in genetically predisposed, insulin-resistant subjects with the onset of beta-cell dysfunction. As research progresses, there is clear evidence that beta-cell failure and death result from unresolvable endoplasmic reticulum stress, which leads to chronic and strong activation of inositol-requiring protein 1. Endoplasmic reticulum stress can initiate and produce the characteristics of beta-cell failure and death observed in type 2 diabetes.

Glucose Transport Deficiency in Type 2 Diabetes

GLUT4 glucose transporters migrate to the cell surface in response to insulin signaling, thereby increasing glucose uptake into muscle and fat cells. This is accomplished by stimulating the transport of GLUT4-containing vesicles to the cell membrane. Adult-onset diabetes is often the result of a gradual increase in insulin resistance in individuals who overeat. This desensitization to the effects of insulin interferes with metabolism because vesicles containing GLUT4 cannot fuse efficiently with the cell membrane, so glucose uptake into cells is inhibited. By understanding this pathway, researchers may eventually find a therapeutic workaround to treat those suffering from type 2 diabetes. Presumably, this could be accomplished by synthesizing molecules that mimic the function of GLUT4 and its auxiliaries to resolve the trafficking problem. Alternatively, the insulin pathway could be targeted.

RESOURCES

1. http://www.diabetes.org/diabetes-basics/diabetes-statistics/

2. http://www.ncbi.nlm.nih.gov/pubmedhealth/PMH0001350/

3. http://www.ncbi.nlm.nih.gov/pubmedhealth/PMH0001356/

4. http://www.ncbi.nlm.nih.gov/pmc/articles/PMC2682681/

5. http://www.ncbi.nlm.nih.gov/pmc/articles/PMC3161334/

6. http://diabetes.niddk.nih.gov/dm/pubs/statistics/

7. http://diabetes.diabetesjournals.org/content/60/9/2354.full

8. http://www.mayoclinic.com/health/type-1-diabetes/DS00329/DSECTION=treatments%2Dand%2Ddrugs

9. http://www.mayoclinic.com/health/type-2-diabetes/DS00585/DSECTION=treatments%2Dand%2Ddrugs

10. http://diabetes.diabetesjournals.org/content/48/9/1822.short

11. http://www.ncbi.nlm.nih.gov/pubmed/22482906

12. http://www.nature.com/ncb/journal/v9/n3/pdf/ncb1547.pdf

References

1. Endoplasmic reticulum stress and type 2 diabetes. Back SH, Kaufman RJ. Annu Rev Biochem. 2012;81:767-93. Epub 2012 Mar 23. Review. PMID: 22443930 [PubMed - indexed for MEDLINE]

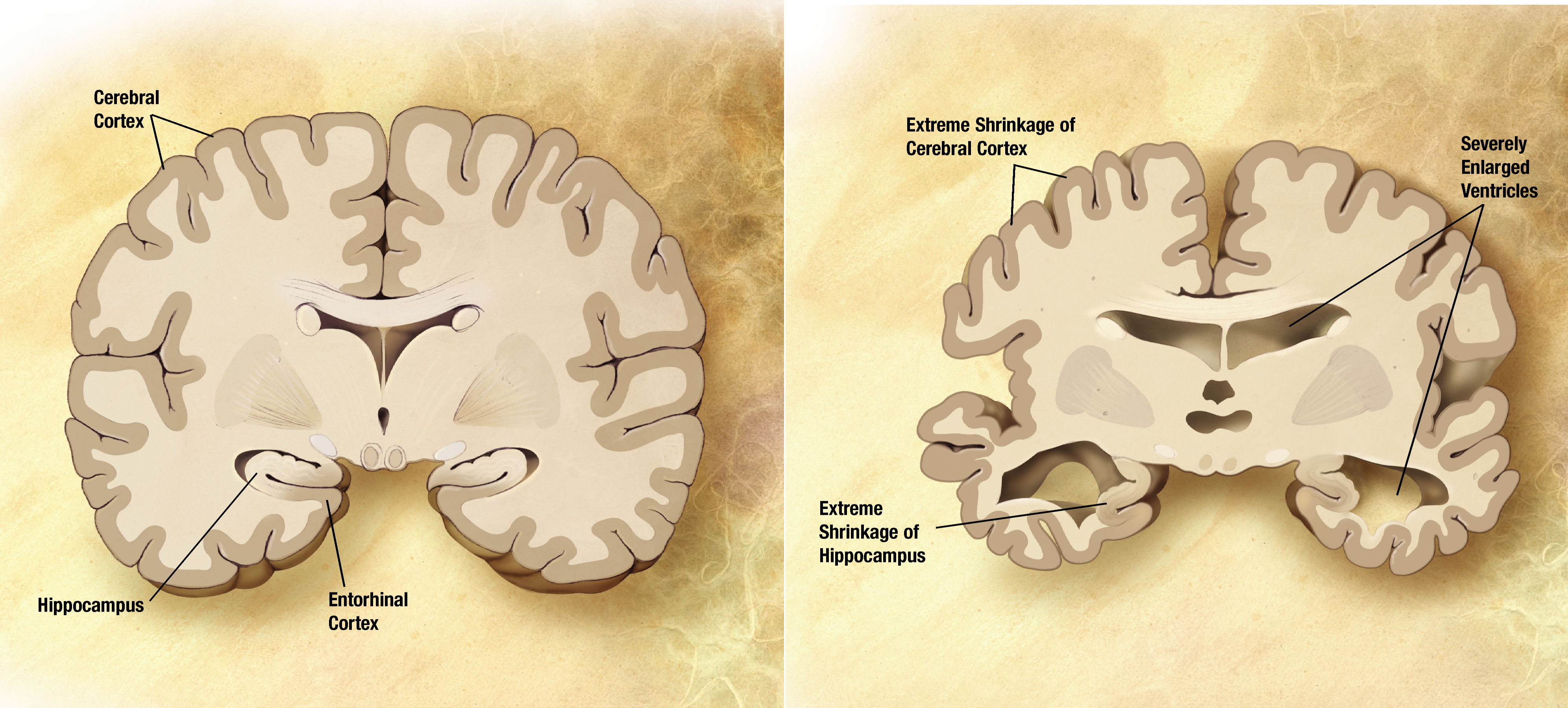

Overview

Alzheimer's is a form of dementia, a decline in mental ability that affects everyday life. The disease attacks the brain and causes problems with memory, thinking, and behavior. The symptoms usually worsen over time. It is often assumed that Alzheimer's is a result of aging; however, this is not the case. Aging simply increases the risk of developing the disease. There is currently no cure for Alzheimer's; treatments can usually only slow its progression.

Associated with Alzheimer's disease are peptides known as β-amyloid, which are found in the brains of people diagnosed with the disease. Researchers have been studying these peptides in order to find a cure (or treatment) that would help patients. Doing so requires understanding the structure of the peptides. Because the structures are complex, the available data are limited, but there has been progress. [1]

Symptoms

Memory – Memory loss associated with Alzheimer’s disease persists and worsens over time. Symptoms may include:

- Repeating the same sentence over and over

- Forgetting conversations, past events, or future appointments

- Misplacing of possessions

- Forgetting names of family members and relationships

- Difficulty understanding surroundings; the person may not know the time or place

Speaking, Writing, Thinking, and Reasoning

- Initial trouble finding the right words during a conversation

- Eventual loss of speaking and writing abilities

- Eventual trouble understanding conversation or written text

- Poor judgment and slow response

Changes in personality and behavior

The brain degeneration caused by Alzheimer’s may affect how people feel. People with Alzheimer's may experience:

- Depression

- Anxiety

- Social withdrawal

- Mood swings

- Distrust in others

- Increased stubbornness

- Irritability and aggressiveness

- Changes in sleeping habits

As the disease progresses, the symptoms increase in severity, leading to more severe memory loss, confusion about time and place, and disorientation. To understand the correlation between the symptoms and the disease, it is necessary to understand the structures of the peptides commonly found in patients: β-amyloid (Aβ) peptides. Many people affected by Alzheimer's require continuous attention and care because they are unable to perform even basic daily activities.

Test For Alzheimer's

A diagnosis of Alzheimer’s disease may include complete physical and neurological exams, CT (computed tomography), and MRI (magnetic resonance imaging). A biopsy of the brain can identify evidence of any of the following: neurofibrillary tangles, neuritic plaques, and senile plaques. Neurofibrillary tangles are twisted filaments of protein within nerve cells that clog the cell, inhibiting neurotransmitters and the function of the nerve cell. Neuritic plaques are abnormal clusters of damaged or dying nerve cells. Senile plaques are areas of waste products around proteins that were produced by dying nerve cells. All of these may be caused by, or may contribute to, Alzheimer’s disease.

Amyloid Fibrils


β-amyloid (Aβ) peptides assemble into different forms, including amyloid fibrils, protofibrils, and oligomers. These have been studied in the hope of understanding Alzheimer's disease. Unfortunately, determining their complete structure has been difficult. The Aβ peptide forms naturally in the human brain as a proteolytic fragment of a precursor protein. It is specifically the Aβ amyloid fibrils that form the core of the dense plaques in the brain associated with Alzheimer's disease.

Amyloid fibrils are fibrillar polypeptide aggregates with an intermolecular cross-β structure. X-ray diffraction showed that the β-strands hydrogen bond with each other and stack in parallel along the fibril axis. The Aβ peptide is amphiphilic, with a hydrophilic N-terminus and a hydrophobic C-terminus that ends at about residue 37-42. The fibrils are twisted, showing crossovers, and are estimated to be about 1 micrometer long. A distinctive feature of Aβ fibrils is their polymorphism, that is, their ability to adopt different arrangements. The fibrils can differ in the number of protofilaments, in their orientation, and in their substructure. These differences are relevant to humans because they could affect folding, reactions, and eventually the severity of Alzheimer's disease in a patient. [1]

Aside from the polymorphism, there is further diversity among the amyloid peptides due to structural deformations. This includes different bends and twists. These deformations allow study of nanoscale mechanical properties of the fibrils.

Structure of β-amyloid

The β-amyloid peptide is a naturally forming proteolytic peptide found in the human brain. It is intrinsically unstructured, meaning that it lacks a stable tertiary structure. Many β-amyloid peptides have disordered and unfolded structures that can only be observed using NMR (nuclear magnetic resonance). The peptide is amphipathic, possessing a hydrophilic N-terminus and a hydrophobic C-terminus. The peptide chain ends at the C-terminus after 36-43 amino acid residues. Many β-amyloid isoforms differ by a single amino acid residue, and many are closely related to Alzheimer’s. β-amyloid undergoes many complex fibrillation pathways, creating intermediate structures such as oligomers, amyloid-derived diffusible ligands, globulomers, paranuclei, and protofibrils. When any of these intermediates deposit as plaques on the walls of cerebral blood vessels, Alzheimer’s disease may be developing.
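The amphipathic character described above can be illustrated numerically. The Python sketch below averages Kyte-Doolittle hydropathy values over the N-terminal and C-terminal parts of the commonly reported human Aβ(1-42) sequence. The sequence and the scale are not given in this text and are supplied here as outside reference data, and the segment boundaries (residues 1-16 and 29-42) are an arbitrary choice made for illustration.

```python
# Kyte-Doolittle hydropathy scale (positive values = hydrophobic).
KYTE_DOOLITTLE = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5,
    "Q": -3.5, "E": -3.5, "G": -0.4, "H": -3.2, "I": 4.5,
    "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8, "P": -1.6,
    "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}

# Commonly reported human Abeta(1-42) sequence (one-letter codes).
AB42 = "DAEFRHDSGYEVHHQKLVFFAEDVGSNKGAIIGLMVGGVVIA"
# Abeta(1-40) lacks the last two C-terminal residues.
AB40 = AB42[:40]


def mean_hydropathy(segment: str) -> float:
    """Average Kyte-Doolittle hydropathy of a peptide segment."""
    return sum(KYTE_DOOLITTLE[aa] for aa in segment) / len(segment)


# Illustrative segment choice: residues 1-16 vs. residues 29-42.
n_terminal = AB42[:16]
c_terminal = AB42[28:]

print(f"length of Abeta(1-42): {len(AB42)}")
print(f"length of Abeta(1-40): {len(AB40)}")
print(f"N-terminal mean hydropathy: {mean_hydropathy(n_terminal):.2f}")
print(f"C-terminal mean hydropathy: {mean_hydropathy(c_terminal):.2f}")
```

A negative average for the N-terminal segment and a positive average for the C-terminal segment are consistent with a hydrophilic N-terminus and a hydrophobic C-terminus.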

The cross-β sheet structure of a β Amyloid

Many different amyloid-like polypeptides share a common cross-β sheet structure. In these structures the β-strands run perpendicular to the fibril axis and are linked along the backbone by hydrogen bonds and other non-covalent interactions, and the sheets lie parallel to one another. Recent crystallographic studies of microcrystals have revealed structures called steric zippers, which are present in many amyloid fibrils. A steric zipper is a pair of cross-β sheets whose side chains interlock like the teeth of a zipper. The cross-β sheet conformation has dry and wet interfaces. The wet interface is covered by water molecules, which create a greater distance between two adjacent sheets. The dry interface does not contain water, so the two adjacent sheets are much closer together. While the polar side chains of the wet interface are stabilized by hydrogen bonding, the side chains of the dry interface interlock with the side chains of the adjacent sheet, forming the steric zipper described above. β-amyloids of different lengths favor either the parallel or the antiparallel form. A β-amyloid can also have separate parallel and antiparallel segments: for example, residues 1-25 could have one conformation and residues 26-43 the other. These β-sheet structures give β-amyloid many distinguishing properties. For example, β-amyloids have a high affinity for specific dyes such as Congo red and Thioflavin T. These dyes can help mark and track the activity of β-amyloids inside the brain.

Models of Amyloids

Two forms of the Aβ peptide have been studied most closely: Aβ(1-40) and Aβ(1-42). The numbers 40 and 42 refer to the number of residues in each form.

The Aβ(1-42) form is proposed to be more pathogenic than the Aβ(1-40) form. In experiments with the model organism Drosophila melanogaster, Aβ(1-42) proved toxic and resulted in a shorter life span. Aβ fibrils are a major factor leading to Alzheimer's disease. It has been hypothesized that they are toxic and eventually kill the cells they come in contact with by penetrating their membranes. It has also been suggested that the activity of these peptides is intracellular rather than extracellular. Aβ amyloid fibrils are complex and segregate into different populations. Understanding Alzheimer's disease therefore requires understanding these populations of Aβ amyloid fibrils, which will help researchers work toward treatments for the disease.

It is often difficult to isolate Aβ amyloid peptides, so the amount of information obtained from them is very limited. The full-length structure of Aβ amyloid fibrils has yet to be determined, even with the use of X-ray crystallography. Many other techniques have been used to study Aβ amyloids, including infrared spectroscopy, NMR, mass spectrometry, and electron paramagnetic resonance. Unfortunately, the data they provide are rather indirect. The most direct approaches are solid-state NMR and electron cryomicroscopy (cryo-EM), which can resolve Aβ amyloid fibrils at near-atomic resolution. They provide chemical shifts and even bond angles, allowing researchers to identify the residues and their sheet structure.

Many models of Aβ peptides have been proposed. However, because different fibril conformations can form under different conditions, these models must be interpreted with caution. The general model of an Aβ fibril is a U-shaped peptide fold, referred to as a β-arc. [2]

A Molecular Link Between the Active Component of Marijuana and Alzheimer's Disease Pathology

Recent studies have shown that the active component of marijuana, Δ9-tetrahydrocannabinol (THC), inhibits AChE-induced β-amyloid aggregation in the pathology of Alzheimer's disease. Some studies have demonstrated the ability of THC to provide neuroprotection against the toxicity of the β-amyloid peptide. One of the causes of Alzheimer's disease is the deposition of β-amyloid in portions of the brain that are important for memory and cognition. This deposition and plaque formation is promoted by the enzyme acetylcholinesterase (AChE). AChE degrades the neurotransmitter acetylcholine in the synaptic cleft, so inhibiting it raises the amount of acetylcholine available there. AChE also functions as an allosteric effector that accelerates the formation of amyloid fibrils in the brain. In vitro studies have demonstrated that inhibiting AChE decreases β-amyloid deposition, and THC is a very good inhibitor.

Computational docking of THC to AChE using AutoDock revealed that THC has a high binding affinity for AChE. In addition to binding well, THC showed interactions with the backbone carbonyls of residues Phe123 and Ser125 of AChE. Furthermore, the ability of THC to inhibit AChE catalytic activity was tested using steady-state kinetics. The results showed that THC inhibits AChE with a Ki of 10.2 μM, a value that is relatively competitive with the drugs currently on the market for treating Alzheimer's disease. While THC shows competitive inhibition relative to the substrate, this does not necessitate a direct interaction between THC and the AChE active site. In fact, THC can bind to the peripheral anionic site (PAS), an allosteric site on AChE, while still blocking entry into the active site and preventing AChE from promoting plaque deposition. This is why THC can act as a competitive inhibitor with respect to the AChE substrate without occupying the active site itself.
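The effect of a competitive inhibitor with the reported Ki can be illustrated with the standard Michaelis-Menten rate law, in which the inhibitor raises the apparent Km by a factor of (1 + [I]/Ki). The following is a minimal sketch: only the Ki of 10.2 μM comes from the text, while the Vmax, Km, and concentrations are hypothetical placeholders chosen for illustration.

```python
KI_THC_UM = 10.2  # Ki of THC for AChE in micromolar, as reported in the text


def competitive_rate(substrate_um, inhibitor_um, vmax, km_um, ki_um):
    """Michaelis-Menten rate in the presence of a competitive inhibitor.

    A competitive inhibitor leaves Vmax unchanged and scales the
    apparent Km by (1 + [I] / Ki).
    """
    apparent_km = km_um * (1.0 + inhibitor_um / ki_um)
    return vmax * substrate_um / (apparent_km + substrate_um)


# Hypothetical enzyme parameters, NOT taken from the source.
VMAX = 1.0      # arbitrary rate units
KM_UM = 100.0   # assumed Km for the substrate, micromolar

for thc_um in (0.0, KI_THC_UM, 5 * KI_THC_UM):
    rate = competitive_rate(KM_UM, thc_um, VMAX, KM_UM, KI_THC_UM)
    print(f"[THC] = {thc_um:5.1f} uM -> rate = {rate:.3f} x Vmax")
```

With the inhibitor concentration equal to Ki, the apparent Km doubles, so at a substrate concentration equal to the uninhibited Km the rate falls from one half to one third of Vmax. Raising the substrate concentration restores the rate, which is the kinetic signature of competitive inhibition.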

References

"What is Alzheimer's?." Alzheimer's and Dementia. Alzheimer's Association, 2012. Web. 20 Nov. 2012. <http://www.alz.org/alzheimers_disease_what_is_alzheimers.asp>.

Fandrich, Marcus, Matthias Schmidt, and Nikolaus Grigorieff. Recent Progress in Understanding Alzheimer's β-amyloid Structures. Trends Biochem. Sci. (author manuscript) 36(6), 338−345 (2011).

Sipe, J.D. Amyloidosis. A. Rev. Biochem. 61, 947−76 (1992).

Glenner, G.G. Amyloid deposits and amyloidosis: The β-fibrilloses (first of two parts). New. Engl. J. Med. 302, 1283−1292 (1980).

1. Fandrich, Marcus, Matthias Schmidt, Nikolaus Grigorieff (February 2011). "Recent Progress in Understanding Alzheimer's β-amyloid Structures". Trends Biochem. Sci. 36 (6): 338–45.

2. Roher AE, Lowenson JD, Clarke S, Woods AS, Cotter RJ, Gowing E, Ball MJ (November 1993). "beta-Amyloid-(1-42) is a major component of cerebrovascular amyloid deposits: implications for the pathology of Alzheimer disease". Proc. Natl. Acad. Sci. U.S.A. 90 (22): 10836–40. Bibcode:1993PNAS...9010836R. doi:10.1073/pnas.90.22.10836. PMC 47873. PMID 8248178.

Overview

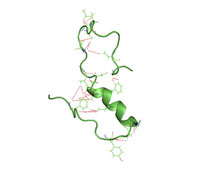

Despite the many pharmacological advancements achieved in the past few decades through structural biochemistry, prescribed medications work in fewer than fifty percent of the patients who take them. The reason is that, while mostly similar, everyone's genome is slightly different, so people respond differently to the same medications. One underlying cause is variation in the genes that encode cytochrome P450. Cytochrome P450, an example of which is pictured at right, refers to a large and diverse family of enzymes that process the drugs we take. As a result, responses to a drug can vary as widely as the variants of these genes do.

If one knew a patient's entire genome, however, it would be relatively easy to predict which medications would work and which would be least effective. This is the idea behind personalized medicine, a medical model that uses information from a patient's genome and proteome to optimize his or her medical care. Personalized medicine is the ultimate goal on the medicinal stairway model, pictured at right. The lowest step is the use of blockbuster drugs; the next step is stratified medicine; and the top step is personalized medicine, the most specific and accurate of the three approaches to patient care.

Over the previous fifty years, the primary medical model has been that of "blockbuster drugs," or medicines that work for a majority of the general population. Specifically, a blockbuster drug is one that generates more than $1 billion of revenue for the patent owner each year. Some examples of past blockbuster drugs are Lipitor, Celebrex, and Nexium. Leading scientists in the field of biochemistry acknowledge that we are slowly leaving the blockbuster drug era and are moving to the second level, stratified medicine.

Stratified medicine refers to managing a patient group that has shared biological characteristics, such as the presence or absence of a gene mutation. Molecular diagnostic testing is used to confirm these similarities; then, the optimal treatment is selected in hopes of achieving the best possible result for the group. An example of stratified medicine in practice is grouping patients with breast cancer who have estrogen receptor positivity or HER2 over-expression, and who can be treated according to these characteristics with an anti-estrogen or a HER2 inhibitor.
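The grouping step in the breast cancer example can be sketched in a few lines of code. This is a simplified illustration, not a clinical rule: the field names `er_positive` and `her2_overexpressed`, the function name `stratify`, and the patient records are all hypothetical, and a patient who carries both markers is simply listed in both groups.

```python
def stratify(patients):
    """Group patient IDs by the biomarker-matched treatment class."""
    groups = {"anti-estrogen": [], "HER2 inhibitor": [], "unstratified": []}
    for patient in patients:
        matched = False
        if patient["er_positive"]:
            groups["anti-estrogen"].append(patient["id"])
            matched = True
        if patient["her2_overexpressed"]:
            groups["HER2 inhibitor"].append(patient["id"])
            matched = True
        if not matched:
            # No shared marker found, so no stratified option applies here.
            groups["unstratified"].append(patient["id"])
    return groups


# Hypothetical molecular diagnostic results for four patients.
patients = [
    {"id": "P1", "er_positive": True, "her2_overexpressed": False},
    {"id": "P2", "er_positive": False, "her2_overexpressed": True},
    {"id": "P3", "er_positive": True, "her2_overexpressed": True},
    {"id": "P4", "er_positive": False, "her2_overexpressed": False},
]

groups = stratify(patients)
for treatment, ids in groups.items():
    print(treatment, ids)
```

The point of the sketch is that treatment is chosen per group, based on a shared marker, rather than per individual; personalized medicine would replace the two boolean markers with a much richer profile for each patient.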

Lastly, personalized medicine is the desired goal we have yet to fully reach. Proteomic profiling, metabolomic analysis, and genetic testing of each individual are required to optimize preventative and therapeutic care. The diagram pictured on the right summarizes the steps needed for personalized medicine to be successful. First, an individual's genome is sequenced. As sequencing technology advances, the cost of sequencing a genome will decrease, making the personalized medicine model more accessible and more economical. Then, genomic analysis techniques such as SNP genotyping and microarrays are used to gather information about which medicines will work best, for example by revealing an individual's genetic predisposition toward certain diseases and how long a certain drug will be effective. A current example of the progress made toward personalized medicine is that erbB2 and EGFR protein levels are measured in breast, lung, and colorectal cancer patients before proper treatments are selected. As the personalized medicine field advances, molecular information elucidated from tissues will be combined with a patient's medical and family history, data from imaging, and a multitude of laboratory tests to develop more effective treatments for a wider variety of conditions.

Because everyone has a unique genome, the advantages of personalized medicine through pharmacogenetic approaches include:

1. Increased effectiveness of the drug, for example by choosing the right medicine and dosage so that it is absorbed more easily by the patient's body

2. Minimized side effects

A model organism is an indispensable tool for medical research. Scientists use model organisms to investigate questions about living systems that cannot be studied in any other way. These models allow scientists to compare creatures that differ in structure but share similarities in body chemistry. Even organisms without a structured body, such as yeast and mold, can provide insights into how tissues and organs work in the human body. This is because the enzymes used in metabolism and the processing of nutrients are similar in all living things. Model organisms are also useful because they are simple, inexpensive, and easy to work with.

Examples of model organisms:

Escherichia coli: Bacterium

There are good and bad bacteria. The form of E. coli most people are familiar with is the one associated with tainted hamburger meat. However, there are also "non-disease-causing" strains of E. coli in the intestinal tracts of humans and animals. These bacteria are an important source of vitamin K and B-complex vitamins. They also aid digestion and provide protection against harmful bacteria. Comparing harmful and helpful strains of E. coli can help reveal the genetic differences between bacteria that live harmlessly in humans and bacteria that cause food poisoning.

Dictyostelium discoideum (Dicty): Amoeba

Dicty is a microscopic amoeba, about 100,000 times smaller than a grain of sand. This organism has between 8,000 and 10,000 genes, many of which are similar to those in humans and other animals. Dicty cells usually grow independently. However, when food is limited, these cells can pile on top of each other to form a multicellular structure of up to 100,000 cells. When migrating, this slug-like structure leaves behind a trail of slime. It can also disperse spores that are capable of generating new amoebae.

Neurospora crassa: Bread Mold

This model organism is used worldwide in genetic research. Researchers like to use bread mold because it is easy to grow and can help answer questions about how species adapt to different environments. Neurospora is also useful for studying sleep cycles and other biological rhythms.

Saccharomyces cerevisiae: Yeast

Yeast is commonly used in research, but it is also an important part of life outside the laboratory. It is a fungus and a eukaryote. Researchers prefer yeast because it grows quickly, is cheap and safe to use, and is easy to work with. Yeast can be used as a host for mammalian genes, allowing scientists to study how those genes function inside the host. Discoveries such as the antibiotic penicillin and the sirtuin proteins, which are linked to aging, resulted from studying fungi.

Arabidopsis thaliana: Mustard Plant

Arabidopsis is a flowering plant that is related to cabbage and mustard. Researchers often use this plant to study plant growth because its genes are very similar to those of other flowering plants and it has little DNA that does not encode proteins, which makes its genes easy to study. Arabidopsis has eukaryotic cells, and the plant matures quickly, in about six weeks. Plant cells communicate in much the same way as human cells do, which makes Arabidopsis useful for studying genetics.

Caenorhabditis elegans: Roundworm

Roundworms are as tiny as the head of a pin, and they live in dirt. In the laboratory, they live in petri dishes and feed on bacteria. C. elegans has 959 cells, and one third of them form the nervous system. Researchers like to use this roundworm because it is transparent, which allows a clear view of what goes on inside the body. C. elegans has more than 19,000 genes, compared with about 25,000 in humans. C. elegans was the first animal to have its genome decoded, and the majority of its genes are similar to those of humans and other organisms.