Statistics/Print version


The current, editable version of this book is available in Wikibooks, the open-content textbooks collection, at

https://en.wikibooks.org/wiki/Statistics

Introduction

Your company has created a new drug that may cure arthritis. How would you conduct a test to confirm the drug's effectiveness?

The latest sales data have just come in, and your boss wants you to prepare a report for management on places where the company could improve its business. What should you look for? What should you not look for?

You and a friend are at a baseball game, and out of the blue he offers you a bet that neither team will hit a home run in that game. Should you take the bet?

You want to conduct a poll on whether your school should use its funding to build a new athletic complex or a new library. How many people do you have to poll? How do you ensure that your poll is free of bias? How do you interpret your results?

A widget maker in your factory that normally breaks 4 widgets for every 100 it produces has recently started breaking 5 widgets for every 100. When is it time to buy a new widget maker? (And just what is a widget, anyway?)

These are some of the many real-world examples that require the use of statistics. How would you approach these problems? There are several stepwise procedures that people follow, but is there a general approach?

- "Find possible solutions, decide on a solution, plan the solution, implement the solution, learn from the results for future solutions (or re-solution)."

- "SOAP - subjective - the problem as given, objective - the problem after examination, assessment - the better defined problem, plan - decide if guidelines to management already exist, and blueprint the solution for this case, or generate a risk-minimizing, new solution path".

- "HAMRC - hypothesis, aim, methodology, results, conclusion" - the concept that there is no real difference is the null hypothesis.

Then there is the joke that compares the different ways of thinking:

"A physicist, a chemist and a statistician were working collaboratively on a problem, when the wastepaper basket spontaneously combusted (they all swore they had stopped smoking). The chemist said, 'quick, we must reduce the concentration of the reactant which is oxygen, by increasing the relative concentration of non-reactive gases, such as carbon dioxide and carbon monoxide. Place a fire blanket over the flames.' The physicist, interjected, 'no, no, we must reduce the heat energy available for activating combustion ; get some water to douse the flame'. Meanwhile, the statistician was running around lighting more fires. The others asked with alarm, 'what are you doing?'. 'Trying to get an adequate sample size'."

General Definition

Statistics, in short, is the study of data. It includes descriptive statistics (the study of methods and tools for collecting data, and mathematical models to describe and interpret data) and inferential statistics (the systems and techniques for making probability-based decisions and accurate predictions).

Etymology

As its name implies, statistics has its roots in the idea of "the state of things". The word itself comes from the New Latin term statisticum collegium, meaning "council of state". Eventually, this evolved into the Italian word statista, meaning "statesman", and the German word Statistik, meaning "collection of data involving the State". Gradually, the term came to be used to describe the collection of any sort of data.

Statistics as a subset of mathematics

As one would expect, statistics is largely grounded in mathematics, and the study of statistics has contributed many major concepts to mathematics: probability, distributions, samples and populations, the bell curve, estimation, and data analysis.

Up ahead

Up ahead, we will learn about subjects in modern statistics and some practical applications of statistics. We will also lay out some of the background mathematical concepts required to begin studying statistics.

A remarkable amount of modern statistics comes from the original work of R.A. Fisher in the early 20th century. Although there are a dizzying number of minor disciplines in the field, a few fundamental areas of study stand out.

The beginning student of statistics will be more interested in one topic or another depending on his or her outside interest. The following is a list of some of the primary branches of statistics.

Probability Theory and Mathematical Statistics

Those of us who are purists and philosophers may be interested in the intersection between pure mathematics and the messy realities of the world. A rigorous study of probability, especially of probability distributions and the distribution of errors, can provide an understanding of where all these statistical procedures and equations come from. Although this sort of rigor is likely to get in the way of a psychologist (for example) learning and using statistics effectively, it is important if one wants to do serious (i.e. graduate-level) work in the field.

That being said, there is good reason for all students to have a fundamental understanding of where all these "statistical techniques and equations" are coming from! We're always more adept at using a tool if we can understand why we're using that tool. The challenge is getting these important ideas to the non-mathematician without the student's eyes glazing over. One can take this argument a step further and claim that a vast number of students will never actually use a t-test (they will never plug those numbers into a calculator and churn through some esoteric equations), but by having a fundamental understanding of such a test, they will be able to understand (and question) the results of someone else's findings.

Design of Experiments

One of the most neglected aspects of statistics, and maybe the single greatest reason that statisticians drink, is experimental design. So often a scientist will bring the results of an important experiment to a statistician and ask for help analyzing them, only to find that a flaw in the experimental design rendered the results useless. So often we statisticians have researchers come to us hoping that we will somehow magically "rescue" their experiments.

A friend provided me with a classic example of this. In his psychology class he was required to conduct an experiment and summarize its results. He decided to study whether music had an impact on problem solving. He had a large number of subjects (myself included) solve a puzzle first in silence, then while listening to classical music, then while listening to rock and roll, and finally in silence again. He measured how long it took to complete each of the tasks and then summarized the results.

What my friend failed to consider was that the results were strongly affected by a learning effect. The first puzzle always took longer because the subjects were first learning how to work the puzzle. By the third try (when subjected to rock and roll) the subjects were much more adept at solving the puzzle, so the results of the experiment would seem to suggest that people were much better at solving problems while listening to rock and roll!

The simple act of randomizing the order of the tests would have isolated the "learning effect" and in fact, a well-designed experiment would have allowed him to measure both the effects of each type of music and the effect of learning. Instead, his results were meaningless. A careful experimental design can help preserve the results of an experiment, and in fact some designs can save huge amounts of time and money, maximize the results of an experiment, and sometimes yield additional information the researcher had never even considered!
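The randomization described above can be sketched in a few lines of Python. This is only an illustration: the condition labels, the subject numbering, and the helper name `assign_orders` are assumptions made for the example, not part of the original experiment.

```python
import random

# Hypothetical condition labels taken from the story above.
CONDITIONS = ["silence", "classical", "rock"]


def assign_orders(subject_ids, conditions, seed=None):
    """Give each subject an independently shuffled order of conditions."""
    rng = random.Random(seed)  # a seed makes the assignment reproducible
    orders = {}
    for subject in subject_ids:
        order = list(conditions)  # copy so the original list is untouched
        rng.shuffle(order)
        orders[subject] = order
    return orders


# Six hypothetical subjects, numbered 1 to 6.
orders = assign_orders(range(1, 7), CONDITIONS, seed=42)
for subject, order in orders.items():
    print(subject, order)
```

Because every condition now appears in different positions across subjects, a learning effect tied to position is no longer confounded with any one type of music.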

Sampling

Similar to the design of experiments, the study of sampling allows us to find the most effective statistical design, one that will optimize the amount of information we can collect while minimizing the level of effort. Sampling is very different from experimental design, however. In a laboratory we can design an experiment and control it from start to finish. But often we want to study something outside of the laboratory, over which we have much less control.

If we wanted to measure the population of some harmful beetle and its effect on trees, we would be forced to travel into some forest land and make observations, for example: measuring the population of the beetles in different locations, noting which trees they were infesting, measuring the health and size of these trees, etc.

Sampling design gets involved in questions like "How many measurements do I have to take?" or "How do I select the locations from which I take my measurements?" Without planning for these issues, researchers might spend a lot of time and money only to discover that they really have to sample ten times as many points to get meaningful results or that some of their sample points were in some landscape (like a marsh) where the beetles thrived more or the trees grew better.

Modern Regression

Regression models relate variables to each other in a linear fashion. For example, if you recorded the heights and weights of several people and plotted them against each other, you would find that as height increases, weight tends to increase too. You would probably also see that a straight line through the data is about as good a way of approximating the relationship as you will be able to find, though there will be some variability about the line. Such linear models are possibly the most important tool available to statisticians. They have a long history and many of the more detailed theoretical aspects were discovered in the 1970s. The usual method for fitting such models is by "least squares" estimation, though other methods are available and are often more appropriate, especially when the data are not normally distributed.
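As a minimal sketch of least squares estimation for a single explanatory variable, the following Python computes the slope and intercept of the fitted line from the usual closed-form expressions. The height and weight figures are made up purely for illustration, and the function name `least_squares_line` is an assumption of this example.

```python
def least_squares_line(xs, ys):
    """Return (slope, intercept) of the least squares line through (xs, ys)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of cross-deviations and sum of squared deviations of x.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Made-up heights (cm) and weights (kg), for illustration only.
heights_cm = [150, 160, 170, 180, 190]
weights_kg = [52, 58, 69, 77, 84]

slope, intercept = least_squares_line(heights_cm, weights_kg)
print(slope, intercept)  # slope is about 0.83 kg per cm, intercept about -73.1 kg
```

With these invented numbers, each additional centimetre of height corresponds to roughly 0.83 kg of additional weight on the fitted line.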

What happens, though, if the relationship is not a straight line? How can a curve be fit to the data? There are many answers to this question. One simple solution is to fit a quadratic relationship, but in practice such a curve is often not flexible enough. Also, what if you have many variables and relationships between them are dissimilar and complicated?

Modern regression methods aim at addressing these problems. Methods such as generalized additive models, projection pursuit regression, neural networks and boosting allow for very general relationships between explanatory variables and response variables, and modern computing power makes these methods a practical option for many applications.

Classification

Some things are different from others. How? That is, how are objects classified into their respective groups? Consider a bank that is hoping to lend money to customers. Some customers who borrow money will be unable or unwilling to pay it back, though most will pay it back as regular repayments. How is the bank to classify customers into these two groups when deciding which ones to lend money to?

The answer to this question no doubt is influenced by many things, including a customer's income, credit history, assets, already existing debt, age and profession. There may be other influential, measurable characteristics that can be used to predict what kind of customer a particular individual is. How should the bank decide which characteristics are important, and how should it combine this information into a rule that tells it whether or not to lend the money?

This is an example of a classification problem, and statistical classification is a large field containing methods such as linear discriminant analysis, classification trees and neural networks.

Time Series

Many types of research look at data that are gathered over time, where an observation taken today may have some correlation with the observation taken tomorrow. Two prominent examples of this are the fields of finance (the stock market) and atmospheric science.

We've all seen those line graphs of stock prices as they meander up and down over time. Investors are interested in predicting which stocks are likely to keep climbing (i.e. when to buy) and when a stock in their portfolio is falling. It is easy to be misled by a sudden jolt of good news or a simple "market correction" into inferring—incorrectly—that one or the other is taking place!

In meteorology scientists are concerned with the venerable science of predicting the weather. Whether trying to predict if tomorrow will be sunny or determining whether we are experiencing true climate changes (i.e. global warming) it is important to analyze weather data over time.

Survival Analysis

Suppose that a pharmaceutical company is studying a new drug which it is hoped will cause people to live longer (whether by curing them of cancer, reducing their blood pressure or cholesterol and thereby their risk of heart disease, or by some other mechanism). The company will recruit patients into a clinical trial, give some patients the drug and others a placebo, and follow them until they have amassed enough data to answer the question of whether, and by how long, the new drug extends life expectancy.

Such data present problems for analysis. Some patients will have died earlier than others, and often some patients will not have died before the clinical trial completes. Clearly, patients who live longer contribute informative data about the ability (or not) of the drug to extend life expectancy. So how should such data be analyzed?

Survival analysis provides answers to this question and gives statisticians the tools necessary to make full use of the available data to correctly interpret the treatment effect.

Categorical Analysis

In laboratories we can measure the weight of fruit that a plant bears, or the temperature of a chemical reaction. These data points are easily measured with a scale or a thermometer, but what about the color of a person's eyes or her attitudes regarding the taste of broccoli? Psychologists can't measure someone's anger with a measuring stick, but they can ask their patients if they feel "very angry" or "a little angry" or "indifferent". Entirely different methodologies must be used in statistical analysis from these sorts of experiments. Categorical Analysis is used in a myriad of places, from political polls to analysis of census data to genetics and medicine.

Clinical Trials

In the United States, the FDA requires that pharmaceutical companies undergo rigorous procedures called Clinical Trials and statistical analyses to assure public safety before allowing the sale or use of new drugs. In fact, the pharmaceutical industry employs more statisticians than any other business!

Further reading


Imagine reading a book for the first few chapters and then becoming able to get a sense of what the ending will be like - this is one of the great reasons to learn statistics. With the appropriate tools and solid grounding in statistics, one can use a limited sample (e.g. read the first five chapters of Pride & Prejudice) to make intelligent and accurate statements about the population (e.g. predict the ending of Pride & Prejudice). This is what knowing statistics and statistical tools can do for you.

In today's information-overloaded age, statistics is one of the most useful subjects anyone can learn. Newspapers are filled with statistical data, and anyone who is ignorant of statistics is at risk of being seriously misled about important real-life decisions such as what to eat, who is leading the polls, how dangerous smoking is, etc. Knowing a little about statistics will help one to make more informed decisions about these and other important questions. Furthermore, statistics are often used by politicians, advertisers, and others to twist the truth for their own gain. For example, a company selling the cat food brand "Cato" (a fictitious name here), may claim quite truthfully in their advertisements that eight out of ten cat owners said that their cats preferred Cato brand cat food to "the other leading brand" cat food. What they may not mention is that the cat owners questioned were those they found in a supermarket buying Cato.

“The best thing about being a statistician is that you get to play in everyone else’s backyard.” John Tukey, Princeton University

More seriously, those proceeding to higher education will learn that statistics is the most powerful tool available for assessing the significance of experimental data, and for drawing the right conclusions from the vast amounts of data faced by engineers, scientists, sociologists, and other professionals in most spheres of learning. There is no study with scientific, clinical, social, health, environmental or political goals that does not rely on statistical methodologies. The basic reason for that is that variation is ubiquitous in nature and probability and statistics are the fields that allow us to study, understand, model, embrace and interpret variation.

See Also UCLA Brochure on Why Study Probability & Statistics

Statistics is a diverse subject and thus the mathematics that are required depend on the kind of statistics we are studying. A strong background in linear algebra is needed for most multivariate statistics, but is not necessary for introductory statistics. A background in Calculus is useful no matter what branch of statistics is being studied.

At a bare minimum the student should have a grasp of basic concepts taught in Algebra and be comfortable with "moving things around" and solving for an unknown. Most of the statistics here will derive from a few basic things that the reader should become acquainted with.

Absolute Value


If the number is zero or positive, then the absolute value of the number is simply the same number. If the number is negative, then take away the negative sign to get the absolute value.

Examples

- |42| = 42

- |-5| = 5

- |2.21| = 2.21
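The verbal rule above translates directly into code. The sketch below uses a hypothetical helper name, `absolute_value`, and checks it against Python's built-in `abs` on the three examples.

```python
def absolute_value(x):
    """Return x unchanged if it is zero or positive, otherwise drop the sign."""
    return x if x >= 0 else -x


for value in (42, -5, 2.21):
    # The hand-written rule agrees with Python's built-in abs().
    print(value, absolute_value(value), abs(value))
```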

Factorials

A factorial is a calculation that gets used a lot in probability. It is defined only for integers greater than or equal to zero as:

$$n! = \begin{cases} 1 & \text{if } n = 0 \\ n \cdot (n-1)! & \text{if } n \geq 1 \end{cases}$$

Examples

In short, this means that:

| 0! = | 1 | = 1 |

| 1! = | 1 · 1 | = 1 |

| 2! = | 2 · 1 | = 2 |

| 3! = | 3 · 2 · 1 | = 6 |

| 4! = | 4 · 3 · 2 · 1 | = 24 |

| 5! = | 5 · 4 · 3 · 2 · 1 | = 120 |

| 6! = | 6 · 5 · 4 · 3 · 2 · 1 | = 720 |
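The table above can be reproduced with a short loop. This is a minimal sketch; the function name `factorial` is chosen for the example, and the result is compared with the standard library's `math.factorial`.

```python
import math


def factorial(n):
    """Return n! for a non-negative integer n (0! is defined as 1)."""
    if not isinstance(n, int) or n < 0:
        raise ValueError("factorial is only defined for integers >= 0")
    result = 1
    for k in range(2, n + 1):  # multiply 2 * 3 * ... * n
        result *= k
    return result


for n in range(7):
    # Each line matches a row of the table and the standard library value.
    print(n, factorial(n), math.factorial(n))
```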

Summation

The summation (also known as a series) is used more than almost any other technique in statistics. It is a method of representing addition over lots of values without putting + after +. We represent summation using a big uppercase sigma: ∑.

Examples

Very often in statistics we will sum a list of related variables:

$$\sum_{i=0}^{4} x_i = x_0 + x_1 + x_2 + x_3 + x_4$$

Here we are adding all the x variables (which will hopefully all have values by the time we calculate this). The expression below the ∑ (i=0, in this case) represents the index variable and what its starting value is (i with a starting value of 0) while the number above the ∑ represents the number that the variable will increment to (stepping by 1, so i = 0, 1, 2, 3, and then 4). Another example:

$$\sum_{i=0}^{4} 2x_i = 2x_0 + 2x_1 + 2x_2 + 2x_3 + 2x_4 = 2\sum_{i=0}^{4} x_i$$

Notice that we would get the same value by moving the 2 outside of the summation (perform the summation and then multiply by 2, rather than multiplying each component of the summation by 2).
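A quick numerical check of both points, the index running from 0 to 4 and the constant factor moving outside the sum, might look like this in Python. The five values in `xs` are arbitrary numbers invented for the example.

```python
# Arbitrary illustrative values standing in for x_0, x_1, x_2, x_3, x_4.
xs = [3, 1, 4, 1, 5]

# Sum over i = 0, 1, 2, 3, 4 (range(0, 5) stops before 5).
total = sum(xs[i] for i in range(0, 5))

# Multiplying each term by 2 before summing ...
doubled_inside = sum(2 * x for x in xs)
# ... gives the same value as summing first and then multiplying by 2.
doubled_outside = 2 * sum(xs)

print(total, doubled_inside, doubled_outside)  # 14 28 28
```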

Infinite series

There is no reason, of course, that a series has to stop at any predetermined, or even finite, value: it can keep going without end. These series are called "infinite series", and sometimes they converge to a finite value, with the partial sums getting arbitrarily close to that value as the number of terms approaches infinity (∞).

Examples

$$\sum_{k=0}^{\infty} r^k = \frac{1}{1-r} \quad \text{for } |r| < 1$$

This example is the famous geometric series. Note both that the series goes to ∞ (infinity, meaning it does not stop) and that it is only valid for certain values of the variable r. If r is between -1 and 1 (-1 < r < 1), then the summation will get closer to (i.e., converge on) 1 / (1 - r) the further you take the series out.
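To see the convergence numerically, one can compute partial sums of the geometric series for a value of r inside (-1, 1) and compare them with 1 / (1 - r). The choice r = 0.5 and the helper name `geometric_partial_sum` are assumptions made for this sketch.

```python
def geometric_partial_sum(r, n_terms):
    """Sum of r**0 + r**1 + ... + r**(n_terms - 1)."""
    return sum(r ** k for k in range(n_terms))


r = 0.5                 # any value with -1 < r < 1 would do
limit = 1 / (1 - r)     # the value the series converges on

for n_terms in (1, 5, 10, 20, 50):
    partial = geometric_partial_sum(r, n_terms)
    # The gap between the partial sum and the limit shrinks as terms are added.
    print(n_terms, partial, limit - partial)
```

For values of r outside the interval, such as r = 2, the partial sums grow without bound and the formula no longer applies.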

Linear Approximation

| v / α | 0.20 | 0.10 | 0.05 | 0.025 | 0.01 | 0.005 |

|---|---|---|---|---|---|---|

| 40 | 0.85070 | 1.30308 | 1.68385 | 2.02108 | 2.42326 | 2.70446 |

| 50 | 0.84887 | 1.29871 | 1.67591 | 2.00856 | 2.40327 | 2.67779 |

| 60 | 0.84765 | 1.29582 | 1.67065 | 2.00030 | 2.39012 | 2.66028 |

| 70 | 0.84679 | 1.29376 | 1.66691 | 1.99444 | 2.38081 | 2.64790 |

| 80 | 0.84614 | 1.29222 | 1.66412 | 1.99006 | 2.37387 | 2.63869 |

| 90 | 0.84563 | 1.29103 | 1.66196 | 1.98667 | 2.36850 | 2.63157 |

| 100 | 0.84523 | 1.29007 | 1.66023 | 1.98397 | 2.36422 | 2.62589 |

Let us say that you are looking at a table of values, such as the one above. You want to approximate (get a good estimate of) the values at 63, but you do not have those values on your table. A good solution here is to use a linear approximation to get a value which is probably close to the one that you really want, without having to go through all of the trouble of calculating the extra step in the table.

$$f(x_i) \approx \frac{f(x_{\lceil i \rceil}) - f(x_{\lfloor i \rfloor})}{x_{\lceil i \rceil} - x_{\lfloor i \rfloor}} \left(x_i - x_{\lfloor i \rfloor}\right) + f(x_{\lfloor i \rfloor})$$

This is just the equation for a line applied to the table of data. $x_i$ represents the data point you want to know about, $x_{\lfloor i \rfloor}$ is the known data point beneath the one you want to know about, and $x_{\lceil i \rceil}$ is the known data point above the one you want to know about.

Examples

Find the value at 63 for the 0.05 column, using the values on the table above.

First we confirm on the above table that we need to approximate the value. If we know it exactly, then there really is no need to approximate it. As it stands, 63 falls between the table rows for 60 and 70. Everything else we can get from the table:

$$f(63) \approx \frac{f(70) - f(60)}{70 - 60} (63 - 60) + f(60) = \frac{1.66691 - 1.67065}{10} \cdot 3 + 1.67065 = 1.669528$$

Using software, we calculate the actual value of f(63) to be 1.669402, a difference of around 0.00013. Close enough for our purposes.
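The same calculation can be written as a small Python function. This is a sketch: the function name `linear_approximation` and its parameter names are chosen for the example, while the numbers come from the 0.05 column of the table above.

```python
def linear_approximation(x, x_low, f_low, x_high, f_high):
    """Estimate f(x) on the straight line through (x_low, f_low) and (x_high, f_high)."""
    slope = (f_high - f_low) / (x_high - x_low)
    return f_low + slope * (x - x_low)


# Rows 60 and 70 of the 0.05 column bracket the wanted value at 63.
estimate = linear_approximation(63, 60, 1.67065, 70, 1.66691)
print(round(estimate, 6))  # 1.669528

# Difference from the software value quoted in the text.
print(round(estimate - 1.669402, 6))  # 0.000126
```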

Different Types of Data

Data are assignments of values onto observations of events and objects. They can be classified by their coding properties and the characteristics of their domains and their ranges.

Identifying data type

When a given data set is numerical in nature, it is necessary to carefully distinguish the actual nature of the variable being quantified. Statistical tests are generally specific for the kind of data being handled.

Data on a nominal (or categorical) scale

Identifying the true nature of numerals applied to attributes that are not "measures" is usually straightforward and apparent. Examples in everyday use include road, car, house, book and telephone numbers. A simple test would be to ask if re-assigning the numbers among the set would alter the nature of the collection. If the plates on a car are changed, for example, it still remains the same car in reality.

Data on an Ordinal Scale

An ordinal scale is a scale with ranks. Those ranks are meaningful only in that they are ordered; that is what makes the scale ordinal. The distance [rank n] minus [rank n-1] is not guaranteed to be equal to [rank n-1] minus [rank n-2], but [rank n] will be greater than [rank n-1] in the same way [rank n-1] is greater than [rank n-2] for all n where [rank n], [rank n-1], and [rank n-2] exist. Ranks of an ordinal scale may be represented by a system with numbers or names and an agreed order.

We can illustrate this with a common example: the Likert scale. Consider five possible responses to a statement, perhaps "Our president is a great man", with answers on this scale:

| Response: | Strongly Disagree | Disagree | Neither Agree nor Disagree | Agree | Strongly Agree |

|---|---|---|---|---|---|

| Code: | 1 | 2 | 3 | 4 | 5 |

Here the answers are a ranked scale reflected in the choice of numeric code. There is however no sense in which the distance between Strongly agree and Agree is the same as between Strongly disagree and Disagree.

Numerical ranked data should be distinguished from measurement data.

Measurement data

Numerical measurements exist in two forms, meristic and continuous, and may present themselves in three kinds of scale: interval, ratio and circular.

Meristic or discrete variables are generally counts and can take on only discrete values. Normally they are represented by natural numbers. The number of plants found in a botanist's quadrat would be an example. (Note that if the edge of the quadrat falls partially over one or more plants, the investigator may choose to include these as halves, but the data will still be meristic, as doubling the total will remove any fraction.)

Continuous variables are those whose measurement precision is limited only by the investigator and his equipment. The length of a leaf measured by a botanist with a ruler will be less precise than the same measurement taken by micrometer. (Notionally, at least, the leaf could be measured even more precisely using a microscope with a graticule.)

Interval Scale: Variables measured on an interval scale have values in which differences are uniform and meaningful but ratios are not. An oft-quoted example is the Celsius scale of temperature. A difference between 5° and 10° is equivalent to a difference between 10° and 15°, but the ratio between 15° and 5° does not imply that the former is three times as warm as the latter.

Ratio Scale: Variables on a ratio scale have a meaningful zero point. In keeping with the above example one might cite the Kelvin temperature scale. Because there is an absolute zero, it is true to say that 400 K is twice as warm as 200 K, though one should do so with tongue in cheek. A better day-to-day example would be to say that a 180 kg Sumo wrestler is three times as heavy as his 60 kg wife.

Circular Scale: When one measures annual dates, clock times and a few other forms of data, a circular scale is in use. It can happen that neither differences nor ratios of such variables are sensible derivatives, and special methods have to be employed for such data.
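As one illustration of why circular data need special handling, consider averaging two clock times on either side of midnight. The sketch below contrasts the ordinary arithmetic mean with a circular mean computed by treating each time as an angle on a 24-hour dial. The circular mean is one commonly used technique, named here as an assumption of the example rather than something the text prescribes, and the times are invented.

```python
import math


def circular_mean_hours(hours):
    """Mean of clock times (in hours, 0 to 24) treated as angles on a circle."""
    angles = [2 * math.pi * h / 24 for h in hours]
    mean_sin = sum(math.sin(a) for a in angles) / len(angles)
    mean_cos = sum(math.cos(a) for a in angles) / len(angles)
    # atan2 recovers the direction of the average point on the circle.
    mean_angle = math.atan2(mean_sin, mean_cos) % (2 * math.pi)
    return 24 * mean_angle / (2 * math.pi)


times = [23.0, 3.0]  # 11 pm and 3 am, invented for illustration

arithmetic = sum(times) / len(times)   # 13.0, i.e. 1 pm, which is misleading
circular = circular_mean_hours(times)  # about 1.0, i.e. 1 am

print(arithmetic, round(circular, 6))
```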

See also


Statistical data can be obtained from two sources: primary or secondary.

Primary Data

Primary data is original data collected first hand, from the original source, specifically for the purpose in mind.

The people who gather primary data may be an authorized organization, investigator, enumerator or they may be just someone with a clipboard. Those who gather primary data may have knowledge of the study and may be motivated to make the study a success. These people are acting as a witness so primary data is only considered as reliable as the people who gathered it.

Research where one gathers this kind of data is referred to as field research.

For example: your own questionnaire.

Secondary Data

Secondary data is data that was originally collected for another purpose. When we apply statistical methods to primary data that was gathered for a different purpose, we refer to it as secondary data. In other words, one purpose's primary data is another purpose's secondary data: secondary data is data that is being reused, usually in a different context.

Research where one gathers this kind of data is referred to as desk research.

For example: data from a book.

Why Classify Data This Way?

Knowing how the data was collected allows critics of a study to search for bias in how it was conducted. A good study will welcome such scrutiny. Each type has its own weaknesses and strengths. Primary Data is gathered by people who can focus directly on the purpose in mind. This helps ensure that questions are meaningful to the purpose but can introduce bias in those same questions. Secondary Data doesn't have the privilege of this focus but is only susceptible to bias introduced in the choice of what data to reuse. Stated another way, those who gather Secondary Data get to pick the questions. Those who gather Primary Data get to write the questions.

Qualitative data

Qualitative data is a categorical measurement expressed not in terms of numbers, but rather by means of a natural language description. In statistics, it is often used interchangeably with "categorical" data.

For example:

favorite color = "blue"

height = "tall"


Although we may have categories, the categories may have a structure to them. When there is not a natural ordering of the categories, we call these nominal categories. Examples might be gender, race, religion, or sport.

When the categories may be ordered, these are called ordinal variables. Categorical variables that judge size (small, medium, large, etc.) are ordinal variables. Attitudes (strongly disagree, disagree, neutral, agree, strongly agree) are also ordinal variables; however, we may not know which value is the best or worst for a given issue. Note that the distance between these categories is not something we can measure.

Quantitative data

Quantitative data is a numerical measurement expressed not by means of a natural language description, but rather in terms of numbers. However, not all numbers are continuous and measurable. For example, the social security number is a number, but not something that one can add or subtract.

For example:

molecule length = "450 nm"

height = "1.8 m"

Quantitative data always are associated with a scale measure.

Probably the most common scale type is the ratio scale. Observations of this type are on a scale that has a meaningful zero value and also has an equidistant measure (i.e., the difference between 10 and 20 is the same as the difference between 100 and 110). For example, a 10-year-old girl is twice as old as a 5-year-old girl. Since you can measure zero years, time is a ratio-scale variable. Money is another common ratio-scale quantitative measure. Observations that you count are usually ratio-scale (e.g., number of widgets).

Methods of Data Collection

The main portion of statistics is the display of summarized data. Data are initially collected from a given source, whether experiments, surveys, or observation, and are presented using one of four methods:

- Textual Method

- The reader acquires information through reading the gathered data.

- Tabular Method

- Provides a more precise, systematic and orderly presentation of data in rows or columns.

- Semi-tabular Method

- Uses both textual and tabular methods.

- Graphical Method

- The use of graphs is the most effective method of visually presenting statistical results or findings.

Experiments

In an experiment the experimenter applies 'treatments' to groups of subjects. For example, the experimenter may give one drug to group 1 and a different drug or a placebo to group 2, to determine the effectiveness of the drug. This is what differentiates an 'experiment' from an 'observational study'.

Scientists try to identify cause-and-effect relationships because this kind of knowledge is especially powerful, for example, drug A cures disease B. Various methods exist for detecting cause-and-effect relationships. An experiment is a method that most clearly shows cause-and-effect because it isolates and manipulates a single variable, in order to clearly show its effect. Experiments almost always have two distinct variables: First, an independent variable (IV) is manipulated by an experimenter to exist in at least two levels (usually "none" and "some"). Then the experimenter measures the second variable, the dependent variable (DV).

|

Example: Experimentation. Suppose the experimental hypothesis that concerns the scientist is that reading a wiki will enhance knowledge. Notice that the hypothesis is really an attempt to state a causal relationship like, "if you read a wiki, then you will have enhanced knowledge." The antecedent condition (reading a wiki) causes the consequent condition (enhanced knowledge). Antecedent conditions are always IVs and consequent conditions are always DVs in experiments. So the experimenter would produce two levels of wiki reading (none and some, for example) and record knowledge. If the subjects who got no wiki exposure had less knowledge than those who were exposed to wikis, it follows that the difference is caused by the IV. |

So, the reason scientists utilize experiments is that they are the only way to determine causal relationships between variables. Experiments tend to be artificial because they try to make both groups identical with the single exception of the levels of the independent variable.

Sample surveys involve the selection and study of a sample of items from a population. A sample is just a set of members chosen from a population, but not the whole population. A survey of a whole population is called a census.

A sample from a population may not give accurate results but it helps in decision making.

Examples

Examples of sample surveys:

- Phoning the fifth person on every page of the local phonebook and asking them how long they have lived in the area. (Systematic Sample)

- Dropping a quadrat in five different places on a field and counting the number of wild flowers inside the quadrat. (Cluster Sample)

- Selecting sub-populations in proportion to their incidence in the overall population. For instance, a researcher may have reason to select a sample consisting of 30% females and 70% males in a population with those same gender proportions. (Stratified Sample)

- Selecting several cities in a country, several neighbourhoods in those cities and several streets in those neighbourhoods to recruit participants for a survey. (Multi-stage sample)

The term random sample is used for a sample in which every item in the population is equally likely to be selected.
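The sampling schemes listed above can be sketched in a few lines of Python. This is only an illustration: the function names, the population of 100 numbered people, and the fixed seeds are assumptions made for the example, not part of any standard procedure.

```python
import random

def simple_random_sample(population, k, seed=None):
    """Draw k items without replacement; every item is equally likely to be chosen."""
    rng = random.Random(seed)
    return rng.sample(population, k)

def systematic_sample(population, step, start=0):
    """Take every `step`-th item, beginning at index `start`."""
    return population[start::step]

def stratified_sample(strata, fraction, seed=None):
    """Sample the same fraction from each stratum (dict: stratum name -> members)."""
    rng = random.Random(seed)
    sample = {}
    for name, members in strata.items():
        k = round(len(members) * fraction)
        sample[name] = rng.sample(members, k)
    return sample

# Hypothetical population: 100 numbered people, 30% female and 70% male.
people = list(range(100))
strata = {"female": people[:30], "male": people[30:]}

random_pick = simple_random_sample(people, 10, seed=0)
every_20th = systematic_sample(people, 20, start=4)
by_gender = stratified_sample(strata, 0.1, seed=1)
```

Here `by_gender` contains 3 females and 7 males, mirroring the 30%/70% split of the population.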

Bias

While sampling is a more cost-effective method of determining a result, small samples or samples that depend on a certain selection method can introduce bias into the results.

The following are common sources of bias:

- Sampling bias or statistical bias, where some individuals are more likely to be selected than others (such as if you give equal chance of cities being selected rather than weighting them by size)

- Systemic bias, where external influences try to affect the outcome (e.g. funding organizations wanting to have a specific result)

The most primitive method of understanding the laws of nature utilizes observational studies. Basically, a researcher goes out into the world and looks for variables that are associated with one another. Notice that, unlike experiments, in an observational study the independent variables are not manipulated by the experimenter. The independent variable could be something like "smoking". It would be unethical and probably impossible to do an experiment where one randomly selected group is assigned to smoke and another group is assigned to the non-smoking group. Therefore, in order to determine the health effects of smoking on humans, an observational study is more appropriate than an experiment. The health of smokers and non-smokers would be compared without the experimenter assigning treatment.

Some of the foundations of modern scientific thought are based on observational research. Charles Darwin, for example, based his explanation of evolution entirely on observations he made. Case studies, where individuals are observed and questioned to determine possible causes of problems, are a form of observational research that continues to be popular today. In fact, every time you see a physician he or she is performing observational science.

There is a problem in observational science though — it cannot ever identify causal relationships because even though two variables are related both might be caused by a third, unseen, variable. Since the underlying laws of nature are assumed to be causal laws, observational findings are generally regarded as less compelling than experimental findings.

The key way to identify experimental studies is that they involve an intervention such as the administration of a drug to one group of patients and a placebo to another group. Observational studies only collect data and make comparisons.

Medicine is an intensively studied discipline, and not all phenomena can be studied by experimentation due to obvious ethical or logistical restrictions.

- Case series: These are purely observational, consisting of reports of a series of similar medical cases. For example, a series of patients might be reported to suffer from bone abnormalities as well as immunodeficiencies. This association may not be significant, occurring purely by chance. On the other hand, the association may point to a mutation in a common pathway affecting both the skeletal system and the immune system.

- Case-Control: This involves an observation of a disease state, compared to normal healthy controls. For example, patients with lung cancer could be compared with their otherwise healthy neighbors. Using neighbors limits bias introduced by demographic variation. The cancer patients and their neighbors (the control) might be asked about their exposure history (did they work in an industrial setting), or other risk factors such as smoking. Another example of a case-control study is the testing of a diagnostic procedure against the gold standard. The gold standard represents the control, while the new diagnostic procedure is the "case." This might seem to qualify as an "intervention" and thus an experiment.

- Cross-sectional: Involves many variables collected all at the same time. Used in epidemiology to estimate prevalence, or conduct other surveys.

- Cohort: A group of subjects followed over time, prospectively. The Framingham study is a classic example. By observing exposure and then tracking outcomes, cause and effect can be better isolated. However, this type of study cannot conclusively isolate a cause-and-effect relationship.

- Historic Cohort: This is the same as a cohort except that researchers use an historic medical record to track patients and outcomes.

Data Analysis

Data analysis is one of the more important stages in our research. Without performing exploratory analyses of our data, we set ourselves up for mistakes and loss of time.

Generally speaking, our goal here is to be able to "visualize" the data and get a sense of their values. We plot histograms and compute summary statistics to observe the trends and the distribution of our data.

Data Cleaning

'Cleaning' refers to the process of removing invalid data points from a dataset.

Many statistical analyses try to find a pattern in a data series, based on a hypothesis or assumption about the nature of the data. 'Cleaning' is the process of removing those data points which are either (a) obviously disconnected from the effect or assumption which we are trying to isolate, due to some other factor which applies only to those particular data points, or (b) obviously erroneous, i.e. some external error is reflected in that particular data point, due to a mistake during data collection, reporting, etc.

In the process we ignore these particular data points, and conduct our analysis on the remaining data.

'Cleaning' frequently involves human judgement to decide which points are valid and which are not, and there is a chance that valid data points, caused by some effect not sufficiently accounted for in the hypothesis/assumption behind the analytical method applied, will be removed.

The points to be cleaned are generally extreme outliers. 'Outliers' are those points which stand out for not following a pattern which is generally visible in the data. One way of detecting outliers is to plot the data points (if possible) and visually inspect the resultant plot for points which lie far outside the general distribution. Another way is to run the analysis on the entire dataset, eliminate those points which do not meet mathematical 'control limits' for variability from a trend, and then repeat the analysis on the remaining data.
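The second approach, applying control limits and repeating the analysis, might look like the sketch below. The text does not say how the limits are set, so the rule used here (mean plus or minus `k` sample standard deviations, with `k = 2`) and the readings themselves are assumptions chosen for illustration.

```python
from statistics import mean, stdev

def clean_by_control_limits(values, k=2.0, max_rounds=10):
    """Repeatedly drop points outside mean +/- k standard deviations.

    Returns (kept, removed). The limits are recomputed after each round,
    because an extreme point inflates both the mean and the deviation.
    """
    kept = list(values)
    removed = []
    for _ in range(max_rounds):
        if len(kept) < 3:
            break  # too few points left to judge variability
        centre, spread = mean(kept), stdev(kept)
        lower, upper = centre - k * spread, centre + k * spread
        outliers = [v for v in kept if v < lower or v > upper]
        if not outliers:
            break
        removed.extend(outliers)
        kept = [v for v in kept if lower <= v <= upper]
    return kept, removed

# Hypothetical readings with one suspicious value.
readings = [10, 11, 9, 10, 12, 11, 10, 9, 11, 10, 50]
kept, removed = clean_by_control_limits(readings)
```

With these readings only the value 50 is removed. A rule like this does not replace judgement: whether a flagged point is truly invalid still has to be decided by the analyst.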

Cleaning may also be done judgementally, for example in a sales forecast by ignoring historical data from an area/unit which has a tendency to misreport sales figures. To take another example, in a double blind medical test a doctor may disregard the results of a volunteer whom the doctor happens to know in a non-professional context.

'Cleaning' may also sometimes be used to refer to various other judgemental/mathematical methods of validating data and removing suspect data.

The importance of having clean and reliable data in any statistical analysis cannot be stressed enough. Often, in real-world applications, the analyst may get mesmerised by the complexity or beauty of the method being applied, while the data itself may be unreliable and lead to results which suggest courses of action without a sound basis. A good statistician/researcher (personal opinion) spends 90% of his/her time on collecting and cleaning data and developing hypotheses which cover as many external explainable factors as possible, and only 10% on the actual mathematical manipulation of the data and deriving results.

Summary Statistics


The simplest example of statistics "in practice" is the generation of summary statistics. Let us consider the example where we are interested in the weight of eighth graders in a school. (Maybe we're looking at the growing epidemic of child obesity in America!) Our school has 200 eighth graders, so we gather all their weights. What we have are 200 positive real numbers.

If an administrator asked you what the weight was of this eighth grade class, you wouldn't grab your list and start reading off all the individual weights; it's just too much information. That same administrator wouldn't learn anything except that she shouldn't ask you any questions in the future! What you want to do is to distill the information — these 200 numbers — into something concise.

What might we express about these 200 numbers that would be of interest? The most obvious thing to do is to calculate the average or mean value so we know how much the "typical eighth grader" in the school weighs. It would also be useful to express how much this number varies; after all, eighth graders come in a wide variety of shapes and sizes! In reality, we can probably reduce this set of 200 weights into at most four or five numbers that give us a firm comprehension of the data set.

Averages

An average is simply a number that is representative of data. More particularly, it is a measure of central tendency. There are several types of average. Averages are useful for comparing data, especially when sets of different size are being compared. An average acts as a representative figure of the whole set of data.

Perhaps the simplest and most commonly used average is the arithmetic mean, or more simply the mean, which is explained in the next section.

Other common types of average are the median, the mode, the geometric mean, and the harmonic mean, each of which may be the most appropriate one to use under different circumstances.


Mean, Median and Mode

Mean

The mean, or more precisely the arithmetic mean, is simply the arithmetic average of a group of numbers (or data set) and is denoted by a bar over the variable's symbol. So the mean of the variable $x$ is $\bar{x}$, pronounced "x-bar". It is calculated by adding up all of the values in a data set and dividing by the number of values in that data set:

$$\bar{x} = \frac{1}{n}\sum_{i=1}^{n} x_i$$

For example, take the following set of data: {1,2,3,4,5}. The mean of this data would be:

$$\bar{x} = \frac{1+2+3+4+5}{5} = \frac{15}{5} = 3$$

Here is a more complicated data set: {10,14,86,2,68,99,1}. The mean would be calculated like this:

$$\bar{x} = \frac{10+14+86+2+68+99+1}{7} = \frac{280}{7} = 40$$
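The same calculation is easy to check in code. The following is a minimal sketch; the function name `arithmetic_mean` is chosen here for illustration.

```python
def arithmetic_mean(values):
    """Add up all of the values and divide by how many there are."""
    return sum(values) / len(values)

simple_mean = arithmetic_mean([1, 2, 3, 4, 5])
complicated_mean = arithmetic_mean([10, 14, 86, 2, 68, 99, 1])
```

The two results are 3.0 and 40.0 respectively.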

Median

The median is the "middle value" in a set. That is, the median is the number in the center of a data set that has been ordered sequentially.

For example, let's look at the data in our second data set from above: {10,14,86,2,68,99,1}. What is its median?

- First, we sort our data set sequentially: {1,2,10,14,68,86,99}

- Next, we determine the total number of points in our data set (in this case, 7.)

- Finally, we determine the central position of our data set (in this case, the 4th position), and the number in the central position is our median - {1,2,10,14,68,86,99}, making 14 our median.

| Helpful Hint! | |

|---|---|

| An easy way to determine the central position or positions for any ordered set is to take the total number of points, add 1, and then divide by 2. If the number you get is a whole number, then that is the central position. If the number you get is a fraction, take the two whole numbers on either side. |

Because our data set had an odd number of points, determining the central position was easy - it will have the same number of points before it as after it. But what if our data set has an even number of points?

Let's take the same data set, but add a new number to it: {1,2,10,14,68,86,99,100}. What is the median of this set?

When you have an even number of points, you must determine the two central positions of the data set. (See side box for instructions.) So for a set of 8 numbers, we get (8 + 1) / 2 = 9 / 2 = 4 1/2, which has 4 and 5 on either side.

Looking at our dataset, we see that the 4th and 5th numbers are 14 and 68. From there, we return to our trusty friend the mean to determine the median: (14 + 68) / 2 = 82 / 2 = 41. As another example, find the median of {2, 4, 6, 8}. The set has an even number of points, so the median is the mean of the two central values: (4 + 6) / 2 = 10 / 2 = 5.
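The procedure for odd and even numbers of points can be written as a short function. This is a sketch under the conventions described above (sort, find the central position or positions, average the two central values when there are two); the function name is an illustrative choice.

```python
def median(values):
    """Middle value of the sorted data; mean of the two middle values if n is even."""
    ordered = sorted(values)
    n = len(ordered)
    middle = n // 2
    if n % 2 == 1:
        return ordered[middle]  # single central position
    return (ordered[middle - 1] + ordered[middle]) / 2  # two central positions

odd_case = median([10, 14, 86, 2, 68, 99, 1])
even_case = median([10, 14, 86, 2, 68, 99, 1, 100])
small_case = median([2, 4, 6, 8])
```

These give 14, 41.0 and 5.0.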

Mode

The mode is the most common or "most frequent" value in a data set. Example: the mode of the data set {1, 2, 5, 5, 6, 3} is 5, since it appears twice, making it the most common value of the data set. Data sets having one mode are said to be unimodal, those with two are said to be bimodal, and those with more than two are said to be multimodal. An example of a unimodal data set is {1, 2, 3, 4, 4, 4, 5, 6, 7, 8, 8, 9}. The mode for this data set is 4. An example of a bimodal data set is {1, 2, 2, 3, 3}, because both 2 and 3 are modes. Please note: If all points in a data set occur with equal frequency, it is equally accurate to describe the data set as having many modes or no mode.
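Finding every mode amounts to counting occurrences and keeping the values with the highest count. The sketch below uses `collections.Counter` from the Python standard library; the function name `modes` is an assumption for this example.

```python
from collections import Counter

def modes(values):
    """Return all values that occur with the highest frequency, in sorted order."""
    counts = Counter(values)
    highest = max(counts.values())
    return sorted(v for v, c in counts.items() if c == highest)

first_example = modes([1, 2, 5, 5, 6, 3])
unimodal = modes([1, 2, 3, 4, 4, 4, 5, 6, 7, 8, 8, 9])
bimodal = modes([1, 2, 2, 3, 3])
```

Note that when every value occurs equally often, this function returns all of them, which corresponds to the "many modes" reading of that special case.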

Midrange

The midrange is the arithmetic mean of the minimum and the maximum values in a data set.

Relationship of the Mean, Median, and Mode

The relationship of the mean, median, and mode to each other can provide some information about the relative shape of the data distribution. If the mean, median, and mode are approximately equal to each other, the distribution can be assumed to be approximately symmetrical. If the mean > median > mode, the distribution will be skewed to the right. If the mean < median < mode, the distribution will be skewed to the left.

Questions

1. There is an old joke that states: "Using median size as a reference it's perfectly possible to fit four ping-pong balls and two blue whales in a rowboat." Explain why this statement is true.

Geometric Mean

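The Geometric Mean is calculated by taking the nth root of the product of a set of data:

$$\bar{x}_{\text{geom}} = \sqrt[n]{\prod_{i=1}^{n} x_i}$$

For example, if the set of data was:

1,2,3,4,5

The geometric mean would be calculated:

$$\sqrt[5]{1 \times 2 \times 3 \times 4 \times 5} = \sqrt[5]{120} \approx 2.61$$

Of course, with large n this can be difficult to calculate. Taking advantage of two properties of the logarithm:

$$\ln(a \cdot b) = \ln(a) + \ln(b)$$

$$\ln(a^{n}) = n \cdot \ln(a)$$

We find that by taking the logarithmic transformation of the geometric mean, we get:

$$\ln\left(\sqrt[n]{x_1 \times x_2 \times \cdots \times x_n}\right) = \frac{1}{n}\sum_{i=1}^{n} \ln(x_i)$$

Which leads us to the equation for the geometric mean:

$$\bar{x}_{\text{geom}} = \exp\left(\frac{1}{n}\sum_{i=1}^{n} \ln(x_i)\right)$$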
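Both routes to the geometric mean, the direct nth root of the product and the logarithmic form, can be compared in a short sketch. The function names are illustrative, and the data are assumed to be strictly positive so that the logarithm is defined.

```python
import math

def geometric_mean_direct(values):
    """nth root of the product of the values."""
    return math.prod(values) ** (1 / len(values))

def geometric_mean_log(values):
    """Exponential of the mean of the logarithms (values must be positive)."""
    return math.exp(sum(math.log(v) for v in values) / len(values))

data = [1, 2, 3, 4, 5]
direct = geometric_mean_direct(data)
via_logs = geometric_mean_log(data)
```

Both give roughly 2.61. The logarithmic form is the safer choice for long lists, since the running product of many large values can exceed what a floating-point number can hold.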

When to use the geometric mean

The arithmetic mean is relevant any time several quantities add together to produce a total. The arithmetic mean answers the question, "if all the quantities had the same value, what would that value have to be in order to achieve the same total?"

In the same way, the geometric mean is relevant any time several quantities multiply together to produce a product. The geometric mean answers the question, "if all the quantities had the same value, what would that value have to be in order to achieve the same product?"

For example, suppose you have an investment which returns 10% the first year, 50% the second year, and 30% the third year. What is its average rate of return? It is not the arithmetic mean, because what these numbers mean is that in the first year your investment was multiplied (not added to) by 1.10, in the second year it was multiplied by 1.50, and in the third year it was multiplied by 1.30. The relevant quantity is the geometric mean of these three numbers, which is approximately 1.29, an average return of about 29% per year.
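The investment example can be worked through numerically. In this sketch the variable names are illustrative; the growth factors are the ones given in the text.

```python
import math

growth_factors = [1.10, 1.50, 1.30]   # yearly multipliers from the example

total_growth = math.prod(growth_factors)              # value after three years, per unit invested
average_factor = total_growth ** (1 / len(growth_factors))
arithmetic_factor = sum(growth_factors) / len(growth_factors)

# Applying the geometric-mean factor three times reproduces the total growth;
# applying the arithmetic-mean factor three times overstates it.
reproduced = average_factor ** 3
overstated = arithmetic_factor ** 3
```

The geometric mean factor is about 1.29, slightly below the arithmetic mean of 1.30, and only the former reproduces the total growth of 2.145.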

It is known that, for two non-negative quantities A and B, the geometric mean is always less than or equal to the arithmetic mean (equality holding only when A=B). The proof of this is quite short and follows from the fact that $(\sqrt{A} - \sqrt{B})^2$ is always a non-negative number: expanding gives $A - 2\sqrt{AB} + B \ge 0$, that is, $\sqrt{AB} \le \frac{A+B}{2}$. This inequality can be surprisingly powerful though and comes up from time to time in the proofs of theorems in calculus.

Harmonic Mean

The arithmetic mean cannot be used when we want to average quantities such as speed.

Consider the example below:

Example 1: The distance from my house to town is 40 km. I drove to town at a speed of 40 km per hour and returned home at a speed of 80 km per hour. What was my average speed for the whole trip?

Solution: If we just took the arithmetic mean of the two speeds I drove at, we would get 60 km per hour. This isn't the correct average speed, however: it ignores the fact that I drove at 40 km per hour for twice as long as I drove at 80 km per hour. To find the correct average speed, we must instead calculate the harmonic mean.

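For two quantities A and B, the harmonic mean is given by:

$$\frac{2}{\frac{1}{A} + \frac{1}{B}}$$

This can be simplified by adding in the denominator and multiplying by the reciprocal:

$$\frac{2}{\frac{1}{A} + \frac{1}{B}} = \frac{2}{\frac{B + A}{AB}} = \frac{2AB}{A + B}$$

For N quantities: A, B, C......

$$\text{Harmonic mean} = \frac{N}{\frac{1}{A} + \frac{1}{B} + \frac{1}{C} + \cdots}$$

Let us try out the formula above on our example:

$$\text{Harmonic mean} = \frac{2AB}{A + B}$$

Our values are A = 40, B = 80. Therefore,

$$\text{Harmonic mean} = \frac{2 \times 40 \times 80}{40 + 80} = \frac{6400}{120} \approx 53.333$$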

Is this result correct? We can verify it. In the example above, the distance between my house and town is 40 km. So the trip to town at a speed of 40 km per hour will take 1 hour. The trip home at a speed of 80 km per hour will take 0.5 hours. The total time taken for the round trip (80 km) will be 1.5 hours. The average speed will then be 80 / 1.5 = 53.33 km per hour.
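The same verification can be done in code by computing the harmonic mean of the two speeds and comparing it with total distance divided by total time. The function and variable names below are illustrative.

```python
def harmonic_mean(values):
    """N divided by the sum of the reciprocals of the values."""
    return len(values) / sum(1 / v for v in values)

distance_km = 40           # one-way distance from the example
speeds_kmh = [40, 80]      # outbound and return speeds

from_formula = harmonic_mean(speeds_kmh)

total_time_h = sum(distance_km / s for s in speeds_kmh)     # 1 h + 0.5 h
from_definition = (len(speeds_kmh) * distance_km) / total_time_h
```

Both values come to about 53.33 km per hour. The agreement relies on each leg covering the same distance; if the legs had different lengths, a distance-weighted version would be needed.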

The harmonic mean also has physical significance.

Relationships among Arithmetic, Geometric and Harmonic Mean

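The means mentioned above are realizations of the generalized mean

$$\bar{x}(m) = \left(\frac{1}{n}\sum_{i=1}^{n} x_i^{m}\right)^{1/m}$$

and, for positive data, are ordered this way (the values at $m = 0$ and $m = \pm\infty$ are understood as limits):

$$\text{minimum} = \bar{x}(-\infty) \le \text{harmonic mean} = \bar{x}(-1) \le \text{geometric mean} = \bar{x}(0) \le \text{arithmetic mean} = \bar{x}(1) \le \text{maximum} = \bar{x}(\infty)$$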
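The generalized mean and the resulting ordering can be checked numerically. This sketch assumes strictly positive data and treats the exponent 0 as the geometric mean (its limiting value); the function name `power_mean` is an illustrative choice.

```python
import math

def power_mean(values, m):
    """Generalized mean with exponent m for positive data; m = 0 gives the geometric mean."""
    n = len(values)
    if m == 0:
        return math.exp(sum(math.log(v) for v in values) / n)
    return (sum(v ** m for v in values) / n) ** (1 / m)

data = [1, 2, 3, 4, 5]
harmonic = power_mean(data, -1)
geometric = power_mean(data, 0)
arithmetic = power_mean(data, 1)
```

For this data set the harmonic, geometric and arithmetic means are roughly 2.19, 2.61 and 3, all lying between the minimum 1 and the maximum 5.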

Measures of dispersion

Range of Data

The range of a sample (set of data) is simply the maximum possible difference in the data, i.e. the difference between the maximum and the minimum values. A more exact term for it is "range width" and is usually denoted by the letter R or w. The two individual values (the max. and min.) are called the "range limits". Often these terms are confused and students should be careful to use the correct terminology.

For example, in a sample with values 2 3 5 7 8 11 12, the range is 10 (12 - 2 = 10) and the range limits are 2 and 12.

The range is the simplest and most easily understood measure of the dispersion (spread) of a set of data, and though it is very widely used in everyday life, it is too rough for serious statistical work. It is not a "robust" measure, because clearly the chance of finding the maximum and minimum values in a population depends greatly on the size of the sample we choose to take from it and so its value is likely to vary widely from one sample to another. Furthermore, it is not a satisfactory descriptor of the data because it depends on only two items in the sample and overlooks all the rest. A far better measure of dispersion is the standard deviation (s), which takes into account all the data. It is not only more robust and "efficient" than the range, but is also amenable to far greater statistical manipulation. Nevertheless the range is still much used in simple descriptions of data and also in quality control charts.

The mean range of a set of data is however a quite efficient measure (statistic) and can be used as an easy way to calculate s. What we do in such cases is to subdivide the data into groups of a few members, calculate their average range, and divide it by a factor (from tables), which depends on n. In chemical laboratories for example, it is very common to analyse samples in duplicate, and so they have a large source of ready data to calculate s.

(The factor k to use is given under standard deviation.)

For example: If we have a sample of size 40, we can divide it into 10 sub-samples of n=4 each. If we then find their mean range to be, say, 3.1, the standard deviation of the parent sample of 40 items is approximately 3.1/2.059 = 1.506.
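The mean-range shortcut can be sketched as follows. The factor 2.059 for sub-samples of 4 is the one quoted above; the other entries in the table are the commonly tabulated values for sub-samples of 2, 3 and 5 and are included here as an assumption, as are the function name and the small data set.

```python
# Divisors for converting a mean range into a standard deviation estimate,
# keyed by sub-sample size (values as commonly tabulated; 2.059 is from the text).
RANGE_FACTOR = {2: 1.128, 3: 1.693, 4: 2.059, 5: 2.326}

def sd_from_mean_range(values, group_size):
    """Estimate s from the average range of consecutive sub-samples."""
    groups = [values[i:i + group_size]
              for i in range(0, len(values) - group_size + 1, group_size)]
    mean_range = sum(max(g) - min(g) for g in groups) / len(groups)
    return mean_range / RANGE_FACTOR[group_size]

# Hypothetical duplicate analyses: two pairs with ranges 2 and 4.
duplicates = [1, 3, 2, 6]
estimate = sd_from_mean_range(duplicates, 2)

# The worked example above: mean range 3.1 with sub-samples of 4.
worked_example = 3.1 / RANGE_FACTOR[4]
```

The worked example evaluates to about 1.506, matching the figure in the text.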

With simple electronic calculators now available, which can calculate s directly at the touch of a key, there is no longer much need for such expedients, though students of statistics should be familiar with them.


Quartiles

The quartiles of a data set are formed by the two boundaries on either side of the median, which, together with the median, divide the set into four equal sections. The lowest 25% of the data is found below the first quartile value, also called the lower quartile (Q1). The median, or second quartile, divides the set into two equal sections. The lowest 75% of the data set is found below the third quartile, also called the upper quartile (Q3). These three numbers are measures of the dispersion of the data, while the mean, median and mode are measures of central tendency.

Examples

Given the set {1,3,5,8,9,12,24,25,28,30,41,50} we would find the first and third quartiles as follows:

There are 12 elements in the set, so 12/4 gives us three elements in each quarter of the set.

So the first or lowest quartile is: 5, the second quartile is the median 12, and the third or upper quartile is 28.

However, some people, when faced with a set with an even number of elements (values), still want the true median (or middle value), with an equal number of data values on each side of the median (rather than 12, which has 5 values less than it and 6 values greater than it). This value is then the average of 12 and 24, resulting in 18 as the true median (which is closer to the mean of 19 2/3). The same process is then applied to the lower and upper quartiles, giving 6.5, 18, and 29. This is only an issue if the data contains an even number of elements with an even number of equally divided sections, or an odd number of elements with an odd number of equally divided sections.
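The second convention, taking the median of each half of the sorted data, can be sketched as below. This is one of several quartile conventions in use, and the function names are illustrative; the sketch assumes at least two data values.

```python
def median(values):
    """Middle value of the sorted data; mean of the two middle values if n is even."""
    ordered = sorted(values)
    n = len(ordered)
    if n % 2 == 1:
        return ordered[n // 2]
    return (ordered[n // 2 - 1] + ordered[n // 2]) / 2

def quartiles(values):
    """Q1, median and Q3, where Q1 and Q3 are the medians of the lower and upper halves."""
    ordered = sorted(values)
    n = len(ordered)
    lower_half = ordered[: n // 2]
    upper_half = ordered[(n + 1) // 2 :]   # skips the middle value when n is odd
    return median(lower_half), median(ordered), median(upper_half)

data = [1, 3, 5, 8, 9, 12, 24, 25, 28, 30, 41, 50]
q1, q2, q3 = quartiles(data)
```

For the example set this gives 6.5, 18.0 and 29.0.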

Inter-Quartile Range

The inter-quartile range (IQR) is a statistic which provides information about the spread of a data set. It is calculated by subtracting the first quartile from the third quartile, giving the range of the middle half of the data set, trimming off the lowest and highest quarters. Since the IQR is largely unaffected by outliers in the data, it is a more robust measure of dispersion than the range.

IQR = Q3 - Q1
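The robustness of the IQR compared with the range can be illustrated with a small experiment: replace the largest value with an extreme outlier and see which statistic moves. This sketch uses `statistics.quantiles` from the Python standard library, whose default interpolation convention gives slightly different quartile values than counting elements by hand; the data and function names are assumptions for the example.

```python
from statistics import quantiles

def iqr(values):
    """Q3 - Q1, using the default convention of statistics.quantiles."""
    q1, _, q3 = quantiles(values, n=4)
    return q3 - q1

def data_range(values):
    return max(values) - min(values)

data = [1, 3, 5, 8, 9, 12, 24, 25, 28, 30, 41, 50]
with_outlier = data[:-1] + [5000]   # replace the largest value with an extreme one

iqr_before, iqr_after = iqr(data), iqr(with_outlier)
range_before, range_after = data_range(data), data_range(with_outlier)
```

The range jumps from 49 to 4999, while the IQR is the same for both data sets.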

Another useful set of quantiles is the quintiles, which subdivide the data into five equal sections. The advantage of quintiles is that there is a central one with boundaries on either side of the median which can serve as an average group. In a Normal distribution the boundaries of the quintiles lie at ±0.253*s and ±0.842*s on either side of the mean (or median), where s is the standard deviation. Note that in a Normal distribution the mean, median and mode coincide.

Other frequently used quantiles are the deciles (10 equal sections) and the percentiles (100 equal sections).

Variance and Standard Deviation


Measure of Scale

When describing data it is helpful (and in some cases necessary) to determine the spread of a distribution. One way of measuring this spread is by calculating the variance or the standard deviation of the data.

In describing a complete population, the data represents all the elements of the population. As a measure of the "spread" in the population one wants to know a measure of the possible distances between the data and the population mean. There are several options to do so. One is to measure the average absolute value of the deviations. Another, called the variance, measures the average square of these deviations.

A clear distinction should be made between dealing with the population or with a sample from it. When dealing with the complete population the (population) variance is a constant, a parameter which helps to describe the population. When dealing with a sample from the population the (sample) variance is actually a random variable, whose value differs from sample to sample. Its value is only of interest as an estimate for the population variance.

Population variance and standard deviation

Let the population consist of the N elements $x_1, \ldots, x_N$. The (population) mean is:

$$\mu = \frac{1}{N}\sum_{i=1}^{N} x_i$$

The (population) variance $\sigma^2$ is the average of the squared deviations from the mean, $(x_i - \mu)^2$, the square of each value's distance from the distribution's mean:

$$\sigma^2 = \frac{1}{N}\sum_{i=1}^{N} (x_i - \mu)^2$$

Because of the squaring, the variance is not directly comparable with the mean and the data themselves. The square root of the variance is called the standard deviation $\sigma$. Note that $\sigma$ is the root mean square of the differences between the data points and the average.

Sample variance and standard deviation

Let the sample consist of the n elements $x_1, \ldots, x_n$, taken from the population. The (sample) mean is:

$$\bar{x} = \frac{1}{n}\sum_{i=1}^{n} x_i$$

The sample mean serves as an estimate for the population mean $\mu$.

The (sample) variance $s^2$ is a kind of average of the squared deviations from the (sample) mean:

$$s^2 = \frac{1}{n-1}\sum_{i=1}^{n} (x_i - \bar{x})^2$$

Also for the sample we take the square root to obtain the (sample) standard deviation $s$.

A common question at this point is "why do we square the numerator?" One answer is: to get rid of the negative signs. Numbers are going to fall above and below the mean and, since the variance is looking for distance, it would be counterproductive if those distances cancelled each other out.

Example

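When rolling a fair die, the population consists of the 6 possible outcomes 1 to 6. A sample may consist instead of the outcomes of 1000 rolls of the die.

The population mean is:

$$\mu = \frac{1}{6}(1+2+3+4+5+6) = 3.5$$

and the population variance:

$$\sigma^2 = \frac{1}{6}\sum_{i=1}^{6} (i - 3.5)^2 = \frac{1}{6}(6.25+2.25+0.25+0.25+2.25+6.25) = \frac{35}{12} \approx 2.917$$

The population standard deviation is:

$$\sigma = \sqrt{\frac{35}{12}} \approx 1.708$$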

Notice how this standard deviation is somewhere in between the possible deviations.

So if we were working with one six-sided die, X = {1, 2, 3, 4, 5, 6}, then $\sigma^2 \approx 2.917$. We will talk more about why this differs from the sample variance later on, but for the moment assume that you should use the equation for the sample variance unless you see something that would indicate otherwise.
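The die example can be reproduced with Python's `statistics` module, which provides separate functions for the population formulas (`pvariance`, `pstdev`) and the sample formula (`variance`). The simulated rolls, the seed and the variable names are assumptions made for this sketch.

```python
import random
from statistics import pstdev, pvariance, variance

faces = [1, 2, 3, 4, 5, 6]

# Treating the six faces as the whole population: divide by N.
population_variance = pvariance(faces)
population_sd = pstdev(faces)

# Applying the sample formula to the same six numbers: divide by n - 1.
sample_formula_on_faces = variance(faces)

# A sample of 1000 simulated rolls; its sample variance estimates the population variance.
rng = random.Random(42)   # fixed seed, an arbitrary choice for repeatability
rolls = [rng.choice(faces) for _ in range(1000)]
estimate = variance(rolls)
```

The population variance is 35/12 (about 2.917), while the sample formula applied to the six faces gives 3.5, which is the difference the paragraph above alludes to. The estimate from the simulated rolls should land close to 2.917 but will vary with the seed.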

Note that none of the above formulae are ideal when calculating the estimate, and they all introduce rounding errors. Specialized statistical software packages use more complicated algorithms that take a second pass of the data in order to correct for these errors. Therefore, if it matters that your estimate of standard deviation is accurate, specialized software should be used. If you are using non-specialized software, such as some popular spreadsheet packages, you should find out how the software does the calculations and not just assume that a sophisticated algorithm has been implemented.

For Normal Distributions

The empirical rule states that approximately 68 percent of the data in a normally distributed dataset is contained within one standard deviation of the mean, approximately 95 percent of the data is contained within 2 standard deviations, and approximately 99.7 percent of the data falls within 3 standard deviations.

As an example, the verbal or math portion of the SAT has a mean of 500 and a standard deviation of 100. This means that 68% of test-takers scored between 400 and 600, 95% of test takers scored between 300 and 700, and 99.7% of test-takers scored between 200 and 800 assuming a completely normal distribution (which isn't quite the case, but it makes a good approximation).
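The percentages in the empirical rule can be recovered from the normal distribution itself. This sketch uses `statistics.NormalDist` from the Python standard library with the mean of 500 and standard deviation of 100 from the example; the helper name `share_within` is an illustrative choice.

```python
from statistics import NormalDist

scores = NormalDist(mu=500, sigma=100)   # idealized score distribution from the example

def share_within(k):
    """Fraction of a normal population lying within k standard deviations of the mean."""
    return scores.cdf(500 + k * 100) - scores.cdf(500 - k * 100)

within_one = share_within(1)     # between 400 and 600
within_two = share_within(2)     # between 300 and 700
within_three = share_within(3)   # between 200 and 800
```

The three fractions are about 0.6827, 0.9545 and 0.9973, which round to the 68, 95 and 99.7 percent of the rule.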

Robust Estimators

For a normal distribution the relationship between the standard deviation and the interquartile range is roughly: SD = IQR/1.35.

For data that are non-normal, the standard deviation can be a terrible estimator of scale. For example, in the presence of a single outlier, the standard deviation can grossly overestimate the variability of the data. The result is that confidence intervals are too wide and hypothesis tests lack power. In some (or most) fields, it is uncommon for data to be normally distributed and outliers are common.

One robust estimator of scale is the "average absolute deviation", or aad. As the name implies, the mean of the absolute deviations about some estimate of location is used. This method of estimation of scale has the advantage that the contribution of outliers is not squared, as it is in the standard deviation, and therefore outliers contribute less to the estimate. This method has the disadvantage that a single large outlier can completely overwhelm the estimate of scale and give a misleading description of the spread of the data.

Another robust estimator of scale is the "median absolute deviation", or mad. As the name implies, the estimate is calculated as the median of the absolute deviation from an estimate of location. Often, the median of the data is used as the estimate of location, but it is not necessary that this be so. Note that if the data are non-normal, the mean is unlikely to be a good estimate of location.

It is necessary to scale both of these estimators in order for them to be comparable with the standard deviation when the data are normally distributed. It is typical for the terms aad and mad to be used to refer to the scaled version. The unscaled versions are rarely used.
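The two robust estimators and their scaling can be sketched as follows. The scale factors used here, the square root of pi/2 (about 1.2533) for the average absolute deviation and 1.4826 for the median absolute deviation, are the usual constants that make each estimator agree with the standard deviation for normally distributed data. Using the median as the estimate of location in both cases, and the data set itself, are assumptions of this example.

```python
import math
from statistics import mean, median, stdev

def scaled_aad(values):
    """Average absolute deviation about the median, scaled for normal data."""
    centre = median(values)
    return math.sqrt(math.pi / 2) * mean([abs(v - centre) for v in values])

def scaled_mad(values):
    """Median absolute deviation about the median, scaled for normal data."""
    centre = median(values)
    return 1.4826 * median([abs(v - centre) for v in values])

# Hypothetical measurements with a single large outlier.
data = [10, 11, 9, 10, 12, 11, 10, 9, 11, 10, 50]

sd = stdev(data)
aad = scaled_aad(data)
mad = scaled_mad(data)
```

For this data the standard deviation is about 12, the scaled aad about 5.4 and the scaled mad about 1.5. The outlier dominates the standard deviation, still pulls the aad well above the spread of the other ten points, and leaves the mad essentially untouched.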

Other summaries

Moving Average

A moving average is used when you want to get a general picture of the trends contained in a data set. The data set of concern is typically a so-called "time series", i.e. a set of observations ordered in time. Given such a data set X, with individual data points $x_i$, a 2n+1 point moving average is defined as $\bar{x}_i = \frac{1}{2n+1}\sum_{k=i-n}^{i+n} x_k$, and is thus given by taking the average of $x_i$ and the 2n points around it. Doing this on all data points in the set (except the points too close to the edges) generates a new time series that is somewhat smoothed, revealing only the general tendencies of the first time series.

The moving average for many time-based observations is often lagged. That is, we take the 10-day moving average by looking at the average of the last 10 days. We can make this more exciting (who knew statistics was exciting?) by considering different weights on the 10 days. Perhaps the most recent day should be the most important in our estimate and the value from 10 days ago would be the least important. As long as we have a set of weights that sums to 1, this is an acceptable moving average. Sometimes the weights are chosen along an exponential curve to make the exponential moving average.
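Both variants, the centred 2n+1 point average and the lagged weighted average, can be sketched briefly. The function names, the short series and the weights are assumptions chosen for illustration.

```python
def centred_moving_average(series, n):
    """2n+1 point moving average; points too close to the edges are skipped."""
    window = 2 * n + 1
    return [sum(series[i - n:i + n + 1]) / window
            for i in range(n, len(series) - n)]

def weighted_lagged_average(series, weights):
    """Lagged moving average; weights[0] applies to the most recent value."""
    if abs(sum(weights) - 1) > 1e-9:
        raise ValueError("weights must sum to 1")
    k = len(weights)
    return [sum(w * series[i - j] for j, w in enumerate(weights))
            for i in range(k - 1, len(series))]

smoothed = centred_moving_average([1, 2, 3, 4, 5, 6, 7], 1)
lagged = weighted_lagged_average([1, 2, 3, 4], [0.5, 0.3, 0.2])
```

The first call returns [2.0, 3.0, 4.0, 5.0, 6.0]; the second returns values close to 2.3 and 3.3, each weighting the latest observation most heavily.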

Displaying Data

[edit | edit source] A single statistic tells only part of a dataset’s story. The mean is one perspective; the median yet another. And when we explore relationships between multiple variables, even more statistics arise. The coefficient estimates in a regression model, the Cochran-Maentel-Haenszel test statistic in partial contingency tables; a multitude of statistics are available to summarize and test data.

But our ultimate goal in statistics is not to summarize the data; it is to fully understand their complex relationships. A well-designed statistical graphic helps us explore, and perhaps understand, these relationships.

This section will help you let the data speak, so that the world may know its story.

Types of Data Display

- Bar Charts

- Histograms

- Scatter Graphs

- Box Plots

- Pie Charts

- Comparative Pie Charts

- Pictograms

- Line Graphs

- Frequency Polygon

External Links

- "The Visual Display of Quantitative Information" by Edward Tufte is the seminal work on statistical graphics. It is a must-read.

- "Show Me the Numbers" by Stephen Few has a less technical approach to creating graphics. You might want to scan through this book if you are building a library on making graphs.

Bar Charts

The Bar Chart (or Bar Graph) is one of the most common ways of displaying categorical/qualitative data. Bar Graphs consist of 2 variables, one response (sometimes called "dependent") and one predictor (sometimes called "independent"), arranged on the horizontal and vertical axes of a graph. The relationship of the predictor and response variables is shown by a mark of some sort (usually a rectangular box) from one variable's value to the other's.

To demonstrate, we will use the following data (tbl. 3.1.1) representing a hypothetical relationship between a qualitative predictor variable, "Graph Type", and a quantitative response variable, "Votes".

tbl. 3.1.1 - Favourite Graphs

| Graph Type | Votes |
|---|---|
| Bar Charts | 5 |
| Pie Graphs | 2 |
| Histograms | 3 |
| Pictograms | 8 |
| Comp. Pie Graphs | 4 |
| Line Graphs | 9 |
| Frequency Polygon | 1 |
| Scatter Graphs | 5 |

From this data we can now construct an appropriate graphical representation which, in this case, will be a Bar Chart. The graph may be orientated in several ways, of which the vertical chart (fig. 3.1.1) is the most common, with the horizontal chart (fig. 3.1.2) also being used often.

Take note that the height and width of the bars, in the vertical and horizontal charts respectively, are equal to the response variable's corresponding value: the "Bar Charts" bar equals the number of votes that the Bar Chart type received in tbl. 3.1.1.

Also take note that there is a pronounced amount of space between the individual bars in each of the graphs. This is important because it helps differentiate the Bar Chart graph type from the Histogram graph type discussed in a later section.
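To make the "bar length equals the response value" idea concrete, here is a minimal text-only sketch of a horizontal bar chart built from the votes in tbl. 3.1.1. The function name and the `#` symbol are arbitrary choices for illustration, not part of the original example.

```python
# Data from tbl. 3.1.1 (graph type, votes).
votes = [
    ("Bar Charts", 5),
    ("Pie Graphs", 2),
    ("Histograms", 3),
    ("Pictograms", 8),
    ("Comp. Pie Graphs", 4),
    ("Line Graphs", 9),
    ("Frequency Polygon", 1),
    ("Scatter Graphs", 5),
]

def text_bar_chart(rows, symbol="#"):
    """Return one line per category; each bar's length equals its value."""
    width = max(len(label) for label, _ in rows)
    return [f"{label:<{width}} | {symbol * value} {value}" for label, value in rows]

for line in text_bar_chart(votes):
    print(line)
```

Each category gets its own line, so the bars are separated from one another just as in the figures.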

Histograms

It is often useful to look at the distribution of the data, that is, the frequency with which values fall within pre-set bins of specified sizes. The selection of these bins is up to you, but remember that they should be selected in order to illuminate your data, not obfuscate it.

A histogram is similar to a bar chart. However, histograms are used for continuous (as opposed to discrete or qualitative) data. The defining property of a histogram is:

- The area of each bar is proportional to the frequency.

If each bin has an equal width, then this can easily be done by plotting frequency on the vertical axis. However, histograms can also be drawn with unequal bin sizes, in which case one plots frequency density.

To produce a histogram with equal bin sizes:

- Select a minimum, a maximum, and a bin size. All three of these are up to you. In the histogram data used above the minimum is 1, the maximum is 110, and the bin size is 10.

- Calculate your bins and how many values fall into each of them. For the histogram data the bins are:

  - 1 ≤ x < 10, 16 values.

  - 10 ≤ x < 20, 4 values.

  - 20 ≤ x < 30, 4 values.

  - 30 ≤ x < 40, 2 values.

  - 40 ≤ x < 50, 2 values.

  - 50 ≤ x < 60, 1 value.

  - 60 ≤ x < 70, 0 values.

  - 70 ≤ x < 80, 0 values.

  - 80 ≤ x < 90, 0 values.

  - 90 ≤ x < 100, 0 values.

  - 100 ≤ x < 110, 0 values.

  - 110 ≤ x < 120, 1 value.

- Plot the counts you figured out above. Do this using a standard bar plot.
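The steps above can be sketched as follows. The data set here is hypothetical (the values behind the counts listed above are not reproduced in this text), the function name is an assumption, and for simplicity the sketch starts the first bin at 0 rather than 1 so that every bin has the same width.

```python
def bin_counts(values, minimum, bin_size, n_bins):
    """Count how many values fall in each bin [minimum + i*bin_size, minimum + (i+1)*bin_size)."""
    counts = [0] * n_bins
    for v in values:
        index = int((v - minimum) // bin_size)
        if 0 <= index < n_bins:  # values outside the chosen range are ignored
            counts[index] += 1
    return counts

values = [3, 7, 12, 18, 25, 31, 31, 44, 58, 110]  # hypothetical data
counts = bin_counts(values, minimum=0, bin_size=10, n_bins=12)

# Plot the counts as a simple text bar plot, one bar per bin.
for i, count in enumerate(counts):
    low = i * 10
    print(f"{low:>3} <= x < {low + 10:<3} {'#' * count}")
```

With equal bin widths the bar length (the count) is proportional to the bar area, so the defining property of the histogram is preserved.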

Worked Problem

Let's say you are an avid roleplayer who loves to play Mechwarrior, a d6 (6-sided die) based game. You have just purchased a new 6-sided die and would like to see whether it is biased (in combination with you when you roll it).

What We Expect

So before we look at what we get from rolling the die, let's look at what we would expect. First, if a die is unbiased, the odds of rolling a 6 are exactly the same as the odds of rolling a 1; there would be no favoritism towards certain values. Using the standard equation for the arithmetic mean, we find that $\mu = (1+2+3+4+5+6)/6 = 3.5$. We would also expect the histogram to be roughly even all the way across, though it will almost never be perfectly even, simply because we are dealing with an element of random chance.

What We Get

Here are the numbers that you collect:

| 1 | 5 | 6 | 4 | 1 | 3 | 5 | 5 | 6 | 4 | 1 | 5 | 6 | 6 | 4 | 5 | 1 | 4 | 3 | 6 |

| 1 | 3 | 6 | 4 | 2 | 4 | 1 | 6 | 4 | 2 | 2 | 4 | 3 | 4 | 1 | 1 | 6 | 3 | 5 | 5 |

| 4 | 3 | 5 | 3 | 4 | 2 | 2 | 5 | 6 | 5 | 4 | 3 | 5 | 3 | 3 | 1 | 5 | 4 | 4 | 5 |

| 1 | 2 | 5 | 1 | 6 | 5 | 4 | 3 | 2 | 4 | 2 | 1 | 3 | 3 | 3 | 4 | 6 | 1 | 1 | 3 |

| 6 | 6 | 1 | 4 | 6 | 6 | 6 | 5 | 3 | 1 | 5 | 6 | 3 | 4 | 5 | 5 | 5 | 2 | 4 | 4 |

Analysis

Referring back to what we would expect for an unbiased die, these rolls look pretty close: the mean of the 100 rolls is 3.71, against an expected 3.5. So let's create a histogram to see if there is any significant difference in the distribution.

The only logical way to divide up dice rolls into bins is by what's showing on the die face:

| Face | 1 | 2 | 3 | 4 | 5 | 6 |
|---|---|---|---|---|---|---|
| Count | 16 | 9 | 17 | 21 | 20 | 17 |

If we are good at visualizing information, we can simply use a table, such as the one above, to see what might be happening. Often, however, it is useful to have a visual representation. As the amount and variety of data we want to display increases, the need for graphs instead of a simple table increases.

Looking at the counts above, we clearly see that sides 1, 3, and 6 are almost exactly what we would expect by chance (100/6, or about 16.7 rolls per side). Sides 4 and 5 are slightly greater, but not too much so, and side 2 is a lot less. This could be the result of chance, or it could represent an actual anomaly in the data; it is something to take note of and keep in mind. We'll address this issue again in later chapters.
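The face counts and the sample mean for the 100 rolls listed earlier can be reproduced with a short sketch like the one below. The variable names are arbitrary; the rolls are copied from the data table in this worked problem.

```python
from collections import Counter

# The 100 rolls from the worked problem, row by row.
rolls = [
    1, 5, 6, 4, 1, 3, 5, 5, 6, 4, 1, 5, 6, 6, 4, 5, 1, 4, 3, 6,
    1, 3, 6, 4, 2, 4, 1, 6, 4, 2, 2, 4, 3, 4, 1, 1, 6, 3, 5, 5,
    4, 3, 5, 3, 4, 2, 2, 5, 6, 5, 4, 3, 5, 3, 3, 1, 5, 4, 4, 5,
    1, 2, 5, 1, 6, 5, 4, 3, 2, 4, 2, 1, 3, 3, 3, 4, 6, 1, 1, 3,
    6, 6, 1, 4, 6, 6, 6, 5, 3, 1, 5, 6, 3, 4, 5, 5, 5, 2, 4, 4,
]

counts = Counter(rolls)            # one bin per die face
mean = sum(rolls) / len(rolls)     # sample mean of the rolls
expected = len(rolls) / 6          # expected count per face for a fair die

print(f"sample mean = {mean:.2f}, expected count per face = {expected:.1f}")
for face in range(1, 7):
    print(f"{face}: {'#' * counts[face]} ({counts[face]})")
```

The printed bars give a quick text version of the histogram, with face 2 standing out as the shortest bar.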

Frequency Density

Another way of drawing a histogram is to work out the Frequency Density.

- Frequency Density

- The Frequency Density is the frequency divided by the class width.

The advantage of using frequency density in a histogram is that it doesn't matter if there isn't an obvious standard width to use. For each group, you work out the frequency divided by the class width and plot that value as the height of the bar.
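As a small illustration of the definition, the sketch below computes frequency densities for classes of unequal width. The class boundaries and frequencies are hypothetical, as is the function name.

```python
def frequency_density(edges, frequencies):
    """Frequency divided by class width, for classes [edges[i], edges[i+1])."""
    return [
        f / (high - low)
        for f, low, high in zip(frequencies, edges[:-1], edges[1:])
    ]

edges = [0, 10, 20, 50, 100]      # hypothetical class boundaries (unequal widths)
frequencies = [5, 10, 15, 10]     # hypothetical counts per class

densities = frequency_density(edges, frequencies)
for low, high, d in zip(edges[:-1], edges[1:], densities):
    # Bar height is the density; bar area (height * width) recovers the frequency.
    print(f"{low:>3} to {high:<3} width {high - low:>2}  density {d:.2f}")
```

Multiplying each density by its class width gives back the original frequency, which is why the area of each bar stays proportional to the frequency.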

Scatter Plots

A scatter plot is used to show the relationship between two numeric variables. It is not useful for comparing a discrete variable with a numeric variable. A scatter plot matrix is a collection of pairwise scatter plots of numeric variables.

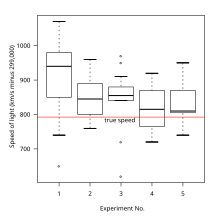

Box Plots

A box plot (also called a box and whisker diagram) is a simple visual representation of key features of a univariate sample.

The box lies on a vertical axis in the range of the sample. Typically, the top of the box is placed at the third quartile and the bottom at the first quartile. The width of the box is arbitrary, as there is no x-axis (though see Violin Plots, below).

In between the top and bottom of the box is some representation of central tendency. A common version is to place a horizontal line at the median, dividing the box into two. Additionally, a star or asterisk may be placed at the mean value, centered in the box in the horizontal direction.

Another common extension is the 'box-and-whisker' plot. This adds vertical lines (whiskers) extending from the top and bottom of the box to, for example, the maximum and minimum values, or to the farthest values within 2 standard deviations above and below the mean. Alternatively, the whiskers could extend to the 2.5 and 97.5 percentiles. Finally, it is common in the box-and-whisker plot to show outliers (however defined) with asterisks at the individual values beyond the ends of the whiskers.

Violin Plots are an extension of box plots that use the horizontal direction to present more information. They show an estimate of the probability density of the data instead of a box, though the quantiles of the distribution are still shown.
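The quantities a box-and-whisker plot displays can be computed with Python's standard `statistics` module, as in the sketch below. The sample and the function name are hypothetical. Quartile conventions differ between textbooks and software; this sketch assumes the module's "inclusive" method, and it uses the "farthest value within 2 standard deviations of the mean" whisker rule, which is only one of the options mentioned above.

```python
import statistics

def box_plot_summary(sample):
    """Quartiles, mean, whisker ends, and outliers for a box-and-whisker plot."""
    q1, median, q3 = statistics.quantiles(sample, n=4, method="inclusive")
    mean = statistics.fmean(sample)
    sd = statistics.stdev(sample)
    low_limit, high_limit = mean - 2 * sd, mean + 2 * sd
    inside = [x for x in sample if low_limit <= x <= high_limit]
    return {
        "q1": q1,                      # bottom of the box
        "median": median,              # line inside the box
        "q3": q3,                      # top of the box
        "mean": mean,                  # optional star or asterisk
        "whisker_low": min(inside),    # farthest value within 2 sd below the mean
        "whisker_high": max(inside),   # farthest value within 2 sd above the mean
        "outliers": [x for x in sample if x < low_limit or x > high_limit],
    }

sample = [2, 3, 4, 5, 5, 6, 7, 8, 9, 30]  # hypothetical sample
summary = box_plot_summary(sample)
for name, value in summary.items():
    print(name, value)
```

Swapping the whisker rule for the minimum and maximum, or for the 2.5 and 97.5 percentiles, only changes how `low_limit` and `high_limit` are chosen.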

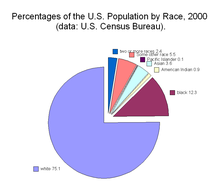

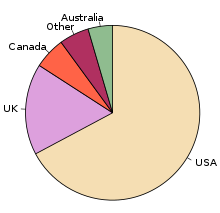

Pie Charts

A pie chart (or pie diagram) is a graphical device: a circle broken into subdivisions. The subdivisions are called "sectors", and their areas are proportional to the various parts into which the whole quantity is divided. The sectors may be coloured differently to show the relationship of the parts to the whole. A pie diagram is an alternative to the sub-divided bar diagram.

To construct a pie chart, we first draw a circle of any suitable radius. The whole quantity to be divided is then equated to 360 degrees, and the angle of each sector is calculated by the following formula.

$$\text{angle} = \frac{\text{component value}}{\text{whole quantity}} \times 360^\circ$$

The component parts, i.e. the sectors, are cut beginning from the top in clockwise order.

Note that the percentages in a list may not add up to exactly 100% due to rounding. For example, if a person spends a third of their time on each of three activities, the rounded shares of 33%, 33% and 33% sum to 99%.
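As a worked instance of the angle formula, the sketch below reuses the votes from tbl. 3.1.1 (37 votes in total) and also reproduces the rounding example. The variable names are arbitrary and the choice of data is only for illustration.

```python
# Votes from tbl. 3.1.1.
votes = {
    "Bar Charts": 5,
    "Pie Graphs": 2,
    "Histograms": 3,
    "Pictograms": 8,
    "Comp. Pie Graphs": 4,
    "Line Graphs": 9,
    "Frequency Polygon": 1,
    "Scatter Graphs": 5,
}

total = sum(votes.values())
# angle = component value / whole quantity * 360
angles = {name: value / total * 360 for name, value in votes.items()}

for name, angle in angles.items():
    print(f"{name:<18} {angle:6.1f} degrees")

# Rounded percentages need not sum to 100: three equal shares round to 33 each.
thirds = [round(100 / 3)] * 3
print(thirds, "sum to", sum(thirds))
```

The unrounded angles always add up to 360 degrees; only the rounded percentages used for labels can drift away from 100%.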

Warning: Pie charts are a poor way of communicating information. The eye is good at judging linear measures and bad at judging relative areas. A bar chart or dot chart is a preferable way of displaying this type of data.

Cleveland (1985), page 264: "Data that can be shown by pie charts always can be shown by a dot chart. This means that judgements of position along a common scale can be made instead of the less accurate angle judgments." This statement is based on the empirical investigations of Cleveland and McGill as well as investigations by perceptual psychologists.

Three-dimensional (3d) pie charts compound perceptual misinterpretation of statistical information by altering the relative angle of pie slices to create the impression of depth into a vanishing point. Angles and areas at the bottom of the chart must be exaggerated and the angles and areas at the top of the chart reduced in order to create the dimensional effect; a specifically false depiction of the data.

External Links

- Interactive Java-based Pie-Chart Applet

- See section 2.4 (cognostics) in D. Carr's and L. Pickle's book Visualizing Data Patterns with Micromaps for more information

Comparative Pie Charts

Comparative pie charts are very difficult to read and compare if the share of each slice is not given.

Examine our example of color preference for two different groups. How much work does it take to compare the preference for blue between the two groups? First, we have to find blue on each pie, and then remember how large its slice is. If we did not include the share for blue in the label, then we would probably be approximating the comparison. So, if we use multiple pie charts, we have to expect that comparisons between charts will only be approximate.

What is the most popular color in the left graph? Red. But note that you have to look at all of the colors and read the labels to see which it might be. Also, the author was kind when creating these two graphs, because the same color is used for the same category in both. Imagine the confusion if red had been used for a different category in the right-hand chart.

If two sets of shares should not be compared via comparative pie charts, what kind of graph would be preferred? The stacked bar chart is probably the most appropriate for share-of-the-total comparisons. Again, exact comparisons cannot be made with graphs, so a table may supplement the graph with detailed information.

Pictograms

A pictogram is simply a picture that conveys some statistical information. A very common example is the thermometer graph often seen in fund drives. The entire thermometer is the goal (the number of dollars that the fund raisers wish to collect). The red stripe (the "mercury") represents the proportion of the goal that has already been collected.

Another example is a picture that represents the gender constitution of a group. Each small picture of a male figure might represent 1,000 men and each small picture of a female figure would, then, represent 1,000 women. A picture consisting of 3 male figures and 4 female figures would indicate that the group is made up of 3,000 men and 4,000 women.