Sensory Systems/Arthropods

Olfactory System of Ants

Introduction

Ants are a very successful group of insects, owing in large part to their intricate social organization and parsimonious array of sensory processing capabilities. As ants live in colonies that can number millions of members, reliable communication, such as signaling to other individuals the location and abundance of food sources or the presence of foreign colonies, is crucial. Keeping track of their environment allows ants to regulate their foraging activities. Ants also use olfaction to find their way back to the nest, and use pheromone deposition to regulate colony-scale emergent behavior, such as finding the shortest paths to food sources.

Olfaction

Olfactory communication in ants is carried out by pheromones, small organic molecules that are produced by different glands, such as Dufour’s gland, the poison gland, the anal gland, and glands on the feet, abdomen and thorax. These pheromones are used to exchange information about mating, predators, trail marking or food sources. Some ant species, such as the Pharaoh’s ant, have distinct pheromones of different valence and volatility: a pheromone can have high or low volatility and can act as an attractant or a repellent. This allows foraging ants to reinforce trails to plentiful food sources and to reduce the attractiveness of trails to scarce ones. In this way a pheromone network is created that adapts easily to changing environmental conditions. In a sense, an ant colony works like a single superorganism that stores both short-term and long-term memory chemically.

Olfaction in pathfinding


An interesting perspective linking olfaction to pathfinding was elucidated by the following experiment on desert ants: Researchers marked the visually inconspicuous entrance to their nest (a small hole in the ground) by four odorants which were previously shown to bear no valence, neither attractive nor repellent. The four organic molecules were deposited at the corners of an imaginary square surrounding the nest entrance. Ants subsequently learned to associate the smells with their nest entrance, such that under test conditions (without any nest present) they would actively seek out the center of the odor square. However, when the spatial arrangement of the odors was modified, the ants no longer recognized it, suggesting that the olfactory memory they resort to for navigation has an essential spatial dimension. Finally, when one antenna was severed (ants possess two antennae equipped with olfactory receptors), ants also failed to recognize the odor square. This led researchers to conclude that ants “smell their scenery in stereo” [1]

The ability to quickly find the shortest path to ephemeral food sources is a key survival factor for ants. When placed in an environment where the nest and a food source are connected by two paths of different length ants become increasingly biased towards the shorter path. In the beginning ants choose randomly between the two available paths, but ants that chose the shorter one are able to make the trip several times, thus leaving more chemoattractants on their path. The higher pheromone concentration will induce more ants to select the short path, thereby reinforcing the bias with every journey. This shows that a complex navigation problem can be solved by the colony-scale behavior emerging in a system with a single, positively valenced pheromone. [2]
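The reinforcement loop described above can be illustrated with a minimal simulation. This is only a sketch under stated assumptions: the choice probability is taken to be proportional to the pheromone on each branch, and the deposit per trip is taken to be inversely proportional to branch length (standing in for the more frequent trips on the short branch). The function name, the parameters and the linear choice rule are all hypothetical and are not taken from the cited study.

```python
import random

def simulate_double_bridge(n_trips=2000, short_len=1.0, long_len=2.0, seed=0):
    """Return the fraction of the last 200 trips that used the short branch.

    Assumptions (illustrative only): an ant picks a branch with probability
    proportional to the pheromone on it, and each trip adds pheromone in
    inverse proportion to the branch length.
    """
    rng = random.Random(seed)
    pheromone = {"short": 1.0, "long": 1.0}  # both branches start equally attractive
    length = {"short": short_len, "long": long_len}
    choices = []
    for _ in range(n_trips):
        total = pheromone["short"] + pheromone["long"]
        p_short = pheromone["short"] / total
        branch = "short" if rng.random() < p_short else "long"
        # Shorter trips are completed more often per unit time, so the
        # short branch accumulates pheromone faster.
        pheromone[branch] += 1.0 / length[branch]
        choices.append(branch)
    tail = choices[-200:]
    return tail.count("short") / len(tail)

if __name__ == "__main__":
    print("share of late trips on the short branch:", simulate_double_bridge())
```

Even though the first choices are random, the positive feedback in this toy model steadily shifts traffic towards the short branch, using a single attractive pheromone.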

Role and morphology of the antenna


The major channel of sensory input for ants is the antenna. By moving their antennae around, ants can touch, taste, and smell everything within their reach. The function of the antennae goes well beyond taste and olfaction, though: the chemical cues picked up through them are key to several behaviors necessary for survival. These include mate recognition and selection, discrimination between nestmates and foreigners, locomotion and exploration of new grounds for food, communication, and defensive behaviors. Some subfamilies of ants (Dorylinae, Leptanilinae, Cerapachyinae) are entirely blind and rely exclusively on chemosensory signals. It is therefore mainly through chemosensing that ants are able to form the highly efficient, organized colonies they are known for. The ant antenna is distinctive in that it is bent, or “elbowed”. It consists of an elongated basal segment called the scape, and three to eleven distal segments collectively called the funiculus, or flagellum. The scape and flagellum meet at an angle, giving the ant antenna its characteristic bent shape. Each segment is called an antennomere, so the total antennomere count for ants is between four and twelve.

The antenna contains three types of sensory receptors: odorant receptors (ORs), gustatory receptors (GRs), and ionotropic glutamate receptors (IRs). GRs contribute to the ant's sense of taste and pick up a limited amount of pheromones, while IRs are narrowly tuned receptors whose main use is to detect poisonous and toxic compounds. Ant IR and GR levels are comparable to IR and GR levels in other insects; however OR levels, responsible for responding to odorants and a wider array of pheromones, are disproportionately high in ants and allow them to detect chemical substances at lower concentrations than other insects.

Neuronal morphology of the antenna

The sensory neurons are housed in sensilla, hair-like structures on the antennae. Each sensillum is a cuticular apparatus that contains a group of sensory neurons and their auxiliary cells. Each antennomere carries a few different types of sensilla and secretory gland cells, which can be observed with scanning electron microscopy. The types of sensilla differ not only between ant species but are also specific to the sex and caste of the ant in question.

Different types and functions of sensilla

Based on the morphological features of the sensilla (for instance, the presence or absence of pores), one can speculate on their functions and how they serve a specific sensory modality. There are seven different types of sensilla, each with its own types of inputs: [3]

- 1. Sensilla chaetica: mainly related to mechanosensory inputs

- 2. S. trichodea: olfactory

- 3. S. trichodea curvata: olfactory, including pheromones

- 4. S. basiconica: olfactory

- 5. S. coeloconica: olfactory

- 6. S. ampullacea: has no pores and contains hygroreceptors that respond to humidity, thermoreceptors that respond to temperature, and specialized receptors that respond to CO2 cues

- 7. S. campaniformia: olfactory

The physiological functions of these sensory neurons have mostly been studied with bioassays and electrophysiological recordings in a variety of ant species. Again, not all sensilla are present on every ant, but five dominant types are usually present in all: S. basiconica, S. chaetica, S. trichodea curvata, S. coeloconica, and S. ampullacea. Ants also all have a characteristic protruding pore-plate structure (S. trichodea curvata), which provides a higher absorption probability for odorous molecules than a flat plate would. The large surface area found in S. basiconica (long pegs), S. trichodea curvata (long hairs), and S. coeloconica (large openings) could also favor the collection of more molecules [4]. The major type of sensilla in both males and females is S. trichodea curvata, which serves an olfactory purpose.

Odorant Receptor Neurons

OR neurons are located in specialized olfactory sensilla. Each OR neuron usually expresses a single receptor together with Orco (the odorant receptor co-receptor), and the two form a functional receptor unit. OR neurons convert chemical stimuli into neural signals that are sent to glomeruli in the antennal lobe. The sensilla present in a specific ant depend on the ant's species, caste and sex. Some OR gene family members are also enriched specifically in worker ant antennae. OR neurons have also been proposed to be the principal mechanism by which ants detect queen cuticular hydrocarbons, which in turn regulate worker-specific behaviors.

Sexual and caste dimorphism in sensilla relates to function

Colonies of ants consist of non-reproductive females and reproductive males and females. All worker, soldier, and queen ants are female. The few fertile male ants do not work in the colony and live for only a few months, to fertilize queens during nuptial flights. Sensilla are sexually and caste dimorphic: they differ depending on the sex and caste of the ant. Overall, males have about a third of the number of ORs found in females. Recent research has shown that males also have ORs specifically tuned to pheromones produced by the queen. Scanning electron microscopy studies on the red fire ant (Solenopsis invicta) have provided further examples: male antennae, for instance, have porous sensilla on all segments. Morphological differences in sensilla have been proposed not only to relate to, but to determine, the functional role of an ant. In other words, the absence or presence of sensilla sensitive to task-related odors and cues could constitute a possible mechanism by which task assignments are determined in a colony. For example, S. invicta worker ants are polymorphic, with the total number of sensilla depending on club length, which results in differences in sensitivity to olfactory signals.

References

- 1. Kathrin Steck, Markus Knaden, and Bill S. Hansson. Do desert ants smell the scenery in stereo? Animal Behaviour, 79(4):939-945, 2010.

- 2. S. Goss, S. Aron, J. L. Deneubourg, and J. M. Pasteels. Self-organized shortcuts in the Argentine ant. Naturwissenschaften, 76(12):579-581, 1989.

- 3. Klaus Dumpert. Bau und Verteilung der Sensillen auf der Antennengeißel von Lasius fuliginosus (Latr.) (Hymenoptera, Formicidae). Zoomorphology, 73(2):95-116, 1972.

- 4. Yoshiaki Hashimoto. Unique features of sensilla on the antennae of Formicidae (Hymenoptera). Applied Entomology and Zoology, 25(4):491-501, 1990.

Visual system of the Mantis shrimp


Introduction

Mantis shrimps, or stomatopods, are an order of crustaceans (Stomatopoda) that are usually between 10 and 20 cm in length. They can be brightly colored and live in shallow waters of tropical and subtropical oceans. A large part of the stomatopod's thorax is covered by a resilient carapace, with the head at the front and the two eyes protruding on a pair of stalks. They carry multiple pairs of limbs, of which the second pair is noticeably larger and known for its powerful punches. They are probably most famous, however, for their complex visual system. [1]

A unique visual system

Mantis shrimps have one of the most complex visual systems discovered in animals. Instead of using 2-4 photoreceptor types for color vision like most other species, they use 12. In addition, they have 4-7 receptor types (depending on the species) that are sensitive to linearly and circularly polarized light. [2] This has made people wonder how Mantis shrimps see the world and whether they have a 12-dimensional color space, compared to our 3-dimensional one.

Scientists had assumed that having so many types of photoreceptors would allow the Mantis shrimp to distinguish colors only a few nanometers apart, provided it made analog comparisons between spectral sensitivities. On the contrary, a recent study showed that the animals have trouble distinguishing colors less than 25 nm apart, which is approximately the distance between the sensitivity peaks of different photoreceptors. This suggests that the animals do not process visual information by comparing input from different photoreceptors, as humans do, but rather detect which receptor gives the strongest signal. [2] This would mean that Mantis shrimps do not have a 12-dimensional continuous color space but rather a discrete color space with 12 color bins. The advantage of this system is that it allows the animals to determine colors very quickly and reliably, without the delay that occurs in a multidimensional color space. The neural processing behind the system, however, is still to be determined. [2]
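The "strongest receptor wins" reading suggested by this result can be sketched in a few lines of Python. The peak wavelengths (evenly spaced every 25 nm starting at 400 nm), the Gaussian tuning width and the function names are illustrative assumptions, not measured values for any species.

```python
import math

# Hypothetical sensitivity peaks: 12 receptor types, 25 nm apart.
PEAKS_NM = [400 + 25 * i for i in range(12)]
SIGMA_NM = 15.0  # assumed tuning width

def responses(wavelength_nm):
    """Response of each receptor type to monochromatic light (Gaussian tuning)."""
    return [math.exp(-0.5 * ((wavelength_nm - p) / SIGMA_NM) ** 2) for p in PEAKS_NM]

def color_bin(wavelength_nm):
    """Index of the receptor type with the strongest response."""
    r = responses(wavelength_nm)
    return max(range(len(r)), key=r.__getitem__)

if __name__ == "__main__":
    for wl in (500, 505, 510, 525):
        print(wl, "nm -> bin", color_bin(wl))
```

In this toy model, 505 nm and 510 nm fall into the same bin as 500 nm and would not be told apart, whereas 500 nm and 525 nm land in neighbouring bins. This mirrors the roughly 25 nm discrimination limit described above.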

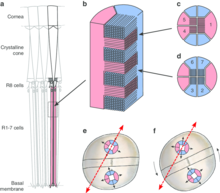

Anatomy of the eye

The eye of Mantis shrimps is a compound eye made up of optical units called ommatidia. An ommatidium has a lens that is covered by a cornea, and behind the lens there is a light guide called the rhabdom. Surrounding the rhabdom are photoreceptors that can be sensitive to ultraviolet light or to light in the human visible range. [3] The eyes are usually elliptical and are divided into morphologically different zones. [4] Each eye is divided horizontally into three regions, the dorsal hemisphere, the mid-band and the ventral hemisphere, which all view the surrounding space. [2] [4] It is believed that the two hemispheres give the animal stereoscopic vision in each eye. The ommatidia are arranged in rows, where each row has the same morphology. [4]

The ommatidia in the hemispheres of the eye are similar to the ommatidia found in other crustaceans. [5] The mid-band contains larger, specialized ommatidia with photoreceptors that are responsible for most of the spectral diversity. [6] Having the mid-band run horizontally between the hemispheres lets the animal keep objects anywhere on the horizon within the focal area without many horizontal saccadic eye movements. In fact, most saccadic movements are vertical. [4] Rows 1 through 4 of the mid-band are involved in color vision, while rows 5 and 6 detect linearly and circularly polarized light. In rows 1 to 4 there are 12 different types of cells, each sensitive to a different wavelength of light. Additionally, there are 4 cell types sensitive to ultraviolet light distally in the first four rows. [2] [4]

Each rhabdom has an individual optic system which results in a high number of optical units. This way, all photoreceptors in an ommatidium can view the same field for simultaneous analysis of different properties. Two separate regions in the same eye can even view the same field which makes the system flexible and increases the possibilities of parallel processing. The disadvantage is an eye with low spatial resolution compared to its size. Ommatidia in each hemisphere of the eye are able to view the same field which makes each eye stereoscopic. [5]

Each eye is seated on a stalk and can move relatively freely in all axes thanks to six groups of muscles. In addition, each eye can move independently of the other. The independent moving of each eye makes it hard to make use of binocular stereopsis. Instead, they probably use the overlapping of the view of the two hemispheres of each eye to estimate distance. When the stalks of the eyes move, distant objects move more slowly than close objects which adds to their depth perception. [5] The top part of the ommatidium in the hemispheres is composed of a cornea above a crystalline cone. This part is dedicated to the optics and focuses the incoming light onto the photosensitive rhabdom below. The rhabdom is composed of eight receptor cells, the R8 cell at the top and cells R1-7 surrounding the rhabdom below. These cells form a light guide. The R8 cell is only sensitive to ultraviolet light while cells R1-7 are sensitive to wavelengths around 500 nm. The R8 cells are not sensitive to polarization but cells R1-7 have two types of receptors that are sensitive to polarization orthogonal to each other. [5]

The ommatidia in the mid-band of the eye differ from those in the hemispheres, and the mid-band contains three types of ommatidia. The first type is found in the two most ventral rows and senses polarized light. The R8 cells of each of the two rows sense polarization planes orthogonal to each other. The R1-7 cells sense orthogonal polarizations at wavelengths around 500 nm using two types of receptors. The R8 receptors also convert circularly polarized light to linearly polarized light, which is then sensed by the R1-7 receptors.

The second type of ommatidia are in two of the four most dorsal rows. The R1-7 cells are split in two layers so incoming light first goes through the ultraviolet sensitive R8 part, then the distal part of R1-7 and finally the proximal part. Each layer absorbs certain wavelengths before the light reaches the layers below, together creating narrow-band photoreceptors.

The third type of ommatidia are in the two remaining rows. They contain colored photo-stable filters between the receptor layers so incoming light is filtered by these filters as well as the absorption of the receptors.

The second and third types of ommatidia are insensitive to polarization. The four rows of the second and third types of ommatidia have two types of receptor layers each. These eight receptor types in total have different visual pigments, so together they span the spectrum of around 400-700 nm. Adding the ultraviolet receptors and the polarization-sensitive receptors gives around 16-21 receptor classes, depending on the species. [5][2]

Ultraviolet vision

Unlike humans, Mantis shrimps are able to detect ultraviolet light. The ultraviolet photoreceptors are evenly spaced in the eye, which suggests that ultraviolet vision in Mantis shrimps is part of their color vision system, with sensitivity in the range of 300-700 nm, compared to 400-700 nm in humans. The ultraviolet sensitivities are too narrow to result from visual-pigment absorption alone, which led scientists to believe that they are tuned by ultraviolet filters in the photoreceptors. [7]

A recent experiment found four types of ultraviolet-absorbing MAAs (mycosporine-like amino acids) in the mid-band. These pigments work as either short- or long-pass ultraviolet filters that act on the same visual pigment in the retina, multiplying the number of distinct spectral sensitivities in the ultraviolet range. This way, the animals can generate six types of ultraviolet receptors. [6] [8]
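How a filter placed in front of a single visual pigment can shift and narrow the resulting sensitivity can be sketched numerically. Everything in the following example is a hypothetical illustration: the Gaussian pigment curve peaking at 340 nm, the sigmoid filter shapes, their cutoffs and all function names are assumptions chosen for clarity, not measured stomatopod data.

```python
import math

WAVELENGTHS_NM = list(range(280, 421))  # 1 nm grid across an assumed UV range

def pigment(wl, peak=340.0, width=30.0):
    """Hypothetical visual-pigment absorption (Gaussian)."""
    return math.exp(-0.5 * ((wl - peak) / width) ** 2)

def long_pass(wl, cutoff=350.0, slope=5.0):
    """Hypothetical filter that transmits wavelengths above the cutoff."""
    return 1.0 / (1.0 + math.exp(-(wl - cutoff) / slope))

def short_pass(wl, cutoff=330.0, slope=5.0):
    """Hypothetical filter that transmits wavelengths below the cutoff."""
    return 1.0 / (1.0 + math.exp((wl - cutoff) / slope))

def sensitivity(filter_fn=None):
    """Effective sensitivity = filter transmission x pigment absorption."""
    return [pigment(wl) * (filter_fn(wl) if filter_fn else 1.0)
            for wl in WAVELENGTHS_NM]

def peak_nm(curve):
    """Wavelength at which the curve is largest."""
    return WAVELENGTHS_NM[curve.index(max(curve))]

def half_width_nm(curve):
    """Number of 1 nm grid points at or above half of the curve's maximum."""
    half = max(curve) / 2.0
    return sum(1 for v in curve if v >= half)

if __name__ == "__main__":
    for name, f in (("none", None), ("long-pass", long_pass), ("short-pass", short_pass)):
        c = sensitivity(f)
        print(name, "peak:", peak_nm(c), "nm, half-width:", half_width_nm(c), "nm")
```

In this sketch the same pigment yields three different receptor classes: the unfiltered curve, a narrower one shifted to longer wavelengths, and a narrower one shifted to shorter wavelengths.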

In deep waters, fluorescence can contribute more to color than on land because of its contrast to the surrounding blue color. Many sea organisms have fluorescent coloration, one of them is the Mantis shrimp. The Mantis shrimp species Lysiosquillina glabriuscula has fluorescent markings on its antennal scales and carapace. The fluorescence is estimated to give rise to 7-10% of the total photons from the markings in the depth range inhabited by the animal. When looked at from the L. glabriuscula's perspective, the fluorescence is of more importance because of their special visual system and accounts for up to 30% of the total number of photons. The fluorescence makes the animal able to enhance its color signal underwater where shorter wavelengths don't arrive. [9]

Polarisation vision

Polarisation can be described with the Stokes' parameters,

$$S_0 = I_H + I_V, \quad S_1 = I_H - I_V, \quad S_2 = I_D - I_A, \quad S_3 = I_R - I_L,$$

with $I$ as the intensity and the subscripts $H$, $V$, $D$, $A$, $R$ and $L$ standing for horizontal, vertical, diagonal, anti-diagonal, right-hand circular and left-hand circular, respectively. $S_0$ is the total intensity, which does not affect the polarization. Polarized light is common in nature, especially in reflected light, and many arthropods, including crustaceans, are sensitive to linearly polarized light. It can, for example, give information about the texture and orientation of an object. A single linear polarization component provides more contrast, especially in turbulent water, while more linear components probably influence orientation, navigation, prey detection, predator avoidance, and intra-species signaling.

Optimal polarization vision requires simultaneous sensitivity to all six linearly and circularly polarized components, and the Gonodactylidae family of Mantis shrimps is the first group of organisms discovered to possess this ability. The dorsal and ventral hemispheres of their eyes sense linear polarization, rotated 45° from each other. What makes the Gonodactylidae special, though, is their sensitivity to circular polarization in two rows of the mid-band, which makes it possible for them to measure all six components and hence the full set of Stokes' parameters. In addition to these anatomical features, they have the neuronal features needed for measuring the Stokes' parameters. [3] [10] [5]
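As a small worked example, the following Python sketch computes the four Stokes' parameters from six measured intensities, assuming the standard definitions S0 = I_H + I_V, S1 = I_H - I_V, S2 = I_D - I_A and S3 = I_R - I_L. The function names and the example intensities are illustrative only and are not a model of the shrimp's neural processing.

```python
import math

def stokes_parameters(i_h, i_v, i_d, i_a, i_r, i_l):
    """Stokes' parameters from six intensity measurements.

    i_h, i_v: horizontal / vertical linear components
    i_d, i_a: diagonal / anti-diagonal linear components
    i_r, i_l: right- / left-hand circular components
    """
    s0 = i_h + i_v  # total intensity
    s1 = i_h - i_v  # horizontal vs. vertical
    s2 = i_d - i_a  # diagonal vs. anti-diagonal
    s3 = i_r - i_l  # right vs. left circular
    return s0, s1, s2, s3

def degree_of_polarization(s0, s1, s2, s3):
    """Fraction of the light that is polarized (0 = unpolarized, 1 = fully polarized)."""
    return math.sqrt(s1 ** 2 + s2 ** 2 + s3 ** 2) / s0

if __name__ == "__main__":
    horizontal = stokes_parameters(1.0, 0.0, 0.5, 0.5, 0.5, 0.5)
    print(horizontal, degree_of_polarization(*horizontal))
```

Fully horizontally polarized light of unit intensity gives (1, 1, 0, 0), while unpolarized light, which splits equally between each pair of components, gives (1, 0, 0, 0). An eye with only linear polarization channels could not determine S3, which is why the circular polarization channels of the mid-band matter.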

Filtered vision

Different Mantis shrimp species live at various depths. Animals that live in shallow waters are exposed to illumination over a much broader spectrum than animals in deeper waters. Experiments on the Mantis shrimp species Haptosquilla trispinosa revealed that they use colored filters in front of their photoreceptors to tune the spectral sensitivity. In animals that live in shallow waters, the filters are used on most of the visible spectrum. Meanwhile, those that live in deeper waters have their filters shifted to transmit shorter wavelengths (green-blue light) as longer wavelengths are attenuated by the water. This makes them able to distinguish smaller differences of the short wavelength light in the ocean. [11]

Visual processing

The visual processing in Mantis shrimps is different from that in humans and may be compared to artificial systems, as it combines serial and parallel processing. Unlike most other animals, Mantis shrimps must move their eyes to collect some types of visual information from the environment. This is because the most important region for visual analysis is the narrow mid-band, which can only scan a slice of the visual space. Mantis shrimps solve this problem by moving the eye slowly up and down, thus collecting information about color, polarization and ultraviolet intensity for the whole visual field.

A lot of the visual processing in Mantis shrimps takes place within the eye and even in single photoreceptors. This decreases the amount of data needed to deliver information to higher areas. From the retina, it appears that information is sent via multiple parallel streams into the central nervous system which makes it able to minimize processing at higher levels. Another advantage of Mantis shrimps' splitting of the visual spectrum into discrete channels is its color constancy. Visual systems that have few receptors with a broad wavelength spectrum, can adapt strongly to wavelengths that are far from their peak sensitivity wavelength which makes it difficult to recognize colors in different environments, such as underwater. [5]

Benefits from a developed visual system

The lives of Mantis shrimps involve incredibly fast movements while attacking prey, which makes fast processing of visual information important. [2] Mantis shrimps are known to attack not only to hunt prey but also to fight members of their own species. This is believed to have driven the evolution of their signaling behaviour, which involves polarised light and color. They use color in signaling to a greater extent than other crustaceans, and it is believed that their special visual system with high color constancy makes that possible. [5]

Inspiring technology

Many features of the visual system of Mantis shrimps could influence the development of artificial optical systems. When designing optical systems where color constancy is important, the Mantis shrimps' visual system can be used as a model where the narrow spectral channels increase the accuracy. Contrary to current optical sensor properties, motion is essential to the Mantis shrimp's vision. This opens up the idea of integrating the possibility of motion into optical sensors. [5] Mantis shrimps' eye design is a good model for visual electronics as it is able to do analysis within individual units. Its visual processing in the eye before information gets passed on to higher centers is also an inspiration for efficient, low-power artificial optical systems. Processing data at the sensor level can reduce the bandwidth and the power needed. Their polarization sensitivity has also inspired scientists in the development of polarization sensors. In fact, Mantis shrimps' alignment of polarization sensitive ommatidia has been replicated with aluminum nanowires functioning as linear polarization filters on top of photo-diodes to create a CMOS imager. This real-time polarization imaging has enabled early diagnosis of cancerous tissue that has not been possible before and has many potential future applications. [12]

References

- 1. Ross Piper, Extraordinary Animals: An Encyclopedia of Curious and Unusual Animals, Greenwood Press, 2007.

- 2. Hanne H. Thoen et al., A Different Form of Color Vision in Mantis Shrimp, Science 343: 411-413, 2014.

- 3. Kleinlogel S, White AG, The Secret World of Shrimps: Polarisation Vision at Its Best, PLoS ONE 3(5): e2190, 2008.

- 4. David Cowles, Jaclyn R. Van Dolson, Lisa R. Hainey, Dallas M. Dick, The use of different eye regions in the mantis shrimp Hemisquilla californiensis Stephenson, 1967 (Crustacea: Stomatopoda) for detecting objects, Journal of Experimental Marine Biology and Ecology 330 (2): 528-534, 2006.

- 5. Thomas W. Cronin, Justin Marshall, Parallel processing and image analysis in the eyes of mantis shrimps, The Biological Bulletin 200 (2): 177-183, 2001.

- 6. Michael Bok, Megan Porter, Allen Place, Thomas Cronin, Biological Sunscreens Tune Polychromatic Ultraviolet Vision in Mantis Shrimp, Current Biology 24 (14): 1636-1642, 2014.

- 7. Justin Marshall, Johannes Oberwinkler, Ultraviolet vision: the colourful world of the mantis shrimp, Nature 401 (6756): 873-874, 1999.

- 8. Ellis R. Loew, Vision: Two Plus Four Equals Six, Current Biology 24 (16): 753-755, 2014.

- 9. C. H. Mazel, T. W. Cronin, R. L. Caldwell, N. J. Marshall, Fluorescent enhancement of signaling in a mantis shrimp, Science 303 (5654): 51, 2004.

- 10. Tsyr-Huei Chiou et al., Circular polarization vision in a stomatopod crustacean, Current Biology 18 (6): 429-434, 2008.

- 11. Thomas W. Cronin, Roy L. Caldwell, Justin Marshall, Tunable colour vision in a mantis shrimp, Nature 411, 547, 2001.

- 12. T. York et al., Bioinspired polarization imaging sensors: from circuits and optics to signal processing algorithms and biomedical applications, Proceedings of the IEEE 102 (10): 1450-1469, 2014.

Spider's Visual System

Introduction

While the highly developed visual systems of some spider species have been studied extensively for many decades, terms like animal intelligence or cognition were not usually used in the context of spider studies. Instead, spiders were traditionally portrayed as rather simple, instinct-driven animals (Bristowe 1958, Savory 1928) that process visual input in pre-programmed patterns rather than actively interpreting the information received from their visual apparatus to produce appropriate reactions. Although this still seems to be the case for the majority of spiders, which primarily interact with the world through tactile sensation rather than visual cues, some spider species have shown surprisingly intelligent use of their eyes. Considering its limited dimensions within the body, a spider's optical apparatus and visual processing perform extremely well.[1] Recent research points towards a very sophisticated use of visual cues in a spider's world, for example in the complex hunting schemes of the vision-guided jumping spiders (Salticidae), which take huge leaps of up to 30 times their own body length onto prey, or in a wolf spider's (Lycosidae) ability to visually recognize asymmetries in potential mates. Even in the night-active Cupiennius salei (Ctenidae), which relies primarily on other sensory organs, and in the ogre-faced Dinopis, which hunts at night by spinning small webs and throwing them at approaching prey, the visual system is highly developed. Findings like these are not only fascinating but are also inspiring other scientific and engineering fields such as robotics and computer-guided image analysis.

General structure of a spider's anatomy

A spider's anatomy primarily consists of two major body segments, the prosoma and the opisthosoma, which are also known as the cephalothorax and abdomen, respectively. All extremities, as well as the sensory organs including the eyes, are located on the prosoma. Unlike the visual systems of arthropods that feature compound eyes, modern arachnid eyes are ocelli (simple eyes consisting of a lens covering a vitreous fluid-filled pit with a retina at the bottom), of which spiders have six or eight, characteristically arranged in three or four rows across the prosoma's carapace. Overall, 99% of all spiders have eight eyes, and of the remaining 1% almost all have six. Spiders with only six eyes lack the “principal eyes”, which are described in detail below.

The pairs of eyes are called anterior median eyes (AME), anterior lateral eyes (ALE), posterior median eyes (PME), and posterior lateral eyes (PLE). The large principal eyes facing forward are the anterior median eyes, which provide the highest spatial resolution to a spider, at the cost of a very narrow field of view. The smaller forward-facing eyes are the anterior lateral eyes, with a moderate field of view and medium spatial resolution. The two posterior eye pairs are rather peripheral, secondary eyes with a wide field of view. They are extremely sensitive and suitable for low-light conditions. Spiders use their secondary eyes for sensing motion, while their principal eyes allow shape and object recognition. In contrast to the insect brain, the brain of a visually guided spider is almost completely devoted to vision, as it receives only the optic nerves and consists of only the optic ganglia and some association centers. The brain is apparently able not only to recognize object motion but also to classify the counterpart as a potential mate, rival or prey by seeing legs (lines) at a particular angle to the body. Such a stimulus will result in the spider displaying courtship or threat signals, respectively.

A Spider's eyes

Although spider eyes may be described as “camera eyes”, they are very different in their details from the “camera eyes” of mammals or any other animals. To fit a high-resolution eye into such a small body, neither an insect's compound eye nor a spherical eye like the human one would solve the problem. The ocelli found in spiders are the optically better solution, as their resolution is not limited by diffraction at the lens to the extent that the resolution of compound eyes is. A compound eye with the same resolving power would simply not fit into the spider's prosoma. By using ocelli, some spiders achieve a spatial acuity more similar to that of a mammal than to that of an insect, despite a huge size difference and only a few thousand photocells, e.g. in a jumping spider's eye, as compared to more than 150 million photocells in the human retina.

Principal eyes


The anterior median eyes (AME), which are present in most spider species, are also called the principal eyes. Details about the principal eye's structure and its components are illustrated in the figure below and are explained in the following by going through the AME of the jumping spider Portia (family Salticidae), which is famous for its high-spatial-acuity eyes and vision-guided behavior despite its very small body size of 4.5-9.5 mm.

When a light beam enters the principal eye, it first passes through a large corneal lens. This lens has a long focal length, enabling it to magnify even distant objects. The combined field of view of the two principal eyes' corneal lenses would cover about 90° in front of the salticid spider; however, a retina with the desired acuity would be too large to fit inside a spider's eye. The surprising solution is a small, elongated retina, which lies behind a long, narrow tube with a second lens (a concave pit) at its end. Such a combination of a corneal lens (with a long focal length) and a long eye tube (magnifying the image from the corneal lens) resembles a telephoto system, making the pair of principal eyes similar to a pair of binoculars.

The salticid spider captures light beams successively on four retina layers of receptors, which lie behind each other (in contrast, the human retina is arranged in only one plane). This structure allows not only a larger number of photoreceptors in a confined area but also enables color vision, as the light is split into different colours (chromatic aberration) by the lens system. Different wavelengths of light thus come into focus at different distances, which correspond to the positions of the retina´s layers. While salticids discern green (layer 1 – ~580 nm, layer 2 – ~520-540 nm), blue (layer 3 – ~480-500 nm) and ultraviolet (layer 4 – ~360 nm) using their principal eyes, it is only the two rearmost layers (layers 1 and 2) which allow shape and form detection due to their close receptor spacing.

As in human eyes, there is a central region in layer 1 called the “fovea”, where the inter-receptor spacing was measured to about 1 μm. This was found to be optimal, as the telephoto optical system provides images precise enough to be sampled in this resolution, but any closer spacing would reduce the retina´s sampling quality due to quantum-level interference between adjacent receptors. Equipped with such eyes, Portia exceeds any insect by far when it comes to visual acuity: While the dragonfly Sympetrum striolatus has the highest acuity known for insects (0.4°), the acuity of Portia is ten times higher (0.04°) with much smaller eyes. The human eye with 0.007° acuity is only five times better than Portia´s. With such visual precision, Portia would be technically able to discriminate two objects which are 0.12 mm apart from a distance of 200 mm. The spatial acuity of other salticid eyes is usually not far behind that of Portia.[2][3][4]
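The acuity figures above can be related to object distances with basic trigonometry. The following is a minimal sketch, not a model of the spider's optics: the function names are illustrative, and only the angular acuities and the 200 mm viewing distance are taken from the text.

```python
import math

def min_separation_mm(acuity_deg: float, distance_mm: float) -> float:
    """Smallest separation of two points that subtends the given angle."""
    return distance_mm * math.tan(math.radians(acuity_deg))

def subtended_angle_deg(separation_mm: float, distance_mm: float) -> float:
    """Angle under which a separation is seen from a given distance."""
    return math.degrees(math.atan(separation_mm / distance_mm))

# Angular acuities quoted in the text (degrees).
ACUITY_DEG = {"Sympetrum (dragonfly)": 0.4, "Portia": 0.04, "human": 0.007}

for name, acuity in ACUITY_DEG.items():
    sep = min_separation_mm(acuity, 200.0)
    print(f"{name}: about {sep:.3f} mm resolvable at 200 mm")

angle = subtended_angle_deg(0.12, 200.0)
print(f"0.12 mm at 200 mm subtends about {angle:.3f} degrees")
```

Two objects 0.12 mm apart at 200 mm subtend roughly 0.034°, which is of the same order as the 0.04° acuity quoted for Portia.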

Principal eye retina movements

Such spectacular visual abilities come at a price in animals as small as jumping spiders: the retina in each of Portia's principal eyes has a field of view of only 2-5°, while its fovea covers only 0.6°. This results from the principal retinae having elongated, boomerang-like shapes which span about 20° vertically and only 1° horizontally, corresponding to about six receptor rows. This severe limitation is compensated for by sweeping the eye tube over the whole image of the scene using eye muscles, of which jumping spiders have six. These are attached to the outside of the principal eye tube and allow the same three degrees of freedom (horizontal, vertical, rotation) as in human eyes. Principal retinae can move by as much as 50° horizontally and vertically and rotate about the optical axis (torsion) by a similar amount.

Spiders making sophisticated use of visual cues move their principal eyes' retinae either spontaneously, in “saccades” fixating the fovea on a moving visual target (“tracking”), or by “scanning”, which presumably serves pattern recognition. It currently appears that spiders scan a scene sequentially by moving the eye tube in complex patterns, allowing them to process large amounts of visual information despite their very limited brain capacity.

The spontaneous retinal movements, so-called “microsaccades”, are thought to prevent the photoreceptor cells of the anterior-median eyes from adapting to a motionless visual stimulus. Cupiennius spiders, which have four eye muscles (two dorsal and two ventral ones), continuously perform such microsaccades of 2° to 4° in the dorso-median direction, each lasting about 80 ms (when fixed to a holder). The 2-4° of microsaccadic movement closely matches the angle of about 3° between Cupiennius' receptor cells, supporting the idea that its function is to prevent adaptation. In contrast, retinal movements elicited by mechanical stimulation (directing an air puff onto the tarsus of the second walking leg) can be considerably larger than the spontaneous ones, with deflections of up to 15°. Such a stimulus increases eye muscle activity from a spontaneous resting level of 12 ± 1 Hz to 80 Hz. However, active retinal movements of the two principal eyes are never triggered simultaneously in such experiments, and the directions of movement of the two eyes are not correlated either. These two mechanisms, spontaneous microsaccades and active “peering” by retinal movement, seemingly allow spiders to follow and analyze stationary visual targets efficiently using only their principal eyes, without reinforcing the saccadic movements by body movements.

However, another factor influences the visual capacities of a spider's eye: the problem of keeping objects at different distances in focus. In human eyes, this is solved by accommodation, i.e. changing the shape of the lens, but salticids take a different approach: the receptors in layer 1 of their retina are arranged on a “staircase” at different distances from the lens. Thus, the image of any object, whether a few centimeters or some meters in front of the eye, will be in focus on some part of the layer-1 staircase. Additionally, the salticid can swing its eye tubes from side to side without moving the corneal lenses and will thus sweep the staircase of each retina across the image formed by the corneal lens, sequentially obtaining a sharp image of the object.

The resulting visual performance is impressive: jumping spiders such as Portia focus accurately on objects at distances from 2 centimeters to infinity, although in practice they see up to about 75 centimeters. The time needed to recognize objects is, however, relatively long (seemingly in the range of 10-20 s) because of the complex scanning process needed to capture high-quality images with such tiny eyes. Due to this limitation, it is very difficult for spiders such as Portia to identify much larger predators quickly enough, making the small spider easy prey for birds, frogs and other predators.[5][6]

Blurry vision for distance estimation

A recent finding surprised researchers: jumping spiders use a technique called blurry vision (image defocus) to estimate the distance to previously recognized prey before jumping. Where humans achieve depth perception using binocular vision and other animals do so by moving their heads around or measuring ultrasound responses, jumping spiders perform this task within their principal eyes. As in other jumping spider species, the principal eyes of Hasarius adansoni feature four retinal layers, of which the two bottom ones contain photocells responding to green light. However, green light will only ever focus sharply on the bottom one, layer 1, due to its distance from the inner lens. Layer 2 lies where blue light would be in focus, but its photoreceptor cells are not sensitive to blue and receive a fuzzy green image instead. Interestingly, the amount of blur depends on the distance of an object from the spider's eye: the closer it is, the more out of focus it will appear on the second retina layer. At the same time, layer 1 always receives a sharp image due to its staircase structure. Jumping spiders are thus able to estimate depth using a single unmoving eye by comparing the images of the two bottom retina layers. This was confirmed by letting spiders jump at prey in an arena flooded with green light versus red light of equal brightness. Without the ability to use the green retina layers properly, jumping spiders repeatedly failed to judge distance accurately and missed their jump.
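The relation between object distance and blur on layer 2 can be illustrated with a thin-lens model. This is only a sketch under strong assumptions: a single ideal lens, and hypothetical values for the focal length, aperture and position of layer 2 (none of them measured values for Hasarius adansoni). It shows that blur grows as the object approaches and that, in this idealized setting, the blur can be inverted to recover distance.

```python
def image_distance(f_mm: float, object_mm: float) -> float:
    """Thin-lens equation 1/f = 1/u + 1/v, solved for the image distance v."""
    return 1.0 / (1.0 / f_mm - 1.0 / object_mm)

# Hypothetical optics: focal length, aperture diameter, lens-to-layer-2 distance.
F_MM, APERTURE_MM, LAYER2_MM = 0.5, 0.3, 0.5

def blur_on_layer2(object_mm: float) -> float:
    """Diameter of the blur circle on layer 2, which sits in front of the focus."""
    v = image_distance(F_MM, object_mm)
    return APERTURE_MM * (v - LAYER2_MM) / v

def distance_from_blur(blur_mm: float) -> float:
    """Invert the blur model to recover the object distance."""
    v = LAYER2_MM / (1.0 - blur_mm / APERTURE_MM)
    return 1.0 / (1.0 / F_MM - 1.0 / v)

for d in (20.0, 50.0, 200.0):
    b = blur_on_layer2(d)
    print(f"object at {d:5.0f} mm -> blur {b:.5f} mm -> recovered {distance_from_blur(b):.1f} mm")
```

In this toy model a closer object produces a larger blur circle, matching the qualitative statement above. How the spider's nervous system actually compares the two layers is not specified here.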

Secondary eyes

In contrast to the principal eyes, which are responsible for object analysis and discrimination, a spider's secondary eyes act as motion detectors and therefore do not feature eye muscles to analyze a scene more extensively. Depending on their arrangement on the spider's carapace, secondary eyes give the animal panoramic vision, detecting moving objects almost 360° around its body. The anterior and posterior lateral eyes (i.e. secondary eyes) feature only a single type of visual cell, with a maximum spectral sensitivity for green light of ~535-540 nm wavelength. The number and arrangement of secondary eyes differ significantly between or even within spider families, as does their structure: large secondary eyes can contain several thousand rhabdomeres (the light-sensitive parts of the retina) and support hunters or nocturnal spiders with their high sensitivity to light, while small secondary eyes contain at most a few hundred rhabdomeres and provide only basic movement detection. Unlike the principal eyes, which are everted (the rhabdomeres point towards the light), the secondary eyes of a spider are inverted, i.e. their rhabdomeres point away from the light, as is the case in vertebrate eyes such as the human eye. The spatial resolution of the secondary eyes, e.g. in the extensively studied Cupiennius salei, is greatest in the horizontal direction, enabling the spider to analyse horizontal movements well even with the secondary eyes, while vertical movement may not be especially important when living in a “flat world”.

The reaction time of jumping spiders' lateral eyes is comparatively slow and amounts to 80-120 ms, measured with a 3°-sized (inter-receptor angle) square stimulus travelling past the animal's eyes. The minimum distances the stimulus has to travel before the spider reacts are 0.1° at a stimulus velocity of 1°/s, 1° at 9°/s and 2.5° at 27°/s. This means that a jumping spider's visual system detects motion even if an object travels only a tenth of the secondary eyes' inter-receptor angle at slow speed. If the stimulus is made even smaller, to a size of only 0.5°, responses occur only after long delays, indicating that such stimuli lie at the limit of the spider's perceivable motion.
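The travel distances and velocities above imply a travel time for each condition, which can be compared with the quoted reaction time. A minimal sketch using only the numbers from the text (the variable and function names are illustrative):

```python
# (stimulus velocity in deg/s, minimum travel distance in deg), from the text
THRESHOLDS = [(1.0, 0.1), (9.0, 1.0), (27.0, 2.5)]

def travel_time_ms(travel_deg: float, velocity_deg_per_s: float) -> float:
    """Time the stimulus needs to cover the minimum travel distance."""
    return 1000.0 * travel_deg / velocity_deg_per_s

for velocity, travel in THRESHOLDS:
    t = travel_time_ms(travel, velocity)
    print(f"{velocity:4.0f} deg/s: {travel} deg covered in about {t:.0f} ms")
```

All three travel times fall inside the 80-120 ms range, so the minimum travel distances are consistent with a roughly constant reaction time across stimulus speeds.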

Secondary eyes of (night-active) spiders usually feature a tapetum behind the rhabdomeres, which is a layer of crystals reflecting light back to the receptors to increase visual sensitivity. This allows night-hunting spiders to have eyes with an aperture as large as f/0.58 enabling them to capture visual information even in ultra-low-light conditions. Secondary eyes containing a tapetum thus easily reveal a spider´s location at night when illuminated e.g. by a flashlight.[7][8]
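To put the aperture of f/0.58 into perspective: for a fixed scene, the light reaching the receptors per unit area scales with the inverse square of the f-number. The sketch below compares f/0.58 with a hypothetical f/2.0 reference lens. The reference value is an assumption chosen for illustration, not a figure from the text.

```python
def relative_light(f_number: float, reference_f_number: float) -> float:
    """Light-gathering ratio of two lenses, proportional to 1 / f_number**2."""
    return (reference_f_number / f_number) ** 2

ratio = relative_light(0.58, 2.0)  # 2.0 is a hypothetical comparison lens
print(f"f/0.58 collects about {ratio:.1f} times more light than f/2.0")
```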

Central nervous system and visual processing in the brain

As elsewhere in neuroscience, we still know very little about a spider's central nervous system (CNS), especially regarding its functioning in visually controlled behavior. Of all spiders, the CNS of Cupiennius has been studied most extensively, with a focus mainly on CNS structure. To date, little is known about the electrophysiological properties of central neurons in Cupiennius, and even less about those of other spiders.

The structure of a spider's nervous system is closely related to its body's subdivisions, but instead of being spread all over the body, the nervous tissue is enormously concentrated and centralized. The CNS is made up of two paired, rather simple nerve cell clusters (ganglia), which are connected to the spider's muscles and sensory systems by nerves. The brain is formed by fusion of these ganglia in the head segments ahead of and behind the mouth and largely fills the prosoma with nervous tissue, while no ganglia exist in the abdomen. The spider's brain receives direct inputs from only one sensory system, the eyes, unlike the brains of insects and crustaceans. The eight optic nerves enter the brain from the front and their signals are processed in two optic lobes in the anterior region of the brain. When a spider's behavior is especially dependent on vision, as in the case of the jumping spider, the optic ganglia contribute up to 31% of the brain's volume, indicating that a large part of the brain is devoted to vision. This share still amounts to 20% for Cupiennius, whereas other spiders like Nephila and Ephebopus come in at only 2%.

The distinction between principal and secondary eyes persists in the brain. Both types of eyes have their own visual pathway with two separate neuropil regions fulfilling distinct tasks. Thus spiders evidently process the visual information provided by their two eye types in parallel, with the secondary eyes being specialized for detecting horizontal movement of objects and the principal eyes being used for the detection of shape and texture.

Two visual systems in one brain

While principal and secondary eyesight seem to be processed separately in spiders' brains, surprising interrelations between the two visual systems are known as well. In visual experiments, the principal eye muscle activity of Cupiennius was measured while covering either its principal or its secondary eyes. When the animals were stimulated in a white arena with short sequences of moving black bars, the principal eyes moved involuntarily whenever a secondary eye detected motion within its visual field. This increase in principal eye muscle activity, compared to the unstimulated condition, did not change when the principal eyes were covered with black paint, but stopped when the secondary eyes were masked. This indicates that only the input received from the secondary eyes controls principal eye muscle activity. Also, a spider's principal eyes do not seem to be involved in motion detection, which is the responsibility of the secondary eyes alone.

Other experiments using dual-channel telemetric registration of the eye muscle activities of Cupiennius have shown that the spider actively peers into the walking direction: The ipsilateral retina of the principal eyes was measured to shift with respect to the walking direction before, during and after a turn, while the contralateral retina remained in its resting position. This happened independently from the actual light conditions, suggesting a “voluntary” peering initiated by the spider´s brain.

Pattern recognition using principal eyes

Recognition of shape and form by jumping spiders is believed to be accomplished through a scanning process of the visual field, which consists of a complex set of rotations (torsional movements) and translations of the anterior-median eyes´ retinae. As described in the section “Principal eye retina movements”, a spider´s retinae are narrow and shaped like boomerangs, which can be matched with straight features by sweeping over the visual scene. When investigating a novel target, the eyes scan it in a stereotyped way: By moving slowly from side to side at speeds of 3-10° per second and rotating through ± 25°, horizontal and torsional retina movement allows the detection of differently positioned and rotated lines. This method can be understood as template matching where the template has elongated shape and produces a strong neural response whenever the retina matches a straight feature in the scene. This identifies a straight line with little or no further processing necessary.
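The template-matching idea can be sketched in a few lines. The code below is an illustration under simplifying assumptions, not a model of the spider's retina: the scene is a small binary image, the “retina” is an elongated, roughly vertical window, and the response is the fraction of the window that overlaps bright pixels. The window is swept from side to side and rotated through ± 25° as described above. All function names and the image size are hypothetical.

```python
import math

def line_pixel(cx, cy, s, angle_deg):
    """Pixel at signed distance s along a line tilted angle_deg from vertical."""
    t = math.radians(angle_deg)
    return int(round(cx + s * math.sin(t))), int(round(cy + s * math.cos(t)))

def make_scene(size, angle_deg):
    """Set of bright pixels forming one straight line through the centre."""
    c = size // 2
    pixels = set()
    for k in range(-size, size + 1):
        x, y = line_pixel(c, c, k / 2, angle_deg)
        if 0 <= x < size and 0 <= y < size:
            pixels.add((x, y))
    return pixels

def template_response(bright, cx, cy, angle_deg, half_len=8):
    """Fraction of the elongated template that covers bright pixels."""
    points = [line_pixel(cx, cy, s, angle_deg) for s in range(-half_len, half_len + 1)]
    return sum(p in bright for p in points) / len(points)

def scan(bright, size, angles=range(-25, 26, 5)):
    """Sweep the template horizontally and rotate it; return the best match."""
    cy = size // 2
    best = (0.0, None, None)
    for cx in range(size):
        for angle in angles:
            r = template_response(bright, cx, cy, angle)
            if r > best[0]:
                best = (r, cx, angle)
    return best

scene = make_scene(21, 15)
print(scan(scene, 21))  # (response, horizontal position, rotation in degrees)
```

The response peaks only where both position and rotation of the window match the line, which is the sense in which a strong response identifies a straight feature without further processing.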

A computer vision algorithm that treats straight line detection as an optimization problem (da Costa, da F. Costa) was inspired by the jumping spider's visual system and uses the same approach of scanning a scene sequentially using template matching. While the well-known Hough Transform allows robust detection of straight visual features in an image, its efficiency is limited by the need to calculate a large part or even the whole of the parameter space while searching for lines. In contrast, the alternative approach suggested by salticid visual systems searches the visual space using a linear window, which allows adaptive search schemes during the straight line search without the need to calculate the parameter space systematically. Solving straight line detection in this way also allows it to be understood as an optimization problem, which makes efficient processing by computers possible. While appropriate parameters controlling the annealing-based scanning have to be found experimentally, the approach following the jumping spider's way of detecting straight lines was reported to be very effective, especially with properly set parameters.[9]
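To illustrate what an annealing-based search with a linear window might look like, the sketch below applies generic simulated annealing to the position and rotation of a line-shaped window. It is not the published algorithm: the scoring function, the cooling schedule, the step sizes and all parameter values are assumptions chosen for illustration only.

```python
import math
import random

def line_points(cx, cy, angle_deg, half_len):
    """Pixels covered by a linear window tilted angle_deg from vertical."""
    t = math.radians(angle_deg)
    return [(int(round(cx + s * math.sin(t))), int(round(cy + s * math.cos(t))))
            for s in range(-half_len, half_len + 1)]

def response(bright, cx, angle_deg, cy=10, half_len=8):
    """Objective: fraction of the window that covers bright pixels."""
    points = line_points(cx, cy, angle_deg, half_len)
    return sum(p in bright for p in points) / len(points)

def anneal(bright, size=21, steps=2000, t_start=0.5, t_end=0.01, seed=0):
    """Generic simulated annealing over (position, rotation) of the window."""
    rng = random.Random(seed)
    state = (rng.randrange(size), rng.randrange(-25, 26))
    score = response(bright, *state)
    best_state, best_score = state, score
    for i in range(steps):
        temp = t_start * (t_end / t_start) ** (i / steps)  # geometric cooling
        cx = min(size - 1, max(0, state[0] + rng.choice((-1, 0, 1))))
        angle = min(25, max(-25, state[1] + rng.choice((-1, 0, 1))))
        candidate = response(bright, cx, angle)
        delta = candidate - score
        # Always accept improvements; accept worse moves with falling probability.
        if delta >= 0 or rng.random() < math.exp(delta / temp):
            state, score = (cx, angle), candidate
            if score > best_score:
                best_state, best_score = state, score
    return best_state, best_score

# Hypothetical test scene: a single line tilted 15 degrees from vertical.
bright = set(line_points(10, 10, 15, 10))
print(anneal(bright))
```

Unlike an exhaustive sweep or a Hough accumulator, this search evaluates only the window positions it visits. Whether it finds the line depends on the parameters, which is in line with the remark above that suitable annealing parameters have to be found experimentally.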

Visually-guided behavior

Discernment of visual targets

The ability to discern slightly different visual targets has been shown for Cupiennius salei, although this species relies mainly on its mechanosensory systems during prey catching or mating behavior. When two targets are presented to the spider at a distance of 2 m, its walking path depends on their visual appearance: having to choose between two identical targets such as vertical bars, Cupiennius shows no preference. However, the animal strongly prefers a vertical bar to a sloping bar or a V-shaped target.

The discrimination of different targets has been shown to be only possible with the principal eyes uncovered, while the spider is able to detect the targets using any of the eyes. This suggests that many spiders´ anterior-lateral (secondary) eyes are capable of much more than simply object movement detection. With all eyes covered, the spider exhibits totally undirected walking paths.

Placing Cupiennius in total darkness however results not only in undirected walks but also elicits a change of gait: Instead of using all eight legs the spider will only walk with six and employ the first legs as antennae, comparable to a blind person´s cane. In order to feel the surroundings the extended forelegs are moved up and down as well as sideways. This is specific to the first leg pair only, influenced solely by the visual input when the normal room light is switched to the invisible infrared light.

Vision-based decision making in jumping spiders

The behavior of jumping spiders after having detected movement with their eyes depends on three factors: the target's size, speed and distance. If the target is more than twice the spider's size, it is not approached, and the spider tries to escape if it comes towards it. If the target is of adequate size, its speed is visually analyzed using the secondary eyes. Fast-moving targets with a speed of more than 4°/s are chased by the jumping spider, guided by its anterior-lateral eyes. Slower objects are carefully approached and analyzed with the anterior-median (i.e. principal) eyes to determine whether they are prey or another spider of the same species. This is seemingly achieved by applying the straight line detection described above to find out whether a visual target features legs or not. While jumping spiders have been shown to approach potential prey of appropriate characteristics as long as it moves, males are pickier in deciding whether their current counterpart might be a potential mate.
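The decision rules described above can be written down as a small decision function. This is a deliberately simplified sketch: the thresholds (twice the spider's size, 4°/s) come from the text, while the function name, the argument names and the returned labels are illustrative assumptions. The role of target distance is not modelled.

```python
def salticid_response(relative_size: float, speed_deg_per_s: float,
                      approaching: bool = False) -> str:
    """Simplified reaction of a jumping spider to a detected moving target.

    relative_size: target size divided by the spider's own size.
    """
    if relative_size > 2.0:
        # Too large: never approached; escape if it comes closer.
        return "escape" if approaching else "do not approach"
    if speed_deg_per_s > 4.0:
        return "chase, guided by anterior-lateral eyes"
    return "approach carefully and inspect with principal eyes"

print(salticid_response(3.0, 10.0, approaching=True))
print(salticid_response(1.0, 10.0))
print(salticid_response(1.0, 2.0))
```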

Potential mate detection

Experiments have shown that drawings of a central dot with leg-like appendages on the sides elicit courtship displays, suggesting that jumping spiders use visual feature extraction to detect the presence and orientation of linear structures in the target. Additionally, a spider's behavior towards a presumed conspecific depends on factors such as the sex and maturity of both spiders and whether it is mating time. Female wolf spiders, Schizocosa ocreata, even discern asymmetries in male secondary sexual characters when choosing their mate, possibly to avoid developmental instability in their offspring. Conspicuous tufts of bristles on a male's forelegs, which are used for visual courtship signaling, appear to influence female mate choice, and asymmetry of these body parts resulting from leg loss and regeneration apparently reduces female receptivity to such males.[10]

Secondary eye-guided hunting

A jumping spider's stalking behavior when hunting insect prey is comparable to a cat stalking birds. If something moves within the visual field of the secondary eyes, the spider initiates a turn to bring the larger, forward-facing pair of principal eyes into position for classifying the object by its shape as mate, rival or prey. Even very small, low-contrast dot stimuli moving at slow or fast speeds elicit such orientation behavior. Like Cupiennius, jumping spiders are also able to use their secondary eyes for more sophisticated tasks than just motion detection: presenting visual prey cues to salticids with both principal eyes covered, so that only visual information from the secondary eyes is available, results in the animal exhibiting complete hunting sequences. This suggests that the anterior lateral eyes of jumping spiders may be the most versatile components of their visual system. Besides detecting motion, the secondary eyes evidently also have a spatial acuity which is good enough to direct complete visually-guided hunting sequences.

Prey “face recognition”

Visual cues also play an important role for jumping spiders (salticids) when discriminating between salticid and non-salticid prey using principal eyesight. Here, a salticid prey's large principal eyes provide critical cues, to which the jumping spider Portia fimbriata reacts by exhibiting cryptic stalking tactics before attacking (walking very slowly with palps retracted and freezing when faced). This behavior is only used when the prey is identified as a salticid. This was exploited in experiments presenting computer-rendered, realistic three-dimensional lures with modified principal eyes to Portia fimbriata. While intact virtual lures resulted in cryptic stalking, lures without principal eyes or with smaller principal eyes than usual (as sketched in the figure on the right) elicited different behavior. Presenting virtual salticid prey with only one anterior-median eye or a regular lure with two enlarged secondary eyes elicited cryptic stalking behavior, suggesting successful recognition of a salticid, while P. fimbriata froze less often when faced by a Cyclops-like lure (a single principal eye centered between the two secondary eyes). Lures with square-edged principal eyes were usually not classified as salticids, indicating that the shape of the principal eyes' edges is an important cue for identifying fellow salticids.[11]

Jumping decisions from visual features

Spiders of the genus Phidippus have been tested in a study for their willingness to cross inhospitable open space by placing visual targets on the other side of a gap. Whether the spider takes the risk of crossing open ground was found to depend mainly on factors such as the distance to the target, the target's size relative to its distance, and the target's color and shape. In independent test runs, the spider moved to tall, distant targets as often as to short, close targets when both objects appeared equally sized on the spider's retina. When given the choice of moving to either white or green grass-like targets, the spiders consistently chose the green target irrespective of its contrast with the background, indicating that they are able to use color discernment in such situations.[12]
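That a tall, distant target and a short, close target can look equally large follows from the geometry of angular size. A minimal sketch with hypothetical target heights and distances (no values from the study are used):

```python
import math

def angular_size_deg(height: float, distance: float) -> float:
    """Visual angle subtended by an object of given height at given distance."""
    return math.degrees(2.0 * math.atan(height / (2.0 * distance)))

# Hypothetical targets (same length unit for height and distance).
short_close = angular_size_deg(height=10.0, distance=20.0)
tall_distant = angular_size_deg(height=20.0, distance=40.0)
print(f"short, close: {short_close:.2f} deg; tall, distant: {tall_distant:.2f} deg")
```

Doubling both height and distance leaves the visual angle unchanged, so the two targets project images of equal size onto the retina.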

Identifying microhabitat traits by visual cues

Presented with manipulated real plants and photos of plants, Psecas chapoda (a bromeliad-dwelling salticid spider) is able to detect a favorable microhabitat by visually analyzing architectural features of the host plant's leaves and rosette. By using black-and-white photos, any potential influence of other cues, such as color and smell, on host plant selection by the spider could be excluded in a study, leaving only shape and form as discerning characteristics. Even when having to decide solely from photographs, Psecas chapoda consistently preferred rosette-shaped plants (Agavaceae) with long, narrow leaves over plants of different appearance, which suggests that some spider species are able to evaluate and distinguish the physical structure of microhabitats on the basis of shape cues from plant traits alone.[13]

Johnston's Organs (Antennae in Bees and Butterflies)

Butterflies and moths keep their balance with Johnston's organ, an organ at the base of the antennae that is responsible for maintaining the animal's sense of balance and orientation, especially during flight.

Introduction

For some insects, e.g. Drosophila, the perception of sound is important for mating behavior [14]. Hearing in Insecta and Crustacea is mediated by chordotonal organs: mechanoreceptors which respond to mechanical deformation [15]. These chordotonal organs are widely distributed throughout the insect's body and differ in their function: proprioceptors are sensitive to forces generated by the insect itself, and exteroreceptors to external forces. These receptors allow detection of sound via the vibrations of particles when sound is transmitted through a medium such as air or water. Far-field sound refers to the situation in which air particles transmit the vibration as a pressure change over a long distance from the source. Near-field sound refers to sound close to the source, where the velocity of the particles can move lightweight structures. Some insects have visible hearing organs, such as the ears of noctuoid moths, whereas other insects lack a visible auditory organ but are still able to register sound. In these insects the Johnston's organ plays an important role in hearing.

Johnston's organ

The Johnston's organ (JO) is a chordotonal organ present in most insects. Christopher Johnston was the first to describe this organ, in mosquitoes, hence the name Johnston's organ [16] (Quarterly Journal of Microscopical Science, 1855, Vols. s1-3, 10, pp. 97-102). The organ is located in the stem of the insect's antenna. It has developed the highest degree of complexity in the Diptera (two-winged flies), for which hearing is of particular importance [15]. The JO consists of organized basic sensory units called scolopidia (SP). The number of scolopidia varies among species. The JO has various mechanosensory functions, such as the detection of touch, gravity, wind and sound. For example, in honeybees the JO (≈ 300 SPs) is responsible for detecting sound coming from another “dancing” honeybee [17]. In male mosquitoes (≈ 7000 SPs) the JO is used to detect and locate female flight sound for mating behavior [18]. The antenna of these insects is specialized to capture near-field sound. It acts as a physical mechanotransducer.

Anatomy of the Johnston’s Organ

A typical insect antenna has three basic segments: the scape (base), the pedicel (stem) and the flagellum [19]. Some insects have a bristle on the third segment called an arista. Figure 1 shows the Drosophila antenna. In Drosophila, the antennal segment a3 fits loosely into the socket on segment a2 and can rotate when sound energy is absorbed [20]. This leads to stretching or compression of the JO neurons of the scolopidia. In Diptera the JO scolopidia are located in the second antennal segment a2, the pedicel (Yack, 2004). The JO is not only associated with sound perception (as an exteroreceptor); it can also function as a proprioceptor, giving information on the orientation and position of the flagellum relative to the pedicel [21].

A scolopidium is the basic sensory unit of the JO. A scolopidium comprises four cell types [15]: (1) one or more bipolar sensory neurons, each with a distal dendrite; (2) a scolopale cell enveloping the dendrite; (3) one or more attachment cells associated with the distal region of the scolopale cell; (4) one or more glial cells surrounding the proximal region of the sensory neuron cell body. The scolopale cell surrounds the sensory dendrite (cilium) and together with it forms the scolopale lumen (receptor lymph cavity). The scolopale lumen is tightly sealed. The cavity is filled with a lymph which is thought to have a high potassium content and a low sodium content, thus closely resembling the endolymph in the cochlea of mammals. The cap cell produces an extracellular cap, which envelops the cilia tips and connects them to the third antennal segment a3 [22]. Scolopidia are classified according to several criteria.

Type 1 and Type 2 scolopidia differ in the type of ciliary segment in the sensory cell. In Type 1 the cilium is of uniform diameter, except for a distal dilation at around 2/3 of its length. The cilium inserts into a cap rather than into a tube. In Type 2 the ciliary segment increases in diameter into a distal dilation, which can be densely packed with microtubules. The distal part ends in a tube. Mononematic and amphinematic scolopidia differ in the extracellular structure associated with the scolopale cell and the dendritic cilium. In mononematic scolopidia the dendritic tip is inserted into a cap-shaped, electron-dense structure. In amphinematic scolopidia the tip is enveloped by an electron-dense tube. Monodynal and heterodynal scolopidia are distinguished by their number of sensory neurons. Monodynal scolopidia have a single sensory cell and heterodynal ones have more than one.

JO studied in the fruit fly (Drosophila melanogaster)

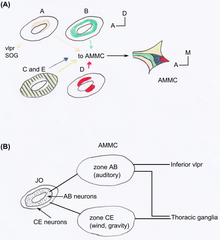

The JO in Drosophila consists of an array of approximately 227 scolopidia located between the a2/a3 joint and the a2 cuticle (a type of outer tissue layer) [23]. The scolopidia in Drosophila are mononematic [20]. Most are heterodynal and contain two or three neurons, so that the JO comprises around 480 neurons. It is the largest mechanosensory organ of the fruit fly [14]. Perception of male Drosophila courtship songs (produced by their wings) by the JO makes females reduce locomotion and makes males chase each other, forming courtship chains [24]. The JO is not only important for perceiving sound, but also for sensing gravity [25] and wind [26]. Using GAL4 enhancer trap lines in the JO, it was shown that the JO neurons of flies can be categorized anatomically into five subgroups, A-E [23]. Each has a different target area in the antennal mechanosensory and motor centre (AMMC) in the brain (see Figure 2). Kamikouchi et al. showed that the different subgroups are specialized for distinct types of antennal movement [14]. Different groups are used for the sound and gravity responses.

Neural activities in the JO

To study the activity of JO neurons, it is possible to observe intracellular calcium signals in the neurons caused by antennal movement [14]. For this, the flies have to be immobilized (e.g. by mounting them on a coverslip and immobilizing the second antennal segment to prevent muscle-caused movements). The antenna can be actuated mechanically using an electrostatic force. The antennal receiver vibrates when sound energy is absorbed and deflects backwards and forwards when the fly walks. Deflecting and vibrating the antenna yield different activity patterns in the JO neurons: deflecting the receiver backwards with a constant force gives negative signals in the anterior region and positive ones in the posterior region of the JO. Forward deflection produces the opposite pattern. Courtship songs (pulse song with a dominant frequency of ≈ 200 Hz) evoke broadly distributed signals. The opposite patterns for forward and backward deflection reflect the opposing arrangement of the JO neurons. Their dendrites connect to anatomically distinct sides of the pedicel: the anterior and posterior sides of the receiver. Deflecting the receiver forwards stretches the JO neurons in the anterior region and compresses the neurons in the posterior one. From this it can be concluded that JO neurons are activated (i.e. depolarized) by stretch and deactivated (i.e. hyperpolarized) by compression.
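The stretch and compression logic described above can be captured in a tiny sign model. This is only a qualitative sketch: real calcium signals are graded, and the function name and the ±1 coding are illustrative assumptions.

```python
# Which JO region is stretched by each direction of receiver deflection (from the text).
STRETCHED_REGION = {"forward": "anterior", "backward": "posterior"}

def jo_signal(region: str, deflection: str) -> int:
    """+1 if neurons in the region are stretched (activated), -1 if compressed."""
    return 1 if STRETCHED_REGION[deflection] == region else -1

for deflection in ("forward", "backward"):
    for region in ("anterior", "posterior"):
        print(f"{deflection:8s} deflection, {region:9s} region: {jo_signal(region, deflection):+d}")
```

Backward deflection yields a negative anterior and a positive posterior signal, and forward deflection the reverse, reproducing the opposite activity patterns reported above.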

Different JO neurons

A JO neuron usually targets only one zone of the AMMC, and neurons targeting the same zone are located in characteristic spatial regions within JO [23]. Similar projecting neurons are organized into concentric rings or paired clusters (see Figure 2A).

Vibration sensitive neurons for sound perception

Neurons of subgroups A and B (AB) were activated maximally by receiver vibrations between 19 Hz and 952 Hz. This response was frequency dependent: subgroup B showed a larger response to low-frequency vibrations, whereas subgroup A accounts for the high-frequency responses.

Deflection sensitive neurons for gravity and wind perception

Neurons of subgroups C and E (CE) showed maximal activity for static receiver deflection. These neurons therefore provide information about the direction of a force. Their threshold for displacement of the arista is larger than that of the AB neurons [26]. Nevertheless, CE neurons can respond to small displacements of the arista such as those caused by gravity, which displaces the tip of the arista by 1 µm (see S1 of [14]). They also respond to larger displacements caused by air flow (e.g. wind) [26]. Zone C and zone E neurons showed distinct sensitivities to the direction of air flow, which deflects the arista in different directions. Air flow applied to the front of the head resulted in strong activation in zone E and little activation in zone C. Air flow applied from the rear produced the opposite result. Air flow applied to the side of the head yielded ipsilateral activation in zone C and contralateral activation in zone E. These different activation patterns allow Drosophila to sense the direction from which the wind comes. It is not known whether the same CE neurons mediate both wind and gravity detection, or whether there are more sensitive CE neurons for gravity detection and less sensitive CE neurons for wind detection [14].

Evidence that wild-type Drosophila melanogaster can perceive gravity is that the flies tend to move upwards, against the force vector of gravitation (negative gravitaxis), after being shaken in a test tube. When the antennal aristae were ablated, this negative gravitaxis vanished, but phototaxis (movement towards a light source) did not. When the second segment, where the JO is located, was also removed, negative gravitaxis reappeared. This shows that when the JO is lost, Drosophila can still perceive gravitational force through other organs, for example mechanoreceptors on the neck or legs. Such receptors were shown to be responsible for gravity sensing in other insect species [27].
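The direction-dependent activation of zones C and E reported in this section can be summarized as a simple lookup. The sketch below is a deliberately crude illustration: it assumes that the activity of each zone has already been reduced to "strongly active" or "not strongly active", whereas the measured responses are graded. The function name `wind_direction` and its arguments are hypothetical.

```python
def wind_direction(c_left, e_left, c_right, e_right):
    """Guess the wind direction from strongly active zones.

    Each argument is a bool: True if that zone (C or E) of the left or
    right JO is strongly active. Binarizing the activity is an
    assumption of this sketch, not a result from the literature.
    """
    table = {
        # Wind from the front: zone E active, zone C nearly silent.
        (False, True, False, True): "front",
        # Wind from the rear: the opposite pattern.
        (True, False, True, False): "rear",
        # Wind from the left: C ipsilateral (left), E contralateral (right).
        (True, False, False, True): "left",
        # Wind from the right: C ipsilateral (right), E contralateral (left).
        (False, True, True, False): "right",
    }
    return table.get((c_left, e_left, c_right, e_right), "undetermined")


print(wind_direction(False, True, False, True))   # front
print(wind_direction(True, False, False, True))   # left
```

Any activation pattern outside the four cases described in the text is reported as "undetermined" rather than guessed.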

Silencing specific neurons

It is possible to selectively silence subgroups of JO neurons using tetanus toxin combined with subgroup-specific GAL4 drivers and tubulin-GAL80, a temperature-sensitive GAL4 blocker. With this approach it was confirmed that neurons of subgroups CE are responsible for gravitaxis behavior. Eliminating the neurons of subgroups CE did not impair hearing [26]. Silencing subgroup B impaired the male’s response to courtship songs, whereas silencing subgroups CE or ACE did not [14]. Since subgroup A was found to be involved in hearing (see above), this result was unexpected. A different experiment, which investigated sound-evoked compound action potentials (the sum of action potentials), led to the conclusion that subgroup A is required for detecting nanometer-range receiver vibrations such as those imposed by the faint songs of courting males.

As mentioned above, the anatomically different subgroups of JO neurons have different functions [14]. The neurons all attach to the same antennal receiver, but their connection sites lie on opposing sides of it. Thus, for forward deflection for example, some neurons are stretched whereas others are compressed, which yields different response characteristics (opposing calcium signals). The difference between vibration-sensitive and deflection-sensitive neurons may arise from distinct molecular machineries for transduction (i.e. adapting or non-adapting channels, NompC-dependent or not). Sound-sensitive neurons express the mechanotransducer channel NompC (no mechanoreceptor potential C, also known as TRPN1), whereas subgroups CE are independent of NompC [14]. In addition, JO neurons of subgroups AB transduce dynamic receiver vibrations but adapt quickly to static receiver deflection (i.e. they respond phasically) [28]. Neurons of subgroups CE showed a sustained calcium signal during static deflection (i.e. they respond tonically). These two distinct behaviors show that there are transduction channels with distinct adaptation characteristics, as is also known for the mammalian cochlea and mammalian skin (i.e. tonically activated Merkel cells and rapidly adapting Meissner’s corpuscles) [26].
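The distinction between phasic (adapting) and tonic (non-adapting) responses can be illustrated with a minimal simulation. This is a sketch under stated assumptions, not a model of the actual transduction channels: the tonic response simply follows the deflection, the phasic response is the deflection minus a first-order adaptation state, and the time step `dt` and time constant `tau` are arbitrary values chosen for illustration.

```python
def simulate_responses(stimulus, dt=0.01, tau=0.1):
    """Return (tonic, phasic) responses to a list of deflection values.

    tonic:  follows the deflection itself (non-adapting).
    phasic: deflection minus an adaptation state that tracks the
            deflection with time constant tau (adapting).
    dt and tau (in seconds) are assumed values, not measured ones.
    """
    tonic, phasic = [], []
    adaptation = 0.0
    alpha = dt / tau
    for s in stimulus:
        tonic.append(s)
        phasic.append(s - adaptation)
        # First-order update: the adaptation state relaxes towards s.
        adaptation += alpha * (s - adaptation)
    return tonic, phasic


# Static step deflection: 10 samples at rest, then a sustained deflection.
step = [0.0] * 10 + [1.0] * 200
tonic, phasic = simulate_responses(step)
print("onset:", tonic[10], phasic[10])
print("late: ", tonic[-1], round(phasic[-1], 6))
```

With a static step, the tonic trace stays at the deflected level for as long as the deflection lasts, while the phasic trace peaks at the onset and decays back towards zero, mirroring the sustained CE and fast-adapting AB behavior described above.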

Differences in gravitation and sound perception in the brain

Neurons of subgroups A and B target zones of the primary auditory centre in the AMMC on the one hand and the inferior part of the ventrolateral protocerebrum (VLP) on the other (see Figure 2B). These zones show many commissural connections among themselves and with the VLP. For neurons of subgroups CE, almost no commissural connections between the target zones were found, nor connections to the VLP. Neurons associated with the zones of subgroups CE descend to or ascend from the thoracic ganglia. This difference between the projections of AB and CE neurons is strongly reminiscent of the separate projections of the auditory and vestibular pathways in mammals [20].

Johnston’s Organ in honeybees


The JO in bees is also located in the pedicel of the antenna and is used to detect near-field sounds [17]. In a hive some bees perform a waggle dance, which is believed to inform conspecifics about the distance, direction and profitability of a food source. Followers have to decode the message of the dance in the darkness of the hive, i.e. visual perception is not involved in this process. Perception of sound is one possible way to obtain the information in the dance. The sound of a dancing bee has a carrier frequency of about 260 Hz and is produced by wing vibrations. Bees have various mechanosensors, such as hairs on the cuticle or bristles on the eyes. Dreller et al. found that the mechanosensors in the JO are responsible for sound perception in bees [17]. Nevertheless, hair sensors could still be involved in detecting other sound sources when the amplitude is too low to vibrate the flagellum. Dreller et al. trained bees to associate sound signals with a sucrose reward. After training, different mechanosensors were disabled in different bees, and the bees’ ability to associate the sound with the reward was tested again. Manipulating the JO led to loss of the learnt skill. Training was possible with a frequency of 265 Hz but also with 10 Hz, which shows that the JO is also involved in low-frequency hearing. Bees with only one antenna made more mistakes but still performed better than bees with both antennae ablated. Having a JO in each of the two antennae could help followers to determine the direction of the dancing bee. Hearing could also be used by bees in other contexts, e.g. to keep a swarming colony together. The waggle dance is decoded not only by auditory perception but also, perhaps even more so, by electric field perception. The JO in bees allows the detection of electric fields [29]. When body parts are rubbed together, bees accumulate electric charge in their cuticle. Insects respond to electric fields, e.g. with modified locomotion (Jackson, 2011).
Surface charge is thought to play a role in pollination, because flowers are usually negatively charged and arriving insects carry a positive surface charge [29]. This could help bees to take up pollen. By training bees to static and modulated electric fields, Greggers et al. showed that bees can perceive electric fields [29]. Dancing bees produce electric fields, which move the flagellum 10 times more strongly than the mechanical stimulus of wing vibrations alone. The vibrations of the flagellum are monitored by the JO, which responds to displacement amplitudes induced by the oscillation of a charged wing. This was demonstrated by recording compound action potential responses from JO axons during electric field stimulation. Electric field reception with the JO does not work without the antennae. Whether other, non-antennal mechanoreceptors are also involved in electric field reception has not been excluded. The results of Greggers et al. suggest that electric fields (and with them the JO) are relevant for social communication in bees.

Importance of JO (and chordotonal organs in general) for research

Chordotonal organs, like the JO, are found only in Insecta and Crustacea [15]. Chordotonal neurons are ciliated cells [30]. Genes that encode proteins needed for functional cilia are expressed in chordotonal neurons, and mutations in the human homologues result in genetic diseases. Knowledge of the mechanisms of ciliogenesis can therefore help to understand and treat human diseases caused by defects in the formation or function of cilia. This is possible because the control of neuronal specification in insects and in vertebrates is based on highly conserved transcription factors, as the following example shows: Atonal (Ato), a proneural transcription factor, specifies chordotonal organ formation. The mouse orthologue Atoh1 is necessary for hair cell development in the cochlea. Mice with mutant Atoh1, which are deaf, can be rescued by the atonal gene of Drosophila. Studying chordotonal organs in insects can thus lead to more insight into mechanosensation and cilia construction. Drosophila is a versatile model for studying chordotonal organs [31]. The fruit fly is easy and inexpensive to culture, produces large numbers of embryos, can be genetically modified in numerous ways and has a short life cycle, which allows several generations to be investigated within a relatively short time. In addition, most of the fundamental biological mechanisms and pathways that control development and survival are conserved between Drosophila and other species, such as humans.

While the human sensory system offers us stunning ways of perceiving our movement and environment, the sensory systems of insects and spiders are no less fascinating. To give just a few examples, spiders have up to eight eyes, and some see almost as sharply as humans; bees "feel the rhythm" when other bees dance in the beehive, and learn from this the location of food sources; mosquitoes hunt their victims by smell. In addition, studies in insects face far fewer ethical or methodological limitations than studies in mammals. Especially in flies, any gene can be targeted with molecular genetic tools (e.g. knocked out or overexpressed), and the system is much more manageable than in humans.

The insect olfactory system

This sensory systems book is mostly about human sensory systems, and it already has a chapter on the olfactory system, so why do we need a chapter on the insect olfactory system? The fruit fly (Drosophila melanogaster), which we will focus on here, is a very important model animal in biology, and a lot of research on sensory systems is done in the fruit fly. The visual and the olfactory systems are both studied intensively, and there are fewer ethical or methodological limitations. With molecular genetic tools, any gene in a fly can be targeted (e.g. knocked out or overexpressed), and the system is much more manageable than in humans. While the insect olfactory system functions quite differently from the human one, it is possible to find common principles. Furthermore, the insect olfactory system inspires engineering in robotics, medicine and many other areas.

The nature of smell


To understand the specifics of odor sensing, one has to be aware that smell is quite different from other stimuli. It differs from light and sound in that it is carried not by waves but by diffusion, air flow and turbulence. Furthermore, while light and sound have only two perceptually relevant characteristics, frequency composition and amplitude, smell comprises a variety of discrete odorants and an even larger number of possible mixtures at different concentrations.

Overview


In insects (but also in most vertebrates) the olfactory system is important for orientation and foraging, but it also has social (nest mate recognition, e.g. in ants) and sexual (mating partner search and selection by pheromones) significance. The main path of the odor information begins at the olfactory sensilla (the insect’s sensory organs, which contain the sensory neurons). In most insects these are found on the antennae, and in the fly they look like small hairs (see Figure). There is a huge variety of antenna types (which are not used only for olfaction) and many different sensillum types.

To understand the general principle, the example of the basiconic sensilla of Drosophila melanogaster should suffice. The odorant molecules pass through slits or pores in the cuticle into the aqueous sensillum lymph. There, some types of odorant molecules are bound to odorant binding proteins and carried towards the dendrites of the olfactory receptor neurons (ORN), while others diffuse through the lymph towards the dendrites. On the membrane of the dendrites there are odorant receptors (OR), which bind the odorant molecules and are responsible for converting the signal into a membrane current. This current propagates through the dendrite to the cell body, where (at the axon hillock) an action potential is generated. The action potential travels along the ORN axon to the antennal lobe (which is analogous to the olfactory bulb in vertebrates), where the ORN make synapses with local interneurons and projection neurons. The antennal lobe is organized into so-called glomeruli. It is not fully understood how they are involved in pattern recognition, but the glomerular activation pattern can provide information about the odors presented to the fly.

Projection neurons project to the lateral horn (where innate odor responses are probably processed) and to the Kenyon cells in the mushroom bodies. The mushroom bodies are a neuropil in the insect brain and are named for their resemblance to mushrooms. There, odors are associated with other sensory modalities and with behavior, which is why the mushroom bodies are an important model system for studying learning and memory.

Odorant reception in ORN
