- Example 1.1

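Consider a map $h$ with domain $\mathbb{R}^2$ and codomain $\mathbb{R}^3$ (fixing

$$B=\left\langle\begin{pmatrix}2\\0\end{pmatrix},\begin{pmatrix}1\\4\end{pmatrix}\right\rangle\quad\text{and}\quad D=\left\langle\begin{pmatrix}1\\0\\0\end{pmatrix},\begin{pmatrix}0\\-2\\0\end{pmatrix},\begin{pmatrix}1\\0\\1\end{pmatrix}\right\rangle$$

as the bases for these spaces) that is determined by this action on the vectors in the domain's basis.

$$\begin{pmatrix}2\\0\end{pmatrix}\mapsto\begin{pmatrix}1\\1\\1\end{pmatrix}\qquad\begin{pmatrix}1\\4\end{pmatrix}\mapsto\begin{pmatrix}1\\2\\0\end{pmatrix}$$

To compute the action of this map on any vector at all from the domain, we first express $h(\vec\beta_1)$ and $h(\vec\beta_2)$ with respect to the codomain's basis:

$$\begin{pmatrix}1\\1\\1\end{pmatrix}=0\begin{pmatrix}1\\0\\0\end{pmatrix}-\frac{1}{2}\begin{pmatrix}0\\-2\\0\end{pmatrix}+1\begin{pmatrix}1\\0\\1\end{pmatrix}\quad\text{so}\quad\operatorname{Rep}_D(h(\vec\beta_1))=\begin{pmatrix}0\\-1/2\\1\end{pmatrix}_D$$

and

$$\begin{pmatrix}1\\2\\0\end{pmatrix}=1\begin{pmatrix}1\\0\\0\end{pmatrix}-1\begin{pmatrix}0\\-2\\0\end{pmatrix}+0\begin{pmatrix}1\\0\\1\end{pmatrix}\quad\text{so}\quad\operatorname{Rep}_D(h(\vec\beta_2))=\begin{pmatrix}1\\-1\\0\end{pmatrix}_D$$

(these are easy to check).

Then, as described in the preamble, for any member $\vec v$ of the domain, we can express the image $h(\vec v)$ in terms of the $h(\vec\beta)$'s.

$$\begin{aligned}h(\vec v)&=h\left(c_1\begin{pmatrix}2\\0\end{pmatrix}+c_2\begin{pmatrix}1\\4\end{pmatrix}\right)\\&=c_1\left(0\begin{pmatrix}1\\0\\0\end{pmatrix}-\frac{1}{2}\begin{pmatrix}0\\-2\\0\end{pmatrix}+1\begin{pmatrix}1\\0\\1\end{pmatrix}\right)+c_2\left(1\begin{pmatrix}1\\0\\0\end{pmatrix}-1\begin{pmatrix}0\\-2\\0\end{pmatrix}+0\begin{pmatrix}1\\0\\1\end{pmatrix}\right)\\&=(0c_1+1c_2)\begin{pmatrix}1\\0\\0\end{pmatrix}+\left(-\frac{1}{2}c_1-1c_2\right)\begin{pmatrix}0\\-2\\0\end{pmatrix}+(1c_1+0c_2)\begin{pmatrix}1\\0\\1\end{pmatrix}\end{aligned}$$

Thus,

$$\text{with }\operatorname{Rep}_B(\vec v)=\begin{pmatrix}c_1\\c_2\end{pmatrix}_B\text{ then }\operatorname{Rep}_D(h(\vec v))=\begin{pmatrix}0c_1+1c_2\\-(1/2)c_1-1c_2\\1c_1+0c_2\end{pmatrix}_D.$$

For instance,

$$\text{with }\operatorname{Rep}_B\left(\begin{pmatrix}4\\8\end{pmatrix}\right)=\begin{pmatrix}1\\2\end{pmatrix}_B\text{ then }\operatorname{Rep}_D\left(h\left(\begin{pmatrix}4\\8\end{pmatrix}\right)\right)=\begin{pmatrix}2\\-5/2\\1\end{pmatrix}_D.$$

We will express computations like the one above with a matrix notation.

$$\begin{pmatrix}0&1\\-1/2&-1\\1&0\end{pmatrix}_{B,D}\begin{pmatrix}c_1\\c_2\end{pmatrix}_B=\begin{pmatrix}0c_1+1c_2\\-(1/2)c_1-1c_2\\1c_1+0c_2\end{pmatrix}_D$$

In the middle is the argument $\vec v$ to the map, represented with respect to the domain's basis $B$ by a column vector with components $c_1$ and $c_2$.

On the right is the value $h(\vec v)$ of the map on that argument, represented with respect to the codomain's basis $D$ by a column vector with components $0c_1+1c_2$, etc.

The matrix on the left is the new thing. It consists of the coefficients from the vector on the right, $0$ and $1$ from the first row, $-1/2$ and $-1$ from the second row, and $1$ and $0$ from the third row.

This notation simply breaks the parts from the right, the coefficients and the $c$'s, out separately on the left, into a vector that represents the map's argument and a matrix that we will take to represent the map itself.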
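The computation in this example amounts to forming a linear combination of the representations of $h(\vec\beta_1)$ and $h(\vec\beta_2)$. A minimal Python sketch of that step follows. The function name `image_representation` is hypothetical, and the coordinate values are sample inputs for a map from a two-dimensional domain to a three-dimensional codomain.

```python
from fractions import Fraction

def image_representation(rep_h_beta, c):
    """Return the codomain-basis coordinates of h(v).

    rep_h_beta[j] holds the coordinates of h(beta_j) with respect to the
    codomain's basis; c holds the coordinates of v with respect to the
    domain's basis. By linearity,
    h(v) = c_1 h(beta_1) + ... + c_n h(beta_n).
    """
    height = len(rep_h_beta[0])
    result = [Fraction(0)] * height
    for c_j, column in zip(c, rep_h_beta):
        for i in range(height):
            result[i] += c_j * column[i]
    return result

# Sample coordinates of h(beta_1) and h(beta_2) (illustrative values).
rep_h_beta = [
    [Fraction(0), Fraction(-1, 2), Fraction(1)],
    [Fraction(1), Fraction(-1), Fraction(0)],
]

# Coordinates of the image of the vector whose representation is (1, 2).
image = image_representation(rep_h_beta, [1, 2])
print(image)
```

Exact fractions are used so that entries such as $-1/2$ are not rounded.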

- Definition 1.2

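Suppose that $V$ and $W$ are vector spaces of dimensions $n$ and $m$ with bases $B$ and $D$, and that $h:V\to W$ is a linear map. If

$$\operatorname{Rep}_D(h(\vec\beta_1))=\begin{pmatrix}h_{1,1}\\h_{2,1}\\\vdots\\h_{m,1}\end{pmatrix}_D\quad\ldots\quad\operatorname{Rep}_D(h(\vec\beta_n))=\begin{pmatrix}h_{1,n}\\h_{2,n}\\\vdots\\h_{m,n}\end{pmatrix}_D$$

then

$$\operatorname{Rep}_{B,D}(h)=\begin{pmatrix}h_{1,1}&h_{1,2}&\ldots&h_{1,n}\\h_{2,1}&h_{2,2}&\ldots&h_{2,n}\\&\vdots&&\\h_{m,1}&h_{m,2}&\ldots&h_{m,n}\end{pmatrix}_{B,D}$$

is the matrix representation of $h$ with respect to $B,D$.

Briefly, the vectors representing the $h(\vec\beta)$'s are adjoined to make the matrix representing the map.

$$\operatorname{Rep}_{B,D}(h)=\left(\begin{array}{c|c|c}\vdots&&\vdots\\\operatorname{Rep}_D(h(\vec\beta_1))&\cdots&\operatorname{Rep}_D(h(\vec\beta_n))\\\vdots&&\vdots\end{array}\right)$$

Observe that the number of columns $n$ of the matrix is the dimension of the domain of the map, and the number of rows $m$ is the dimension of the codomain.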

- Example 1.3

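If $h:\mathbb{R}^3\to\mathcal{P}_1$ is given by

$$\begin{pmatrix}a_1\\a_2\\a_3\end{pmatrix}\mapsto(2a_1+a_2)+(-a_3)x$$

then where

$$B=\left\langle\begin{pmatrix}0\\0\\1\end{pmatrix},\begin{pmatrix}0\\2\\0\end{pmatrix},\begin{pmatrix}2\\0\\0\end{pmatrix}\right\rangle\quad\text{and}\quad D=\langle 1+x,\,-1+x\rangle$$

the action of $h$ on $B$ is given by

$$\begin{pmatrix}0\\0\\1\end{pmatrix}\mapsto -x\qquad\begin{pmatrix}0\\2\\0\end{pmatrix}\mapsto 2\qquad\begin{pmatrix}2\\0\\0\end{pmatrix}\mapsto 4$$

and a simple calculation gives

$$\operatorname{Rep}_D(-x)=\begin{pmatrix}-1/2\\-1/2\end{pmatrix}_D\qquad\operatorname{Rep}_D(2)=\begin{pmatrix}1\\-1\end{pmatrix}_D\qquad\operatorname{Rep}_D(4)=\begin{pmatrix}2\\-2\end{pmatrix}_D$$

showing that this is the matrix representing $h$ with respect to the bases.

$$\operatorname{Rep}_{B,D}(h)=\begin{pmatrix}-1/2&1&2\\-1/2&-1&-2\end{pmatrix}_{B,D}$$

We will use lower case letters for a map, upper case for the matrix, and lower case again for the entries of the matrix. Thus for the map $h$, the matrix representing it is $H$, with entries $h_{i,j}$.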

- Theorem 1.4

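Assume that $V$ and $W$ are vector spaces of dimensions $n$ and $m$ with bases $B$ and $D$, and that $h:V\to W$ is a linear map. If $h$ is represented by

$$\operatorname{Rep}_{B,D}(h)=\begin{pmatrix}h_{1,1}&h_{1,2}&\ldots&h_{1,n}\\h_{2,1}&h_{2,2}&\ldots&h_{2,n}\\&\vdots&&\\h_{m,1}&h_{m,2}&\ldots&h_{m,n}\end{pmatrix}_{B,D}$$

and $\vec v\in V$ is represented by

$$\operatorname{Rep}_B(\vec v)=\begin{pmatrix}c_1\\c_2\\\vdots\\c_n\end{pmatrix}_B$$

then the representation of the image of $\vec v$ is this.

$$\operatorname{Rep}_D(h(\vec v))=\begin{pmatrix}h_{1,1}c_1+h_{1,2}c_2+\cdots+h_{1,n}c_n\\h_{2,1}c_1+h_{2,2}c_2+\cdots+h_{2,n}c_n\\\vdots\\h_{m,1}c_1+h_{m,2}c_2+\cdots+h_{m,n}c_n\end{pmatrix}_D$$

We will think of the matrix $\operatorname{Rep}_{B,D}(h)$ and the vector $\operatorname{Rep}_B(\vec v)$ as combining to make the vector $\operatorname{Rep}_D(h(\vec v))$.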

- Definition 1.5

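The matrix-vector product of an $m\times n$ matrix and an $n\times 1$ vector is this.

$$\begin{pmatrix}a_{1,1}&a_{1,2}&\ldots&a_{1,n}\\a_{2,1}&a_{2,2}&\ldots&a_{2,n}\\&\vdots&&\\a_{m,1}&a_{m,2}&\ldots&a_{m,n}\end{pmatrix}\begin{pmatrix}c_1\\\vdots\\c_n\end{pmatrix}=\begin{pmatrix}a_{1,1}c_1+a_{1,2}c_2+\cdots+a_{1,n}c_n\\a_{2,1}c_1+a_{2,2}c_2+\cdots+a_{2,n}c_n\\\vdots\\a_{m,1}c_1+a_{m,2}c_2+\cdots+a_{m,n}c_n\end{pmatrix}$$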
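The definition translates almost directly into code. The following is a minimal sketch, assuming a matrix is stored as a list of rows; the name `mat_vec` is hypothetical and the sample entries are illustrative.

```python
def mat_vec(matrix, vector):
    """Multiply an m x n matrix (a list of m rows) by a vector of height n."""
    n = len(vector)
    if any(len(row) != n for row in matrix):
        # The definition only covers the case where the matrix's width
        # equals the vector's height.
        raise ValueError("matrix width must equal vector height")
    # Entry i of the result is a_{i,1} c_1 + a_{i,2} c_2 + ... + a_{i,n} c_n.
    return [sum(a_ij * c_j for a_ij, c_j in zip(row, vector))
            for row in matrix]

# A sample 2 x 3 matrix times a height-3 vector (illustrative values).
product = mat_vec([[1, 0, -1], [-1, 1, 1]], [1, 2, 1])
print(product)
```

The size check is there because the definition leaves the product undefined when the sizes do not match.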

The point of Definition 1.2 is to generalize

Example 1.1, that is, the point of the definition is

Theorem 1.4,

that the matrix describes how to get from the

representation of a domain vector with respect to the domain's basis to the

representation of its image in the codomain with respect to the codomain's

basis.

With Definition 1.5, we can restate this

as: application of a linear map is represented by the matrix-vector product

of the map's representative and the vector's representative.

- Example 1.7

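Let $\pi:\mathbb{R}^3\to\mathbb{R}^2$ be projection onto the $xy$-plane. To give a matrix representing this map, we first fix bases.

$$B=\left\langle\begin{pmatrix}1\\0\\0\end{pmatrix},\begin{pmatrix}1\\1\\0\end{pmatrix},\begin{pmatrix}-1\\0\\1\end{pmatrix}\right\rangle\qquad D=\left\langle\begin{pmatrix}2\\1\end{pmatrix},\begin{pmatrix}1\\1\end{pmatrix}\right\rangle$$

For each vector in the domain's basis, we find its image under the map.

$$\begin{pmatrix}1\\0\\0\end{pmatrix}\mapsto\begin{pmatrix}1\\0\end{pmatrix}\qquad\begin{pmatrix}1\\1\\0\end{pmatrix}\mapsto\begin{pmatrix}1\\1\end{pmatrix}\qquad\begin{pmatrix}-1\\0\\1\end{pmatrix}\mapsto\begin{pmatrix}-1\\0\end{pmatrix}$$

Then we find the representation of each image with respect to the codomain's basis

$$\operatorname{Rep}_D\left(\begin{pmatrix}1\\0\end{pmatrix}\right)=\begin{pmatrix}1\\-1\end{pmatrix}_D\qquad\operatorname{Rep}_D\left(\begin{pmatrix}1\\1\end{pmatrix}\right)=\begin{pmatrix}0\\1\end{pmatrix}_D\qquad\operatorname{Rep}_D\left(\begin{pmatrix}-1\\0\end{pmatrix}\right)=\begin{pmatrix}-1\\1\end{pmatrix}_D$$

(these are easily checked). Finally, adjoining these representations gives the matrix representing $\pi$ with respect to $B,D$.

$$\operatorname{Rep}_{B,D}(\pi)=\begin{pmatrix}1&0&-1\\-1&1&1\end{pmatrix}_{B,D}$$

We can illustrate Theorem 1.4 by computing the matrix-vector product representing the following statement about the projection map.

$$\pi\left(\begin{pmatrix}2\\2\\1\end{pmatrix}\right)=\begin{pmatrix}2\\2\end{pmatrix}$$

Representing this vector from the domain with respect to the domain's basis

$$\operatorname{Rep}_B\left(\begin{pmatrix}2\\2\\1\end{pmatrix}\right)=\begin{pmatrix}1\\2\\1\end{pmatrix}_B$$

gives this matrix-vector product.

$$\operatorname{Rep}_D\left(\pi\left(\begin{pmatrix}2\\2\\1\end{pmatrix}\right)\right)=\begin{pmatrix}1&0&-1\\-1&1&1\end{pmatrix}_{B,D}\begin{pmatrix}1\\2\\1\end{pmatrix}_B=\begin{pmatrix}0\\2\end{pmatrix}_D$$

Expanding this representation into a linear combination of vectors from $D$

$$0\cdot\begin{pmatrix}2\\1\end{pmatrix}+2\cdot\begin{pmatrix}1\\1\end{pmatrix}=\begin{pmatrix}2\\2\end{pmatrix}$$

checks that the map's action is indeed reflected in the operation of the matrix. (We will sometimes compress these three displayed equations into one in the course of a calculation.)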
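The three steps of this example (find each basis vector's image, represent it with respect to the codomain's basis, adjoin the columns) can be sketched in Python. This is an illustrative sketch under stated assumptions: the helper names `coordinates` and `represent_map` are hypothetical, `B` and `D` are sample bases for $\mathbb{R}^3$ and $\mathbb{R}^2$, and exact arithmetic comes from the standard `fractions` module.

```python
from fractions import Fraction

def coordinates(basis, vector):
    """Solve x_1*basis[0] + ... + x_k*basis[k-1] = vector for the x's.

    Uses Gauss-Jordan elimination on the augmented matrix. Assumes that
    `basis` really is a basis of a k-dimensional space; otherwise no pivot
    is found and `next` raises StopIteration.
    """
    k = len(basis)
    # Basis vectors form the columns; the target vector is the last column.
    rows = [[Fraction(basis[j][i]) for j in range(k)] + [Fraction(vector[i])]
            for i in range(k)]
    for col in range(k):
        pivot = next(r for r in range(col, k) if rows[r][col] != 0)
        rows[col], rows[pivot] = rows[pivot], rows[col]
        lead = rows[col][col]
        rows[col] = [entry / lead for entry in rows[col]]
        for r in range(k):
            if r != col:
                factor = rows[r][col]
                rows[r] = [a - factor * b for a, b in zip(rows[r], rows[col])]
    return [rows[i][k] for i in range(k)]

def represent_map(h, domain_basis, codomain_basis):
    """Adjoin the representations of h(beta_j) as the columns of a matrix."""
    columns = [coordinates(codomain_basis, h(beta)) for beta in domain_basis]
    return [[col[i] for col in columns] for i in range(len(codomain_basis))]

def project(v):
    """Projection of a three-tall vector onto its first two components."""
    return (v[0], v[1])

# Sample bases (illustrative values).
B = [(1, 0, 0), (1, 1, 0), (-1, 0, 1)]
D = [(2, 1), (1, 1)]

matrix = represent_map(project, B, D)
rep_v = coordinates(B, (2, 2, 1))
rep_image = [sum(a * c for a, c in zip(row, rep_v)) for row in matrix]
print(matrix, rep_v, rep_image)
```

Expanding `rep_image` as a linear combination of the vectors in `D` should give back `project((2, 2, 1))`, which is the check that the example carries out by hand.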

We now have two ways to compute the effect of projection,

the straightforward formula that drops each three-tall vector's third component

to make a two-tall vector,

and the above formula that uses representations and matrix-vector

multiplication.

Compared to the first way, the second way might seem complicated.

However, it has advantages.

The next example shows that giving a formula for some maps is

simplified by this new scheme.

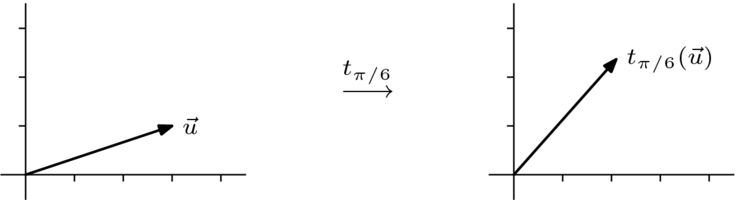

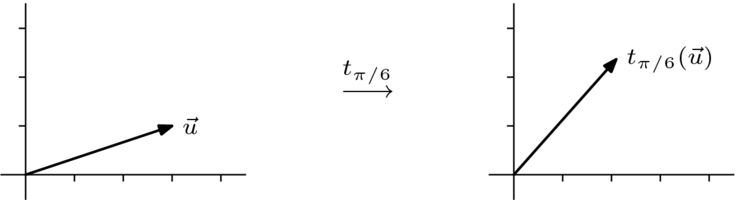

- Example 1.8

To represent a rotation map $t_\theta:\mathbb{R}^2\to\mathbb{R}^2$ that turns all vectors in the plane counterclockwise through an angle $\theta$ we start by fixing bases. Using $\mathcal{E}_2$ both as a domain basis and as a codomain basis is natural. Now, we find the image under the map of each vector in the domain's basis.

$$\begin{pmatrix}1\\0\end{pmatrix}\mapsto\begin{pmatrix}\cos\theta\\\sin\theta\end{pmatrix}\qquad\begin{pmatrix}0\\1\end{pmatrix}\mapsto\begin{pmatrix}-\sin\theta\\\cos\theta\end{pmatrix}$$

Then we represent these images with respect to the codomain's basis. Because this basis is $\mathcal{E}_2$, vectors are represented by themselves. Finally, adjoining the representations gives the matrix representing the map.

$$\operatorname{Rep}_{\mathcal{E}_2,\mathcal{E}_2}(t_\theta)=\begin{pmatrix}\cos\theta&-\sin\theta\\\sin\theta&\cos\theta\end{pmatrix}$$

The advantage of this scheme is that just by knowing how to represent the image of the two basis vectors, we get a formula that tells us the image of any vector at all; here a vector rotated by $\theta=\pi/6$.

$$\begin{pmatrix}3\\-2\end{pmatrix}\mapsto\begin{pmatrix}\sqrt{3}/2&-1/2\\1/2&\sqrt{3}/2\end{pmatrix}\begin{pmatrix}3\\-2\end{pmatrix}\approx\begin{pmatrix}3.598\\-0.232\end{pmatrix}$$

(Again, we are using the fact that, with respect to $\mathcal{E}_2$, vectors represent themselves.)
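A short Python sketch of the rotation formula, assuming the standard basis is used for both domain and codomain so that vectors represent themselves. The function names `rotation_matrix` and `rotate` are hypothetical, and the sample vector and angle are illustrative.

```python
import math

def rotation_matrix(theta):
    """Matrix whose columns are the images of (1, 0) and (0, 1)
    under counterclockwise rotation through theta radians."""
    return [[math.cos(theta), -math.sin(theta)],
            [math.sin(theta),  math.cos(theta)]]

def rotate(theta, v):
    """Apply the rotation to v with a matrix-vector product."""
    return [sum(a * c for a, c in zip(row, v))
            for row in rotation_matrix(theta)]

# Rotate a sample vector through pi/6 radians.
x, y = rotate(math.pi / 6, (3, -2))
print(round(x, 3), round(y, 3))
```

Only the images of the two basis vectors go into `rotation_matrix`; the image of every other vector follows from the product.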

We have already seen the addition and scalar multiplication

operations of matrices and

the dot product operation of vectors.

Matrix-vector multiplication is a new operation in the arithmetic of

vectors and matrices.

Nothing in Definition 1.5 requires us to view

it in terms of representations.

We can get some insight into this operation

by turning away from what is being represented, and instead focusing on

how the entries combine.

- Example 1.9

In the definition the width of the matrix equals the height of the vector. Hence, the first product below is defined while the second is not.

$$\begin{pmatrix}1&0&0\\4&3&1\end{pmatrix}\begin{pmatrix}1\\0\\2\end{pmatrix}\qquad\begin{pmatrix}1&0&0\\4&3&1\end{pmatrix}\begin{pmatrix}1\\0\end{pmatrix}$$

One reason that this product is not defined is purely formal: the definition requires that the sizes match, and these sizes don't match. Behind the formality, though, is a reason why we will leave it undefined: the matrix represents a map with a three-dimensional domain while the vector represents a member of a two-dimensional space.

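A good way to view a matrix-vector product is as the dot products of the rows of the matrix with the column vector.

$$\begin{pmatrix}&\vdots&&\\a_{i,1}&a_{i,2}&\ldots&a_{i,n}\\&\vdots&&\end{pmatrix}\begin{pmatrix}c_1\\c_2\\\vdots\\c_n\end{pmatrix}=\begin{pmatrix}\vdots\\a_{i,1}c_1+a_{i,2}c_2+\cdots+a_{i,n}c_n\\\vdots\end{pmatrix}$$

Looked at in this row-by-row way, this new operation generalizes dot product.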

Matrix-vector product can also be viewed column-by-column.

- Example 1.10

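$$\begin{pmatrix}1&0&-1\\2&0&3\end{pmatrix}\begin{pmatrix}2\\-1\\1\end{pmatrix}=\begin{pmatrix}1\cdot2+0\cdot(-1)+(-1)\cdot1\\2\cdot2+0\cdot(-1)+3\cdot1\end{pmatrix}=2\begin{pmatrix}1\\2\end{pmatrix}-1\begin{pmatrix}0\\0\end{pmatrix}+1\begin{pmatrix}-1\\3\end{pmatrix}=\begin{pmatrix}1\\7\end{pmatrix}$$

The result has the columns of the matrix weighted by the entries of the vector. This way of looking at it brings us back to the objective stated at the start of this section, to compute $h(c_1\vec\beta_1+\cdots+c_n\vec\beta_n)$ as $c_1h(\vec\beta_1)+\cdots+c_nh(\vec\beta_n)$.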
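The row-by-row and column-by-column views describe the same product. A small Python sketch comparing them, with hypothetical function names `by_rows` and `by_columns` and illustrative sample entries:

```python
def by_rows(matrix, vector):
    """Each entry is the dot product of a row with the vector."""
    return [sum(a * c for a, c in zip(row, vector)) for row in matrix]

def by_columns(matrix, vector):
    """The result is the sum of the columns, each weighted by a vector entry."""
    height = len(matrix)
    result = [0] * height
    for j, c_j in enumerate(vector):
        for i in range(height):
            result[i] += c_j * matrix[i][j]
    return result

# A sample 2 x 3 matrix and height-3 vector (illustrative values).
M = [[1, 0, -1],
     [2, 0, 3]]
v = [2, -1, 1]
print(by_rows(M, v), by_columns(M, v))
```

The two functions visit the same products $a_{i,j}c_j$ and differ only in the order of summation.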

We began this section by noting that the equality of these two enables us to compute the action of $h$ on any argument knowing only $h(\vec\beta_1)$, ..., $h(\vec\beta_n)$. We have developed this into a scheme to compute the action of the map by taking the matrix-vector product of the matrix representing the map and the vector representing the argument. In this way, any linear map is represented with respect to some bases by a matrix. In the next subsection, we will show the converse, that any matrix represents a linear map.

- This exercise is recommended for all readers.


- Problem 3

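Solve this matrix equation.

$$\begin{pmatrix}2&1&1\\0&1&3\\1&-1&2\end{pmatrix}\begin{pmatrix}x\\y\\z\end{pmatrix}=\begin{pmatrix}8\\4\\4\end{pmatrix}$$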

- This exercise is recommended for all readers.


- Problem 5

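Assume that $h:\mathbb{R}^2\to\mathbb{R}^3$ is determined by this action.

$$\begin{pmatrix}1\\0\end{pmatrix}\mapsto\begin{pmatrix}2\\2\\0\end{pmatrix}\qquad\begin{pmatrix}0\\1\end{pmatrix}\mapsto\begin{pmatrix}0\\1\\-1\end{pmatrix}$$

Using the standard bases, find

- the matrix representing this map;

- a general formula for $h(\vec v)$.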

- This exercise is recommended for all readers.

- Problem 6

Let $d/dx:\mathcal{P}_3\to\mathcal{P}_3$ be the derivative transformation.

- Represent $d/dx$ with respect to $B,B$ where $B=\langle 1,x,x^2,x^3\rangle$.

- Represent $d/dx$ with respect to $B,D$ where $D=\langle 1,2x,3x^2,4x^3\rangle$.

- This exercise is recommended for all readers.

- Problem 7

Represent each linear map with respect to each pair of bases.

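- $d/dx:\mathcal{P}_n\to\mathcal{P}_n$ with respect to $B,B$ where $B=\langle 1,x,\dots,x^n\rangle$, given by

$$a_0+a_1x+a_2x^2+\cdots+a_nx^n\mapsto a_1+2a_2x+\cdots+na_nx^{n-1}$$

- $\int:\mathcal{P}_n\to\mathcal{P}_{n+1}$ with respect to $B_n,B_{n+1}$ where $B_i=\langle 1,x,\dots,x^i\rangle$, given by

$$a_0+a_1x+a_2x^2+\cdots+a_nx^n\mapsto a_0x+\frac{a_1}{2}x^2+\cdots+\frac{a_n}{n+1}x^{n+1}$$

- $\int_0^1:\mathcal{P}_n\to\mathbb{R}$ with respect to $B,\mathcal{E}_1$ where $B=\langle 1,x,\dots,x^n\rangle$ and $\mathcal{E}_1=\langle 1\rangle$, given by

$$a_0+a_1x+a_2x^2+\cdots+a_nx^n\mapsto a_0+\frac{a_1}{2}+\cdots+\frac{a_n}{n+1}$$

- $\operatorname{eval}_3:\mathcal{P}_n\to\mathbb{R}$ with respect to $B,\mathcal{E}_1$ where $B=\langle 1,x,\dots,x^n\rangle$ and $\mathcal{E}_1=\langle 1\rangle$, given by

$$a_0+a_1x+a_2x^2+\cdots+a_nx^n\mapsto a_0+a_1\cdot3+a_2\cdot3^2+\cdots+a_n\cdot3^n$$

- $\operatorname{slide}_{-1}:\mathcal{P}_n\to\mathcal{P}_n$ with respect to $B,B$ where $B=\langle 1,x,\dots,x^n\rangle$, given by

$$a_0+a_1x+a_2x^2+\cdots+a_nx^n\mapsto a_0+a_1\cdot(x+1)+\cdots+a_n\cdot(x+1)^n$$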

- Problem 8

Represent the identity map on any nontrivial space with respect to $B,B$, where $B$ is any basis.

- Problem 9

Represent, with respect to the natural basis, the transpose transformation on the space $\mathcal{M}_{2\times 2}$ of $2\times 2$ matrices.

- Problem 10

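Assume that $B=\langle\vec\beta_1,\vec\beta_2,\vec\beta_3,\vec\beta_4\rangle$ is a basis for a vector space. Represent with respect to $B,B$ the transformation that is determined by each.

- $\vec\beta_1\mapsto\vec\beta_2$, $\vec\beta_2\mapsto\vec\beta_3$, $\vec\beta_3\mapsto\vec\beta_4$, $\vec\beta_4\mapsto\vec 0$

- $\vec\beta_1\mapsto\vec\beta_2$, $\vec\beta_2\mapsto\vec 0$, $\vec\beta_3\mapsto\vec\beta_4$, $\vec\beta_4\mapsto\vec 0$

- $\vec\beta_1\mapsto\vec\beta_2$, $\vec\beta_2\mapsto\vec\beta_3$, $\vec\beta_3\mapsto\vec 0$, $\vec\beta_4\mapsto\vec 0$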

- This exercise is recommended for all readers.

- Problem 13

Suppose that $h:V\to W$ is nonsingular so that by Theorem II.2.21, for any basis $B=\langle\vec\beta_1,\dots,\vec\beta_n\rangle\subset V$ the image $h(B)=\langle h(\vec\beta_1),\dots,h(\vec\beta_n)\rangle$ is a basis for $W$.

- Represent the map $h$ with respect to $B,h(B)$.

- For a member $\vec v$ of the domain, where the representation of $\vec v$ has components $c_1$, ..., $c_n$, represent the image vector $h(\vec v)$ with respect to the image basis $h(B)$.

- Problem 14

Give a formula for the product of a matrix and $\vec e_i$, the column vector that is all zeroes except for a single one in the $i$-th position.

- This exercise is recommended for all readers.

- Problem 15

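For each vector space of functions of one real variable, represent the derivative transformation with respect to $B,B$.

- $\{a\cos x+b\sin x\mid a,b\in\mathbb{R}\}$, $B=\langle\cos x,\sin x\rangle$

- $\{ae^x+be^{2x}\mid a,b\in\mathbb{R}\}$, $B=\langle e^x,e^{2x}\rangle$

- $\{a+bx+ce^x+dxe^x\mid a,b,c,d\in\mathbb{R}\}$, $B=\langle 1,x,e^x,xe^x\rangle$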

- This exercise is recommended for all readers.

- Problem 17

Can one matrix represent two different linear maps? That is, can $\operatorname{Rep}_{B,D}(h)=\operatorname{Rep}_{\hat B,\hat D}(\hat h)$?

- This exercise is recommended for all readers.

- Problem 20 (Schur's Triangularization Lemma)

- Let $W$ be a subspace of $V$ and fix bases $B_W\subseteq B_V$. What is the relationship between the representation of a vector from $W$ with respect to $B_W$ and the representation of that vector (viewed as a member of $V$) with respect to $B_V$?

- What about maps?

- Fix a basis $B=\langle\vec\beta_1,\dots,\vec\beta_n\rangle$ for $V$ and observe that the spans

![{\displaystyle [\{{\vec {0}}\}]=\{{\vec {0}}\}\subset [\{{\vec {\beta }}_{1}\}]\subset [\{{\vec {\beta }}_{1},{\vec {\beta }}_{2}\}]\subset \quad \cdots \quad \subset [B]=V}](https://wikimedia.org/api/rest_v1/media/math/render/svg/3414ca6462a266e81a770fece4e99534b9ae335d)

form a strictly increasing chain of subspaces. Show that for any linear map $h:V\to W$ there is a chain $W_0=\{\vec 0\}\subseteq W_1\subseteq\cdots\subseteq W_m=W$ of subspaces of $W$ such that

![{\displaystyle h([\{{\vec {\beta }}_{1},\dots ,{\vec {\beta }}_{i}\}])\subset W_{i}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/8a96d738e2b917d08ef2413bf0cccf59449c4022)

for each $i$.

- Conclude that for every linear map $h:V\to W$ there are bases $B,D$ so the matrix representing $h$ with respect to $B,D$ is upper-triangular (that is, each entry $h_{i,j}$ with $i>j$ is zero).

- Is an upper-triangular representation unique?

