Probability density function

The red curve is the standard normal distribution

|

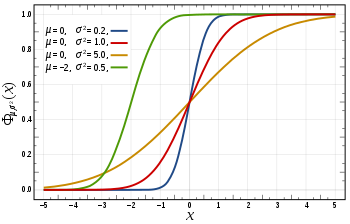

Cumulative distribution function

|

| Notation

|

|

| Parameters

|

μ ∈ R — mean (location)

σ2 > 0 — variance (squared scale)

|

| Support

|

x ∈ R

|

| PDF

|

|

| CDF

|

![{\displaystyle {\frac {1}{2}}\left[1+\operatorname {erf} \left({\frac {x-\mu }{\sqrt {2\sigma ^{2}}}}\right)\right]}](https://wikimedia.org/api/rest_v1/media/math/render/svg/04670b14acb4ddb796469f3812ead9d9cccec275)

|

| Mean

|

μ

|

| Median

|

μ

|

| Mode

|

μ

|

| Variance

|

|

| Skewness

|

0

|

| Ex. kurtosis

|

0

|

| Entropy

|

|

| MGF

|

|

| CF

|

|

| Fisher information

|

|

The normal distribution, also known as the Gaussian distribution, is one of the most widely used distributions. It describes observations that cluster closely around the mean, μ, with the density decaying quickly as we move farther away from the mean. The spread is quantified by the variance, σ².
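To make "closely clustered around the mean" concrete, the following minimal sketch computes how much probability lies within k standard deviations of the mean. It relies on `statistics.NormalDist` from the Python standard library; the helper name `mass_within` is introduced here for illustration only.

```python
from statistics import NormalDist


def mass_within(k: float, mu: float = 0.0, sigma: float = 1.0) -> float:
    """Probability that a N(mu, sigma^2) variable lies within k standard deviations of mu."""
    dist = NormalDist(mu, sigma)
    return dist.cdf(mu + k * sigma) - dist.cdf(mu - k * sigma)


for k in (1, 2, 3):
    print(f"within {k} standard deviation(s): {mass_within(k):.4f}")
```

The result does not depend on the particular μ and σ: roughly 68%, 95% and 99.7% of the probability falls within one, two and three standard deviations of the mean.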

Some examples of applications are:

- If men's heights are normally distributed with a mean of 175 cm and a variance of 6 cm², what is the probability that a man chosen at random is at least 183 cm tall?

- If men's heights have a mean of 175 cm and a variance of 6 cm², and women's heights have a mean of 168 cm and a variance of 3 cm², what is the probability that a randomly chosen man is shorter than a randomly chosen woman?

- If the weights of cans have a variance of 4 g², what does the mean weight need to be in order to ensure that 99% of all cans have a weight of at least 250 grams?
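The three questions above can be sketched numerically as follows. This is one possible reading, not a definitive solution: it assumes the stated spreads are variances (so the standard deviation is the square root), that the first question asks for "at least 183 cm", and that the man's and woman's heights are independent. All variable names are illustrative.

```python
from math import sqrt
from statistics import NormalDist

# Example 1: men's heights ~ N(175, 6); probability of being at least 183 cm.
men = NormalDist(mu=175, sigma=sqrt(6))
p_tall = 1 - men.cdf(183)

# Example 2: for independent heights, (man - woman) ~ N(175 - 168, 6 + 3).
# The man is shorter exactly when this difference is negative.
diff = NormalDist(mu=175 - 168, sigma=sqrt(6 + 3))
p_man_shorter = diff.cdf(0)

# Example 3: with variance 4, find the mean m such that P(weight >= 250) = 0.99.
# That places 250 at the 1% quantile, so m = 250 + z_0.99 * sigma.
z99 = NormalDist().inv_cdf(0.99)
required_mean = 250 + z99 * sqrt(4)

print(f"P(height >= 183)      = {p_tall:.5f}")
print(f"P(man shorter)        = {p_man_shorter:.4f}")
print(f"required mean weight  = {required_mean:.2f} g")
```

Under these assumptions the first two probabilities are small (well under 1% and about 1%), and the required mean weight is a little under 255 grams.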

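The density function is:

$$f(x) = \frac{1}{\sigma\sqrt{2\pi}}\, e^{-(x-\mu)^2/(2\sigma^2)}$$

where $-\infty < x < \infty$.

The cumulative distribution function cannot be expressed in closed form using elementary functions; it is usually written in terms of the error function, erf.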
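As a minimal sketch, the density and the cumulative distribution function can be evaluated with the Python standard library alone. The function names `normal_pdf` and `normal_cdf` are illustrative; the CDF uses the error-function form, with `math.erf` supplying erf.

```python
import math


def normal_pdf(x: float, mu: float = 0.0, sigma: float = 1.0) -> float:
    """Density of N(mu, sigma^2) at x."""
    coefficient = 1.0 / (sigma * math.sqrt(2.0 * math.pi))
    return coefficient * math.exp(-((x - mu) ** 2) / (2.0 * sigma ** 2))


def normal_cdf(x: float, mu: float = 0.0, sigma: float = 1.0) -> float:
    """P(X <= x) for X ~ N(mu, sigma^2), written via the error function."""
    return 0.5 * (1.0 + math.erf((x - mu) / math.sqrt(2.0 * sigma ** 2)))


print(normal_pdf(0.0))   # peak height of the standard normal density
print(normal_cdf(0.0))   # half the mass lies below the mean
print(normal_cdf(1.96))  # close to 0.975
```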

The normal distribution with parameters μ and σ is denoted $\mathcal{N}(\mu, \sigma^2)$. If the random variable X is normally distributed with expectation μ and standard deviation σ, one writes:

$$X \sim \mathcal{N}(\mu, \sigma^2)$$

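To verify that f(x) is a valid pdf we must verify that (1) it is non-negative everywhere, and (2) that the total integral is equal to 1. The first is obvious, since the exponential function is always positive, so we move on to verify the second.

$$\int_{-\infty}^{\infty} f(x)\,dx = \int_{-\infty}^{\infty} \frac{1}{\sigma\sqrt{2\pi}}\, e^{-(x-\mu)^2/(2\sigma^2)}\,dx$$

Now let $w = \frac{x-\mu}{\sigma\sqrt{2}}$. We see that $dx = \sigma\sqrt{2}\,dw$.

$$\int_{-\infty}^{\infty} f(x)\,dx = \frac{1}{\sqrt{\pi}} \int_{-\infty}^{\infty} e^{-w^2}\,dw$$

Now we use the Gaussian integral, $\int_{-\infty}^{\infty} e^{-w^2}\,dw = \sqrt{\pi}$, to obtain

$$\int_{-\infty}^{\infty} f(x)\,dx = \frac{1}{\sqrt{\pi}} \sqrt{\pi} = 1$$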
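The normalization can also be checked numerically. The sketch below integrates the normal density with a composite trapezoidal rule over μ ± 10σ, outside of which the density is negligible. The parameter values, the integration range, and the helper names are all illustrative assumptions.

```python
import math


def normal_pdf(x: float, mu: float, sigma: float) -> float:
    """Density of N(mu, sigma^2) at x."""
    return math.exp(-((x - mu) ** 2) / (2.0 * sigma ** 2)) / (sigma * math.sqrt(2.0 * math.pi))


def trapezoid(f, a: float, b: float, n: int = 20_000) -> float:
    """Composite trapezoidal rule for f on [a, b] with n subintervals."""
    h = (b - a) / n
    total = 0.5 * (f(a) + f(b))
    for i in range(1, n):
        total += f(a + i * h)
    return total * h


mu, sigma = 175.0, 2.5  # arbitrary illustrative parameters
area = trapezoid(lambda x: normal_pdf(x, mu, sigma), mu - 10 * sigma, mu + 10 * sigma)
print(area)  # very close to 1
```

A numerical check like this does not replace the derivation, but it is a quick way to catch a misplaced constant in the density.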

We derive the mean as follows

$$\operatorname{E}[X] = \int_{-\infty}^{\infty} x \cdot f(x)\,dx = \int_{-\infty}^{\infty} [(x-\mu)+\mu]\, \frac{1}{\sigma\sqrt{2\pi}}\, e^{-(x-\mu)^2/2\sigma^2}\,dx$$

$$= \int_{-\infty}^{\infty} (x-\mu)\, \frac{1}{\sigma\sqrt{2\pi}}\, e^{-(x-\mu)^2/2\sigma^2}\,dx + \mu \int_{-\infty}^{\infty} \frac{1}{\sigma\sqrt{2\pi}}\, e^{-(x-\mu)^2/2\sigma^2}\,dx$$

We now see that the right integral is the complete integral over a normal pdf. This is therefore 1. The left integral has an explicit antiderivative:

$$\operatorname{E}[X] = \frac{1}{\sigma\sqrt{2\pi}}\,(-\sigma^2) \left[e^{-(x-\mu)^2/2\sigma^2}\right]_{-\infty}^{\infty} + \mu$$

$$= \frac{1}{\sigma\sqrt{2\pi}}\,(-\sigma^2)\,[0-0] + \mu = \mu$$

We derive the variance as follows

$$\operatorname{Var}(X) = \operatorname{E}[(X-\operatorname{E}[X])^2] = \int_{-\infty}^{\infty} (x-\mu)^2 \cdot f(x)\,dx = \int_{-\infty}^{\infty} (x-\mu)^2\, \frac{1}{\sigma\sqrt{2\pi}}\, e^{-\frac{1}{2}\left(\frac{x-\mu}{\sigma}\right)^2}\,dx$$

We let $w = \frac{x-\mu}{\sigma\sqrt{2}}$, so that $(x-\mu)^2 = 2\sigma^2 w^2$ and $dx = \sigma\sqrt{2}\,dw$:

$$\operatorname{Var}(X) = \frac{2\sigma^2}{\sqrt{\pi}} \int_{-\infty}^{\infty} w^2 e^{-w^2}\,dw$$

We now use integration by parts with $u = w$ and $v = -\frac{1}{2}e^{-w^2}$, so that $dv = w e^{-w^2}\,dw$:

$$\operatorname{Var}(X) = \frac{2\sigma^2}{\sqrt{\pi}} \left( \left[w \cdot \frac{-1}{2} e^{-w^2}\right]_{-\infty}^{\infty} - \int_{-\infty}^{\infty} \frac{-1}{2} e^{-w^2}\,dw \right)$$

We see that the bracketed term is zero by L'Hôpital's rule, since $w/e^{w^2} \to 0$ as $w \to \pm\infty$.

Now we use the Gaussian integral again:

$$\operatorname{Var}(X) = \frac{2\sigma^2}{\sqrt{\pi}} \cdot \frac{1}{2} \int_{-\infty}^{\infty} e^{-w^2}\,dw = \frac{\sigma^2}{\sqrt{\pi}} \sqrt{\pi} = \sigma^2$$
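As an informal check of the mean and variance results, one can draw a large sample and compare its sample mean and variance with μ and σ². The sketch below uses `random.Random.gauss` and the `statistics` module from the standard library; the seed, sample size, and parameter values are arbitrary choices made for illustration.

```python
import random
import statistics

mu, sigma = 175.0, 2.0   # illustrative parameters, so sigma^2 = 4
rng = random.Random(0)   # fixed seed so the run is reproducible

# Draw independent samples from N(mu, sigma^2).
samples = [rng.gauss(mu, sigma) for _ in range(200_000)]

sample_mean = statistics.fmean(samples)
sample_var = statistics.pvariance(samples, mu=sample_mean)

print(f"sample mean     = {sample_mean:.3f} (theory: {mu})")
print(f"sample variance = {sample_var:.3f} (theory: {sigma ** 2})")
```

The sample values should land close to μ and σ², with small deviations that shrink as the sample size grows.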
