Gamma

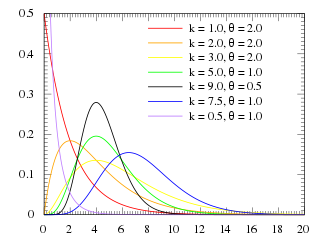

Probability density function


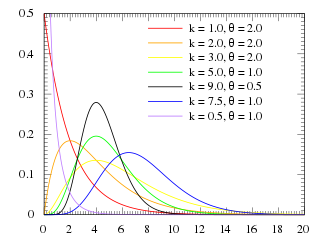

Cumulative distribution function

| Property | Value |
| --- | --- |
| Parameters | $k > 0$ (shape), $\theta > 0$ (scale) |
| Support | $x \in (0, \infty)$ |
| PDF | $\dfrac{1}{\Gamma(k)\,\theta^{k}}\,x^{k-1}e^{-x/\theta}$ |
| CDF | $\dfrac{1}{\Gamma(k)}\,\gamma\!\left(k, \dfrac{x}{\theta}\right)$ |
| Mean | $\operatorname{E}[X] = k\theta$; $\operatorname{E}[\ln X] = \psi(k) + \ln(\theta)$ (see digamma function) |
| Median | No simple closed form |
| Mode | $(k-1)\theta$ for $k \ge 1$ |
| Variance | $\operatorname{Var}[X] = k\theta^{2}$; $\operatorname{Var}[\ln X] = \psi_{1}(k)$ (see trigamma function) |
| Skewness | $2/\sqrt{k}$ |
| Ex. kurtosis | $6/k$ |
| Entropy | $k + \ln\theta + \ln[\Gamma(k)] + (1-k)\,\psi(k)$ |

The Gamma distribution is important because it contains the exponential distribution as a special case and is closely related to several other distributions.

The probability density function is:

$$f_X(x) = \begin{cases} \dfrac{1}{a^{p}\,\Gamma(p)}\,x^{p-1}e^{-x/a}, & x > 0 \\ 0, & x \le 0 \end{cases} \qquad p, a > 0$$

where $\Gamma(p) = \int_0^\infty t^{p-1}e^{-t}\,dt$ is the Gamma function. The cumulative distribution function has no elementary closed form for general $p$; it is written using the lower incomplete gamma function. When $p = 1$ the Gamma distribution becomes the exponential distribution, whose cumulative distribution function is $1 - e^{-x/a}$ for $x \ge 0$. The Gamma distribution of the stochastic variable $X$ is denoted as $X \sim \Gamma(p, a)$.
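As a small illustration, the density can be evaluated with the Python standard library. This is a sketch that assumes the shape-scale form $f(x) = x^{p-1}e^{-x/a} / (a^{p}\Gamma(p))$ used in the derivations on this page; the function names `gamma_pdf` and `exponential_pdf` are made up for the example. It also shows that setting $p = 1$ reproduces the exponential density.

```python
import math

def gamma_pdf(x, p, a):
    """Density of the gamma distribution with shape p > 0 and scale a > 0."""
    if p <= 0 or a <= 0:
        raise ValueError("shape p and scale a must be positive")
    if x <= 0:
        # This sketch treats the density as zero off the open positive half-line.
        return 0.0
    # Work in log space so that a large p does not overflow Gamma(p).
    log_density = (p - 1) * math.log(x) - x / a - p * math.log(a) - math.lgamma(p)
    return math.exp(log_density)

def exponential_pdf(x, a):
    """Density of the exponential distribution with mean a."""
    return math.exp(-x / a) / a if x > 0 else 0.0

# With p = 1 the two densities should agree up to rounding.
print(gamma_pdf(2.0, 1.0, 3.0), exponential_pdf(2.0, 3.0))
```

Computing the logarithm of the density first is a design choice rather than a requirement: `math.gamma(p)` overflows for shapes above roughly 171, while `math.lgamma(p)` does not.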

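Alternatively, the gamma distribution can be parameterized in terms of a shape parameter $\alpha = k$ and an inverse scale parameter $\beta = 1/\theta$, called a rate parameter:

$$g(x; \alpha, \beta) = K\,x^{\alpha-1}e^{-\beta x} \quad \text{for } x > 0$$

where the constant $K$ can be calculated by setting the integral of the density function to 1:

$$\int_{-\infty}^{+\infty} g(x; \alpha, \beta)\,dx = \int_{0}^{+\infty} K\,x^{\alpha-1}e^{-\beta x}\,dx = 1$$

so that:

$$K \int_{0}^{+\infty} x^{\alpha-1}e^{-\beta x}\,dx = 1$$

and, with the change of variable $y = \beta x$:

$$K \int_{0}^{+\infty} \frac{y^{\alpha-1}}{\beta^{\alpha-1}}\,e^{-y}\,\frac{dy}{\beta} = \frac{K}{\beta^{\alpha}} \int_{0}^{+\infty} y^{\alpha-1}e^{-y}\,dy = \frac{K}{\beta^{\alpha}}\,\Gamma(\alpha) = 1$$

which gives:

$$K = \frac{\beta^{\alpha}}{\Gamma(\alpha)}$$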
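The normalizing constant can be checked numerically. The sketch below assumes the rate form $g(x; \alpha, \beta) = K x^{\alpha-1} e^{-\beta x}$ with $K = \beta^{\alpha}/\Gamma(\alpha)$ and integrates it with a plain midpoint rule. The names `rate_constant` and `integrate_density`, the cutoff `upper`, and the step count are choices made for this example only.

```python
import math

def rate_constant(alpha, beta):
    """Normalizing constant K = beta**alpha / Gamma(alpha)."""
    return beta ** alpha / math.gamma(alpha)

def integrate_density(alpha, beta, upper=60.0, steps=200_000):
    """Midpoint-rule approximation of the integral of K x^(alpha-1) e^(-beta x) over (0, upper)."""
    k_const = rate_constant(alpha, beta)
    h = upper / steps
    total = 0.0
    for i in range(steps):
        x = (i + 0.5) * h  # midpoint of the i-th subinterval
        total += k_const * x ** (alpha - 1) * math.exp(-beta * x)
    return total * h

# The truncated integral should be very close to 1 for this choice of parameters.
total_mass = integrate_density(alpha=2.5, beta=1.5)
print(total_mass)
```

The finite cutoff is harmless here because the integrand decays like $e^{-\beta x}$; for shapes below 1 the integrand is unbounded near zero and a midpoint rule would converge much more slowly.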

Probability Density Function

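We first check that the total integral of the probability density function is 1.

$$\int_{-\infty}^{\infty} f_X(x)\,dx = \int_{0}^{\infty} \frac{1}{a^{p}\,\Gamma(p)}\,x^{p-1}e^{-x/a}\,dx$$

Now we let $y = x/a$, which means that $dy = dx/a$:

$$\frac{1}{\Gamma(p)} \int_{0}^{\infty} y^{p-1}e^{-y}\,dy = \frac{1}{\Gamma(p)}\,\Gamma(p) = 1$$

Mean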

![{\displaystyle \operatorname {E} [X]=\int _{-\infty }^{\infty }x\cdot {\frac {1}{a^{p}\Gamma (p)}}x^{p-1}e^{-x/a}dx}](https://wikimedia.org/api/rest_v1/media/math/render/svg/2041b84abc19d0b68d9be98a49e51b025c98402b)

Now we let y=x/a which means that dy=dx/a.

![{\displaystyle \operatorname {E} [X]=\int _{0}^{\infty }ay\cdot {\frac {1}{\Gamma (p)}}y^{p-1}e^{-y}dy}](https://wikimedia.org/api/rest_v1/media/math/render/svg/54f1d35c1a15d3ae2ed4481c2ec1eccf01e8b4f4)

![{\displaystyle \operatorname {E} [X]={\frac {a}{\Gamma (p)}}\int _{0}^{\infty }y^{p}e^{-y}dy}](https://wikimedia.org/api/rest_v1/media/math/render/svg/59e45dd2f2dc64776612607cc9c0821c049a5c63)

![{\displaystyle \operatorname {E} [X]={\frac {a}{\Gamma (p)}}\Gamma (p+1)}](https://wikimedia.org/api/rest_v1/media/math/render/svg/9b500fdb08277ba4c426a844dd4a0293d04d5dee)

We now use the fact that $\Gamma(p+1) = p\,\Gamma(p)$:

![{\displaystyle \operatorname {E} [X]={\frac {a}{\Gamma (p)}}p\Gamma (p)=ap}](https://wikimedia.org/api/rest_v1/media/math/render/svg/ec6ebf9c846951a550635d7f913c1903886bee57)
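The last simplification, from $a\,\Gamma(p+1)/\Gamma(p)$ to $ap$, can be confirmed numerically. This is a minimal sketch using `math.lgamma`; the helper name `gamma_mean_from_ratio` and the test parameters are assumptions for illustration.

```python
import math

def gamma_mean_from_ratio(p, a):
    """E[X] written as a * Gamma(p + 1) / Gamma(p), before simplifying to a * p."""
    # The log-gamma difference keeps the ratio finite even when Gamma(p) itself would overflow.
    return a * math.exp(math.lgamma(p + 1) - math.lgamma(p))

# Each row prints the ratio form next to the simplified form a * p.
for p, a in [(0.5, 2.0), (3.0, 1.5), (200.0, 0.1)]:
    print(p, a, gamma_mean_from_ratio(p, a), a * p)
```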

Variance

We first calculate E[X^2].

![{\displaystyle \operatorname {E} [X^{2}]=\int _{-\infty }^{\infty }x^{2}\cdot {\frac {1}{a^{p}\Gamma (p)}}x^{p-1}e^{-x/a}dx}](https://wikimedia.org/api/rest_v1/media/math/render/svg/dd81fa18392582d5fdf8dfe72690d597d05a22fd)

Now we let y=x/a which means that dy=dx/a.

![{\displaystyle \operatorname {E} [X^{2}]=\int _{0}^{\infty }a^{2}y^{2}\cdot {\frac {1}{a\Gamma (p)}}y^{p-1}e^{-y}ady}](https://wikimedia.org/api/rest_v1/media/math/render/svg/5ab45ebf7e86bcd131f504933497e55af902b9ce)

![{\displaystyle \operatorname {E} [X^{2}]={\frac {a^{2}}{\Gamma (p)}}\int _{0}^{\infty }y^{p+1}e^{-y}dy}](https://wikimedia.org/api/rest_v1/media/math/render/svg/3da9177001a8304d085db66b2c51174e46c88c54)

![{\displaystyle \operatorname {E} [X^{2}]={\frac {a^{2}}{\Gamma (p)}}\Gamma (p+2)=pa^{2}(p+1)}](https://wikimedia.org/api/rest_v1/media/math/render/svg/4d399ef4add4c2afee9106d9fcc6b035e140128c)

Now we calculate the variance:

![{\displaystyle \operatorname {Var} (X)=\operatorname {E} [X^{2}]-(\operatorname {E} [X])^{2}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/cd5a922df13bdee788c0f06474fe002a42c25d8a)

Substituting the two results above gives $\operatorname{Var}(X) = pa^{2}(p+1) - (ap)^{2} = pa^{2}$.
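The mean $ap$ and variance $pa^{2}$ can also be spot-checked by simulation. The sketch below draws samples with `random.gammavariate` from the Python standard library, which takes the shape first and the scale second. The helper name `sample_moments`, the sample size, and the seed are arbitrary choices for this example.

```python
import random

def sample_moments(p, a, n=200_000, seed=0):
    """Return the sample mean and (population-style) sample variance of n gamma draws."""
    rng = random.Random(seed)  # a fixed seed makes the run repeatable
    # random.gammavariate(alpha, beta): alpha is the shape, beta is the scale.
    draws = [rng.gammavariate(p, a) for _ in range(n)]
    mean = sum(draws) / n
    variance = sum((x - mean) ** 2 for x in draws) / n
    return mean, variance

# Compare the estimates with a * p = 6 and p * a**2 = 12.
mean_hat, var_hat = sample_moments(p=3.0, a=2.0)
print(mean_hat, var_hat)
```

The estimates fluctuate from seed to seed, so agreement should be judged against the Monte Carlo error rather than expected to be exact.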
