This Quantum World/print version

| This is the print version of This Quantum World You won't see this message or any elements not part of the book's content when you print or preview this page. |

The current, editable version of this book is available in Wikibooks, the open-content textbooks collection, at

//en.wikibooks.org/wiki/This_Quantum_World

Atoms

[edit | edit source]What does an atom look like?

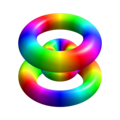

[edit | edit source]Like this?

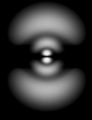

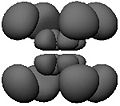

[edit | edit source]Or like this?

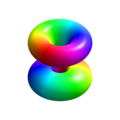

[edit | edit source]None of these images depicts an atom as it is. This is because it is impossible to even visualize an atom as it is. Whereas the best you can do with the images in the first row is to erase them from your memory—they represent a way of viewing the atom that is too simplified for the way we want to start thinking about it—the eight fuzzy images in the next row deserve scrutiny. Each represents an aspect of a stationary state of atomic hydrogen. You see neither the nucleus (a proton) nor the electron. What you see is a fuzzy position. To be precise, what you see are cloud-like blurs, which are symmetrical about the vertical and horizontal axes, and which represent the atom's internal relative position—the position of the electron relative to the proton or the position of the proton relative to the electron.

- What is the state of an atom?

- What is a stationary state?

- What exactly is a fuzzy position?

- How does such a blur represent the atom's internal relative position?

- Why can we not describe the atom's internal relative position as it is?

Quantum states

[edit | edit source]In quantum mechanics, states are probability algorithms. We use them to calculate the probabilities of the possible outcomes of measurements on the basis of actual measurement outcomes. A quantum state takes as its input

- one or several measurement outcomes,

- a measurement M,

- the time of M,

and it yields as its output the probabilities of the possible outcomes of M.

A quantum state is called stationary if the probabilities it assigns are independent of the time of the measurement.

From the mathematical point of view, each blur represents a density function . Imagine a small region like the little box inside the first blur. And suppose that this is a region of the (mathematical) space of positions relative to the proton. If you integrate over you obtain the probability of finding the electron in provided that the appropriate measurement is made:

"Appropriate" here means capable of ascertaining the truth value of the proposition "the electron is in ", the possible truth values being "true" or "false". What we see in each of the following images is a surface of constant probability density.

Now imagine that the appropriate measurement is made. Before the measurement, the electron is neither inside nor outside . If it were inside, the probability of finding it outside would be zero, and if it were outside, the probability of finding it inside would be zero. After the measurement, on the other hand, the electron is either inside or outside

Conclusions:

- Before the measurement, the proposition "the electron is in " is neither true nor false; it lacks a (definite) truth value.

- A measurement generally changes the state of the system on which it is performed.

As mentioned before, probabilities are assigned not only to measurement outcomes but also on the basis of measurement outcomes. Each density function serves to assign probabilities to the possible outcomes of a measurement of the electron's position relative to the proton. And in each case the assignment is based on the outcomes of a simultaneous measurement of three observables: the atom's energy (specified by the value of the principal quantum number ), its total angular momentum (specified by a letter, here p, d, or f), and the vertical component of its angular momentum .

Fuzzy observables

[edit | edit source]We say that an observable with a finite or countable number of possible values is fuzzy (or that it has a fuzzy value) if and only if at least one of the propositions "The value of is " lacks a truth value. This is equivalent to the following necessary and sufficient condition: the probability assigned to at least one of the values is neither 0 nor 1.

What about observables that are generally described as continuous, like a position?

The description of an observable as "continuous" is potentially misleading. For one thing, we cannot separate an observable and its possible values from a measurement and its possible outcomes, and a measurement with an uncountable set of possible outcomes is not even in principle possible. For another, there is not a single observable called "position". Different partitions of space define different position measurements with different sets of possible outcomes.

- Corollary: The possible outcomes of a position measurement (or the possible values of a position observable) are defined by a partition of space. They make up a finite or countable set of regions of space. An exact position is therefore neither a possible measurement outcome nor a possible value of a position observable.

So how do those cloud-like blurs represent the electron's fuzzy position relative to the proton? Strictly speaking, they graphically represent probability densities in the mathematical space of exact relative positions, rather than fuzzy positions. It is these probability densities that represent fuzzy positions by allowing us to calculate the probability of every possible value of every position observable.

It should now be clear why we cannot describe the atom's internal relative position as it is. To describe a fuzzy observable is to assign probabilities to the possible outcomes of a measurement. But a description that rests on the assumption that a measurement is made, does not describe an observable as it is (by itself, regardless of measurements).

Planck

[edit | edit source]Quantum mechanics began as a desperate measure to get around some spectacular failures of what subsequently came to be known as classical physics.

In 1900 Max Planck discovered a law that perfectly describes the spectrum of a glowing hot object. Planck's radiation formula turned out to be irreconcilable with the physics of his time. (If classical physics were right, you would be blinded by ultraviolet light if you looked at the burner of a stove, aka the UV catastrophe.) At first, it was just a fit to the data, "a fortuitous guess at an interpolation formula" as Planck himself called it. Only weeks later did it turn out to imply the quantization of energy for the emission of electromagnetic radiation: the energy of a quantum of radiation is proportional to the frequency of the radiation, the constant of proportionality being Planck's constant

- .

We can of course use the angular frequency instead of . Introducing the reduced Planck constant , we then have

- .

This theory is valid at all temperatures and helpful in explaining radiation by black bodies.

Rutherford

[edit | edit source]In 1911 Ernest Rutherford proposed a model of the atom based on experiments by Geiger and Marsden. Geiger and Marsden had directed a beam of alpha particles at a thin gold foil. Most of the particles passed the foil more or less as expected, but about one in 8000 bounced back as if it had encountered a much heavier object. In Rutherford's own words this was as incredible as if you fired a 15 inch cannon ball at a piece of tissue paper and it came back and hit you. After analysing the data collected by Geiger and Marsden, Rutherford concluded that the diameter of the atomic nucleus (which contains over 99.9% of the atom's mass) was less than 0.01% of the diameter of the entire atom. He suggested that the atom is spherical in shape and the atomic electrons orbit the nucleus much like planets orbit a star. He calculated mass of electron as 1/7000th part of the mass of an alpha particle. Rutherford's atomic model is also called the Nuclear model.

The problem of having electrons orbit the nucleus the same way that a planet orbits a star is that classical electromagnetic theory demands that an orbiting electron will radiate away its energy and spiral into the nucleus in about 0.5×10-10 seconds. This was the worst quantitative failure in the history of physics, under-predicting the lifetime of hydrogen by at least forty orders of magnitude! (This figure is based on the experimentally established lower bound on the proton's lifetime.)

Bohr

[edit | edit source]In 1913 Niels Bohr postulated that the angular momentum of an orbiting atomic electron was quantized: its "allowed" values are integral multiples of :

- where

Why quantize angular momentum, rather than any other quantity?

- Radiation energy of a given frequency is quantized in multiples of Planck's constant.

- Planck's constant is measured in the same units as angular momentum.

Bohr's postulate explained not only the stability of atoms but also why the emission and absorption of electromagnetic radiation by atoms is discrete. In addition it enabled him to calculate with remarkable accuracy the spectrum of atomic hydrogen — the frequencies at which it is able to emit and absorb light (visible as well as infrared and ultraviolet). The following image shows the visible emission spectrum of atomic hydrogen, which contains four lines of the Balmer series.

Apart from his quantization postulate, Bohr's reasoning at this point remained completely classical. Let's assume with Bohr that the electron's orbit is a circle of radius The speed of the electron is then given by and the magnitude of its acceleration by Eliminating yields In the cgs system of units, the magnitude of the Coulomb force is simply where is the magnitude of the charge of both the electron and the proton. Via Newton's the last two equations yield where is the electron's mass. If we take the proton to be at rest, we obtain for the electron's kinetic energy.

If the electron's potential energy at infinity is set to 0, then its potential energy at a distance from the proton is minus the work required to move it from to infinity,

The total energy of the electron thus is

We want to express this in terms of the electron's angular momentum Remembering that and hence and multiplying the numerator by and the denominator by we obtain

Now comes Bohr's break with classical physics: he simply replaced by . The "allowed" values for the angular momentum define a series of allowed values for the atom's energy:

As a result, the atom can emit or absorb energy only by amounts equal to the absolute values of the differences

one Rydberg (Ry) being equal to This is also the ionization energy of atomic hydrogen — the energy needed to completely remove the electron from the proton. Bohr's predicted value was found to be in excellent agreement with the measured value.

Using two of the above expressions for the atom's energy and solving for we obtain For the ground state this is the Bohr radius of the hydrogen atom, which equals The mature theory yields the same figure but interprets it as the most likely distance from the proton at which the electron would be found if its distance from the proton were measured.

de Broglie

[edit | edit source]In 1923, ten years after Bohr had derived the spectrum of atomic hydrogen by postulating the quantization of angular momentum, Louis de Broglie hit on an explanation of why the atom's angular momentum comes in multiples of Since 1905, Einstein had argued that electromagnetic radiation itself was quantized (and not merely its emission and absorption, as Planck held). If electromagnetic waves can behave like particles (now known as photons), de Broglie reasoned, why cannot electrons behave like waves?

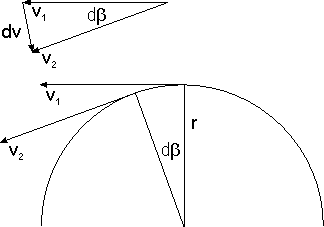

Suppose that the electron in a hydrogen atom is a standing wave on what has so far been thought of as the electron's circular orbit. (The crests, troughs, and nodes of a standing wave are stationary.) For such a wave to exist on a circle, the circumference of the latter must be an integral multiple of the wavelength of the former:

Einstein had established not only that electromagnetic radiation of frequency comes in quanta of energy but also that these quanta carry a momentum Using this formula to eliminate from the condition one obtains But is just the angular momentum of a classical electron with an orbit of radius In this way de Broglie derived the condition that Bohr had simply postulated.

Schrödinger

[edit | edit source]If the electron is a standing wave, why should it be confined to a circle? After de Broglie's crucial insight that particles are waves of some sort, it took less than three years for the mature quantum theory to be found, not once, but twice. By Werner Heisenberg in 1925 and by Erwin Schrödinger in 1926. If we let the electron be a standing wave in three dimensions, we have all it takes to arrive at the Schrödinger equation, which is at the heart of the mature theory.

Let's keep to one spatial dimension. The simplest mathematical description of a wave of angular wavenumber and angular frequency (at any rate, if you are familiar with complex numbers) is the function

Let's express the phase in terms of the electron's energy and momentum

The partial derivatives with respect to and are

We also need the second partial derivative of with respect to :

We thus have

In non-relativistic classical physics the kinetic energy and the kinetic momentum of a free particle are related via the dispersion relation

This relation also holds in non-relativistic quantum physics. Later you will learn why.

In three spatial dimensions, is the magnitude of a vector . If the particle also has a potential energy and a potential momentum (in which case it is not free), and if and stand for the particle's total energy and total momentum, respectively, then the dispersion relation is

By the square of a vector we mean the dot (or scalar) product . Later you will learn why we represent possible influences on the motion of a particle by such fields as and

Returning to our fictitious world with only one spatial dimension, allowing for a potential energy , substituting the differential operators and for and in the resulting dispersion relation, and applying both sides of the resulting operator equation to we arrive at the one-dimensional (time-dependent) Schrödinger equation:

In three spatial dimensions and with both potential energy and potential momentum present, we proceed from the relation substituting for and for The differential operator is a vector whose components are the differential operators The result:

where is now a function of and This is the three-dimensional Schrödinger equation. In non-relativistic investigations (to which the Schrödinger equation is confined) the potential momentum can generally be ignored, which is why the Schrödinger equation is often given this form:

The free Schrödinger equation (without even the potential energy term) is satisfied by (in one dimension) or (in three dimensions) provided that equals which is to say: However, since we are dealing with a homogeneous linear differential equation — which tells us that solutions may be added and/or multiplied by an arbitrary constant to yield additional solutions — any function of the form

with solves the (one-dimensional) Schrödinger equation. If no integration boundaries are specified, then we integrate over the real line, i.e., the integral is defined as the limit The converse also holds: every solution is of this form. The factor in front of the integral is present for purely cosmetic reasons, as you will realize presently. is the Fourier transform of which means that

The Fourier transform of exists because the integral is finite. In the next section we will come to know the physical reason why this integral is finite.

So now we have a condition that every electron "wave function" must satisfy in order to satisfy the appropriate dispersion relation. If this (and hence the Schrödinger equation) contains either or both of the potentials and , then finding solutions can be tough. As a budding quantum mechanician, you will spend a considerable amount of time learning to solve the Schrödinger equation with various potentials.

Born

[edit | edit source]In the same year that Erwin Schrödinger published the equation that now bears his name, the nonrelativistic theory was completed by Max Born's insight that the Schrödinger wave function is actually nothing but a tool for calculating probabilities, and that the probability of detecting a particle "described by" in a region of space is given by the volume integral

— provided that the appropriate measurement is made, in this case a test for the particle's presence in . Since the probability of finding the particle somewhere (no matter where) has to be 1, only a square integrable function can "describe" a particle. This rules out which is not square integrable. In other words, no particle can have a momentum so sharp as to be given by times a wave vector , rather than by a genuine probability distribution over different momenta.

Given a probability density function , we can define the expected value

and the standard deviation

as well as higher moments of . By the same token,

and

Here is another expression for

To check that the two expressions are in fact equal, we plug into the latter expression:

Next we replace by and shuffle the integrals with the mathematical nonchalance that is common in physics:

The expression in square brackets is a representation of Dirac's delta distribution the defining characteristic of which is for any continuous function (In case you didn't notice, this proves what was to be proved.)

Heisenberg

[edit | edit source]In the same annus mirabilis of quantum mechanics, 1926, Werner Heisenberg proved the so-called "uncertainty" relation

Heisenberg spoke of Unschärfe, the literal translation of which is "fuzziness" rather than "uncertainty". Since the relation is a consequence of the fact that and are related to each other via a Fourier transformation, we leave the proof to the mathematicians. The fuzziness relation for position and momentum follows via . It says that the fuzziness of a position (as measured by ) and the fuzziness of the corresponding momentum (as measured by ) must be such that their product equals at least

The Feynman route to Schrödinger

[edit | edit source]The probabilities of the possible outcomes of measurements performed at a time are determined by the Schrödinger wave function . The wave function is determined via the Schrödinger equation by What determines ? Why, the outcome of a measurement performed at — what else? Actual measurement outcomes determine the probabilities of possible measurement outcomes.

Two rules

[edit | edit source]In this chapter we develop the quantum-mechanical probability algorithm from two fundamental rules. To begin with, two definitions:

- Alternatives are possible sequences of measurement outcomes.

- With each alternative is associated a complex number called amplitude.

Suppose that you want to calculate the probability of a possible outcome of a measurement given the actual outcome of an earlier measurement. Here is what you have to do:

- Choose any sequence of measurements that may be made in the meantime.

- Assign an amplitude to each alternative.

- Apply either of the following rules:

Rule A: If the intermediate measurements are made (or if it is possible to infer from other measurements what their outcomes would have been if they had been made), first square the absolute values of the amplitudes of the alternatives and then add the results.

- Rule B: If the intermediate measurements are not made (and if it is not possible to infer from other measurements what their outcomes would have been), first add the amplitudes of the alternatives and then square the absolute value of the result.

In subsequent sections we will explore the consequences of these rules for a variety of setups, and we will think about their origin — their raison d'être. Here we shall use Rule B to determine the interpretation of given Born's probabilistic interpretation of .

In the so-called "continuum normalization", the unphysical limit of a particle with a sharp momentum is associated with the wave function

Hence we may write

is the amplitude for the outcome of an infinitely precise momentum measurement. is the amplitude for the outcome of an infinitely precise position measurement performed (at time t) subsequent to an infinitely precise momentum measurement with outcome And is the amplitude for obtaining by an infinitely precise position measurement performed at time

The preceding equation therefore tells us that the amplitude for finding at is the product of

- the amplitude for the outcome and

- the amplitude for the outcome (at time ) subsequent to a momentum measurement with outcome

summed over all values of

Under the conditions stipulated by Rule A, we would have instead that the probability for finding at is the product of

- the probability for the outcome and

- the probability for the outcome (at time ) subsequent to a momentum measurement with outcome

summed over all values of

The latter is what we expect on the basis of standard probability theory. But if this holds under the conditions stipulated by Rule A, then the same holds with "amplitude" substituted from "probability" under the conditions stipulated by Rule B. Hence, given that and are amplitudes for obtaining the outcome in an infinitely precise position measurement, is the amplitude for obtaining the outcome in an infinitely precise momentum measurement.

Notes:

- Since Rule B stipulates that the momentum measurement is not actually made, we need not worry about the impossibility of making an infinitely precise momentum measurement.

- If we refer to as "the probability of obtaining the outcome " what we mean is that integrated over any interval or subset of the real line is the probability of finding our particle in this interval or subset.

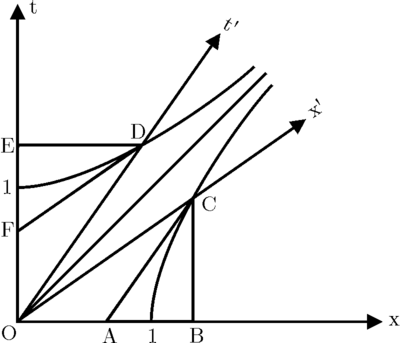

An experiment with two slits

[edit | edit source]

In this experiment, the final measurement (to the possible outcomes of which probabilities are assigned) is the detection of an electron at the backdrop, by a detector situated at D (D being a particular value of x). The initial measurement outcome, on the basis of which probabilities are assigned, is the launch of an electron by an electron gun G. (Since we assume that G is the only source of free electrons, the detection of an electron behind the slit plate also indicates the launch of an electron in front of the slit plate.) The alternatives or possible intermediate outcomes are

- the electron went through the left slit (L),

- the electron went through the right slit (R).

The corresponding amplitudes are and

Here is what we need to know in order to calculate them:

- is the product of two complex numbers, for which we shall use the symbols and

- By the same token,

- The absolute value of is inverse proportional to the distance between A and B.

- The phase of is proportional to

For obvious reasons is known as a propagator.

Why product?

[edit | edit source]Recall the fuzziness ("uncertainty") relation, which implies that as In this limit the particle's momentum is completely indefinite or, what comes to the same, has no value at all. As a consequence, the probability of finding a particle at B, given that it was last "seen" at A, depends on the initial position A but not on any initial momentum, inasmuch as there is none. Hence whatever the particle does after its detection at A is independent of what it did before then. In probability-theoretic terms this means that the particle's propagation from G to L and its propagation from L to D are independent events. So the probability of propagation from G to D via L is the product of the corresponding probabilities, and so the amplitude of propagation from G to D via L is the product of the corresponding amplitudes.

Why is the absolute value inverse proportional to the distance?

[edit | edit source]Imagine (i) a sphere of radius whose center is A and (ii) a detector monitoring a unit area of the surface of this sphere. Since the total surface area is proportional to and since for a free particle the probability of detection per unit area is constant over the entire surface (explain why!), the probability of detection per unit area is inverse proportional to The absolute value of the amplitude of detection per unit area, being the square root of the probability, is therefore inverse proportional to

Why is the phase proportional to the distance?

[edit | edit source]The multiplicativity of successive propagators implies the additivity of their phases. Together with the fact that, in the case of a free particle, the propagator (and hence its phase) can only depend on the distance between A and B, it implies the proportionality of the phase of to

Calculating the interference pattern

[edit | edit source]According to Rule A, the probability of detecting at G an electron launched at D is

If the slits are equidistant from G, then and are equal and is proportional to

Here is the resulting plot of against the position of the detector:

(solid line) is the sum of two distributions (dotted lines), one for the electrons that went through L and one for the electrons that went through R.

According to Rule B, the probability of detecting at D an electron launched at G is proportional to

where is the difference and is the wavenumber, which is sufficiently sharp to be approximated by a number. (And it goes without saying that you should check this result.)

Here is the plot of against for a particular set of values for the wavenumber, the distance between the slits, and the distance between the slit plate and the backdrop:

Observe that near the minima the probability of detection is less if both slits are open than it is if one slit is shut. It is customary to say that destructive interference occurs at the minima and that constructive interference occurs at the maxima, but do not think of this as the description of a physical process. All we mean by "constructive interference" is that a probability calculated according to Rule B is greater than the same probability calculated according to Rule A, and all we mean by "destructive interference" is that a probability calculated according to Rule B is less than the same probability calculated according to Rule A.

Here is how an interference pattern builds up over time[1]:

-

100 electrons

-

3000 electrons

-

20000 electrons

-

70000 electrons

- ↑ A. Tonomura, J. Endo, T. Matsuda, T. Kawasaki, & H. Ezawa, "Demonstration of single-electron buildup of an interference pattern", American Journal of Physics 57, 117-120, 1989.

Bohm's story

[edit | edit source]Hidden Variables

[edit | edit source]Suppose that the conditions stipulated by Rule B are met: there is nothing — no event, no state of affairs, anywhere, anytime — from which the slit taken by an electron can be inferred. Can it be true, in this case,

- that each electron goes through a single slit — either L or R — and

- that the behavior of an electron that goes through one slit does not depend on whether the other slit is open or shut?

To keep the language simple, we will say that an electron leaves a mark where it is detected at the backdrop. If each electron goes through a single slit, then the observed distribution of marks when both slits are open is the sum of two distributions, one from electrons that went through L and one from electrons that went through R:

If in addition the behavior of an electron that goes through one slit does not depend on whether the other slit is open or shut, then we can observe by keeping R shut, and we can observe by keeping L shut. What we observe if R is shut is the left dashed hump, and what we observed if L is shut is the right dashed hump:

Hence if the above two conditions (as well as those stipulated by Rule B) are satisfied, we will see the sum of these two humps. In reality what we see is this:

Thus all of those conditions cannot be simultaneously satisfied. If Rule B applies, then either it is false that each electron goes through a single slit or the behavior of an electron that goes through one slit does depend on whether the other slit is open or shut.

Which is it?

According to one attempt to make physical sense of the mathematical formalism of quantum mechanics, due to Louis de Broglie and David Bohm, each electron goes through a single slit, and the behavior of an electron that goes through one slit depends on whether the other slit is open or shut.

So how does the state of, say, the right slit (open or shut) affect the behavior of an electron that goes through the left slit? In both de Broglie's pilot wave theory and Bohmian mechanics, the electron is assumed to be a well-behaved particle in the sense that it follows a precise path — its position at any moment is given by three coordinates — and in addition there exists a wave that guides the electron by exerting on it a force. If only one slit is open, this passes through one slit. If both slits are open, this passes through both slits and interferes with itself (in the "classical" sense of interference). As a result, it guides the electrons along wiggly paths that cluster at the backdrop so as to produce the observed interference pattern:

According to this story, the reason why electrons coming from the same source or slit arrive in different places, is that they start out in slightly different directions and/or with slightly different speeds. If we had exact knowledge of their initial positions and momenta, we could make an exact prediction of each electron's subsequent motion. Obtaining this exact knowledge, however, is impossible in practice. The [[../../Serious illnesses/Born#Heisenberg|uncertainty principle ]] prevents us from making exact predictions of a particle's motion. Hence even though according to Bohm the initial positions and momenta are in possession of precise values, we can never know them.

If positions and momenta have precise values, then why can we not measure them? It used to be said that this is because a measurement exerts an uncontrollable influence on the value of the observable being measured. Yet this merely raises another question: why do measurements exert uncontrollable influences? This may be true for all practical purposes, but the uncertainty principle does not say that merely holds for all practical purposes. Moreover, it isn't the case that measurements necessarily "disturb" the systems on which they are performed.

The statistical element of quantum mechanics is an essential feature of the theory. The postulate of an underlying determinism, which in order to be consistent with the theory has to be a crypto-determinism, not only adds nothing to our understanding of the theory but also precludes any proper understanding of this essential feature of the theory. There is, in fact, a simple and obvious reason why hidden variables are hidden: the reason why they are strictly (rather than merely for all practical purposes) unobservable is that they do not exist.

At one time Einstein insisted that theories ought to be formulated without reference to unobservable quantities. When Heisenberg later mentioned to Einstein that this maxim had guided him in his discovery of the uncertainty principle, Einstein replied something to this effect: "Even if I once said so, it is nonsense." His point was that before one has a theory, one cannot know what is observable and what is not. Our situation here is different. We have a theory, and this tells in no uncertain terms what is observable and what is not.

Propagator for a free and stable particle

[edit | edit source]The propagator as a path integral

[edit | edit source]Suppose that we make m intermediate position measurements at fixed intervals of duration Each of these measurements is made with the help of an array of detectors monitoring n mutually disjoint regions Under the conditions stipulated by Rule B, the propagator now equals the sum of amplitudes

It is not hard to see what happens in the double limit (which implies that ) and The multiple sum becomes an integral over continuous spacetime paths from A to B, and the amplitude becomes a complex-valued functional — a complex function of continuous functions representing continuous spacetime paths from A to B:

The integral is not your standard Riemann integral to which each infinitesimal interval makes a contribution proportional to the value that takes inside the interval, but a functional or path integral, to which each "bundle" of paths of infinitesimal width makes a contribution proportional to the value that takes inside the bundle.

As it stands, the path integral is just the idea of an idea. Appropriate evaluation methods have to be devised on a more or less case-by-case basis.

A free particle

[edit | edit source]Now pick any path from A to B, and then pick any infinitesimal segment of . Label the start and end points of by inertial coordinates and respectively. In the general case, the amplitude will be a function of and In the case of a free particle, depends neither on the position of in spacetime (given by ) nor on the spacetime orientiaton of (given by the four-velocity but only on the proper time interval

(Because its norm equals the speed of light, the four-velocity depends on three rather than four independent parameters. Together with they contain the same information as the four independent numbers )

Thus for a free particle With this, the multiplicativity of successive propagators tells us that

It follows that there is a complex number such that where the line integral gives the time that passes on a clock as it travels from A to B via

A free and stable particle

[edit | edit source]By integrating (as a function of ) over the whole of space, we obtain the probability of finding that a particle launched at the spacetime point still exists at the time For a stable particle this probability equals 1:

If you contemplate this equation with a calm heart and an open mind, you will notice that if the complex number had a real part then the integral between the two equal signs would either blow up or drop off exponentially as a function of , due to the exponential factor .

Meaning of mass

[edit | edit source]The propagator for a free and stable particle thus has a single "degree of freedom": it depends solely on the value of If proper time is measured in seconds, then is measured in radians per second. We may think of with a proper-time parametrization of as a clock carried by a particle that travels from A to B via provided we keep in mind that we are thinking of an aspect of the mathematical formalism of quantum mechanics rather than an aspect of the real world.

It is customary

- to insert a minus (so the clock actually turns clockwise!):

- to multiply by (so that we may think of as the rate at which the clock "ticks" — the number of cycles it completes each second):

- to divide by Planck's constant (so that is measured in energy units and called the rest energy of the particle):

- and to multiply by (so that is measured in mass units and called the particle's rest mass):

The purpose of using the same letter everywhere is to emphasize that it denotes the same physical quantity, merely measured in different units. If we use natural units in which rather than conventional ones, the identity of the various 's is immediately obvious.

From quantum to classical

[edit | edit source]Action

[edit | edit source]Let's go back to the propagator

For a free and stable particle we found that

where is the proper-time interval associated with the path element . For the general case we found that the amplitude is a function of and or, equivalently, of the coordinates , the components of the 4-velocity, as well as . For a particle that is stable but not free, we obtain, by the same argument that led to the above amplitude,

where we have introduced the functional , which goes by the name action.

For a free and stable particle, is the proper time (or proper duration) multiplied by , and the infinitesimal action is proportional to :

Let's recap. We know all about the motion of a stable particle if we know how to calculate the probability (in all circumstances). We know this if we know the amplitude . We know the latter if we know the functional . And we know this functional if we know the infinitesimal action or (in all circumstances).

What do we know about ?

The multiplicativity of successive propagators implies the additivity of actions associated with neighboring infinitesimal path segments and . In other words,

implies

It follows that the differential is homogeneous (of degree 1) in the differentials :

This property of makes it possible to think of the action as a (particle-specific) length associated with , and of as defining a (particle-specific) spacetime geometry. By substituting for we get:

Something is wrong, isn't it? Since the right-hand side is now a finite quantity, we shouldn't use the symbol for the left-hand side. What we have actually found is that there is a function , which goes by the name Lagrange function, such that .

Geodesic equations

[edit | edit source]Consider a spacetime path from to Let's change ("vary") it in such a way that every point of gets shifted by an infinitesimal amount to a corresponding point except the end points, which are held fixed: and at both and

If then

By the same token,

In general, the change will cause a corresponding change in the action: If the action does not change (that is, if it is stationary at ),

then is a geodesic of the geometry defined by (A function is stationary at those values of at which its value does not change if changes infinitesimally. By the same token we call a functional stationary if its value does not change if changes infinitesimally.)

To obtain a handier way to characterize geodesics, we begin by expanding

This gives us

Next we use the product rule for derivatives,

to replace the last two terms of (*), which takes us to

The second integral vanishes because it is equal to the difference between the values of the expression in brackets at the end points and where and If is a geodesic, then the first integral vanishes, too. In fact, in this case must hold for all possible (infinitesimal) variations and whence it follows that the integrand of the first integral vanishes. The bottom line is that the geodesics defined by satisfy the geodesic equations

Principle of least action

[edit | edit source]If an object travels from to it travels along all paths from to in the same sense in which an electron goes through both slits. Then how is it that a big thing (such as a planet, a tennis ball, or a mosquito) appears to move along a single well-defined path?

There are at least two reasons. One of them is that the bigger an object is, the harder it is to satisfy the conditions stipulated by Rule Another reason is that even if these conditions are satisfied, the likelihood of finding an object of mass where according to the laws of classical physics it should not be, decreases as increases.

To see this, we need to take account of the fact that it is strictly impossible to check whether an object that has travelled from to has done so along a mathematically precise path Let us make the half realistic assumption that what we can check is whether an object has travelled from to within a a narrow bundle of paths — the paths contained in a narrow tube The probability of finding that it has, is the absolute square of the path integral which sums over the paths contained in

Let us assume that there is exactly one path from to for which is stationary: its length does not change if we vary the path ever so slightly, no matter how. In other words, we assume that there is exactly one geodesic. Let's call it and let's assume it lies in

No matter how rapidly the phase changes under variation of a generic path it will be stationary at This means, loosely speaking, that a large number of paths near contribute to with almost equal phases. As a consequence, the magnitude of the sum of the corresponding phase factors is large.

If is not stationary at all depends on how rapidly it changes under variation of If it changes sufficiently rapidly, the phases associated with paths near are more or less equally distributed over the interval so that the corresponding phase factors add up to a complex number of comparatively small magnitude. In the limit the only significant contributions to come from paths in the infinitesimal neighborhood of

We have assumed that lies in If it does not, and if changes sufficiently rapidly, the phases associated with paths near any path in are more or less equally distributed over the interval so that in the limit there are no significant contributions to

For a free particle, as you will remember, From this we gather that the likelihood of finding a freely moving object where according to the laws of classical physics it should not be, decreases as its mass increases. Since for sufficiently massive objects the contributions to the action due to influences on their motion are small compared to this is equally true of objects that are not moving freely.

What, then, are the laws of classical physics?

They are what the laws of quantum physics degenerate into in the limit In this limit, as you will gather from the above, the probability of finding that a particle has traveled within a tube (however narrow) containing a geodesic, is 1, and the probability of finding that a particle has traveled within a tube (however wide) not containing a geodesic, is 0. Thus we may state the laws of classical physics (for a single "point mass", to begin with) by saying that it follows a geodesic of the geometry defined by

This is readily generalized. The propagator for a system with degrees of freedom — such as an -particle system with degrees of freedom — is

where and are the system's respective configurations at the initial time and the final time and the integral sums over all paths in the system's -dimensional configuration spacetime leading from to In this case, too, the corresponding classical system follows a geodesic of the geometry defined by the action differential which now depends on spatial coordinates, one time coordinate, and the corresponding differentials.

The statement that a classical system follows a geodesic of the geometry defined by its action, is often referred to as the principle of least action. A more appropriate name is principle of stationary action.

Energy and momentum

[edit | edit source]Observe that if does not depend on (that is, ) then

is constant along geodesics. (We'll discover the reason for the negative sign in a moment.)

Likewise, if does not depend on (that is, ) then

is constant along geodesics.

tells us how much the projection of a segment of a path onto the time axis contributes to the action of tells us how much the projection of onto space contributes to If has no explicit time dependence, then equal intervals of the time axis make equal contributions to and if has no explicit space dependence, then equal intervals of any spatial axis make equal contributions to In the former case, equal time intervals are physically equivalent: they represent equal durations. In the latter case, equal space intervals are physically equivalent: they represent equal distances.

If equal intervals of the time coordinate or equal intervals of a space coordinate are not physically equivalent, this is so for either of two reasons. The first is that non-inertial coordinates are used. For if inertial coordinates are used, then every freely moving point mass moves by equal intervals of the space coordinates in equal intervals of the time coordinate, which means that equal coordinate intervals are physically equivalent. The second is that whatever it is that is moving is not moving freely: something, no matter what, influences its motion, no matter how. This is because one way of incorporating effects on the motion of an object into the mathematical formalism of quantum physics, is to make inertial coordinate intervals physically inequivalent, by letting depend on and/or

Thus for a freely moving classical object, both and are constant. Since the constancy of follows from the physical equivalence of equal intervals of coordinate time (a.k.a. the "homogeneity" of time), and since (classically) energy is defined as the quantity whose constancy is implied by the homogeneity of time, is the object's energy.

By the same token, since the constancy of follows from the physical equivalence of equal intervals of any spatial coordinate axis (a.k.a. the "homogeneity" of space), and since (classically) momentum is defined as the quantity whose constancy is implied by the homogeneity of space, is the object's momentum.

Let us differentiate a former result,

with respect to The left-hand side becomes

while the right-hand side becomes just Setting and using the above definitions of and we obtain

is a 4-scalar. Since are the components of a 4-vector, the left-hand side, is a 4-scalar if and only if are the components of another 4-vector.

(If we had defined without the minus, this 4-vector would have the components )

In the rest frame of a free point mass, and Using the Lorentz transformations, we find that this equals

where is the velocity of the point mass in Compare with the above framed equation to find that for a free point mass,

Lorentz force law

[edit | edit source]To incorporate effects on the motion of a particle (regardless of their causes), we must modify the action differential that a free particle associates with a path segment In doing so we must take care that the modified (i) remains homogeneous in the differentials and (ii) remains a 4-scalar. The most straightforward way to do this is to add a term that is not just homogeneous but linear in the coordinate differentials:

Believe it or not, all classical electromagnetic effects (as against their causes) are accounted for by this expression. is a scalar field (that is, a function of time and space coordinates that is invariant under rotations of the space coordinates), is a 3-vector field, and is a 4-vector field. We call and the scalar potential and the vector potential, respectively. The particle-specific constant is the electric charge, which determines how strongly a particle of a given species is affected by influences of the electromagnetic kind.

If a point mass is not free, the expressions at the end of the previous section give its kinetic energy and its kinetic momentum Casting (*) into the form

and plugging it into the definitions

we obtain

and are the particle's potential energy and potential momentum, respectively.

Now we plug (**) into the geodesic equation

For the right-hand side we obtain

while the left-hand side works out at

Two terms cancel out, and the final result is

As a classical object travels along the segment of a geodesic, its kinetic momentum changes by the sum of two terms, one linear in the temporal component of and one linear in the spatial component How much contributes to the change of depends on the electric field and how much contributes depends on the magnetic field The last equation is usually written in the form

called the Lorentz force law, and accompanied by the following story: there is a physical entity known as the electromagnetic field, which is present everywhere, and which exerts on a charge an electric force and a magnetic force

(Note: This form of the Lorentz force law holds in the Gaussian system of units. In the MKSA system of units the is missing.)

Whence the classical story?

[edit | edit source]Imagine a small rectangle in spacetime with corners

Let's calculate the electromagnetic contribution to the action of the path from to via for a unit charge () in natural units ( ):

Next, the contribution to the action of the path from to via :

Look at the difference:

Alternatively, you may think of as the electromagnetic contribution to the action of the loop

Let's repeat the calculation for a small rectangle with corners

Thus the electromagnetic contribution to the action of this loop equals the flux of through the loop.

Remembering (i) Stokes' theorem and (ii) the definition of in terms of we find that

In (other) words, the magnetic flux through a loop (or through any surface bounded by ) equals the circulation of around the loop (or around any surface bounded by the loop).

The effect of a circulation around the finite rectangle is to increase (or decrease) the action associated with the segment relative to the action associated with the segment If the actions of the two segments are equal, then we can expect the path of least action from to to be a straight line. If one segment has a greater action than the other, then we can expect the path of least action from to to curve away from the segment with the larger action.

Compare this with the classical story, which explains the curvature of the path of a charged particle in a magnetic field by invoking a force that acts at right angles to both the magnetic field and the particle's direction of motion. The quantum-mechanical treatment of the same effect offers no such explanation. Quantum mechanics invokes no mechanism of any kind. It simply tells us that for a sufficiently massive charge traveling from to the probability of finding that it has done so within any bundle of paths not containing the action-geodesic connecting with is virtually 0.

Much the same goes for the classical story according to which the curvature of the path of a charged particle in a spacetime plane is due to a force that acts in the direction of the electric field. (Observe that curvature in a spacetime plane is equivalent to acceleration or deceleration. In particular, curvature in a spacetime plane containing the axis is equivalent to acceleration in a direction parallel to the axis.) In this case the corresponding circulation is that of the 4-vector potential around a spacetime loop.

Schrödinger at last

[edit | edit source]The Schrödinger equation is non-relativistic. We obtain the non-relativistic version of the electromagnetic action differential,

by expanding the root and ignoring all but the first two terms:

This is obviously justified if which defines the non-relativistic regime.

Writing the potential part of as makes it clear that in most non-relativistic situations the effects represented by the vector potential are small compared to those represented by the scalar potential If we ignore them (or assume that vanishes), and if we include the charge in the definition of (or assume that ), we obtain

for the action associated with a spacetime path

Because the first term is the same for all paths from to it has no effect on the differences between the phases of the amplitudes associated with different paths. By dropping it we change neither the classical phenomena (inasmuch as the extremal path remains the same) nor the quantum phenomena (inasmuch as interference effects only depend on those differences). Thus

We now introduce the so-called wave function as the amplitude of finding our particle at if the appropriate measurement is made at time accordingly, is the amplitude of finding the particle first at (at time ) and then at (at time ). Integrating over we obtain the amplitude of finding the particle at (at time ), provided that Rule B applies. The wave function thus satisfies the equation

We again simplify our task by pretending that space is one-dimensional. We further assume that and differ by an infinitesimal interval Since is infinitesimal, there is only one path leading from to We can therefore forget about the path integral except for a normalization factor implicit in the integration measure and make the following substitutions:

This gives us

We obtain a further simplification if we introduce and integrate over instead of (The integration "boundaries" and are the same for both and ) We now have that

Since we are interested in the limit we expand all terms to first order in To which power in should we expand? As increases, the phase increases at an infinite rate (in the limit ) unless is of the same order as In this limit, higher-order contributions to the integral cancel out. Thus the left-hand side expands to

while expands to

The following integrals need to be evaluated:

The results are

Putting Humpty Dumpty back together again yields

The factor of must be the same on both sides, so which reduces Humpty Dumpty to

Multiplying by and taking the limit (which is trivial since has dropped out), we arrive at the Schrödinger equation for a particle with one degree of freedom subject to a potential :

Trumpets please! The transition to three dimensions is straightforward:

The Schrödinger equation: implications and applications

[edit | edit source]In this chapter we take a look at some of the implications of the Schrödinger equation

How fuzzy positions get fuzzier

[edit | edit source]We will calculate the rate at which the fuzziness of a position probability distribution increases, in consequence of the fuzziness of the corresponding momentum, when there is no counterbalancing attraction (like that between the nucleus and the electron in atomic hydrogen).

Because it is easy to handle, we choose a Gaussian function

which has a bell-shaped graph. It defines a position probability distribution

If we normalize this distribution so that then and

We also have that

- the Fourier transform of is

- this defines the momentum probability distribution

- and

The fuzziness of the position and of the momentum of a particle associated with is therefore the minimum allowed by the "uncertainty" relation:

Now recall that

where This has the Fourier transform

and this defines the position probability distribution

Comparison with reveals that Therefore,

The graphs below illustrate how rapidly the fuzziness of a particle the mass of an electron grows, when compared to an object the mass of a molecule or a peanut. Here we see one reason, though by no means the only one, why for all intents and purposes "once sharp, always sharp" is true of the positions of macroscopic objects.

Above: an electron with nanometer. In a second, grows to nearly 60 km.

Below: an electron with centimeter. grows only 16% in a second.

Next, a molecule with nanometer. In a second, grows to 4.4 centimeters.

Finally, a peanut (2.8 g) with nanometer. takes the present age of the universe to grow to 7.5 micrometers.

Time-independent Schrödinger equation

[edit | edit source]If the potential V does not depend on time, then the Schrödinger equation has solutions that are products of a time-independent function and a time-dependent phase factor :

Because the probability density is independent of time, these solutions are called stationary.

Plug into

to find that satisfies the time-independent Schrödinger equation

Why energy is quantized

[edit | edit source]Limiting ourselves again to one spatial dimension, we write the time independent Schrödinger equation in this form:

Since this equation contains no complex numbers except possibly itself, it has real solutions, and these are the ones in which we are interested. You will notice that if then is positive and has the same sign as its second derivative. This means that the graph of curves upward above the axis and downward below it. Thus it cannot cross the axis. On the other hand, if then is negative and and its second derivative have opposite signs. In this case the graph of curves downward above the axis and upward below it. As a result, the graph of keeps crossing the axis — it is a wave. Moreover, the larger the difference the larger the curvature of the graph; and the larger the curvature, the smaller the wavelength. In particle terms, the higher the kinetic energy, the higher the momentum.

Let us now find the solutions that describe a particle "trapped" in a potential well — a bound state. Consider this potential:

Observe, to begin with, that at and where the slope of does not change since at these points. This tells us that the probability of finding the particle cannot suddenly drop to zero at these points. It will therefore be possible to find the particle to the left of or to the right of where classically it could not be. (A classical particle would oscillates back and forth between these points.)

Next, take into account that the probability distributions defined by must be normalizable. For the graph of this means that it must approach the axis asymptotically as

Suppose that we have a normalized solution for a particular value If we increase or decrease the value of the curvature of the graph of between and increases or decreases. A small increase or decrease won't give us another solution: won't vanish asymptotically for both positive and negative To obtain another solution, we must increase by just the right amount to increase or decrease by one the number of wave nodes between the "classical" turning points and and to make again vanish asymptotically in both directions.

The bottom line is that the energy of a bound particle — a particle "trapped" in a potential well — is quantized: only certain values yield solutions of the time-independent Schrödinger equation:

A quantum bouncing ball

[edit | edit source]As a specific example, consider the following potential:

is the gravitational acceleration at the floor. For the Schrödinger equation as given in the previous section tells us that unless The only sensible solution for negative is therefore The requirement that for ensures that our perfectly elastic, frictionless quantum bouncer won't be found below the floor.

Since a picture is worth more than a thousand words, we won't solve the time-independent Schrödinger equation for this particular potential but merely plot its first eight solutions:

Where would a classical bouncing ball subject to the same potential reverse its direction of motion? Observe the correlation between position and momentum (wavenumber).

All of these states are stationary; the probability of finding the quantum bouncer in any particular interval of the axis is independent of time. So how do we get it to move?

Recall that any linear combination of solutions of the Schrödinger equation is another solution. Consider this linear combination of two stationary states:

Assuming that the coefficients and the wave functions are real, we calculate the mean position of a particle associated with :

The first two integrals are the (time-independent) mean positions of a particle associated with and respectively. The last term equals

and this tells us that the particle's mean position oscillates with frequency and amplitude about the sum of the first two terms.

Visit this site to watch the time-dependence of the probability distribution associated with a quantum bouncer that is initially associated with a Gaussian distribution.

Atomic hydrogen

[edit | edit source]While de Broglie's theory of 1923 featured circular electron waves, Schrödinger's "wave mechanics" of 1926 features standing waves in three dimensions. Finding them means finding the solutions of the time-independent Schrödinger equation

with the potential energy of a classical electron at a distance from the proton. (Only when we come to the relativistic theory will we be able to shed the last vestige of classical thinking.)

In using this equation, we ignore (i) the influence of the electron on the proton, whose mass is some 1836 times larger than that of he electron, and (ii) the electron's spin. Since relativistic and spin effects on the measurable properties of atomic hydrogen are rather small, this non-relativistic approximation nevertheless gives excellent results.

For bound states the total energy is negative, and the Schrödinger equation has a discrete set of solutions. As it turns out, the "allowed" values of are precisely the values that Bohr obtained in 1913:

However, for each there are now linearly independent solutions. (If are independent solutions, then none of them can be written as a linear combination of the others.)

Solutions with different correspond to different energies. What physical differences correspond to linearly independent solutions with the same ?

Using polar coordinates, one finds that all solutions for a particular value are linear combinations of solutions that have the form

turns out to be another quantized variable, for implies that with In addition, has an upper bound, as we shall see in a moment.

Just as the factorization of into made it possible to obtain a -independent Schrödinger equation, so the factorization of into makes it possible to obtain a -independent Schrödinger equation. This contains another real parameter over and above whose "allowed" values are given by with an integer satisfying The range of possible values for is bounded by the inequality The possible values of the principal quantum number the angular momentum quantum number and the so-called magnetic quantum number thus are:

Each possible set of quantum numbers defines a unique wave function and together these make up a complete set of bound-state solutions () of the Schrödinger equation with The following images give an idea of the position probability distributions of the first three states (not to scale). Below them are the probability densities plotted against Observe that these states have nodes, all of which are spherical, that is, surfaces of constant (The nodes of a wave in three dimensions are two-dimensional surfaces. The nodes of a "probability wave" are the surfaces at which the sign of changes and, consequently, the probability density vanishes.)

Take another look at these images:

The letters s,p,d,f stand for l=0,1,2,3, respectively. (Before the quantum-mechanical origin of atomic spectral lines was understood, a distinction was made between "sharp," "principal," "diffuse," and "fundamental" lines. These terms were subsequently found to correspond to the first four values that can take. From onward the labels follows the alphabet: f,g,h...) Observe that these states display both spherical and conical nodes, the latter being surfaces of constant (The "conical" node with is a horizontal plane.) These states, too, have a total of nodes, of which are conical.

Because the "waviness" in is contained in a phase factor it does not show up in representations of To make it visible, the phase can be encoded as color:

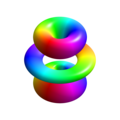

In chemistry it is customary to consider real superpositions of opposite like as in the following images, which are also valid solutions.

-

or

-

or

-

or

-

-

or

-

-

-

The total number of nodes is again the total number of non-spherical nodes is again but now there are plane nodes containing the axis and conical nodes.

What is so special about the axis? Absolutely nothing, for the wave functions which are defined with respect to a different axis, make up another complete set of bound-state solutions. This means that every wave function can be written as a linear combination of the functions and vice versa.

Observables and operators

[edit | edit source]Remember the mean values

As noted already, if we define the operators

- ("multiply with ") and

then we can write

By the same token,

Which observable is associated with the differential operator ? If and are constant (as the partial derivative with respect to requires), then is constant, and

Given that and this works out at or

Since, classically, orbital angular momentum is given by so that it seems obvious that we should consider as the operator associated with the component of the atom's angular momentum.

Yet we need to be wary of basing quantum-mechanical definitions on classical ones. Here are the quantum-mechanical definitions:

Consider the wave function of a closed system with degrees of freedom. Suppose that the probability distribution (which is short for ) is invariant under translations in time: waiting for any amount of time makes no difference to it:

Then the time dependence of is confined to a phase factor

Further suppose that the time coordinate and the space coordinates are homogeneous — equal intervals are physically equivalent. Since is closed, the phase factor cannot then depend on and its phase can at most linearly depend on waiting for should have the same effect as twice waiting for In other words, multiplying the wave function by should have same effect as multiplying it twice by :

Thus

So the existence of a constant ("conserved") quantity or (in conventional units) is implied for a closed system, and this is what we mean by the energy of the system.

Now suppose that is invariant under translations in the direction of one of the spatial coordinates say :

Then the dependence of on is confined to a phase factor

And suppose again that the time coordinates and are homogeneous. Since is closed, the phase factor cannot then depend on or and its phase can at most linearly depend on : translating by should have the same effect as twice translating it by In other words, multiplying the wave function by should have same effect as multiplying it twice by :

Thus

So the existence of a constant ("conserved") quantity or (in conventional units) is implied for a closed system, and this is what we mean by the j-component of the system's momentum.

You get the picture. Moreover, the spatial coordinates might as well be the spherical coordinates If is invariant under rotations about the axis, and if the longitudinal coordinate is homogeneous, then

In this case we call the conserved quantity the component of the system's angular momentum.

Now suppose that is an observable, that is the corresponding operator, and that satisfies

We say that is an eigenfunction or eigenstate of the operator and that it has the eigenvalue Let's calculate the mean and the standard deviation of for We obviously have that

Hence

since For a system associated with is dispersion-free. Hence the probability of finding that the value of lies in an interval containing is 1. But we have that

So, indeed, is the operator associated with the component of the atom's angular momentum.

Observe that the eigenfunctions of any of these operators are associated with systems for which the corresponding observable is "sharp": the standard deviation measuring its fuzziness vanishes.

For obvious reasons we also have

If we define the commutator then saying that the operators and commute is the same as saying that their commutator vanishes. Later we will prove that two observables are compatible (can be simultaneously measured) if and only if their operators commute.

Exercise: Show that

One similarly finds that and The upshot: different components of a system's angular momentum are incompatible.

Exercise: Using the above commutators, show that the operator commutes with and

Beyond hydrogen: the Periodic Table

[edit | edit source]If we again assume that the nucleus is fixed at the center and ignore relativistic and spin effects, then the stationary states of helium are the solutions of the following equation:

The wave function now depends on six coordinates, and the potential energy is made up of three terms. and are associated with the respective distances of the electrons from the nucleus, and is associated with the distance between the electrons. Think of as the value the potential energy associated with the two electrons would have if they were at and respectively.

Why are there no separate wave functions for the two electrons? The joint probability of finding the first electron in a region and the second in a region (relative to the nucleus) is given by

If the probability of finding the first electron in were independent of the whereabouts of the second electron, then we could assign to it a wave function and if the probability of finding the second electron in were independent of the whereabouts of the first electron, we could assign to it a wave function In this case would be given by the product of the two wave functions, and would be the product of and But in general, and especially inside a helium atom, the positional probability distribution for the first electron is conditional on the whereabouts of the second electron, and vice versa, given that the two electrons repel each other (to use the language of classical physics).

For the lowest energy levels, the above equation has been solved by numerical methods. With three or more electrons it is hopeless to look for exact solutions of the corresponding Schrödinger equation. Nevertheless, the Periodic Table and many properties of the chemical elements can be understood by using the following approximate theory.

First,we disregard the details of the interactions between the electrons. Next, since the chemical properties of atoms depend on their outermost electrons, we consider each of these atoms subject to a potential that is due to (i) the nucleus and (ii) a continuous, spherically symmetric, charge distribution doing duty for the other electrons. We again neglect spin effects except that we take account of the Pauli exclusion principle, according to which the probability of finding two electrons (more generally, two fermions) having exactly the same properties is 0. Thus two electrons can be associated with exactly the same wave function provided that their spin states differ in the following way: whenever the spins of the two electrons are measured with respect to a given axis, the outcomes are perfectly anticorrelated; one will be "up" and the other will be "down". Since there are only two possible outcomes, a third electron cannot be associated with the same wave function.

This approximate theory yields stationary wave functions called orbitals for individual electrons. These are quite similar to the stationary wave functions one obtains for the single electron of hydrogen, except that their dependence on the radial coordinate is modified by the negative charge distribution representing the remaining electrons. As a consequence of this modification, the energies associated with orbitals with the same quantum number but different quantum numbers are no longer equal. For any given obitals with higher yield a larger mean distance between the electron and the nucleus, and the larger this distance, the more the negative charge of the remaining electrons screens the positive charge of the nucleus. As a result, an electron with higher is less strongly bound (given the same ), so its ionization energy is lower.

Chemists group orbitals into shells according to their principal quantum number. As we have seen, the -th shell can "accommodate" up to electrons. Helium has the first shell completely "filled" and the second shell "empty." Because the helium nucleus has twice the charge of the hydrogen nucleus, the two electrons are, on average, much nearer the nucleus than the single electron of hydrogen. The ionization energy of helium is therefore much larger, 2372.3 J/mol as compared to 1312.0 J/mol for hydrogen. On the other hand, if you tried to add an electron to create a negative helium ion, it would have to go into the second shell, which is almost completely screened from the nucleus by the electrons in the first shell. Helium is therefore neither prone to give up an electron not able to hold an extra electron. It is chemically inert, as are all elements in the rightmost column of the Periodic Table.

In the second row of the Periodic Table the second shell gets filled. Since the energies of the 2p orbitals are higher than that of the 2s orbital, the latter gets "filled" first. With each added electron (and proton!) the entire electron distribution gets pulled in, and the ionization energy goes up, from 520.2 J/mol for lithium (atomic number Z=3) to 2080.8 J/mol for neon (Z=10). While lithium readily parts with an electron, fluorine (Z=9) with a single empty "slot" in the second shell is prone to grab one. Both are therefore quite active chemically. The progression from sodium (Z=11) to argon (Z=18) parallels that from lithium to neon.

There is a noteworthy peculiarity in the corresponding sequences of ionization energies: The ionization energy of oxygen (Z=8, 1313.9 J/mol) is lower than that of nitrogen (Z=7, 1402.3 J/mol), and that of sulfur (Z=16, 999.6 J/mol) is lower than that of phosphorus (Z=15, 1011.8 J/mol). To understand why this is so, we must take account of certain details of the inter-electronic forces that we have so far ignored.

Suppose that one of the two 2p electrons of carbon (Z=6) goes into the orbital with respect to the axis. Where will the other 2p electron go? It will go into any vacant orbital that minimizes the repulsion between the two electrons, by maximizing their mean distance. This is neither of the orbitals with with respect to the axis but an orbital with with respect to some axis perpendicular to the axis. If we call this the axis, then the third 2p electron of nitrogen goes into the orbital with relative to axis. The fourth 2p electron of oxygen then has no choice but to go — with opposite spin — into an already occupied 2p orbital. This raises its energy significantly and accounts for the drop in ionization from nitrogen to oxygen.

By the time the 3p orbitals are "filled," the energies of the 3d states are pushed up so high (as a result of screening) that the 4s state is energetically lower. The "filling up" of the 3d orbitals therefore begins only after the 4s orbitals are "occupied," with scandium (Z=21).

Thus even this simplified and approximate version of the quantum theory of atoms has the power to predict the qualitative and many of the quantitative features of the Period Table.

Probability flux

[edit | edit source]The time rate of change of the probability density (at a fixed location ) is given by

With the help of the Schrödinger equation and its complex conjugate,

one obtains

The terms containing cancel out, so we are left with

Next, we calculate the divergence of :

The upshot:

Integrated over a spatial region with unchanging boundary

According to Gauss's law, the outward flux of through equals the integral of the divergence of over

We thus have that

If is the continuous density of some kind of stuff (stuff per unit volume) and is its flux (stuff per unit area per unit time), then on the left-hand side we have the rate at which the stuff inside increases, and on the right-hand side we have the rate at which stuff enters through the surface of So if some stuff moves from place A to place B, it crosses the boundary of any region that contains either A or B. This is why the framed equation is known as a continuity equation.

In the quantum world, however, there is no such thing as continuously distributed and/or continuously moving stuff. and respectively, are a density (something per unit volume) and a flux (something per unit area per unit time) only in a formal sense. If is the wave function associated with a particle, then the integral gives the probability of finding the particle in if the appropriate measurement is made, and the framed equation tells us this: if the probability of finding the particle inside as a function of the time at which the measurement is made, increases, then the probability of finding the particle outside as a function of the same time, decreases by the same amount. (Much the same holds if is associated with a system having degrees of freedom and is a region of the system's configuration space.) This is sometimes expressed by saying that "probability is (locally) conserved." When you hear this, then remember that the probability for something to happen in a given place at a given time isn't anything that is situated at that place or that exists at that time.

Entanglement (a preview)

[edit | edit source]Bell's theorem: the simplest version

[edit | edit source]Quantum mechanics permits us to create the following scenario.

- Pairs of particles are launched in opposite directions.

- Each particle is subjected to one of three possible measurements (1, 2, or 3).

- Each time the two measurements are chosen at random.

- Each measurement has two possible results, indicated by a red or green light.

Here is what we find:

- If both particles are subjected to the same measurement, identical results are never obtained.

- The two sequences of recorded outcomes are completely random. In particular, half of the time both lights are the same color.

If this doesn't bother you, then please explain how it is that the colors differ whenever identical measurements are performed!

The obvious explanation would be that each particle arrives with an "instruction set" — some property that pre-determines the outcome of every possible measurement. Let's see what this entails.

Each particle arrives with one of the following 23 = 8 instruction sets:

- RRR, RRG, RGR, GRR, RGG, GRG, GGR, or GGG.

(If a particle arrives with, say, RGG, then the apparatus flashes red if it is set to 1 and green if it is set to 2 or 3.) In order to explain why the outcomes differ whenever both particles are subjected to the same measurement, we have to assume that particles launched together arrive with opposite instruction sets. If one carries the instruction (or arrives with the property denoted by) RRG, then the other carries the instruction GGR.