Software Engineering with an Agile Development Framework/Iteration One/Interaction Design

Introduction

Computers define and control many aspects of our lives. From banking systems to air traffic control, and from food production to medical care, we are continuously exposed to technological systems that can track our behaviour, invade our privacy and put our safety at risk. How, then, can we ensure that ethical concerns are taken into account? A litigious society ensures (most of the time) that human lives are not put at risk by faulty products, but can we also be sure that software meets the needs of the disabled community, or that its ecological impact is minimised?

Sociotechnical computing

Developers naturally focus on getting the technical components of a system correct. Is the system effective, is it robust, is there sufficient bandwidth to meet the client’s requirements? However, the software may be used in ways never intended by the developers, and assumptions made during the development process can have a critical impact on outcomes. For example, in industrial settings, users sometimes disable electronic safety systems in order to increase output, possibly at the cost of fingers or limbs.

Computing systems are tools that are increasingly embedded and invisible to the end user. Computer errors, whether intentional or accidental, can affect educational outcomes and financial records, alter personal data or determine elections. This potentially creates a power imbalance, as computer ‘experts’ have the ability to manipulate systems to their own advantage.

In addition, software systems do not function in isolation. They are designed and used by humans, who bring their own values and biases to the process. It is important to take these factors into consideration when designing software, by using thoughtful value choices and being aware of the choices we are making.

Example

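The Therac-25 was a computer-controlled medical linear accelerator used for treating cancer. A number of these machines were in use in the United States and Canada during the 1980s. Between 1985 and 1987, software faults contributed to at least six accidents in which patients received massive radiation overdoses, some of them fatal. See http://en.wikipedia.org/wiki/Therac-25 for details.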

Code of ethics

For software engineers, ethical practice has two dimensions:

1. Technical Ethics

Doing a technically competent job at all phases of the software development process

2. Professional Ethics

Using a set of moral values to guide the technical decisions

Software engineering has grown from its computer engineering roots into a recognised professional discipline. The development of a code of ethics was an important step in this process, and the Software Engineering Code of Ethics was adopted by the IEEE Computer Society and the ACM in 1999.

The code, developed through extensive consultation within the software engineering industry, “documents the ethical and professional obligations of software engineers” (Gotterbarn, Miller, Rogerson, 1999) and provides normative guidelines rather than taking a regulatory approach.

In brief, the code suggests that software engineers should:

- consider who is affected by their work;

- examine if they and their colleagues are treating other human beings with due respect;

- consider how the public, if reasonably well informed, would view their decisions;

- analyze how the least empowered will be affected by their decisions;

- consider whether their acts would be judged worthy of the ideal professional working as a software engineer.

Stakeholders

A critical aspect of any ethical analysis is to identify the stakeholders who may be affected by the use of the system under development.

What is a stakeholder? Traditional definitions from the corporate world usually assume a financial stakeholding in a project. This narrow focus would usually only include the client who has commissioned the software system.

Williamson (2003) defines four levels of stakeholder:

1. Direct - People or groups who directly interact with the project (e.g., end users, operators).

2. Indirect - People or groups who do not directly interact with the project, but exercise strong influence over direct users (e.g., employers, supervisors).

3. Remote - People or groups who remain at a distance from the project, but could be affected or influenced by it (e.g., patients, clients).

4. Societal - Wider social influences. This might include government or regulatory agencies who have an interest in the organisation where the software is used.

This broader view helps ensure that we consider the ‘downstream’ effects of the system being developed. An ethical analysis needs to check for issues at every stage of development, with every stakeholder and from every perspective.

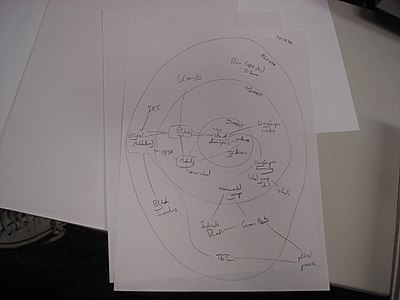

Williamson uses an "audience map" to help identify the stakeholders.
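The four levels can also be recorded as a simple data structure, so that a team can see at a glance which levels it has not yet considered. The following is only a minimal sketch: the class and function names and the hospital example are hypothetical illustrations, not part of Williamson's method.

```python
from dataclasses import dataclass
from enum import Enum


class StakeholderLevel(Enum):
    """The four stakeholder levels listed above."""
    DIRECT = 1     # interact directly with the project
    INDIRECT = 2   # strongly influence direct users
    REMOTE = 3     # at a distance, but could be affected
    SOCIETAL = 4   # wider social influences


@dataclass(frozen=True)
class Stakeholder:
    name: str
    level: StakeholderLevel


def group_by_level(stakeholders):
    """Map every level to the names recorded at that level."""
    groups = {level: [] for level in StakeholderLevel}
    for s in stakeholders:
        groups[s.level].append(s.name)
    return groups


def missing_levels(stakeholders):
    """Levels with no stakeholder yet: a prompt to look again."""
    groups = group_by_level(stakeholders)
    return [level for level, names in groups.items() if not names]


# Hypothetical stakeholders for a hospital system (assumed for illustration).
stakeholders = [
    Stakeholder("ward nurse", StakeholderLevel.DIRECT),
    Stakeholder("nursing supervisor", StakeholderLevel.INDIRECT),
    Stakeholder("patient", StakeholderLevel.REMOTE),
]
print([level.name for level in missing_levels(stakeholders)])
```

An empty level does not prove that no such stakeholder exists; it only signals that the team should look again before moving on.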

SoDIS

SoDIS (Software Development Impact Statement) is a system which uses a stakeholder impact analysis to identify, evaluate and mitigate risks in the software development process. Through this process the developer is encouraged to consider the people, groups or organisations involved and how they are related to the proposed project and its products or deliverables.

This tool is designed to identify risks in a software engineering project through a rigorous analysis of the impact on stakeholders of the development and use of the final product. It primarily addresses ethical issues, but in the process wider concerns are often identified. It can be used at any stage of the software development life cycle (SDLC) as a review exercise, but would generally be applied in the early planning stages.
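To make the idea of a stakeholder impact analysis concrete, the sketch below pairs project tasks with stakeholders, records concerns against those pairs, and lists the concerns that still lack a mitigation. It is a toy illustration only and does not reproduce the actual SoDIS process or tooling: the `Concern` record, the 1 to 3 severity scale, the function names and the sample data are all assumptions made for this example.

```python
from dataclasses import dataclass
from itertools import product


@dataclass
class Concern:
    task: str
    stakeholder: str
    description: str
    severity: int          # assumed scale: 1 (minor) to 3 (critical)
    mitigation: str = ""   # empty until a mitigation is agreed


def review_pairs(tasks, stakeholders):
    """Identify: every task/stakeholder pair the review should consider."""
    return list(product(tasks, stakeholders))


def unmitigated(concerns):
    """Evaluate: concerns with no mitigation yet, most severe first."""
    open_concerns = [c for c in concerns if not c.mitigation]
    return sorted(open_concerns, key=lambda c: c.severity, reverse=True)


# Hypothetical tasks, stakeholders and concerns (assumed for illustration).
tasks = ["record patient dose", "export audit log"]
stakeholders = ["operator", "patient"]
pairs = review_pairs(tasks, stakeholders)

concerns = [
    Concern("record patient dose", "patient", "entry error goes unnoticed", 3),
    Concern("export audit log", "patient", "personal data leaves the site", 2,
            mitigation="anonymise records before export"),
    Concern("record patient dose", "operator", "unclear error messages", 1),
]
for c in unmitigated(concerns):
    print(c.severity, c.task, "/", c.stakeholder, ":", c.description)
```

Enumerating every pair up front is a deliberate choice in this sketch: it forces each stakeholder to be considered against each task, rather than only the combinations that come to mind first.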

The SoDIS Project Auditor is a software package that uses SoDIS to facilitate the process of auditing projects for risks.

We are using SoDIS during the first iteration of the Design Concept. In this way we can identify potential design issues with the system before any significant design decisions are made. Later we will do an in-depth SoDIS analysis, which will highlight risks associated with the use of the system once it is in place.

Links and references

Don Gotterbarn http://www.cs.utexas.edu/users/ethics/professionalism/education.html

Code of Ethics http://www.acm.org/serving/se/code.htm

SoDIS

Williamson, A. Defining your Stakeholders. Wairua Consulting, Auckland. 2003.

Gotterbarn, D., Miller, K. W., Computer Ethics in the Undergraduate Curriculum: Case Studies and the Joint Software Engineer’s Code, Consortium for Computing Sciences in Colleges, 2004.


Group: Chad Roulston and Siaosi Napualani Vaka
