Inter-Species Comparison of the Visual System

In this chapter we briefly discuss the basic classes of photoreceptor cells. We then explore the visual ecology of animal visual systems: how they are adapted to different light spectra and intensities. This leads to the tradeoff between sensitivity and resolution, since both compete for the animal's limited resources. Unless noted otherwise, the information in this chapter stems from the book Visual Ecology. [1]

Basic classes of photoreceptors

Throughout the animal kingdom, there is a huge variety of photoreceptor cells. Despite their differences, they all work on the same principle: since the visual pigments (the molecules responsible for detecting photons) can only be located in the cell membrane, all photoreceptors stack up layers of membrane to capture enough photons.

There are two basic classes of photoreceptor cells. In vertebrates (mammals, fish, birds, reptiles, etc.) the visual receptors are derived from ciliary epithelial cells and are therefore called "ciliary receptors". They are further divided into rods and cones, and most vertebrates possess both. Ciliary receptors stack their membrane in the outer segment (see figure).

Arthropods (insects, crustaceans, etc.) and molluscs (scallops, octopus, etc.) have photoreceptors with numerous small, cylindrical microvilli that contain the visual pigments. These cylinders stick out from the cell body like bristles from a toothbrush. Such cells are called rhabdomeric photoreceptors, and the structure formed by their microvilli is the rhabdom. Very few animals, e.g. scallops, use both ciliary and rhabdomeric photoreceptors.

Sensitivity to different parts of the spectrum

In all animals, vision starts with the photochemical detection of incoming light. Photopigments are molecules that change when they intercept a photon. Photopigments that are used in visual systems are called visual pigments. A broad array of visual pigments exists, and they have different absorption spectra, i.e. they detect light of different colors. Animals with color vision need several visual pigments with different spectral sensitivities. However, most animals have a single visual pigment that is expressed at a far higher level than the others. A reasonable explanation for the spectral placement of this pigment is the sensitivity hypothesis. A possible formulation could be:

"Rod visual pigment of a given species are spectrally placed to maximize photon capture in the natural environment"—Cronin et al., Visual Ecology

Rods are responsible for dim-light vision, where little light is available and it is important to capture as much of it as possible. This hypothesis was developed during investigations of deep-sea animals, for which it holds very well. For all other habitats it is problematic.

Spectral adaptation in the deep-sea

Early research by Denton and Warren, as well as by Munz, in 1957 found that the visual pigments of deep-sea animals have their maximum absorbance in the blue part of the spectrum. A more recent study of deep-sea fish reports that the maximum absorbance is tightly clustered around 480 nm.[2] This matches the light spectrum of the deep sea, where the overlying water has filtered out all other wavelengths. Why deep-sea shrimp tend to have a maximum absorbance at a longer wavelength of around 500 nm is still not understood. [3]

Marine mammals show a much broader range of maximum absorption wavelengths than terrestrial mammals. While the rod pigments of terrestrial mammals absorb maximally at around 500 nm, the absorption of marine mammal rod pigments peaks between 480 and 505 nm. Deep-foraging animals have visual pigments shifted towards the blue (λmax ≤ 490 nm), while mammals dwelling close to the coast have λmax values close to those of land-dwelling animals.[4]

Spectra in various habitats

Each habitat has its own characteristic light spectrum. Light intensity and spectral composition vary with the angle of incidence of sunlight across the globe. Apart from the atmosphere, water and vegetation also act as natural filters. In aquatic habitats, red light is filtered out quickly, while blue light reaches greater depths. [5]
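The filtering effect of water can be sketched with a simple exponential attenuation model, in which the light remaining at depth z is exp(-K z). The model choice, the function name and the attenuation coefficients below are assumptions made for illustration (round numbers of a plausible order of magnitude for clear ocean water), not values taken from the cited study.

```python
import math

# Illustrative attenuation coefficients in 1/m. These are assumed round
# numbers for clear ocean water, not measurements from the cited study.
ATTENUATION_PER_M = {
    "blue (475 nm)": 0.02,
    "green (550 nm)": 0.07,
    "red (650 nm)": 0.35,
}


def remaining_fraction(attenuation_per_m, depth_m):
    """Fraction of surface light left at depth_m under exponential attenuation."""
    return math.exp(-attenuation_per_m * depth_m)


# Compare how quickly each color fades with depth.
for depth_m in (1, 10, 50):
    fractions = ", ".join(
        f"{color}: {remaining_fraction(k, depth_m):.3g}"
        for color, k in ATTENUATION_PER_M.items()
    )
    print(f"{depth_m:>3} m -> {fractions}")
```

With coefficients of this kind, the red component drops by many orders of magnitude within a few tens of meters while a substantial share of the blue light remains, which is the qualitative pattern described above.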

A similar effect can be observed in forest canopies, where the green vegetation filters the light. As light travels through the leaves, its non-green components are filtered out first, leaving green as the dominant color in the upper part of the canopy (23 to 11 m). Below that, there is dim light dominated by near-infrared radiation, which is invisible to the human eye. [6]

Problem of the sensitivity hypothesis

For habitats other than the deep sea, the sensitivity hypothesis falls short. Even in clear water close to the surface, the environment's spectrum would predict visual pigments with a maximum absorbance at longer wavelengths than those actually found. No vertebrate has rods with a maximum absorbance at a wavelength beyond 525 nm, even though the light intensity increases continually towards longer wavelengths. Most rod absorbances peak near 500 nm or less. Insects (even those that are active at night) do not have photoreceptors with an absorbance peak beyond 545 nm.

One possible explanation is that visual pigments tuned to longer wavelengths are unreliable because of their lower thermal activation threshold, which makes them more susceptible to thermal noise. The idea that thermal noise could constrain visual systems is supported by experiments with cold-blooded amphibians adapted to low light. Toads and frogs outperform humans at detecting low light intensities, but do increasingly worse as the temperature increases. This shows a monotonic relation between the minimal intensity perceivable by the animal and the estimated thermal isomerization rate of the retina (i.e. how often the visual pigments are triggered by thermal motion rather than by an incoming photon). [7]

Basic design principles for true eyes

An eye is considered a "true eye" if it is capable of spatial vision, i.e. it has to provide some form of spatial resolution and imaging. An eye spot, a simple patch of photoreceptors, is not considered a true eye, as it can only detect the presence of light but cannot locate its source, except perhaps as a coarse direction. Such simple eyes do have their uses: a burrowing animal could use one to detect when it breaks the surface, and other animals could use one to sense whether they have entered or left the shade, or to detect dangerous levels of UV irradiance.[8] In the following we will focus on true eyes. In all true eyes, the photoreceptors occupy unique positions in the retina, and each receives light from a restricted region of the surrounding world. This means that each photoreceptor works as a pixel. To restrict the region of space from which a photoreceptor receives light, all true eyes possess an aperture or pupil.

In an animal the size of the eye is governed by energetic needs - bigger eyes need more upkeep. In an eye of a given size there is inevitably a tradeoff between resolution and sensitivity. High-resolution vision requires small and dense pixels/photoreceptors which are susceptible to noise at low light intensities. A nocturnal animal might therefore evolve coarser pixels that gather more light at the cost of reduced resolution.

Types of Noise Limiting Sensitivity

Sensitivity is limited by several types of noise: extrinsic noise (shot noise) and intrinsic noise (transducer noise and dark noise).

Shot noise: Due to the particle nature of light, the number of photons reaching a photoreceptor can be described as a Poisson process with a signal-to-noise ratio (SNR) of

$$\mathrm{SNR} = \frac{N}{\sqrt{N}} = \sqrt{N}$$

with N being the average number of incident photons and √N being the standard deviation. While the human eye is able to perceive as few as 5 to 15 photons[9], it is impossible to see any features beyond the existence of the light source at such low photon numbers. The de Vries-Rose square-root law describes how the smallest detectable contrast decreases as the SNR increases. [10] This means that the minimal contrast that can be perceived is set by shot noise.
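As a small numerical illustration: for a Poisson process the standard deviation of the photon count is √N, so the SNR is N/√N = √N. The following Python sketch evaluates this relation and inverts it to estimate how many photons are needed for a target SNR. The function names and the photon counts are illustrative assumptions, not values from the cited sources.

```python
import math


def shot_noise_snr(mean_photons):
    """SNR of a Poisson photon count: mean / standard deviation = sqrt(N)."""
    if mean_photons <= 0:
        raise ValueError("mean_photons must be positive")
    return mean_photons / math.sqrt(mean_photons)


def photons_for_snr(target_snr):
    """Mean photon count needed to reach a given shot-noise SNR (N = SNR^2)."""
    return target_snr ** 2


# Illustrative photon counts spanning dim to bright conditions.
for n in (10, 100, 10_000):
    print(f"N = {n:>6}: SNR = {shot_noise_snr(n):.1f}")
```

Because the SNR grows only with the square root of N, a tenfold improvement in SNR requires a hundredfold increase in captured photons. This is why dim-light eyes benefit so strongly from larger pupils and larger photoreceptors.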

Transducer noise: Photoreceptors are unable to respond to each absorbed photon with an identical electrical signal.[11]

Dark or thermal noise: As already discussed above, another source of noise is the random thermal activation of the biochemical pathways transducing the photons. Baylor et al. identified two components of the dark noise: a small continuous fluctuation, and event-like spikes, indistinguishable from the arrival of photons, that are attributed to the thermal activation of a visual pigment.[12]

Formulas for Sensitivity

To calculate the optical sensitivity S of an eye, three factors have to be multiplied: the area of the pupil, the solid angle viewed by the photoreceptor and the fraction of light absorbed[13]

$$S = \left(\frac{\pi}{4}\right)^2 A^2 \left(\frac{d}{f}\right)^2 \frac{kl}{2.3 + kl}$$

Here A is the diameter of the aperture (pupil), f is the focal length of the eye and d, l and k are the diameter, length and absorption coefficient of the photoreceptors, respectively. In the above equation, the fraction of light absorbed has been calculated for broad-spectrum daylight. In the deep sea, the light is mostly monochromatic blue light of 480 nm, and therefore the fraction can be replaced by the absorptance.[13]

$$S = \left(\frac{\pi}{4}\right)^2 A^2 \left(\frac{d}{f}\right)^2 \left(1 - e^{-kl}\right)$$

The absorption coefficient k describes the fraction of light a photoreceptor absorbs per unit length. Warrant and Nilsson (1998) give values for k that range from 0.005 μm⁻¹ for a house fly to 0.064 μm⁻¹ for deep-sea fish, with human rods at 0.028 μm⁻¹. Interestingly, they also note that the values for vertebrates are five times higher than those of invertebrates living at the same light intensities. The sensitivity equations show that strategies for increasing sensitivity include large pupils (large A), a short focal length (small f) and larger photoreceptors (large d and l). Since a large pupil usually goes along with a long focal length, bigger eyes do not automatically provide better sensitivity than small eyes. The eyes of the nocturnal moth Ephestia are almost 300 times more sensitive than human eyes, despite being tiny.[13]
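The effect of each parameter can be explored with a short Python sketch. It assumes the commonly quoted Warrant and Nilsson form of the sensitivity equation, S = (π/4)² A² (d/f)² · kl/(2.3 + kl) for broad-spectrum light, with the absorbed fraction replaced by 1 − exp(−kl) for monochromatic light. The function name and the dimensions of the example eye are hypothetical and do not correspond to any species in this chapter.

```python
import math


def optical_sensitivity(A, f, d, l, k, monochromatic=False):
    """Optical sensitivity S in um^2 sr.

    A: pupil diameter (um), f: focal length (um),
    d, l: photoreceptor diameter and length (um),
    k: absorption coefficient (1/um).
    """
    pupil_area = (math.pi / 4) * A ** 2
    solid_angle = (math.pi / 4) * (d / f) ** 2
    kl = k * l
    # Fraction of light absorbed: absorptance for monochromatic light,
    # the white-light approximation kl / (2.3 + kl) otherwise.
    absorbed = 1 - math.exp(-kl) if monochromatic else kl / (2.3 + kl)
    return pupil_area * solid_angle * absorbed


# Hypothetical eye, chosen only to illustrate the scaling behaviour.
base = dict(A=100.0, f=100.0, d=2.0, l=100.0, k=0.01)
s_base = optical_sensitivity(**base)
s_wide_pupil = optical_sensitivity(**{**base, "A": 200.0})
s_scaled_eye = optical_sensitivity(**{**base, "A": 200.0, "f": 200.0})

print(f"base eye:          S = {s_base:.3f}")
print(f"pupil doubled:     S = {s_wide_pupil:.3f}")
print(f"whole eye doubled: S = {s_scaled_eye:.3f}")
```

In this sketch, doubling only the pupil diameter quadruples S, whereas doubling pupil and focal length together (a larger eye with unchanged photoreceptors) leaves S unchanged. This mirrors the point that a bigger eye is not automatically a more sensitive one.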

The table below gives an overview of the huge range of sensitivities found in nature.

| Species | Animal | Eye type | A (μm) | d (μm) | f (μm) | l (μm) | S (μm² sr) | Δρ (°) |
|---|---|---|---|---|---|---|---|---|
| Cirolana | Isopod | lower mesopelagic apposition | 150 | 90 | 100 | 90 | 5,092 c | 52 |
| Oplophorus | Shrimp | mesopelagic superposition | 600 | 200 b | 226 | 200 b | 3,300 c | 8.1 |
| Dinopis | Spider | nocturnal camera e | 1,325 | 55 | 771 | 55 | 101 | 1.5 |
| Deilephila | Hawkmoth | nocturnal superposition | 937 d | 414 b | 675 | 414 b | 69 | 0.9 |
| Onitis aygulus | Dung beetle | nocturnal superposition | 845 | 86 | 503 | 86 | 58.9 | 3.3 |
| Ephestia | Moth | nocturnal superposition | 340 | 110 b | 170 | 110 b | 38.4 | 2.7 |
| Macroglossum | Hawkmoth | diurnal superposition | 581 d | 362 b | 409 | 362 b | 37.9 | 1.1 |
| Octopus | Octopus | epipelagic camera | 8,000 | 200 | 10,000 | 200 | 4.2 c | 0.02 |
| Pecten | Scallop | littoral concave mirror | 450 | 15 | 270 | 15 | 4 | 1.6 |
| Megalopta | Sweat bee | nocturnal apposition | 36 | 350 | 97 | 350 | 2.7 | 4.7 |
| Bufo | Toad | nocturnal camera | 5,550 | 54 | 4,714 | 54 | 2.41 | 0.03 |
| Architeuthis | Squid | lower mesopelagic camera | 90,000 | 677 | 112,500 a | 677 | 2.3 c | 0.002 |
| Onitis belial | Dung beetle | diurnal superposition | 309 | 32 | 338 | 32 | 1.9 | 1.1 |
| Planaria | Flatworm | pigment cup | 30 | 6 | 25 | 6 | 1.5 | 22.9 |
| Homo | Human | diurnal camera | 8,000 | 30 | 16,700 | 30 | 0.93 | 0.01 |
| Littorina | Marine snail | littoral camera | 108 | 20 | 126 | 20 | 0.4 | 1.8 |
| Vanadis | Marine worm | littoral camera | 250 | 80 | 1,000 | 80 | 0.26 | 0.3 |
| Apis | Honeybee | diurnal apposition | 20 | 320 | 66 | 320 | 0.1 | 1.7 |
| Phidippus | Spider | diurnal camera f | 380 | 23 | 767 | 23 | 0.038 | 0.2 |

Notes: S has units of μm² sr. Δρ is calculated using the equation below and has units of degrees. See chapter 5 for a full description of eye types. a Focal length is calculated from Matthiessen's ratio: f = 1.25 A. b Rhabdom length quoted as double the actual length due to the presence of a tapetum. c S was calculated with k = 0.0067 μm⁻¹, using the equation for monochromatic light. All other values were calculated with the equation for broad-spectrum light. d Values taken from frontal eye. e Posterior medial (PM) eye. f Anterior lateral (AL) eye. Source: Visual Ecology, 2014.

Factors limiting Resolution

The size of a photoreceptor's receptive field can be approximated by:[1]

$$\Delta\rho = \frac{d}{f}$$

where d is the diameter of the photoreceptor and f is the focal length; Δρ is obtained in radians. This equation tells us that a bigger eye can achieve better resolution, as it has a longer focal length while the size of the photoreceptors stays the same. Essentially, resolution can be increased by cramming as many receptors as possible onto the retina. Birds of prey have much higher visual acuity than humans. The eye of an eagle, for example, is as big as a human's eye, which means it is much bigger relative to body size, but it has a far higher density of photoreceptors.
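The relation between receptor size, focal length and receptive field can be tried out with a minimal Python sketch. It assumes the small-angle approximation Δρ = d/f (in radians) and converts the result to degrees. The function name and the example dimensions are hypothetical.

```python
import math


def acceptance_angle_deg(d, f):
    """Receptive-field size of one photoreceptor, d / f, converted to degrees.

    d: photoreceptor diameter, f: focal length (same length unit for both).
    """
    return math.degrees(d / f)


# Hypothetical photoreceptor of 2 um diameter behind lenses of growing focal length.
for focal_length_um in (100.0, 200.0, 400.0):
    angle = acceptance_angle_deg(2.0, focal_length_um)
    print(f"f = {focal_length_um:5.0f} um -> receptive field = {angle:.3f} deg")
```

Each doubling of the focal length halves the receptive field of a photoreceptor of fixed size, which is the sense in which a larger eye can resolve finer detail.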

There are of course further restrictions. Much like camera lenses, the lenses of eyes are subject to a number of optical errors.

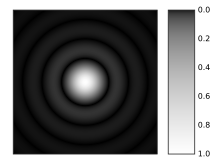

Diffraction blurs the image. A point-like light source (e.g. a star in the night sky) is projected onto a larger spot with faint rings around it. This pattern is called an Airy disk. It blurs vision when the light from the point source is spread over multiple photoreceptors.[14]
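The size of the diffraction blur can be estimated with the standard expression for a circular aperture, in which the first dark ring of the Airy pattern lies at an angle of about 1.22 λ/A from the center (λ is the wavelength, A the aperture diameter). This expression is not given in the text above and is added here as an assumption for illustration. The function name, the wavelength and the aperture sizes are hypothetical.

```python
import math


def airy_radius_deg(wavelength_um, aperture_um):
    """Angular radius of the first dark ring of the Airy pattern, in degrees.

    Uses the small-angle form theta = 1.22 * wavelength / aperture.
    """
    return math.degrees(1.22 * wavelength_um / aperture_um)


# Hypothetical apertures: a small 25 um lens and a large 8 mm pupil,
# both viewed in green light of 0.5 um wavelength.
for aperture_um in (25.0, 8000.0):
    radius = airy_radius_deg(0.5, aperture_um)
    print(f"aperture {aperture_um:6.0f} um -> Airy radius = {radius:.4f} deg")
```

Small apertures produce a much wider blur than large ones, so diffraction sets a floor on resolution that packing the photoreceptors more densely cannot overcome.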

Chromatic aberration: The refractive index n is a function of the wavelength. This means that a lens fails to focus all wavelengths on the same point. Some spiders have adapted to use this for depth perception. The jumping spider has a retina consisting of four layers, and light of different wavelengths is focused on different layers. Nagata et al. found green-sensitive photoreceptors in both the deepest and the second-deepest layer, even though green light is focused on the deepest layer. This means that the green image in the second-deepest layer is always out of focus. From the mismatch between the two layers, the jumping spider gains depth perception.[15]

Some fish have evolved multifocal lenses that compensate for chromatic aberration (Kröger et al. (1999), "Multifocal lenses compensate for chromatic defocus in vertebrate eyes", Journal of Comparative Physiology A).

Spherical aberration: Since real lenses are unable to focus incoming parallel light to a single point, an error called spherical aberration occurs. Adaptations that circumvent this problem include non-spherical lenses (even though these create other problems) and lenses with a graded refractive index.

Another problem, which will not be discussed here, is optical cross talk between photoreceptors.

References

1. Thomas W. Cronin, Sönke Johnsen, N. Justin Marshall, Eric J. Warrant (2014), Visual Ecology, Princeton University Press
2. Douglas et al. (2003), "Spectral Sensitivity Tuning in the Deep-Sea", Sensory Processing in Aquatic Environments
3. Marshall et al. (2003), "The design of color signals and color vision in fishes", Sensory Processing in Aquatic Environments
4. Fasick JI, Robinson PR (2000), "Spectral-tuning mechanisms of marine mammal rhodopsins and correlations with foraging depth"
5. Tuuli Kauer, Helgi Arst, Lea Tuvikene (2010), "Underwater light field and spectral distribution of attenuation depth in inland and coastal waters", Oceanologia
6. De Castro, Francisco (1999), "Light spectral composition in a tropical forest: measurements and model", Tree Physiology
7. Aho et al. (1988), "Low retinal noise in animals with low body temperature allows high visual sensitivity", Nature
8. Nilsson, DE (2013), "Eye evolution and its functional basis", Visual Neuroscience
9. Pirenne, M.H. (1948), Vision and the Eye, Chapman and Hall, Ltd.
10. Rose, A. (1948), "The Sensitivity Performance of the Human Eye on an Absolute Scale", Journal of the Optical Society of America
11. P. G. Lillywhite, S. B. Laughlin (1980), "Transducer noise in a photoreceptor", Nature
12. Baylor et al. (1979), "Two components of electrical dark noise in toad retinal rod outer segments", Journal of Physiology
13. Warrant EJ, Nilsson DE (1998), "Absorption of white light in photoreceptors", Vision Research
14. Jones et al. (2007), "Avian Vision: A Review of Form and Function with Special Consideration to Birds of Prey", Journal of Exotic Pet Medicine
15. Nagata et al. (2012), "Depth perception from image defocus in a jumping spider", Science