- Problem 1

For  check that  .

- Answer

One way to proceed is left to right.

- This exercise is recommended for all readers.

- Problem 3

Show that these matrices are not similar.

- Answer

Gauss' method shows that the first matrix represents maps of rank two while the second matrix represents maps of rank three.
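The rank comparison can be sketched in code. This is a minimal illustration, not the exercise's own working: the helper `rank` and the two matrices `A` and `B` are assumptions chosen for the sketch, and exact rational arithmetic is used so that no rounding affects the pivots.

```python
from fractions import Fraction

def rank(matrix):
    """Return the rank of a matrix (a list of rows) using Gauss's method."""
    rows = [[Fraction(x) for x in row] for row in matrix]
    n_cols = len(rows[0]) if rows else 0
    pivots = 0
    for col in range(n_cols):
        # Look for a nonzero entry in this column at or below the pivot row.
        pivot = next((r for r in range(pivots, len(rows)) if rows[r][col] != 0), None)
        if pivot is None:
            continue
        rows[pivots], rows[pivot] = rows[pivot], rows[pivots]
        # Clear the entries below the pivot.
        for r in range(pivots + 1, len(rows)):
            factor = rows[r][col] / rows[pivots][col]
            rows[r] = [a - factor * b for a, b in zip(rows[r], rows[pivots])]
        pivots += 1
    return pivots

# Illustrative matrices (assumed for this sketch).
A = [[1, 0, 4], [1, 1, 3], [2, 1, 7]]  # third row = first row + second row
B = [[1, 0, 1], [0, 1, 1], [3, 1, 2]]  # rows are linearly independent

print(rank(A), rank(B))  # prints: 2 3
```

Since similar matrices represent the same map, they must have the same rank, so two matrices with different ranks cannot be similar.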

- Problem 4

Consider the transformation  described by  ,  , and  .

- Find  where  .

- Find  where  .

- Find the matrix  such that  .

- Answer

- Because  is described with the members of  , finding the matrix representation is easy:

gives this.

- We will find  ,  , and  , to find how each is represented with respect to  . We are given that  , and the other two are easy to see:  and  . By eye, we get the representation of each vector

and thus the representation of the map.

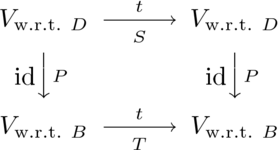

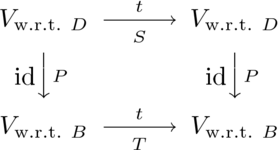

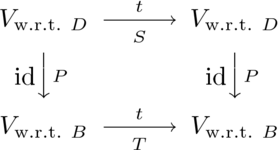

- The diagram, adapted for this  and  , shows that  .

- This exercise is recommended for all readers.

- Problem 5

Exhibit a nontrivial similarity relationship in this way: let  act by

and pick two bases, and represent  with respect to them  and  . Then compute the  and  to change bases from  to  and back again.

- Answer

One possible choice of the bases is

(this  is suggested by the map description). To find the matrix  , solve the relations

to get  ,  ,  and  .

Finding  involves a bit more computation. We first find  . The relation

gives  and  , and so

making

and hence  acts on the first basis vector  in this way.

The computation for  is similar. The relation

gives  and  , so

making

and hence  acts on the second basis vector  in this way.

Therefore

and these are the change of basis matrices.

The check of these computations is routine.

- Problem 6

Explain Example 1.3 in terms of maps.

- Answer

The only representation of a zero map is a zero matrix, no matter what the pair of bases, $\text{Rep}_{B,D}(z)=Z$, and so in particular for any single basis $B$ we have $\text{Rep}_{B,B}(z)=Z$. The case of the identity is related, but slightly different: the only representation of the identity map, with respect to any $B,B$, is the identity $\text{Rep}_{B,B}(\text{id})=I$. (Remark: of course, we have seen examples where $B\neq D$ and $\text{Rep}_{B,D}(\text{id})\neq I$; in fact, we have seen that any nonsingular matrix is a representation of the identity map with respect to some $B,D$.)

- This exercise is recommended for all readers.

- Problem 8

Prove that if two matrices are similar and one is invertible then so is the other.

- Answer

Matrix similarity is a special case of matrix equivalence (if matrices are similar then they are matrix equivalent) and matrix equivalence preserves nonsingularity.

(This also follows from the rule that similar matrices have equal determinants, since a matrix is invertible exactly when its determinant is nonzero.)

- This exercise is recommended for all readers.

- Problem 10

Consider a matrix representing, with respect to some $B,B$, reflection across the $x$-axis in $\mathbb{R}^2$. Consider also a matrix representing, with respect to some $D,D$, reflection across the $y$-axis. Must they be similar?

- Answer

Let $f_x$ and $f_y$ be the reflection maps across the $x$-axis and the $y$-axis (sometimes called "flip"s). For any bases $B$ and $D$, the matrices $\text{Rep}_{B,B}(f_x)$ and $\text{Rep}_{D,D}(f_y)$ are similar. First note that

$$F_x=\text{Rep}_{\mathcal{E}_2,\mathcal{E}_2}(f_x)=\begin{pmatrix}1&0\\0&-1\end{pmatrix} \qquad F_y=\text{Rep}_{\mathcal{E}_2,\mathcal{E}_2}(f_y)=\begin{pmatrix}-1&0\\0&1\end{pmatrix}$$

are similar because the second matrix is the representation of $f_x$ with respect to the basis $A=\langle\vec{e}_2,\vec{e}_1\rangle$:

$$F_x=PF_yP^{-1}$$

where $P=\text{Rep}_{A,\mathcal{E}_2}(\text{id})$. Now the conclusion follows from the transitivity part of Problem 9.

To finish without relying on that exercise, write $\text{Rep}_{B,B}(f_x)=QF_xQ^{-1}$ and $\text{Rep}_{D,D}(f_y)=RF_yR^{-1}$. Using the equation in the first paragraph, the first of these two becomes $\text{Rep}_{B,B}(f_x)=QPF_yP^{-1}Q^{-1}$, and rewriting the second of these two as $R^{-1}\cdot\text{Rep}_{D,D}(f_y)\cdot R=F_y$ and substituting gives the desired relationship

$$\text{Rep}_{B,B}(f_x)=(QPR^{-1})\cdot\text{Rep}_{D,D}(f_y)\cdot(QPR^{-1})^{-1}$$

Thus the matrices $\text{Rep}_{B,B}(f_x)$ and $\text{Rep}_{D,D}(f_y)$ are similar.
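The key step, that the two standard-basis reflection matrices are similar, can be checked numerically. The sketch below assumes the flips are across the $x$-axis and the $y$-axis of the plane; the names `flip_x`, `flip_y`, and `swap` are chosen here for illustration.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

flip_x = [[1, 0], [0, -1]]   # (x, y) -> (x, -y) in the standard basis
flip_y = [[-1, 0], [0, 1]]   # (x, y) -> (-x, y) in the standard basis
swap = [[0, 1], [1, 0]]      # exchanges the two basis vectors

# swap is its own inverse, so conjugating by it needs no separate inverse.
swap_is_involution = matmul(swap, swap) == [[1, 0], [0, 1]]
conjugated = matmul(matmul(swap, flip_y), swap)

print(swap_is_involution, conjugated == flip_x)  # prints: True True
```

Exchanging the order of the basis vectors is exactly the change of basis that turns one reflection matrix into the other.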

- Problem 11

Prove that similarity preserves determinants and rank.

Does the converse hold?

- Answer

We must show that if two matrices are similar then they have the same determinant and the same rank.

Rank is a property of matrices that we have already shown to be preserved by matrix equivalence. It is therefore preserved by similarity (which is a special case of matrix equivalence: if two matrices are similar then they are matrix equivalent). Matrix equivalence does not preserve the value of the determinant, only whether it is zero, so the determinant needs its own argument.

To prove the rank statement without quoting the results about matrix equivalence, note first that rank is a property of the map (it is the dimension of the rangespace) and since we've shown that the rank of a map is the rank of a representation, it must be the same for all representations.

As for determinants, $|PSP^{-1}|=|P|\cdot|S|\cdot|P^{-1}|=|P|\cdot|S|\cdot|P|^{-1}=|S|$.

The converse of the statement does not hold; for instance, there are matrices with the same determinant that are not similar. To check this, consider a nonzero matrix with a determinant of zero. It is not similar to the zero matrix, because the zero matrix is similar only to itself, yet the two have the same determinant. The argument for rank is much the same.
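Both halves of this answer can be illustrated with a small computation. The matrices `S`, `P`, `N`, and `Z` below are arbitrary choices made for this sketch, and the helpers are written for the $2\times 2$ case only.

```python
from fractions import Fraction

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def det2(m):
    """Determinant of a 2x2 matrix."""
    return m[0][0] * m[1][1] - m[0][1] * m[1][0]

def inv2(m):
    """Inverse of a 2x2 matrix, in exact rational arithmetic."""
    d = Fraction(det2(m))
    return [[m[1][1] / d, -m[0][1] / d], [-m[1][0] / d, m[0][0] / d]]

def conjugate(p, m):
    """Return p * m * p^{-1}."""
    return matmul(matmul(p, m), inv2(p))

S = [[1, 2], [3, 4]]
P = [[2, 1], [1, 1]]          # nonsingular: determinant 1
T = conjugate(P, S)           # similar to S by construction
print(det2(S), det2(T))       # prints: -2 -2

# The converse fails: N and Z have equal determinants but are not similar,
# since conjugating the zero matrix always gives back the zero matrix.
N = [[0, 1], [0, 0]]
Z = [[0, 0], [0, 0]]
print(det2(N) == det2(Z), conjugate(P, Z) == Z, N == Z)  # prints: True True False
```

The last line checks only one choice of `P`; the general fact is that $PZP^{-1}=Z$ for every nonsingular $P$.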

- Problem 13

Can two different diagonal matrices be in the same similarity class?

- Answer

Yes, these are similar

since, where the first matrix is  for  , the second matrix is  for  .

- This exercise is recommended for all readers.

- Problem 14

Prove that if two matrices are similar then their $k$-th powers are similar when $k>0$. What if $k\leq 0$?

- Answer

The $k$-th powers are similar because, where each matrix represents the map $t$, the $k$-th powers represent $t^k$, the composition of $k$-many $t$'s. (For instance, if $T=\text{Rep}_{B,B}(t)$ then $T^2=\text{Rep}_{B,B}(t\circ t)$.)

Restated more computationally, if $T=PSP^{-1}$ then $T^2=(PSP^{-1})(PSP^{-1})=PS^2P^{-1}$. Induction extends that to all powers.

For the $k\leq 0$ case, suppose that $S$ is invertible and that $T=PSP^{-1}$ (for $k=0$ both powers are the identity matrix, so there is nothing to show). Note that $T$ is invertible: $T^{-1}=(PSP^{-1})^{-1}=PS^{-1}P^{-1}$, and that same equation shows that $T^{-1}$ is similar to $S^{-1}$. Other negative powers are now given by the first paragraph.
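The computational restatement can be spot-checked for a few powers. This is a sketch under assumed data: `S` and `P` are arbitrary invertible $2\times 2$ matrices picked for the example, and the helper names are not from the text.

```python
from fractions import Fraction

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def inv2(m):
    """Inverse of a 2x2 matrix, in exact rational arithmetic."""
    d = Fraction(m[0][0] * m[1][1] - m[0][1] * m[1][0])
    return [[m[1][1] / d, -m[0][1] / d], [-m[1][0] / d, m[0][0] / d]]

def matpow(m, k):
    """Return the k-th power of a 2x2 matrix for k >= 0."""
    result = [[1, 0], [0, 1]]
    for _ in range(k):
        result = matmul(result, m)
    return result

def conjugate(p, m):
    """Return p * m * p^{-1}."""
    return matmul(matmul(p, m), inv2(p))

S = [[1, 1], [0, 2]]
P = [[2, 1], [1, 1]]
T = conjugate(P, S)

# T^k should equal P S^k P^{-1} for each positive k tried here.
powers_match = all(matpow(T, k) == conjugate(P, matpow(S, k)) for k in range(1, 6))
# The inverse case: T^{-1} should equal P S^{-1} P^{-1}.
inverse_matches = inv2(T) == conjugate(P, inv2(S))
print(powers_match, inverse_matches)  # prints: True True
```

A finite check like this is no substitute for the induction, but it shows the same matrix $P$ serving for every power.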

- This exercise is recommended for all readers.

- Problem 16

List all of the matrix equivalence classes of $1\times 1$ matrices. Also list the similarity classes, and describe which similarity classes are contained inside of each matrix equivalence class.

- Answer

There are two equivalence classes, (i) the class of rank zero matrices, of which there is one, $(0)$, and (ii) the class of rank one matrices, of which there are infinitely many, $(k)$ for $k\neq 0$.

Each $1\times 1$ matrix is alone in its similarity class. That's because any transformation of a one-dimensional space is multiplication by a scalar, $t_k\colon V\to V$ given by $\vec{v}\mapsto k\cdot\vec{v}$. Thus, for any basis $B=\langle\vec{\beta}\rangle$, the matrix representing a transformation $t_k$ with respect to $B,B$ is $(\text{Rep}_B(t_k(\vec{\beta})))=(k)$.

So, contained in the matrix equivalence class of rank zero matrices is (obviously) the single similarity class consisting of the matrix $(0)$. And, contained in the matrix equivalence class of rank one matrices are the infinitely many, one-member-each, similarity classes consisting of $(k)$ for $k\neq 0$.

- Problem 17

Does similarity preserve sums?

- Answer

No.

Here is an example that has two pairs, each of two similar matrices:

and

(this example is mostly arbitrary, but not entirely, because the center matrices on the two left sides add to the zero matrix). Note that the sums of these similar matrices are not similar since the zero matrix is similar only to itself.
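A concrete instance of this kind of counterexample can be built as follows. The matrices here are assumptions chosen for the sketch, not necessarily the ones displayed in the answer; they follow the same idea of arranging one side to sum to the zero matrix.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def matadd(a, b):
    """Add two matrices of the same size entry by entry."""
    return [[x + y for x, y in zip(row_a, row_b)] for row_a, row_b in zip(a, b)]

swap = [[0, 1], [1, 0]]   # nonsingular and its own inverse

S1 = [[1, 0], [0, -1]]
T1 = [[1, 0], [0, -1]]                 # similar to S1 via the identity matrix
S2 = [[1, 0], [0, -1]]
T2 = matmul(matmul(swap, S2), swap)    # similar to S2 via swap

sum_S = matadd(S1, S2)
sum_T = matadd(T1, T2)
print(T2, sum_S, sum_T)
# prints: [[-1, 0], [0, 1]] [[2, 0], [0, -2]] [[0, 0], [0, 0]]
```

Here `sum_T` is the zero matrix while `sum_S` is not, so the sums cannot be similar even though each pair is.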
