- This exercise is recommended for all readers.

- Problem 1

Verify, using Example 1.4 as a model, that the two correspondences given before the definition are isomorphisms.

- Example 1.1

- Example 1.2

- Answer

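- Call the map $f$.

$$\begin{pmatrix} a & b \end{pmatrix} \stackrel{f}{\longmapsto} \begin{pmatrix} a \\ b \end{pmatrix}$$

It is one-to-one because if $f$ sends two members of the domain to the same image, that is, if $f(\begin{pmatrix} a & b \end{pmatrix}) = f(\begin{pmatrix} c & d \end{pmatrix})$, then the definition of $f$ gives that

$$\begin{pmatrix} a \\ b \end{pmatrix} = \begin{pmatrix} c \\ d \end{pmatrix}$$

and since column vectors are equal only if they have equal components, we have that $a=c$ and that $b=d$. Thus, if $f$ maps two row vectors from the domain to the same column vector then the two row vectors are equal: $\begin{pmatrix} a & b \end{pmatrix} = \begin{pmatrix} c & d \end{pmatrix}$.

To show that $f$ is onto we must show that any member of the codomain $\mathbb{R}^2$ is the image under $f$ of some row vector. That's easy;

$$\begin{pmatrix} a \\ b \end{pmatrix}$$

is $f(\begin{pmatrix} a & b \end{pmatrix})$.

The computation for preservation of addition is this.

$$f(\begin{pmatrix} a & b \end{pmatrix} + \begin{pmatrix} c & d \end{pmatrix}) = f(\begin{pmatrix} a+c & b+d \end{pmatrix}) = \begin{pmatrix} a+c \\ b+d \end{pmatrix} = \begin{pmatrix} a \\ b \end{pmatrix} + \begin{pmatrix} c \\ d \end{pmatrix} = f(\begin{pmatrix} a & b \end{pmatrix}) + f(\begin{pmatrix} c & d \end{pmatrix})$$

The computation for preservation of scalar multiplication is similar.

$$f(r\cdot\begin{pmatrix} a & b \end{pmatrix}) = f(\begin{pmatrix} ra & rb \end{pmatrix}) = \begin{pmatrix} ra \\ rb \end{pmatrix} = r\cdot\begin{pmatrix} a \\ b \end{pmatrix} = r\cdot f(\begin{pmatrix} a & b \end{pmatrix})$$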

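- Denote the map from Example 1.2 by $f$. To show that it is one-to-one, assume that $f(a_0+a_1x+a_2x^2) = f(b_0+b_1x+b_2x^2)$. Then by the definition of the function,

$$\begin{pmatrix} a_0 \\ a_1 \\ a_2 \end{pmatrix} = \begin{pmatrix} b_0 \\ b_1 \\ b_2 \end{pmatrix}$$

and so $a_0=b_0$ and $a_1=b_1$ and $a_2=b_2$. Thus $a_0+a_1x+a_2x^2 = b_0+b_1x+b_2x^2$, and consequently $f$ is one-to-one.

The function $f$ is onto because there is a polynomial sent to

$$\begin{pmatrix} a \\ b \\ c \end{pmatrix}$$

by $f$, namely, $a+bx+cx^2$.

As for structure, this shows that $f$ preserves addition

$$f\big((a_0+a_1x+a_2x^2)+(b_0+b_1x+b_2x^2)\big) = f\big((a_0+b_0)+(a_1+b_1)x+(a_2+b_2)x^2\big) = \begin{pmatrix} a_0+b_0 \\ a_1+b_1 \\ a_2+b_2 \end{pmatrix} = \begin{pmatrix} a_0 \\ a_1 \\ a_2 \end{pmatrix} + \begin{pmatrix} b_0 \\ b_1 \\ b_2 \end{pmatrix} = f(a_0+a_1x+a_2x^2) + f(b_0+b_1x+b_2x^2)$$

and this shows

$$f\big(r(a_0+a_1x+a_2x^2)\big) = f(ra_0+ra_1x+ra_2x^2) = \begin{pmatrix} ra_0 \\ ra_1 \\ ra_2 \end{pmatrix} = r\cdot\begin{pmatrix} a_0 \\ a_1 \\ a_2 \end{pmatrix} = r\cdot f(a_0+a_1x+a_2x^2)$$

that it preserves scalar multiplication.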

- This exercise is recommended for all readers.

- Problem 3

Show that the natural map $f_1$ from Example 1.5 is an isomorphism.

- Answer

To verify it is one-to-one, assume that $f_1(c_1x+c_2y+c_3z) = f_1(d_1x+d_2y+d_3z)$. Then $c_1+c_2x+c_3x^2 = d_1+d_2x+d_3x^2$ by the definition of $f_1$. Members of $\mathcal{P}_2$ are equal only when they have the same coefficients, so this implies that $c_1=d_1$ and $c_2=d_2$ and $c_3=d_3$. Therefore $f_1(c_1x+c_2y+c_3z) = f_1(d_1x+d_2y+d_3z)$ implies that $c_1x+c_2y+c_3z = d_1x+d_2y+d_3z$, and so $f_1$ is one-to-one.

To verify that it is onto, consider an arbitrary member of the codomain $a_1+a_2x+a_3x^2$ and observe that it is indeed the image of a member of the domain, namely, it is $f_1(a_1x+a_2y+a_3z)$. (For instance, $0+3x+6x^2 = f_1(0x+3y+6z)$.)

The computation checking that $f_1$ preserves addition is this.

$$\begin{aligned} f_1\big((c_1x+c_2y+c_3z)+(d_1x+d_2y+d_3z)\big) &= f_1\big((c_1+d_1)x+(c_2+d_2)y+(c_3+d_3)z\big) \\ &= (c_1+d_1)+(c_2+d_2)x+(c_3+d_3)x^2 \\ &= (c_1+c_2x+c_3x^2)+(d_1+d_2x+d_3x^2) \\ &= f_1(c_1x+c_2y+c_3z)+f_1(d_1x+d_2y+d_3z) \end{aligned}$$

The check that $f_1$ preserves scalar multiplication is this.

$$f_1\big(r(c_1x+c_2y+c_3z)\big) = f_1(rc_1x+rc_2y+rc_3z) = rc_1+rc_2x+rc_3x^2 = r(c_1+c_2x+c_3x^2) = r\cdot f_1(c_1x+c_2y+c_3z)$$

- This exercise is recommended for all readers.

- Problem 4

Decide whether each map is an isomorphism (if it is an isomorphism then prove it and if it isn't then state a condition that it fails to satisfy).

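- $f:\mathcal{M}_{2\times 2}\to\mathbb{R}$ given by

$$\begin{pmatrix} a & b \\ c & d \end{pmatrix} \mapsto ad-bc$$

- $f:\mathcal{M}_{2\times 2}\to\mathbb{R}^4$ given by

$$\begin{pmatrix} a & b \\ c & d \end{pmatrix} \mapsto \begin{pmatrix} a+b+c+d \\ a+b+c \\ a+b \\ a \end{pmatrix}$$

- $f:\mathcal{M}_{2\times 2}\to\mathcal{P}_3$ given by

$$\begin{pmatrix} a & b \\ c & d \end{pmatrix} \mapsto c+(d+c)x+(b+a)x^2+ax^3$$

- $f:\mathcal{M}_{2\times 2}\to\mathcal{P}_3$ given by

$$\begin{pmatrix} a & b \\ c & d \end{pmatrix} \mapsto c+(d+c)x+(b+a+1)x^2+ax^3$$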

- Answer

- No; this map is not one-to-one. In particular, the matrix of all zeroes is mapped to the same image as the matrix of all ones.

- Yes, this is an isomorphism.

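It is one-to-one:

$$\begin{pmatrix} a_1+b_1+c_1+d_1 \\ a_1+b_1+c_1 \\ a_1+b_1 \\ a_1 \end{pmatrix} = \begin{pmatrix} a_2+b_2+c_2+d_2 \\ a_2+b_2+c_2 \\ a_2+b_2 \\ a_2 \end{pmatrix}$$

gives that $a_1=a_2$, and that $b_1=b_2$, and that $c_1=c_2$, and that $d_1=d_2$.

It is onto, since this shows

$$\begin{pmatrix} x \\ y \\ z \\ w \end{pmatrix} = f\left(\begin{pmatrix} w & z-w \\ y-z & x-y \end{pmatrix}\right)$$

that any four-tall vector is the image of a $2\times 2$ matrix.

Finally, it preserves combinations

$$\begin{aligned} f\left(r_1\begin{pmatrix} a_1 & b_1 \\ c_1 & d_1 \end{pmatrix} + r_2\begin{pmatrix} a_2 & b_2 \\ c_2 & d_2 \end{pmatrix}\right) &= f\left(\begin{pmatrix} r_1a_1+r_2a_2 & r_1b_1+r_2b_2 \\ r_1c_1+r_2c_2 & r_1d_1+r_2d_2 \end{pmatrix}\right) \\ &= \begin{pmatrix} r_1a_1+\cdots+r_2d_2 \\ r_1a_1+\cdots+r_2c_2 \\ r_1a_1+\cdots+r_2b_2 \\ r_1a_1+r_2a_2 \end{pmatrix} \\ &= r_1\begin{pmatrix} a_1+b_1+c_1+d_1 \\ a_1+b_1+c_1 \\ a_1+b_1 \\ a_1 \end{pmatrix} + r_2\begin{pmatrix} a_2+b_2+c_2+d_2 \\ a_2+b_2+c_2 \\ a_2+b_2 \\ a_2 \end{pmatrix} \\ &= r_1 f\left(\begin{pmatrix} a_1 & b_1 \\ c_1 & d_1 \end{pmatrix}\right) + r_2 f\left(\begin{pmatrix} a_2 & b_2 \\ c_2 & d_2 \end{pmatrix}\right) \end{aligned}$$

and so item 2 of Lemma 1.9 shows that it preserves structure.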

- Yes, it is an isomorphism.

To show that it is one-to-one, we suppose that two members of the domain have the same image under $f$.

$$f\left(\begin{pmatrix} a_1 & b_1 \\ c_1 & d_1 \end{pmatrix}\right) = f\left(\begin{pmatrix} a_2 & b_2 \\ c_2 & d_2 \end{pmatrix}\right)$$

This gives, by the definition of $f$, that $c_1+(d_1+c_1)x+(b_1+a_1)x^2+a_1x^3 = c_2+(d_2+c_2)x+(b_2+a_2)x^2+a_2x^3$ and then the fact that polynomials are equal only when their coefficients are equal gives a set of linear equations

$$\begin{aligned} c_1 &= c_2 \\ d_1+c_1 &= d_2+c_2 \\ b_1+a_1 &= b_2+a_2 \\ a_1 &= a_2 \end{aligned}$$

that has only the solution $a_1=a_2$, $b_1=b_2$, $c_1=c_2$, and $d_1=d_2$.

To show that $f$ is onto, we note that $p+qx+rx^2+sx^3$ is the image under $f$ of this matrix.

$$\begin{pmatrix} s & r-s \\ p & q-p \end{pmatrix}$$

We can check that $f$ preserves structure by using item 2 of Lemma 1.9.

- No, this map does not preserve structure. For instance, it does not send the zero matrix to the zero polynomial.
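The onto argument for the second item can be spot-checked numerically. This is only a minimal sketch under an assumption: it takes the second map to send the matrix with rows $(a, b)$ and $(c, d)$ to the four-tall vector $(a+b+c+d,\ a+b+c,\ a+b,\ a)$. The names `f`, `preimage`, and `combo` are hypothetical helpers, not part of the text.

```python
def f(m):
    """Assumed map: ((a, b), (c, d)) -> (a+b+c+d, a+b+c, a+b, a)."""
    (a, b), (c, d) = m
    return (a + b + c + d, a + b + c, a + b, a)


def preimage(v):
    """Matrix that f sends to v = (x, y, z, w), found by back-substitution."""
    x, y, z, w = v
    return ((w, z - w), (y - z, x - y))


def combo(r1, m1, r2, m2):
    """Linear combination r1*m1 + r2*m2 of two 2x2 matrices (tuples of rows)."""
    return tuple(
        tuple(r1 * p + r2 * q for p, q in zip(row1, row2))
        for row1, row2 in zip(m1, m2)
    )


v = (10, 7, 5, 2)
m = preimage(v)
print(m, f(m))  # f(m) reproduces v, so v is in the range of f
```

A check on a few sample values does not replace the proof, but it catches sign or ordering slips in the back-substitution.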

- This exercise is recommended for all readers.

- Problem 6

Refer to Example 1.1. Produce two more isomorphisms (of course, that they satisfy the conditions in the definition of isomorphism must be verified).

- Answer

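Many maps are possible. Here are two.

$$\begin{pmatrix} a & b \end{pmatrix} \mapsto \begin{pmatrix} b \\ a \end{pmatrix} \quad\text{and}\quad \begin{pmatrix} a & b \end{pmatrix} \mapsto \begin{pmatrix} 2a \\ b \end{pmatrix}$$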

The verifications are straightforward adaptations of the others above.

- Problem 7

Refer to Example 1.2. Produce two more isomorphisms (and verify that they satisfy the conditions).

- Answer

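Here are two.

$$a_0+a_1x+a_2x^2 \mapsto \begin{pmatrix} a_1 \\ a_0 \\ a_2 \end{pmatrix} \quad\text{and}\quad a_0+a_1x+a_2x^2 \mapsto \begin{pmatrix} a_0+a_1 \\ a_1 \\ a_2 \end{pmatrix}$$

Verification is straightforward (for the second, to show that it is onto, note that

$$\begin{pmatrix} s \\ t \\ u \end{pmatrix}$$

is the image of $(s-t)+tx+ux^2$).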

- This exercise is recommended for all readers.

- Problem 9

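Find two isomorphisms between $\mathbb{R}^{16}$ and $\mathcal{M}_{4\times 4}$.

- Answer

Here are two:

$$\begin{pmatrix} r_1 \\ r_2 \\ \vdots \\ r_{16} \end{pmatrix} \mapsto \begin{pmatrix} r_1 & r_2 & r_3 & r_4 \\ r_5 & r_6 & r_7 & r_8 \\ r_9 & r_{10} & r_{11} & r_{12} \\ r_{13} & r_{14} & r_{15} & r_{16} \end{pmatrix} \quad\text{and}\quad \begin{pmatrix} r_1 \\ r_2 \\ \vdots \\ r_{16} \end{pmatrix} \mapsto \begin{pmatrix} r_1 & r_5 & r_9 & r_{13} \\ r_2 & r_6 & r_{10} & r_{14} \\ r_3 & r_7 & r_{11} & r_{15} \\ r_4 & r_8 & r_{12} & r_{16} \end{pmatrix}$$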

Verification that each is an isomorphism is easy.

- This exercise is recommended for all readers.

- Problem 12

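Prove that the map in Example 1.7, from $\mathcal{P}_5$ to $\mathcal{P}_5$ given by $p(x)\mapsto p(x-1)$, is a vector space isomorphism.

- Answer

This is the map, expanded.

$$\begin{aligned} f(a_0+a_1x+\cdots+a_5x^5) &= a_0+a_1(x-1)+a_2(x-1)^2+a_3(x-1)^3+a_4(x-1)^4+a_5(x-1)^5 \\ &= (a_0-a_1+a_2-a_3+a_4-a_5) + (a_1-2a_2+3a_3-4a_4+5a_5)x \\ &\quad + (a_2-3a_3+6a_4-10a_5)x^2 + (a_3-4a_4+10a_5)x^3 + (a_4-5a_5)x^4 + a_5x^5 \end{aligned}$$

This map is a correspondence because it has an inverse, the map $p(x)\mapsto p(x+1)$.

To finish checking that it is an isomorphism, we apply item 2 of Lemma 1.9 and show that it preserves linear combinations of two polynomials. Briefly, the check goes like this.

$$f\big(c\cdot p(x)+d\cdot q(x)\big) = c\cdot p(x-1)+d\cdot q(x-1) = c\cdot f(p(x))+d\cdot f(q(x))$$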
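The expansion and the linearity check can be tried out on coefficient lists. The sketch below assumes the map is $p(x)\mapsto p(x-1)$ on polynomials of degree at most five, stored as lists `[a0, a1, ..., a5]`; the function names `shift` and `unshift` are hypothetical.

```python
from math import comb


def shift(coeffs):
    """Coefficients of p(x - 1), given the coefficients [a0, a1, ...] of p(x)."""
    out = [0] * len(coeffs)
    for k, a in enumerate(coeffs):
        # Binomial theorem: (x - 1)^k = sum_j C(k, j) * (-1)^(k - j) * x^j
        for j in range(k + 1):
            out[j] += a * comb(k, j) * (-1) ** (k - j)
    return out


def unshift(coeffs):
    """Coefficients of p(x + 1); this is the inverse of shift."""
    out = [0] * len(coeffs)
    for k, a in enumerate(coeffs):
        # Binomial theorem: (x + 1)^k = sum_j C(k, j) * x^j
        for j in range(k + 1):
            out[j] += a * comb(k, j)
    return out


p = [1, 1, 1, 1, 1, 1]
print(shift(p))           # coefficients of p(x - 1)
print(unshift(shift(p)))  # recovers p
```

Having an explicit inverse is what makes the map a correspondence; the linearity check then only needs combinations of two polynomials.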

- Problem 13

Why, in Lemma 1.8, must there be a $\vec{v}\in V$? That is, why must $V$ be nonempty?

- Answer

No vector space has the empty set underlying it. We can take $\vec{v}$ to be the zero vector.

- Problem 15

In the proof of Lemma 1.9, what about the zero-summands case (that is, if $n$ is zero)?

- Answer

A linear combination of $n=0$ vectors adds to the zero vector and so Lemma 1.8 shows that the three statements are equivalent in this case.

- This exercise is recommended for all readers.

- Problem 17

These prove that isomorphism is an equivalence relation.

- Show that the identity map $\text{id}:V\to V$ is an isomorphism. Thus, any vector space is isomorphic to itself.

- Show that if $f:V\to W$ is an isomorphism then so is its inverse $f^{-1}:W\to V$. Thus, if $V$ is isomorphic to $W$ then also $W$ is isomorphic to $V$.

- Show that a composition of isomorphisms is an isomorphism: if $f:V\to W$ is an isomorphism and $g:W\to U$ is an isomorphism then so also is $g\circ f:V\to U$. Thus, if $V$ is isomorphic to $W$ and $W$ is isomorphic to $U$, then also $V$ is isomorphic to $U$.

- Answer

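In each item, following item 2 of Lemma 1.9, we show that the map preserves structure by showing that it preserves linear combinations of two members of the domain.

- The identity map is clearly one-to-one and onto. For linear combinations the check is easy.

$$\text{id}(c_1\vec{v}_1+c_2\vec{v}_2) = c_1\vec{v}_1+c_2\vec{v}_2 = c_1\cdot\text{id}(\vec{v}_1)+c_2\cdot\text{id}(\vec{v}_2)$$

- The inverse of a correspondence is also a correspondence (as stated in the appendix), so we need only check that the inverse preserves linear combinations. Assume that $\vec{w}_1=f(\vec{v}_1)$ (so $f^{-1}(\vec{w}_1)=\vec{v}_1$) and assume that $\vec{w}_2=f(\vec{v}_2)$.

$$\begin{aligned} f^{-1}(c_1\vec{w}_1+c_2\vec{w}_2) &= f^{-1}\big(c_1f(\vec{v}_1)+c_2f(\vec{v}_2)\big) \\ &= f^{-1}\big(f(c_1\vec{v}_1+c_2\vec{v}_2)\big) \\ &= c_1\vec{v}_1+c_2\vec{v}_2 \\ &= c_1f^{-1}(\vec{w}_1)+c_2f^{-1}(\vec{w}_2) \end{aligned}$$

- The composition of two correspondences is a correspondence (as stated in the appendix), so we need only check that the composition map preserves linear combinations.

$$\begin{aligned} g\circ f(c_1\vec{v}_1+c_2\vec{v}_2) &= g\big(f(c_1\vec{v}_1+c_2\vec{v}_2)\big) \\ &= g\big(c_1f(\vec{v}_1)+c_2f(\vec{v}_2)\big) \\ &= c_1\cdot g(f(\vec{v}_1))+c_2\cdot g(f(\vec{v}_2)) \\ &= c_1\cdot g\circ f(\vec{v}_1)+c_2\cdot g\circ f(\vec{v}_2) \end{aligned}$$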

- Problem 19

Suppose that $f:V\to W$ is an isomorphism. Prove that the set $\{\vec{v}_1,\ldots,\vec{v}_k\}\subseteq V$ is linearly dependent if and only if the set of images $\{f(\vec{v}_1),\ldots,f(\vec{v}_k)\}\subseteq W$ is linearly dependent.

- Answer

We will prove something stronger: not only is the existence of a dependence preserved by isomorphism, but each instance of a dependence is preserved, that is,

$$\vec{v}_i = c_1\vec{v}_1+\cdots+c_{i-1}\vec{v}_{i-1}+c_{i+1}\vec{v}_{i+1}+\cdots+c_k\vec{v}_k \iff f(\vec{v}_i) = c_1f(\vec{v}_1)+\cdots+c_{i-1}f(\vec{v}_{i-1})+c_{i+1}f(\vec{v}_{i+1})+\cdots+c_kf(\vec{v}_k).$$

The $\Longrightarrow$ direction of this statement holds by item 3 of Lemma 1.9. The $\Longleftarrow$ direction holds by regrouping

$$f(\vec{v}_i) = c_1f(\vec{v}_1)+\cdots+c_kf(\vec{v}_k) = f(c_1\vec{v}_1+\cdots+c_k\vec{v}_k)$$

(where the sums omit the $i$-th term) and applying the fact that $f$ is one-to-one, and so for the two vectors $\vec{v}_i$ and $c_1\vec{v}_1+\cdots+c_k\vec{v}_k$ to be mapped to the same image by $f$, they must be equal.

- This exercise is recommended for all readers.

- Problem 20

Show that each type of map from Example 1.6 is an automorphism.

- Dilation $d_s$ by a nonzero scalar $s$.

- Rotation $t_\theta$ through an angle $\theta$.

- Reflection $f_\ell$ over a line through the origin.

Hint.

For the second and third items, polar coordinates are useful.

- Answer

- This map is one-to-one because if $d_s(\vec{v}_1)=d_s(\vec{v}_2)$ then by definition of the map, $s\cdot\vec{v}_1=s\cdot\vec{v}_2$ and so $\vec{v}_1=\vec{v}_2$, as $s$ is nonzero. This map is onto as any $\vec{w}\in\mathbb{R}^2$ is the image of $\vec{v}=(1/s)\cdot\vec{w}$ (again, note that $s$ is nonzero). (Another way to see that this map is a correspondence is to observe that it has an inverse: the inverse of $d_s$ is $d_{1/s}$.)

To finish, note that this map preserves linear combinations

$$d_s(c_1\vec{v}_1+c_2\vec{v}_2) = s(c_1\vec{v}_1+c_2\vec{v}_2) = c_1s\vec{v}_1+c_2s\vec{v}_2 = c_1\cdot d_s(\vec{v}_1)+c_2\cdot d_s(\vec{v}_2)$$

and therefore is an isomorphism.

- As in the prior item, we can show that the map $t_\theta$ is a correspondence by noting that it has an inverse, $t_{-\theta}$.

That the map preserves structure is geometrically easy to see. For instance, adding two vectors and then rotating them has the same effect as rotating first and then adding. For an algebraic argument, consider polar coordinates: the map $t_\theta$ sends the vector with endpoint $(r,\phi)$ to the vector with endpoint $(r,\phi+\theta)$. Then the familiar trigonometric formulas $\cos(\phi+\theta)=\cos\phi\cos\theta-\sin\phi\sin\theta$ and $\sin(\phi+\theta)=\sin\phi\cos\theta+\cos\phi\sin\theta$ show how to express the map's action in the usual rectangular coordinate system.

$$\begin{pmatrix} x \\ y \end{pmatrix} = \begin{pmatrix} r\cos\phi \\ r\sin\phi \end{pmatrix} \mapsto \begin{pmatrix} r\cos(\phi+\theta) \\ r\sin(\phi+\theta) \end{pmatrix} = \begin{pmatrix} x\cos\theta-y\sin\theta \\ x\sin\theta+y\cos\theta \end{pmatrix}$$

Now the calculation for preservation of addition

$$t_\theta\left(\begin{pmatrix} x_1 \\ y_1 \end{pmatrix}+\begin{pmatrix} x_2 \\ y_2 \end{pmatrix}\right) = \begin{pmatrix} (x_1+x_2)\cos\theta-(y_1+y_2)\sin\theta \\ (x_1+x_2)\sin\theta+(y_1+y_2)\cos\theta \end{pmatrix} = t_\theta\left(\begin{pmatrix} x_1 \\ y_1 \end{pmatrix}\right)+t_\theta\left(\begin{pmatrix} x_2 \\ y_2 \end{pmatrix}\right)$$

is routine.

The calculation for preservation of scalar multiplication is similar.

-

This map is a correspondence because it has an inverse (namely, itself).

As in the last item, that the reflection map preserves structure is geometrically easy to see: adding vectors and then reflecting gives the same result as reflecting first and then adding, for instance. For an algebraic proof, suppose that the line

has slope

has slope  (the case of a line with undefined slope can be done as a separate, but easy, case). We can follow the hint and use polar coordinates: where the line

(the case of a line with undefined slope can be done as a separate, but easy, case). We can follow the hint and use polar coordinates: where the line  forms an angle of

forms an angle of  with the

with the  -axis, the action of

-axis, the action of  is to send the vector with endpoint

is to send the vector with endpoint  to the one with endpoint

to the one with endpoint  .

.

To convert to rectangular coordinates, we will use some trigonometric formulas, as we did in the prior item. First observe that  and

and  can be determined from the slope

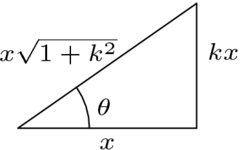

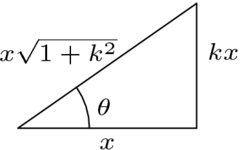

can be determined from the slope  of the line. This picture

of the line. This picture

gives that  and

and  . Now,

. Now,

and thus the first component of the image vector is this.

A similar calculation shows that the second component of the image

vector is this.

With this algebraic description of the action of

checking that it preserves structure is routine.
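The rectangular description of the reflection is easy to sanity-check numerically. The following sketch assumes the line is $y=kx$ and that reflection sends $(x, y)$ to $\big(\frac{(1-k^2)x+2ky}{1+k^2},\ \frac{2kx-(1-k^2)y}{1+k^2}\big)$; the function name `reflect` is hypothetical.

```python
import math


def reflect(k, v):
    """Reflect v = (x, y) over the line y = k*x (assumed rectangular formula)."""
    x, y = v
    s = 1 + k * k
    return (((1 - k * k) * x + 2 * k * y) / s,
            (2 * k * x - (1 - k * k) * y) / s)


def close(u, v):
    """Componentwise floating-point comparison of two vectors."""
    return all(math.isclose(a, b, abs_tol=1e-12) for a, b in zip(u, v))


k = 2.0
v = (3.0, -1.0)
print(reflect(k, v))
print(close(reflect(k, reflect(k, v)), v))  # reflecting twice returns v
```

Three properties are worth checking: a vector on the line is fixed, reflecting twice is the identity (so the map is its own inverse), and the image of a sum is the sum of the images.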

- Problem 22

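- Show that a function $f:\mathbb{R}^1\to\mathbb{R}^1$ is an automorphism if and only if it has the form $x\mapsto kx$ for some $k\neq 0$.

- Let $f$ be an automorphism of $\mathbb{R}^1$ such that $f(3)=7$. Find $f(-2)$.

- Show that a function $f:\mathbb{R}^2\to\mathbb{R}^2$ is an automorphism if and only if it has the form

$$\begin{pmatrix} x \\ y \end{pmatrix} \mapsto \begin{pmatrix} ax+by \\ cx+dy \end{pmatrix}$$

for some $a,b,c,d\in\mathbb{R}$ with $ad-bc\neq 0$. Hint. Exercises in prior subsections have shown that

$$\begin{pmatrix} b \\ d \end{pmatrix} \text{ is not a multiple of } \begin{pmatrix} a \\ c \end{pmatrix}$$

if and only if $ad-bc\neq 0$.

- Let $f$ be an automorphism of $\mathbb{R}^2$ with

$$f\left(\begin{pmatrix} 1 \\ 3 \end{pmatrix}\right) = \begin{pmatrix} 2 \\ -1 \end{pmatrix} \quad\text{and}\quad f\left(\begin{pmatrix} 1 \\ 4 \end{pmatrix}\right) = \begin{pmatrix} 0 \\ 1 \end{pmatrix}.$$

Find

$$f\left(\begin{pmatrix} 0 \\ -1 \end{pmatrix}\right).$$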

- Answer

- For the "only if" half, let $f:\mathbb{R}^1\to\mathbb{R}^1$ be an isomorphism. Consider the basis $\langle 1\rangle\subseteq\mathbb{R}^1$. Designate $f(1)$ by $k$. Then for any $x$ we have that $f(x)=f(x\cdot 1)=x\cdot f(1)=xk$, and so $f$'s action is multiplication by $k$. To finish this half, just note that $k\neq 0$ or else $f$ would not be one-to-one.

For the "if" half we only have to check that such a map is an isomorphism when $k\neq 0$. To check that it is one-to-one, assume that $f(x_1)=f(x_2)$ so that $kx_1=kx_2$ and divide by the nonzero factor $k$ to conclude that $x_1=x_2$. To check that it is onto, note that any $y\in\mathbb{R}^1$ is the image of $x=y/k$ (again, $k\neq 0$). Finally, to check that such a map preserves combinations of two members of the domain, we have this.

$$f(c_1x_1+c_2x_2) = k(c_1x_1+c_2x_2) = c_1kx_1+c_2kx_2 = c_1f(x_1)+c_2f(x_2)$$

- By the prior item, $f$'s action is $x\mapsto (7/3)x$. Thus $f(-2)=-14/3$.

- For the "only if" half, assume that $f:\mathbb{R}^2\to\mathbb{R}^2$ is an automorphism. Consider the standard basis $\mathcal{E}_2$ for $\mathbb{R}^2$. Let

$$f(\vec{e}_1) = \begin{pmatrix} a \\ c \end{pmatrix} \quad\text{and}\quad f(\vec{e}_2) = \begin{pmatrix} b \\ d \end{pmatrix}.$$

Then the action of $f$ on any vector is determined by its action on the two basis vectors.

$$f\left(\begin{pmatrix} x \\ y \end{pmatrix}\right) = f(x\cdot\vec{e}_1+y\cdot\vec{e}_2) = x\cdot f(\vec{e}_1)+y\cdot f(\vec{e}_2) = x\cdot\begin{pmatrix} a \\ c \end{pmatrix}+y\cdot\begin{pmatrix} b \\ d \end{pmatrix} = \begin{pmatrix} ax+by \\ cx+dy \end{pmatrix}$$

To finish this half, note that if $ad-bc=0$, that is, if $f(\vec{e}_2)$ is a multiple of $f(\vec{e}_1)$, then $f$ is not one-to-one.

For "if" we must check that the map is an isomorphism, under the condition that $ad-bc\neq 0$. The structure-preservation check is easy; we will here show that $f$ is a correspondence. For the argument that the map is one-to-one, assume this.

$$f\left(\begin{pmatrix} x_1 \\ y_1 \end{pmatrix}\right) = f\left(\begin{pmatrix} x_2 \\ y_2 \end{pmatrix}\right) \quad\text{and so}\quad \begin{pmatrix} ax_1+by_1 \\ cx_1+dy_1 \end{pmatrix} = \begin{pmatrix} ax_2+by_2 \\ cx_2+dy_2 \end{pmatrix}$$

Then, because $ad-bc\neq 0$, the resulting system

$$\begin{aligned} a(x_1-x_2)+b(y_1-y_2) &= 0 \\ c(x_1-x_2)+d(y_1-y_2) &= 0 \end{aligned}$$

has a unique solution, namely the trivial one $x_1-x_2=0$ and $y_1-y_2=0$ (this follows from the hint).

The argument that this map is onto is closely related: this system

$$\begin{aligned} ax_1+by_1 &= x \\ cx_1+dy_1 &= y \end{aligned}$$

has a solution for any $x$ and $y$ if and only if this set

$$\left\{\begin{pmatrix} a \\ c \end{pmatrix}, \begin{pmatrix} b \\ d \end{pmatrix}\right\}$$

spans $\mathbb{R}^2$, i.e., if and only if this set is a basis (because it is a two-element subset of $\mathbb{R}^2$), i.e., if and only if $ad-bc\neq 0$.

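- Because $f$ preserves linear combinations,

$$f\left(\begin{pmatrix} 0 \\ -1 \end{pmatrix}\right) = f\left(\begin{pmatrix} 1 \\ 3 \end{pmatrix}-\begin{pmatrix} 1 \\ 4 \end{pmatrix}\right) = f\left(\begin{pmatrix} 1 \\ 3 \end{pmatrix}\right)-f\left(\begin{pmatrix} 1 \\ 4 \end{pmatrix}\right) = \begin{pmatrix} 2 \\ -1 \end{pmatrix}-\begin{pmatrix} 0 \\ 1 \end{pmatrix} = \begin{pmatrix} 2 \\ -2 \end{pmatrix}.$$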
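The role of the condition $ad-bc\neq 0$ in the third item can be illustrated with exact rational arithmetic. This is a minimal sketch, assuming integer entries and the map $(x, y)\mapsto(ax+by,\ cx+dy)$; the helper name `make_map` is hypothetical, and the inverse is computed with Cramer's rule rather than by any method the text prescribes.

```python
from fractions import Fraction


def make_map(a, b, c, d):
    """Return (f, f_inv) for (x, y) -> (a*x + b*y, c*x + d*y).

    Raises ValueError when a*d - b*c == 0, since the map is then
    neither one-to-one nor onto.
    """
    det = a * d - b * c
    if det == 0:
        raise ValueError("ad - bc = 0: the map is not an automorphism")

    def f(v):
        x, y = v
        return (a * x + b * y, c * x + d * y)

    def f_inv(w):
        p, q = w
        # Cramer's rule for the system a*x + b*y = p, c*x + d*y = q
        return (Fraction(d * p - b * q, det), Fraction(a * q - c * p, det))

    return f, f_inv


f, f_inv = make_map(1, 2, 3, 4)
print(f((1, 1)))         # image of (1, 1)
print(f_inv(f((1, 1))))  # recovers (1, 1) as exact fractions
```

Because a unique preimage exists for every target vector exactly when the determinant is nonzero, the same computation covers both the one-to-one and the onto arguments.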

- Problem 23

Refer to Lemma 1.8 and Lemma 1.9. Find two more things preserved by isomorphism.

- Answer

There are many answers; two are linear independence and subspaces.

To show that if a set $\{\vec{v}_1,\ldots,\vec{v}_n\}$ is linearly independent then its image $\{f(\vec{v}_1),\ldots,f(\vec{v}_n)\}$ is also linearly independent, consider a linear relationship among members of the image set.

$$\vec{0} = c_1f(\vec{v}_1)+\cdots+c_nf(\vec{v}_n) = f(c_1\vec{v}_1)+\cdots+f(c_n\vec{v}_n) = f(c_1\vec{v}_1+\cdots+c_n\vec{v}_n)$$

Because this map is an isomorphism, it is one-to-one. So $f$ maps only one vector from the domain to the zero vector in the range, that is, $c_1\vec{v}_1+\cdots+c_n\vec{v}_n$ equals the zero vector (in the domain, of course). But, if $\{\vec{v}_1,\ldots,\vec{v}_n\}$ is linearly independent then all of the $c$'s are zero, and so $\{f(\vec{v}_1),\ldots,f(\vec{v}_n)\}$ is linearly independent also. (Remark. There is a small point about this argument that is worth mention. In a set, repeats collapse, that is, strictly speaking, this is a one-element set: $\{\vec{v},\vec{v}\}$, because the things listed as in it are the same thing. Observe, however, the use of the subscript $n$ in the above argument. In moving from the domain set $\{\vec{v}_1,\ldots,\vec{v}_n\}$ to the image set $\{f(\vec{v}_1),\ldots,f(\vec{v}_n)\}$, there is no collapsing, because the image set does not have repeats, because the isomorphism $f$ is one-to-one.)

To show that if $f:V\to W$ is an isomorphism and if $U$ is a subspace of the domain $V$ then the set of image vectors $f(U)=\{\vec{w}\in W \mid \vec{w}=f(\vec{u}) \text{ for some } \vec{u}\in U\}$ is a subspace of $W$, we need only show that it is closed under linear combinations of two of its members (it is nonempty because it contains the image of the zero vector). We have

$$c_1\cdot f(\vec{u}_1)+c_2\cdot f(\vec{u}_2) = f(c_1\vec{u}_1)+f(c_2\vec{u}_2) = f(c_1\vec{u}_1+c_2\vec{u}_2)$$

and $c_1\vec{u}_1+c_2\vec{u}_2$ is a member of $U$ because of the closure of a subspace under combinations. Hence the combination of $f(\vec{u}_1)$ and $f(\vec{u}_2)$ is a member of $f(U)$.

- Problem 24

We show that isomorphisms can be tailored to fit in that, sometimes, given vectors in the domain and in the range we can produce an isomorphism associating those vectors.

- Let $B=\langle\vec{\beta}_1,\vec{\beta}_2,\vec{\beta}_3\rangle$ be a basis for $\mathcal{P}_2$ so that any $\vec{p}\in\mathcal{P}_2$ has a unique representation as $\vec{p}=c_1\vec{\beta}_1+c_2\vec{\beta}_2+c_3\vec{\beta}_3$, which we denote in this way.

$$\text{Rep}_B(\vec{p}) = \begin{pmatrix} c_1 \\ c_2 \\ c_3 \end{pmatrix}$$

Show that the $\text{Rep}_B(\cdot)$ operation is a function from $\mathcal{P}_2$ to $\mathbb{R}^3$ (this entails showing that with every domain vector $\vec{v}\in\mathcal{P}_2$ there is an associated image vector in $\mathbb{R}^3$, and further, that with every domain vector $\vec{v}\in\mathcal{P}_2$ there is at most one associated image vector).

- Show that this $\text{Rep}_B(\cdot)$ function is one-to-one and onto.

- Show that it preserves structure.

- Produce an isomorphism from $\mathcal{P}_2$ to $\mathbb{R}^3$ that fits these specifications.

$$x+x^2 \mapsto \begin{pmatrix} 1 \\ 0 \\ 0 \end{pmatrix} \quad\text{and}\quad 1-x \mapsto \begin{pmatrix} 0 \\ 1 \\ 0 \end{pmatrix}$$

- Answer

- The association

$$\vec{p} = c_1\vec{\beta}_1+c_2\vec{\beta}_2+c_3\vec{\beta}_3 \;\mapsto\; \text{Rep}_B(\vec{p}) = \begin{pmatrix} c_1 \\ c_2 \\ c_3 \end{pmatrix}$$

is a function if every member $\vec{p}$ of the domain is associated with at least one member of the codomain, and if every member $\vec{p}$ of the domain is associated with at most one member of the codomain. The first condition holds because the basis $B$ spans the domain: every $\vec{p}$ can be written as at least one linear combination of $\vec{\beta}$'s. The second condition holds because the basis $B$ is linearly independent: every member $\vec{p}$ of the domain can be written as at most one linear combination of the $\vec{\beta}$'s.

- For the one-to-one argument, if $\text{Rep}_B(\vec{p})=\text{Rep}_B(\vec{q})$, that is, if $\text{Rep}_B(p_1\vec{\beta}_1+p_2\vec{\beta}_2+p_3\vec{\beta}_3)=\text{Rep}_B(q_1\vec{\beta}_1+q_2\vec{\beta}_2+q_3\vec{\beta}_3)$ then

$$\begin{pmatrix} p_1 \\ p_2 \\ p_3 \end{pmatrix} = \begin{pmatrix} q_1 \\ q_2 \\ q_3 \end{pmatrix}$$

and so $p_1=q_1$ and $p_2=q_2$ and $p_3=q_3$, which gives the conclusion that $\vec{p}=\vec{q}$. Therefore this map is one-to-one.

For onto, we can just note that

$$\begin{pmatrix} a \\ b \\ c \end{pmatrix}$$

equals $\text{Rep}_B(a\vec{\beta}_1+b\vec{\beta}_2+c\vec{\beta}_3)$, and so any member of the codomain $\mathbb{R}^3$ is the image of some member of the domain $\mathcal{P}_2$.

- This map respects addition and scalar multiplication because it respects combinations of two members of the domain (that is, we are using item 2 of Lemma 1.9): where $\vec{p}=p_1\vec{\beta}_1+p_2\vec{\beta}_2+p_3\vec{\beta}_3$ and $\vec{q}=q_1\vec{\beta}_1+q_2\vec{\beta}_2+q_3\vec{\beta}_3$, we have this.

$$\begin{aligned} \text{Rep}_B(c\cdot\vec{p}+d\cdot\vec{q}) &= \text{Rep}_B\big((cp_1+dq_1)\vec{\beta}_1+(cp_2+dq_2)\vec{\beta}_2+(cp_3+dq_3)\vec{\beta}_3\big) \\ &= \begin{pmatrix} cp_1+dq_1 \\ cp_2+dq_2 \\ cp_3+dq_3 \end{pmatrix} = c\cdot\begin{pmatrix} p_1 \\ p_2 \\ p_3 \end{pmatrix}+d\cdot\begin{pmatrix} q_1 \\ q_2 \\ q_3 \end{pmatrix} \\ &= c\cdot\text{Rep}_B(\vec{p})+d\cdot\text{Rep}_B(\vec{q}) \end{aligned}$$

- Use any basis $B$ for $\mathcal{P}_2$ whose first two members are $x+x^2$ and $1-x$, say $B=\langle x+x^2,\,1-x,\,1\rangle$.
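A representation map of this kind can be computed directly once a basis is fixed. The sketch below assumes the domain is the quadratic polynomials, stored as coefficient triples `(a0, a1, a2)`, and assumes the basis $\langle x+x^2,\ 1-x,\ 1\rangle$; both the basis choice and the function name `rep` are illustrative assumptions.

```python
def rep(p):
    """Coordinates of p = (a0, a1, a2), meaning a0 + a1*x + a2*x^2,
    with respect to the assumed basis <x + x^2, 1 - x, 1>.

    Solves c1*(x + x^2) + c2*(1 - x) + c3*1 = p by matching coefficients.
    """
    a0, a1, a2 = p
    c1 = a2        # only x + x^2 contributes an x^2 term
    c2 = c1 - a1   # x coefficient: c1 - c2 = a1
    c3 = a0 - c2   # constant term: c2 + c3 = a0
    return (c1, c2, c3)


print(rep((0, 1, 1)))   # x + x^2
print(rep((1, -1, 0)))  # 1 - x
```

Since each coefficient triple yields exactly one coordinate triple, the computation mirrors the "at least one" and "at most one" conditions in the first item.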

- Problem 26

(Requires the subsection on Combining Subspaces, which is optional.) Let $U$ and $W$ be vector spaces. Define a new vector space, consisting of the set $U\times W=\{(\vec{u},\vec{w}) \mid \vec{u}\in U \text{ and } \vec{w}\in W\}$ along with these operations.

$$(\vec{u}_1,\vec{w}_1)+(\vec{u}_2,\vec{w}_2) = (\vec{u}_1+\vec{u}_2,\vec{w}_1+\vec{w}_2) \quad\text{and}\quad r\cdot(\vec{u},\vec{w}) = (r\vec{u},r\vec{w})$$

This is a vector space, the external direct sum of $U$ and $W$.

- Check that it is a vector space.

- Find a basis for, and the dimension of, the external direct sum $\mathcal{P}_2\times\mathbb{R}^2$.

- What is the relationship among $\dim(U)$, $\dim(W)$, and $\dim(U\times W)$?

- Suppose that $U$ and $W$ are subspaces of a vector space $V$ such that $V=U\oplus W$ (in this case we say that $V$ is the internal direct sum of $U$ and $W$). Show that the map $f:U\times W\to V$ given by

$$(\vec{u},\vec{w}) \mapsto \vec{u}+\vec{w}$$

is an isomorphism. Thus if the internal direct sum is defined then the internal and external direct sums are isomorphic.

- Answer

- Most of the conditions in the definition of a vector space are routine. We here sketch the verification of part 1 of that definition.

For closure of $U\times W$, note that because $U$ and $W$ are closed, we have that $\vec{u}_1+\vec{u}_2\in U$ and $\vec{w}_1+\vec{w}_2\in W$ and so $(\vec{u}_1+\vec{u}_2,\vec{w}_1+\vec{w}_2)\in U\times W$. Commutativity of addition in $U\times W$ follows from commutativity of addition in $U$ and $W$.

$$(\vec{u}_1,\vec{w}_1)+(\vec{u}_2,\vec{w}_2) = (\vec{u}_1+\vec{u}_2,\vec{w}_1+\vec{w}_2) = (\vec{u}_2+\vec{u}_1,\vec{w}_2+\vec{w}_1) = (\vec{u}_2,\vec{w}_2)+(\vec{u}_1,\vec{w}_1)$$

The check for associativity of addition is similar. The zero element is $(\vec{0}_U,\vec{0}_W)\in U\times W$ and the additive inverse of $(\vec{u},\vec{w})$ is $(-\vec{u},-\vec{w})$.

The checks for the second part of the definition of a vector space are also straightforward.

- This is a basis

$$\left\langle \left(1,\begin{pmatrix} 0 \\ 0 \end{pmatrix}\right), \left(x,\begin{pmatrix} 0 \\ 0 \end{pmatrix}\right), \left(x^2,\begin{pmatrix} 0 \\ 0 \end{pmatrix}\right), \left(0,\begin{pmatrix} 1 \\ 0 \end{pmatrix}\right), \left(0,\begin{pmatrix} 0 \\ 1 \end{pmatrix}\right) \right\rangle$$

because there is one and only one way to represent any member of $\mathcal{P}_2\times\mathbb{R}^2$ with respect to this set; here is an example.

$$\left(3+2x+x^2,\begin{pmatrix} 5 \\ 4 \end{pmatrix}\right) = 3\left(1,\begin{pmatrix} 0 \\ 0 \end{pmatrix}\right)+2\left(x,\begin{pmatrix} 0 \\ 0 \end{pmatrix}\right)+\left(x^2,\begin{pmatrix} 0 \\ 0 \end{pmatrix}\right)+5\left(0,\begin{pmatrix} 1 \\ 0 \end{pmatrix}\right)+4\left(0,\begin{pmatrix} 0 \\ 1 \end{pmatrix}\right)$$

The dimension of this space is five.

- We have $\dim(U\times W)=\dim(U)+\dim(W)$ as this is a basis.

$$\langle (\vec{\mu}_1,\vec{0}_W),\ldots,(\vec{\mu}_{\dim(U)},\vec{0}_W),(\vec{0}_U,\vec{\omega}_1),\ldots,(\vec{0}_U,\vec{\omega}_{\dim(W)}) \rangle$$

- We know that if $V=U\oplus W$ then each $\vec{v}\in V$ can be written as $\vec{v}=\vec{u}+\vec{w}$ in one and only one way. This is just what we need to prove that the given function is an isomorphism.

First, to show that $f$ is one-to-one we can show that if $f((\vec{u}_1,\vec{w}_1))=f((\vec{u}_2,\vec{w}_2))$, that is, if $\vec{u}_1+\vec{w}_1=\vec{u}_2+\vec{w}_2$ then $\vec{u}_1=\vec{u}_2$ and $\vec{w}_1=\vec{w}_2$. But the statement "each $\vec{v}$ is such a sum in only one way" is exactly what is needed to make this conclusion. Similarly, the argument that $f$ is onto is completed by the statement that "each $\vec{v}$ is such a sum in at least one way".

This map also preserves linear combinations

$$\begin{aligned} f\big(c_1\cdot(\vec{u}_1,\vec{w}_1)+c_2\cdot(\vec{u}_2,\vec{w}_2)\big) &= f\big((c_1\vec{u}_1+c_2\vec{u}_2,\,c_1\vec{w}_1+c_2\vec{w}_2)\big) \\ &= c_1\vec{u}_1+c_2\vec{u}_2+c_1\vec{w}_1+c_2\vec{w}_2 \\ &= c_1\cdot f((\vec{u}_1,\vec{w}_1))+c_2\cdot f((\vec{u}_2,\vec{w}_2)) \end{aligned}$$

and so it is an isomorphism.

![{\displaystyle f^{-1}(x)={\sqrt[{3}]{x}}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/c7c3ee50a06fa60166baec42d9c2200474fbf2a1)