Internet Governance/Print version

The current, editable version of this book is available in Wikibooks, the open-content textbooks collection, at

https://en.wikibooks.org/wiki/Internet_Governance

Foreword

Since its origins over 30 years ago, the Internet has become a major new global telecommunications infrastructure. It is no wonder, then, that it has become a central topic in the more general discussion being held under the auspices of the Information Society. A World Summit on the Information Society (WSIS) was first held in December 2003 in Geneva. At that Summit, a number of Millennium Development Goals (MDGs) were discussed, focused on harnessing Information and Communications Technology (ICT) for the benefit of the world’s population. The Internet, seen as a prototype for the technologies that would underlie the Information Society, understandably became a focal point (and a flashpoint) of discussions. Ultimately, in response to debates over the concept of “Internet governance”, a Working Group on Internet Governance (WGIG) was established, with the objective of defining the term and providing input to the second phase of the World Summit, planned for Tunis in November 2005. The Working Group released its report on 18 July 2005 and offered a definition of Internet governance as well as some options for approaches to it. The primer you have before you is intended to provide background on the Internet and its operation as a contribution to the dialogue underway in preparation for the next Summit.

The Internet is a global, distributed system of hundreds of thousands, if not millions, of independently operated and interconnected computer communication networks. All of these networks use a standard set of protocols, sometimes referred to as the TCP/IP protocol suite. TCP and IP are the core protocols of the Internet and had their origins in research sponsored by the U.S. Defense Advanced Research Projects Agency in 1973. It is a system that, by design, is relatively insensitive to national boundaries.

For most of its existence, the Internet has been developed in the private sector with very light oversight on the part of the U.S. Government. The official roll-out of the system occurred on 1 January 1983 in the U.S. and at locations in the UK and Norway. By 1994, the Internet was becoming available to the public through commercial networks, and by 1995, a collection of commercial, interconnected public Internet backbones had replaced the private, U.S. Government-sponsored backbone. The scene was set for the so-called “dot-com boom” of the late 1990s. During this period, massive amounts of capital went into “Internet” companies, many of them bereft of serious business models other than a plan to go public. By April 2000, the bubble had burst. But the Internet continued to grow, even during the financial winter following the madness. Amidst the hyperbole, some very successful Internet businesses thrived or were born (e.g., Amazon, eBay, Google). Not counting early research in the 1970s, Voice over Internet Protocol (VoIP) arrived in 1993 but did not really take off until 10 years later, threatening to disrupt the century-old business model of the local and inter-exchange carriers. As the network and its applications became more widespread, and as the global economy began to rely upon its operation, governments began to realize that this new infrastructure, and what was done with it, might be tactically and strategically important to the well-being of their citizens.

The Government of Tunisia called for the second phase of WSIS, which was to focus not solely on the Internet but on the more general notion of a global economy interlinked in a web of information, and on the ability to process that information with powerful, programmable and often portable tools. As the discussions unfolded in Geneva in 2003 and in regional fora, debates ensued as to the meaning of “governance” in this online environment. Particular focus was placed on Internet governance and on the role that the Internet Corporation for Assigned Names and Numbers (ICANN) plays in that arena.

In the course of the WSIS debates, there have been calls for increased governmental oversight and even regulation of the Internet. It is important to recall that this is a network of hundreds of thousands of networks, not a single entity run by a single organization. It has operating components around the world. It has over a billion users. It is, by design, distributed, and its components are operated by a vast range of government, private sector and academic organizations. Few would dispute the importance of the Internet and its content to a wide swath of modern society. It is therefore vital that in the debates surrounding the perceived abuses of the Internet, we do not destroy all that is so beneficial in this system of systems.

One of the central reasons for the Internet’s success thus far has been its largely apolitical management. It seems important that any modifications to the general oversight and operation of the Internet avoid unnecessary and disruptive politicization. The Internet should remain a key infrastructure and not become a political football, subject to disputes between or among countries. We should build on the existing systems and bodies that have thus far served the Internet community with reasonable success. With few exceptions, most of the public policy issues associated with the Internet lie outside the purview of ICANN and can and should be addressed in different venues. For example, ‘spam’ and its instant messaging and Internet telephony relatives, ‘spim’ and ‘spit’, are pernicious practices that may only be successfully addressed through legal means, although there are some technical measures that can be undertaken by Internet Service Providers (ISPs) and end users to filter out the unwanted messages. Similarly, fraudulent practices such as ‘phishing’ and ‘pharming’ may best be addressed through legal means. Intellectual property protection may, in part, be addressed through the World Intellectual Property Organization (WIPO) and business disputes through the World Trade Organization (WTO) or through alternative dispute resolution methods such as mediation and arbitration.

As these examples suggest, there can be little doubt that the development of a global Information Society will require extraordinary cooperation, collaboration and coordination. The Internet and its many players illustrate this observation and draw attention to what is possible when a spirit of cooperation can be fostered in the long term.

Only through understanding the full range of players in the Internet arena, their roles, responsibilities, authorities and limits of capabilities can we fashion reasonable outcomes for Internet governance and, more generally, an agenda for the development of an Information Society. This primer represents contributions towards the dialogue that is needed to frame Information Society goals and the methods to achieve them. The participants in these dialogues have an opportunity to help shape a constructive approach to the opportunities before us. We can but hope that they will take up the challenge and pursue the MDGs in a positive and successful fashion.

Vinton G. Cerf (India)

Chairman of the Board, ICANN

Preface

Preface to the First Edition

At the start of 2005, there were an estimated 750 million Internet users worldwide. This figure is expected to grow exponentially in the coming years, particularly in the Asia-Pacific region, home to over half the world’s population. In fact, the Asia-Pacific region already contributes a larger share of users (about one-third of the total) than either North America or Europe.

Inevitably, such numbers will have a profound impact on the structure and use of the Internet. In turn, this impact will have a transformative effect on development around the world. Making the Internet work for sustainable human development, therefore, requires policies and interventions that are responsive to the needs of all countries. It requires a strong voice from different stakeholders and their constructive engagement in the policy-making processes related to Internet governance.

Achieving these goals is a challenge, especially for developing countries, which participate in the governance process at a disadvantage. Most of the foundational rules of the Internet are already well-established, or under long-term negotiation. Newcomers to the Internet have had little opportunity to generate awareness among stakeholder groups, mobilize the required policy expertise and coordinate strategies for effective engagement.

Developing countries are further challenged by the global nature of the Internet, which means that many areas of governance require cooperation at the global level, often in fora dominated by the developed world. Furthermore, participating in conferences and meetings at these fora is often expensive, or otherwise difficult for stakeholders from developing countries.

In sum, the continuing march of Internet governance threatens to leave developing countries behind. Fortunately, such an outcome is not inevitable. The Open Regional Dialogue on Internet Governance (ORDIG) is a response to this threat, and an attempt to transform the challenges of governance into an opportunity. Since October 2004, ORDIG has gathered and analyzed perspectives and priorities through an extensive multi-stakeholder and participatory process that has involved more than 3,000 people in the Asia-Pacific region. This represents the first step toward greater involvement by the region, and particularly by traditionally under-represented nations.

ORDIG activities thus far have included a regional online discussion forum involving more than 180 participants; a multilingual survey on Internet governance that collected 1,243 responses from 37 countries; a series of sub-regional consultations; and research on topics and issues such as access costs, voice over Internet protocol, root servers, country code top-level domains, internationalized domain names, IP address management, content pollution, and cybercrime.

Based on these activities and research, ORDIG has produced a report entitled “Voices from Asia-Pacific: Internet Governance Priorities and Recommendations”, which was considered by the High Level Asia-Pacific Conference for WSIS in Tehran, 31 May–2 June 2005, and was tabled at the Fourth Meeting of the UN Working Group on Internet Governance in Geneva in June 2005.

This primer is another important output. It is designed to help all stakeholders (government, private sector and civil society) gain quick access to basic facts, concepts and priority issues, laying the foundation for a comprehensive understanding of Internet governance issues from a distinctively Asia-Pacific perspective.

We welcome and encourage feedback from any constituency in the Asia-Pacific region. For more information on ORDIG, please visit the online knowledge portal at www.igov.apdip.net or contact info@igov.apdip.net.

Shahid Akhtar

Programme Coordinator, UNDP-APDIP

Introduction

- We are creating a world that all may enter without privilege or prejudice accorded by race, economic power, military force, or station of birth.

- We are creating a world where anyone, anywhere may express his or her beliefs, no matter how singular, without fear of being coerced into silence or conformity.

- We will create a civilization of the Mind in Cyberspace. May it be more humane and fair than the world your governments have made before.

- — John Perry Barlow, “A Declaration of the Independence of Cyberspace”, 1996

Almost a decade has passed since Barlow wrote his famous Declaration of Independence. Those were heady times. Netscape’s phenomenal Initial Public Offering (IPO) had taken place a year earlier, launching a thousand (often short-lived) fortunes, and apparently transforming the social, economic and even political landscape. Every week seemed to herald a new innovation, a new technology or company that appeared to vindicate Barlow’s utopian vision. Indeed, as Lawrence Lessig argued in his influential book, Code and Other Laws of Cyberspace, the rise of the Internet was accompanied by a euphoria akin to that which followed the collapse of the Berlin Wall [1].

Since that time, the Internet has significantly matured. Today it is no longer a novelty or a curiosity; and, while significant economic opportunities remain, the get-rich frontier mentality of the 1990s is a fading memory. Indeed, for many users, particularly those in developed countries and urban centres, the Internet is so woven into the fabric of daily life that it is easy to forget just how special and transformative the network really is. In a sense, the Internet has shifted from the foreground to the background: as a global infrastructure that drives our economic and social life, it is today the engine behind many of the events and developments that we consider most newsworthy or attention-grabbing.

It is easy, given such conditions, to take the Internet for granted. But in fact, for all its staying power and phenomenal growth, the network remains in some senses a delicate, and even fragile, phenomenon. It relies on a bedrock of technical standards that are the outcome of a finely balanced consensus among users, government officials, business, and members of the disparate technology communities. Its global reach – what experts call the network’s global seamlessness – must always navigate the shoals of competing legal jurisdictions and various concerns over national sovereignty. More generally, a host of agreements, laws, treaties, institutions, technical protocols, and non-binding precedents function in a tenuous coalition to ensure the smooth functioning and stability of the network.

Together, these various forces, which collectively determine what can and cannot happen on the network, constitute the broad concept of Internet governance. In what follows, we offer an overview of that concept, discussing its history, the issues at stake, and the various actors involved. One of the challenges, in any such discussion, is providing some conceptual clarity to what is often a nebulously defined field. As we shall see, there exists a multitude of competing definitions of Internet governance, and a similarly vast range of actors. Moreover, the issues at stake are so broad and varied that it is difficult to discuss them in any systematic manner, especially in a brief and general primer such as this one.

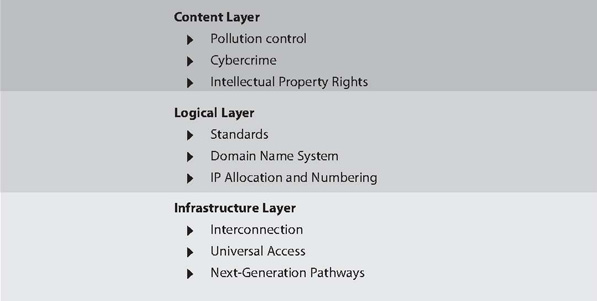

Section I, therefore, attempts to provide some definitions, and offers an analytical scheme by which to conceptualize the topic at hand. Internet governance, it suggests, can be understood through a metaphor of “layers” – a division of issues and actors into three broad categories, each of which corresponds to a different facet of the network. As the text explains, there exist many possible layers. This primer chooses to divide the network into three layers: infrastructure, logical, and content.

Section II addresses some of the specific issues at stake in Internet governance. It also discusses some of the actors – the bodies, institutions and fora – involved in these issues. In order to provide a certain amount of order to the crowded field of issues and actors, the discussion is organized by the previously mentioned layers.

Section III discusses an issue of particular relevance to readers in the Asia-Pacific region: the interaction of Internet governance and development. It attempts to show how governance decisions can have social and economic ramifications, and it suggests some steps that can be taken to enhance developing country participation in Internet governance.

Section IV returns to the broader picture. It explains a number of concepts, and evaluates some models for governance. Finally, Section V, the Conclusion, offers some best practices, and considers the future of Internet governance.

Background and Key Concepts

What is “governance”?

The term governance often gives rise to confusion because it is (erroneously) assumed that it must refer solely to acts or duties of the government. Of course, governments do play an important role in many kinds of governance. In fact, however, the concept is far broader and extends beyond the State. For example, we have seen increasing reference recently to the notion of “corporate governance”, a process that involves oversight both by the State and by a host of non-State bodies, including corporations themselves.

Don McLean points out that the word governance derives from the Latin word “gubernare”, which refers to the action of steering a ship [2]. This etymology suggests a broader definition for governance. One important implication of this broader view is that governance includes multiple tools and mechanisms. Traditional law and policy are certainly among those mechanisms. However, as we shall see throughout this primer, governance can take place through many other channels. Technology, social norms, decision-making procedures, and institutional design are all as important in governance as law or policy.

What is “Internet governance”?

This broader view of governance is particularly important when it comes to discussions of Internet governance. In recent years, the notion of Internet governance has become hotly contested, a subject of political and ideological debate. Many divisions can be identified:

- Technical or holistic? Some people feel that Internet governance is a purely technical matter, best left to programmers and engineers; others argue for a more holistic approach that would take account of the social, legal and economic consequences of technical decisions.

- What is the place of governments? Another division is between those who would give greater (or even sole) authority to national governments, and those who would either include a wider range of actors (including civil society and private sector) or eliminate government altogether. The traditional role of national governments is also challenged by the global nature of the Internet and the resulting importance of supra-national entities. In recent years, questions regarding participation by governments in these entities have become more frequent, with some arguing that the relevance of governments has diminished, and others suggesting a need for greater government participation.

- Evolutionary or revolutionary? A further split can be identified between those who believe existing institutions and laws can be modified to manage the Internet (the “evolutionary” approach), and those who believe that an altogether new system is required (the “revolutionary” approach).

Leaving aside the merits of these various positions, the disagreement itself suggests a certain conceptual confusion. It shows that despite over a decade of debate and discussion, Internet governance remains a work in progress, a concept in search of definition. Still, this primer begins from the premise that certain principles can be established to lay down a working definition of Internet governance.

It was precisely in search of such a working definition that, as part of the United Nations-initiated WSIS, a Working Group was established in 2004 and asked to develop a definition of Internet governance. In its report, issued in June 2005, WGIG proposed the following definition:

- Internet governance is the development and application by Governments, the private sector and civil society, in their respective roles, of shared principles, norms, rules, decision-making procedures, and programmes that shape the evolution and use of the Internet [3].

For the purposes of this primer, it is the broad and holistic view indicated by this definition that will be used to discuss Internet governance. Two points in particular stand out and will recur in what follows. First, Internet governance includes a wider variety of actors than just governments; actors from the private sector and civil society are also important stakeholders. Second, Internet governance refers to more than just Internet domain name and address management or technical decision-making. Indeed, the report of the WGIG goes on to make it clear that Internet governance “also includes other significant public policy issues, such as critical Internet resources, the security and safety of the Internet, and developmental aspects and issues” [4]. These and a variety of other issues will be considered throughout this primer.

What are “layers” and how are they relevant to Internet governance?

One way to conceptualize this more holistic approach is with reference to “layers” of governance. This method was originally proposed by law professor Yochai Benkler, who argued that modern communications networks should be understood as a series of “layers” rather than as an assorted bouquet of different technologies. Benkler lists three such layers: a “physical infrastructure” layer, through which information travels; a “code” or “logical” layer that controls the infrastructure; and a “content” layer, which contains the information that runs through the network [5].

Today, it has become fairly common to conceptualize the Internet in this fashion. Some would change the names of the layers, and others would include additional layers [6]. The important point, however, is not which specific layers we choose, but the more general point that the Internet can be broken up into discrete analytical categories; and that, consequently, Internet governance itself takes place on multiple levels (or “layers”). In taking a holistic approach to governance, therefore, it is critical that we consider multiple layers.

In this primer, we focus on the three original layers mentioned by Benkler: infrastructure, logical, and content. Each of these layers, displayed in Figure 1, is discussed in greater detail in Section II. That section will also discuss the range of actors involved in governance at each layer (see also Appendix 1).

Figure 1: Internet Governance Issues by Layer

What is ICT and what is its relevance to the Internet?

ICT is an acronym that, unpacked, means “Information and Communications Technology”. As defined on Wikipedia,

- ICT is the technology required for information processing. In particular the use of electronic computers and computer software to convert, store, protect, process, transmit, and retrieve information from anywhere, anytime [7].

The use of ICT as a general term is sometimes criticized for a lack of precision, but it is becoming increasingly common given the growing reality of digital convergence. Convergence refers to the phenomenon by which different forms of digital content (voice, data, rich media like movies and music) can now not only travel along the same physical infrastructure but also be managed and manipulated together by the same systems. Convergence will be covered more fully in Section IV; what is important to understand here is that, in discussing the Internet, we are in fact discussing several underlying technologies and means of access. This is particularly relevant at the infrastructure layer, where a variety of technologies are deployed. Indeed, the Internet is accessed through a range of infrastructures including traditional telecommunications, cable, satellite, and various wireless methods. Internet governance will therefore have an impact on all these underlying technologies.

Why is the Internet difficult to govern?

The conceptual confusion over the meaning of Internet governance also stems from the fact that there exists no single central authority or mechanism – no traditional form of “governance” – with responsibility for all aspects of the network. This lack of a single authority is, in part, due to historical reasons but also due to the network’s technical architecture, which makes it very difficult to exert control. Unlike traditional networks (a telephone network or an early office LAN, for example), the Internet is not reliant upon a central server. Instead, the Internet is a network of autonomous networks, and control rests with the various distributed facilities that, together, make up the collaborative resource referred to as the Internet. For this reason, the Internet is said to be empowered at the edges (i.e., at the individual facilities); it is also sometimes defined as an end-to-end (e2e) network [8].

The e2e nature of the network is largely a result of its technical design, and particularly of its packet-based data transfer. Using the TCP/IP (Transmission Control Protocol/Internet Protocol) suite, messages on the Internet are broken up into individual packets of data; the network is generally neutral with regard to these packets, and simply routes them using the most efficient path available, without regard to content or origin. This means that intelligence on the Internet rests at the edges: the power to innovate, to create new applications and services or types of content, rests with individual users. The network is also said to be “dumb” with regard to the content that it carries; as long as data packets conform to the basic TCP/IP protocols, the network simply routes them along, without discrimination or control.
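The packet-based, content-neutral forwarding described above can be illustrated with a toy model. This is a minimal sketch under stated assumptions, not TCP/IP itself: the `Packet` class, the `packetize`, `forward` and `reassemble` functions, the 4-byte packet size and the routing table are all hypothetical simplifications. Real IP headers carry numeric addresses and many more fields, and ordering is restored by TCP running on the end hosts. What the sketch does show is the division of labour: the router looks only at the destination, while splitting and reassembly happen at the edges.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Packet:
    src: str        # sender address (a hypothetical label, not a real IP address)
    dst: str        # destination address
    seq: int        # position of this chunk within the original message
    payload: bytes  # the data itself, which the router below never reads


def packetize(message: bytes, src: str, dst: str, size: int = 4) -> list[Packet]:
    """Edge host: split a message into fixed-size chunks, each with its own header."""
    return [
        Packet(src, dst, seq, message[offset:offset + size])
        for seq, offset in enumerate(range(0, len(message), size))
    ]


def forward(packet: Packet, routing_table: dict[str, str]) -> str:
    """A 'dumb' router: pick the next hop from the destination address alone.

    The payload is never inspected, so an email, a voice fragment, or spam
    are all handled identically.
    """
    return routing_table[packet.dst]


def reassemble(packets: list[Packet]) -> bytes:
    """Receiving edge host: restore the original order by sequence number."""
    return b"".join(p.payload for p in sorted(packets, key=lambda p: p.seq))


if __name__ == "__main__":
    packets = packetize(b"hello, edge!", src="host-a", dst="host-b")
    table = {"host-b": "link-2"}  # hypothetical routing table
    print({forward(p, table) for p in packets})  # {'link-2'}: same next hop for every packet
    random.shuffle(packets)  # packets may arrive out of order
    print(reassemble(packets))  # b'hello, edge!'
```

Keeping `forward` ignorant of the payload is the point of the design: any new application can run over the network without the routers being changed, which is the "intelligence at the edges" the text describes.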

The Internet’s open standards [9] and e2e model are at the root of its tremendous success and power to drive innovation. However, they are also why the Internet is so hard to manage. On a neutral network, there exists no gatekeeper or central authority to verify the contents of a packet. Viruses, spam, pornography, voice packets (from phone calls), and innocuous email messages: all of these are treated equally. In addition, the fact that multiple pathways exist to route packets from one source to another makes it very difficult for any party to block information; the packets will simply find another route.
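The point that packets "will simply find another route" can be sketched as path recomputation over a small graph of interconnected networks. The sketch assumes a hypothetical five-network topology (`LINKS`) and a hypothetical `shortest_path` helper that uses breadth-first search to find a fewest-hop path. Real routing between autonomous networks (for example, BGP) is policy-driven and considerably more complex, so treat this only as an illustration of why blocking one link rarely stops delivery.

```python
from collections import deque


def shortest_path(links, src, dst, blocked=frozenset()):
    """Breadth-first search for a fewest-hop path, ignoring blocked links.

    Returns the list of nodes from src to dst, or None if no path remains.
    """
    graph = {}
    for a, b in links:
        if (a, b) in blocked or (b, a) in blocked:
            continue  # this link has been cut or filtered
        graph.setdefault(a, []).append(b)
        graph.setdefault(b, []).append(a)

    previous = {src: None}  # records how each node was first reached
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            path = []
            while node is not None:  # walk back to the source
                path.append(node)
                node = previous[node]
            return path[::-1]
        for neighbour in graph.get(node, []):
            if neighbour not in previous:
                previous[neighbour] = node
                queue.append(neighbour)
    return None


# Hypothetical topology: networks A to E with redundant interconnections.
LINKS = [("A", "B"), ("B", "E"), ("A", "C"), ("C", "D"), ("D", "E")]

if __name__ == "__main__":
    print(shortest_path(LINKS, "A", "E"))                          # ['A', 'B', 'E']
    print(shortest_path(LINKS, "A", "E", blocked={("B", "E")}))    # ['A', 'C', 'D', 'E']
    print(shortest_path(LINKS, "A", "E", blocked={("B", "E"), ("D", "E")}))  # None
```

Blocking a single link merely shifts traffic onto the longer alternative; only when every path to the destination is cut does delivery fail. This is why blocking at a single point is so hard on a network with redundant interconnections.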

This dilemma – the same technical architecture that allows the Internet to flourish also permits a number of harmful activities – is at the heart of many current debates over Internet governance. While the need for some form of control to limit harmful content is widely recognized, there is also widespread agreement that governance mechanisms should facilitate and not compromise the Internet’s core technical architecture. In particular, solutions must be found that maintain the principle of e2e and the underlying open standards upon which it is based.

What is the history of governance on the Internet?

As noted, there exists no central authority on the Internet. Instead, there exists a multitude of actors, institutions and bodies, exerting control or authority in a variety of ways, and at multiple levels. This does not necessarily imply anarchy, as some may suggest; these participants in general have formal and well-defined roles, and they address specific tasks or responsibilities. Section II contains a more detailed discussion of some of these actors and the issues they address. Here, we identify certain key milestones in Internet governance [10].

Somewhat surprisingly, given the Internet’s eventual distance from the state, the network actually began as a government project. In the 1960s, the US Defense Department sponsored the development of the ARPANET by the Defense Advanced Research Projects Agency (DARPA). ARPANET was a distributed network that was designed to foster communication between research centres. Soon, this network, which remained under the control of the US government, was being used by a wider set of users, particularly in the academic community. In the 1970s, DARPA also developed a mobile packet radio network and a packet satellite network. These were conceptually integrated into an Internet in 1973. In 1983, the operational Internet was launched. The National Science Foundation Network (NSFNET) joined the Internet in 1986, spreading access to the Internet to an international community of users.

The Internet Activities Board was formed in 1984 and was made up of a number of task forces. One of these became the Internet Engineering Task Force (IETF) in 1986. It was created to manage the development of technical standards for the Internet. It represented early seeds of “governance” on the Internet, albeit of a unique kind. IETF governed through a consultative, open and co-operative approach. Decisions were made by consensus, and involved a wide variety of individuals and institutions. This freewheeling and decentralized decision-making process remains in many ways the hallmark of Internet governance; it accounts for a significant amount of resistance to any attempt by national governments to exert control over the network.

The Domain Name System (DNS) was developed in the mid-1980s. It was managed by the Internet Assigned Numbers Authority (IANA) at the University of Southern California’s (USC’s) Information Sciences Institute under US Government contract for many years. The root servers of the DNS were operated voluntarily by 13 different organizations. The root zone file, which defines the top-level domains of the DNS, was distributed by IANA to the root server operators. In 1994, the US Government outsourced the management of the DNS to a private entity, Network Solutions. This led to a considerable degree of dissatisfaction among other members of the Internet community, who feared that the network would become too commercialized. A long process of conflict and deliberation led ultimately to the creation, in 1998, of ICANN, a non-profit corporation charged with managing the Internet’s DNS. ICANN inherited the responsibilities of IANA in the course of its creation. Please refer to Appendix 2 for more information regarding the roles and functions of ICANN and IANA.

The creation of ICANN, in many ways, initiated the latest round of debates over Internet governance. Although created with primarily a technical mandate (i.e., managing the DNS, the allocation of Internet address space, and the recordation of parameters unique to the Internet protocol suite), ICANN quickly became embroiled in a host of controversies. Among other things, critics charge the organization with a lack of democracy and transparency, with being too close to the US Government, and with a perceived exclusion of voices from the developing world [11].

Recently, controversy over the need for and nature of Internet governance has combined with changes in the underlying nature of the network itself to suggest that a new model of governance may be required. These changes include the tremendous growth of the network, and the increasing reliance of our social, economic and even political lives on what WGIG calls a “global facility” [12]. Together, all these events have called into question the relatively informal, consensual and trust-based models of governance that currently exist, and have led to exploration of new or modified governance models.

In 2002, the UN General Assembly took an important step towards such a new model when it called for a global conference, WSIS, that would consider alternatives and ways to increase participation by developing countries. The first WSIS meeting was held in 2003, in Geneva, where representatives adopted a Declaration of Principles and a Plan of Action [13]. A follow-up meeting is scheduled for November 2005 in Tunis.

For the moment, the WSIS process remains incomplete, and its final outcome is not yet clear. Nonetheless, certain issues have risen to the top of the agenda, and are likely to feature prominently in future discussions. These include debates over the respective roles and authority of ICANN, the International Telecommunication Union (ITU) and, especially, national governments. Many developing countries, in particular, would like to see the role of States extended, while others remain wary of granting governments too much control. Questions regarding participation by developing countries and the Internet’s role in fostering human and social development are also on the agenda. We discuss these issues more fully later, in Section III.

Should there be governance on the Internet?

The Internet’s architectural and ideological foundations – open standards, e2e, empowered users, absence of control – have from the start bred a certain libertarian streak that rejects any attempt to exert control, particularly by the government. Since the very success of the network stems from an absence of control, the argument goes, government intervention would only stifle the network.

The most famous expression of this extreme form of libertarianism remains John Perry Barlow’s “Declaration of the Independence of Cyberspace,” quoted in the Introduction. At the start of that manifesto, Barlow declares that:

- Governments of the Industrial World, you weary giants of flesh and steel, I come from Cyberspace, the new home of Mind. On behalf of the future, I ask you of the past to leave us alone. You are not welcome among us. You have no sovereignty where we gather [14].

Today, it is apparent that his vision was somewhat utopian. Nonetheless, many less radical observers continue to believe that the Internet’s success depends on keeping governance (by the State, or by any other authority) to a minimum. Some observers have invoked traditions of the commons, or the public forum, to envision a virtual space where ideas are exchanged freely, without rules, without regulation, and without controls.

These ideas are important to acknowledge because the Internet’s success does, to a significant extent, depend on its free and open culture. Nonetheless, it is also clear that the absence of rules can be as detrimental to this commons as the existence of bad rules; anarchy is as harmful as stifling regulation. The right question is, therefore, not whether there should be governance but rather what constitutes good governance. The goal for any governance mechanism should be to balance rules and freedom, control and anarchy, process and innovation.

Issues and Actors


What are some of the issues involved in Internet governance?

As we have seen, Internet governance encompasses a range of issues and actors, and takes place at many layers. Throughout the network, there exist problems that need solutions, and, more importantly, potential that can be unleashed by better governance. It is not possible here to capture the full range of issues. This section, rather, seeks to provide a sampling. It describes the issues by layers, and it also discusses key actors for each layer. A more extensive description of actors and their responsibilities can be found in Appendix 1; Figure 1 also contains a representation of issues by layer.

Most importantly, this section attempts to make clear the real importance of Internet governance by drawing links between apparently technical decisions and their social, economic or political ramifications. Indeed, an important principle (and difficulty) of Internet governance is that the line between technical and policy decision-making is often blurred. Understanding the “real world” significance of even the most arcane technical decision is essential to understanding that decision and its processes, and to thinking of new ways to structure Internet governance.

What are some of the governance issues at the infrastructure layer?

The infrastructure layer can be considered the foundational layer of the Internet: It includes the copper and optical fibre cables (or “pipes”) and radio waves that carry data around the world and into users’ homes. It is upon this layer that the other two layers (logical and content) are built, and governance of the infrastructure layer is therefore critical to maintaining the seamlessness and viability of the entire network. Given the infrastructure layer’s importance, it makes sense that a wide range of issues requiring governance can be located at this level. Three, in particular, merit further discussion.

Interconnection

The Internet is a “network of networks”; it is composed of a multitude of smaller networks that must connect together (“interconnect”) in order for the global network to function seamlessly. In traditional telecommunications networks, interconnection is clearly regulated at the national level by State authorities, and at the international level (i.e., between national networks) by well-defined principles and agreements, some of which are supervised by the ITU. Interconnection between Internet networks, however, is not clearly governed by any entity, rules or laws. In recent years, this ambiguity has become increasingly problematic: it has contributed to high access costs for remote and developing countries, and it calls for some kind of governance solution. Indeed, in its final report, the WGIG identified the ambiguity and uneven distribution of international interconnection costs as one of the key issues requiring a governance solution [15].

On the Internet, access providers must interconnect with each other across international, national or local boundaries. Although the classification is not formalized, access providers are commonly divided into three categories in this context: Tier 1 (large international backbone operators); Tier 2 (national or regional backbone operators); and Tier 3 (local ISPs). In most countries, there is some regulation of interconnection at the national and local levels (for Tier 2 and Tier 3 ISPs), which may dictate rates and other terms between these providers. Internationally, however, there is no regulation, and the terms of any interconnection agreement are generally determined through negotiation and bargaining. In theory, this allows the market to determine interconnection efficiently. In practice, however, unequal market positions, and in particular the powerful position of Tier 1 providers, mean that the larger providers are often able to dictate terms to the smaller ones, which in turn must bear the majority of the costs of connection.

This situation is particularly problematic for developing countries, which generally lack ownership of Tier 1 infrastructure and are often in a poor position to negotiate favourable access rates. By some accounts, ISPs in the Asia-Pacific region paid companies in the United States US$ 5 billion in “reverse subsidies” in 2000; in 2002, it was estimated that African ISPs were paying US$ 500 million a year. One commentator, writing on access in Africa, argues that “the existence of these reverse subsidies is the single largest factor contributing to high bandwidth costs” [16].

Not everyone would agree with that statement, however, and high international access costs are by no means the only reason for high local access costs. A related (indeed, in a sense, the underlying) problem is the general lack of good local content in many developing countries. It is this shortage of local content, stored on local servers, that drives high international connectivity costs, as users are forced to access sites and information stored outside the country. Moreover, as we shall discuss further later, inadequate competition policies and underdeveloped national markets also play a significant role in raising access costs for end-users. Increasing connectivity within regions has eased some of the concerns about the cost of connecting to major backbones, as has the falling absolute cost of undersea optical cable services.

Actors Involved

This opaque setup – in effect, the absence of interconnection governance at the Tier 1 level – has created a certain amount of dissatisfaction, and some initial moves towards a governance solution. One of the first bodies to raise the issue of interconnection pricing was the Asia-Pacific Economic Cooperation Telecommunications and Information Working Group (APEC Tel), which, in 1998, questioned the existing system (or lack thereof) of International Charging Arrangements for Internet Services (ICAIS). Australia, whose ISPs pay very high international access charges due to remoteness and a relative lack of competition, has also expressed unhappiness with the current arrangement.

Regional groups such as APEC Tel have played an important role in putting today’s shortcomings on the agenda. However, the main body actually dealing with the issue is ITU, where a study group has been discussing governance mechanisms that could alleviate the current situation. Three main proposals appear to be on the table. The chief disagreement is between larger industry players, who would prefer a market-driven solution, and smaller industry players and developing countries, who would prefer a system that resembles the settlement system currently used in international telecommunications. Under that system, the traffic carried by operators is measured in call-minutes and reconciled using previously agreed-upon rates. Inter-provider Internet connections, however, have no equivalent of a “call minute,” since all traffic flows as packets that are not identified with specific calls. While packets can easily be counted, it is not always clear which party, the sender or the receiver, should be charged for any particular packet, particularly when the source and destination of those packets may lie outside the networks of the two providers exchanging the traffic [17].
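The difference between the two accounting worlds can be illustrated with a minimal Python sketch. It assumes two hypothetical operators, A and B, a single hypothetical per-unit rate applied in both directions, and it treats the telephony rule (the originating operator pays) as just one possible convention. The names `Traffic`, `net_settlement` and `net_packet_settlement` are illustrative only and are not drawn from any ITU proposal.

```python
from dataclasses import dataclass


@dataclass
class Traffic:
    """Hypothetical traffic counts exchanged between operators A and B."""
    a_to_b: float  # units (call-minutes or packets) sent from A to B
    b_to_a: float  # units sent from B to A


def net_settlement(traffic: Traffic, rate_per_unit: float) -> float:
    """Net amount A owes B under a telephony-style settlement.

    Assumes one agreed rate in both directions and that the originating
    operator pays for the traffic it hands over. Negative means B owes A.
    """
    return (traffic.a_to_b - traffic.b_to_a) * rate_per_unit


def net_packet_settlement(traffic: Traffic, rate_per_unit: float, payer: str) -> float:
    """Same counts, but the payer convention must be chosen explicitly.

    'sender' reproduces the telephony rule; 'receiver' flips who pays.
    Neither convention is settled for Internet traffic.
    """
    if payer not in ("sender", "receiver"):
        raise ValueError("payer must be 'sender' or 'receiver'")
    amount = net_settlement(traffic, rate_per_unit)
    return amount if payer == "sender" else -amount


# Telephony: each call-minute has a clear originator.
calls = Traffic(a_to_b=1_000_000, b_to_a=400_000)
print(net_settlement(calls, rate_per_unit=0.10))  # A pays B for the 600,000-minute imbalance

# Internet: identical packet counts yield opposite obligations under the two conventions.
packets = Traffic(a_to_b=1_000_000, b_to_a=400_000)
print(net_packet_settlement(packets, 0.10, "sender"), net_packet_settlement(packets, 0.10, "receiver"))
```

The second function makes the text's point concrete: counting packets is easy, but deciding who should pay for them is a policy choice that the arithmetic cannot make.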

An added difficulty is that the settlement system relies on negotiated and often protracted bilateral agreements, whereas the Internet seems to require a global, multilateral solution. Identifying the appropriate global forum, however, has proven difficult: the issue does not fall under ICANN’s remit, and progress at the ITU has been slow. Some have suggested that interconnection charges should be considered a trade-related matter that could be taken up under WTO. For the moment, the lack of a global forum to deal with this issue represents perhaps the most serious obstacle to its resolution.

As noted earlier, the lack of an international settlement regime is not the only reason for high access costs. Poor (or absent) competition policies within countries often contribute to the problem, leading to inefficient markets and inflated costs for ISPs. A holistic approach to interconnection and access costs would therefore address both the international and the local dimensions of the problem. Some countries, for example, have taken positive steps in this regard by de-licensing ISPs or drastically reducing their entry barriers. We discuss these issues further in Section IV.

Universal Access

Another key area of governance concerns access, and in particular the notion of universal access. This notion is somewhat hard to define; in fact, one of the important tasks for governance would be to choose among several competing definitions [18]. Outstanding issues include whether universal access should cover:

- access for every citizen on an individual or household basis, or for communities (e.g., villages and small towns) to ensure that all citizens are within reach of an access point;

- access only to basic telephony (i.e., narrow-band), or access also to value-added services like the Internet and broadband; and

- access only to infrastructure, or also to content, services and applications.

In addition, any adequate definition of universal access must also address the following questions:

- How to define “universal”? Universal access is frequently taken to mean access across geographic areas, but it could equally refer to access across all gender, income, or age groups. In addition, the term is frequently used almost synonymously with the digital divide, to refer to the need for equitable access between rich and poor countries.

- Should universal access include support services? Access to content or infrastructure is not very useful if users cannot make use of it because they are illiterate or uneducated. For this reason, it is sometimes argued that universal access policies must include a range of socio-economic support services.

Each of these goals, or a combination of them, is widely held to be desirable. However, the realization of universal access is complicated by the fact that there usually exist significant economic disincentives to connect traditionally underserved populations. For example, network providers argue that connecting geographically remote customers is financially unremunerative, and that the required investments to do so would make their businesses unsustainable. For this reason, one of the key governance decisions that must be made in any attempt to provide universal access is whether that goal should be left to market forces alone, or whether the State should provide some form of financial support to providers.

When (as is frequently the case) States decide to provide some form of public subsidy, then it is essential to determine the appropriate funding mechanism. Universal service funds, which allocate money to providers that connect underserved areas, are one possible mechanism. A more recent innovation has been the use of least cost-subsidy auctions, in which providers bid for a contract to connect underserved areas; the provider requiring the lowest subsidy is awarded the contract.
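The award rule of a least cost-subsidy auction can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: the bidders and amounts are hypothetical, every bid is assumed to satisfy the service requirements, and ties go to the earlier bid. Any real auction design would involve further rules that are not modelled here.

```python
from typing import NamedTuple


class Bid(NamedTuple):
    provider: str             # hypothetical bidder name
    subsidy_requested: float  # subsidy the bidder needs to serve the area


def award_least_subsidy(bids: list[Bid]) -> Bid:
    """Return the winning bid: the one requesting the lowest subsidy.

    min() returns the first of several equal minima, so ties go to the
    bid listed earliest (an assumption made only for this sketch).
    """
    if not bids:
        raise ValueError("no bids received")
    return min(bids, key=lambda bid: bid.subsidy_requested)


bids = [
    Bid("Operator A", 2_500_000),
    Bid("Operator B", 1_800_000),
    Bid("Operator C", 2_100_000),
]
winner = award_least_subsidy(bids)
print(winner.provider, winner.subsidy_requested)  # Operator B 1800000
```

The design choice worth noting is that the State fixes the service obligation and lets bidders compete on price, rather than setting the subsidy level itself.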

In addition to funding, the governance of universal access also encompasses a range of other topics. For instance, definitions of universal access need to be regularly updated to reflect technological developments – recently, some observers have suggested that universal service obligations (traditionally imposed only on fixed-line telecommunications providers) should also be imposed on cellular phone companies, and possibly even on ISPs. Interconnection arrangements, rights-of-way, and licensing policies are other matters that are relevant to universal access. The range of issues suggests the complexity of an adequate governance structure – but it also suggests the importance of such a structure.

Actors Involved

Since traditional universal access regulation involves fixed-line telephony, national and international telecommunications regulators are usually the most actively involved in governance for universal access. At the international level, the ITU-D, the development wing of ITU, plays an important role in developing policies, supporting various small-scale experiments in countries around the world, and providing training and capacity building to its member states.

Most of the policies concerning universal access, however, are set within individual countries, by national governments and domestic regulatory agencies. In India, for example, the Department of Telecommunications (DOT), in consultation with the Telecommunications Regulatory Authority of India (TRAI), administers a universal service fund that disburses subsidies to operators serving rural areas. The Nepalese government has extended telecommunication access in its eastern region through least cost-subsidy auctions that award licenses to the private operators that bid for the lowest government subsidy. And in Hong Kong, universal service costs are estimated and shared among telecommunications operators based on the volume of international traffic, measured in minutes, that each operator carries.
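A cost-sharing rule of the kind described for Hong Kong can be sketched as a simple pro-rata split. The sketch assumes that the shared cost is divided in direct proportion to each operator's international minutes; the operator names and figures are hypothetical, and the actual methodology may involve additional factors.

```python
def share_universal_service_cost(
    total_cost: float, international_minutes: dict[str, float]
) -> dict[str, float]:
    """Split total_cost among operators in proportion to their international minutes.

    A pro-rata rule assumed for illustration; not an official formula.
    """
    total_minutes = sum(international_minutes.values())
    if total_minutes <= 0:
        raise ValueError("need a positive total of international minutes")
    return {
        operator: total_cost * minutes / total_minutes
        for operator, minutes in international_minutes.items()
    }


shares = share_universal_service_cost(
    100.0, {"Operator X": 600, "Operator Y": 300, "Operator Z": 100}
)
print(shares)  # {'Operator X': 60.0, 'Operator Y': 30.0, 'Operator Z': 10.0}
```

Under such a rule, an operator that carries more international traffic bears a larger share of the universal service burden.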

In addition to these traditional telecommunications authorities, international institutions and aid groups have begun taking an increasing interest in the subject of universal access. Both the World Bank (WB) and the United Nations (UN) devote significant resources to the issue, as do several nongovernmental organizations (NGOs). WB, for instance, is providing US$ 53 million to fund the eSri Lanka project, which aims to bridge the access gap between the western province and the northern, eastern and southern provinces. The project will subsidize the building of a fibre-optic backbone and rural telecentres. Similarly, the International Development Research Centre (IDRC) has funded several projects that consider optimal approaches for achieving rural connectivity (e.g., through the use of Wireless Fidelity (Wi-Fi), or by setting up village telecentres).

One important venue where governance (and other) issues related to universal access are being discussed is WSIS. At its inception, the representatives of WSIS declared their

- common desire and commitment to build a people-centred, inclusive and development-oriented Information Society, where everyone can create, access, utilize and share information and knowledge, enabling individuals, communities and peoples to achieve their full potential in promoting their sustainable development and improving their quality of life, premised on the purposes ... [19]

WSIS is likely to increase the interest of international aid agencies in the subject of universal access. In the future, as convergence becomes an increasing reality, multilateral bodies like the UN, ITU and others are likely to become more involved in developing appropriate governance mechanisms.

Next-Generation Pathways

Technology evolves at a rapid pace, and this evolution often brings great benefits to the Internet. However, the process of adopting new technologies can also be complicated, and is a further area requiring governance. Two issues, in particular, can benefit from governance.

The first concerns decisions on when to deploy new technologies. Many members of the technical community (and others) would argue that such decisions should simply be left to consumer choice. But governments often feel otherwise. For example, some governments have resisted the use of IP technology for phone calls, fearing the resulting loss of revenue to incumbent telecom operators. Likewise, many governments have yet to de-license the necessary spectrum for Wi-Fi networks, often citing security concerns. States may also choose to prioritize some technologies (e.g., narrowband connectivity) over others (e.g., more expensive broadband) in an effort to pursue social or developmental goals.

Such decisions are often met with scepticism, but the issue is not whether governments are right or wrong in resisting certain next-generation technologies. What matters is to understand that the decision on introducing new pathways is a governance decision: it is the product of active management by the State, and, ideally, by other involved stakeholders. Thus, a comprehensive approach to Internet governance would include mechanisms and steps to introduce next-generation pathways in a smooth and effective manner.

Next-generation technologies also require governance to ensure that they are deployed in a manner that is harmonious with pre-existing (or “legacy”) systems. Such coordination is essential at every layer of the network, but it is especially critical at the infrastructure layer. If new means of transmitting information cannot communicate with older systems, the very purpose of deploying them is defeated. For example, much attention has been given in recent years to the promise of broadband wireless technologies like third generation (3G – for the cellular network) and Worldwide Interoperability for Microwave Access (WiMax – a wireless backbone technology that could potentially extend the reach of wireless Internet connectivity). Such network technologies are useful only to the extent that they are compatible with existing communications networks. As with the decision on when to introduce new pathways, then, governance solutions are also required to decide how to introduce them, and in particular to ensure that standards and other technical specifications are compatible with existing networks [20].

Actors Involved

As with the other topics discussed here, the governance of next-generation pathways is a broad-ranging process that involves a range of stakeholders. National governments are of course important, and play a determining role in deciding which technologies are adopted. This role, however, is often supplemented by advice from other groups. For example, ITU and other multilateral organizations play a key role in recommending the deployment of new technologies to national governments. In addition, industry groups sometimes lobby for certain technologies over others. To balance their influence, it is also important for governments to take into account the views of consumer groups and civil society.

Finally, standards bodies like IETF, the International Organization for Standardization (ISO) and others have an essential role to play, particularly in ensuring compatibility between new and legacy systems. In the case of standards based on open source, it is also possible for consumer and user groups to have a greater say over which technologies are adopted, and how they can promote social and other values.

What are some of the governance issues at the logical layer?

The logical layer sits on top of the infrastructure layer. Sometimes called the “code” layer, it consists of the software programs and protocols that “drive” the infrastructure, and that provide an interface to the user. At this layer, too, there exist various ways in which governance can address problems, enhance existing processes, and help to ensure that the Internet achieves its full potential.

Standards

Standards are among the most important issues addressed by Internet governance at any layer. As noted, the Internet is only able to function seamlessly over different networks, operating systems, browsers and devices because it sits on a bedrock of commonly agreed-upon technical standards. TCP/IP, discussed earlier, is perhaps the most important of these standards. Two other key standards are the HyperText Mark-up Language (HTML) and the HyperText Transfer Protocol (HTTP), developed by Tim Berners-Lee and his colleagues at CERN in Geneva to standardize the presentation and transport of web pages. Much as TCP/IP is the basis for the growth of the Internet’s infrastructure, so HTTP and HTML are the basis for the phenomenal growth of the World Wide Web. Other critical standards include the Extensible Mark-up Language (XML), a standard for structuring and exchanging data on the Web, and IPv6 (Internet Protocol, version 6, the successor to the current IPv4), used in Internet-addressing systems (see discussion below).

The centrality of standards to the Internet means that discussions over the best mechanism to manage and implement them are as old as the network itself. Indeed, long before the current governance debate, standards were already the product of de facto governance, primarily by consensus-driven technical bodies. This need for governance arises because standards rely for their effectiveness on universal acceptance, which, in turn, relies on groups or bodies to decide upon and publish standard specifications. Without such control, the Internet would simply fragment into a Babel of competing technical specifications. Indeed, such a spectre haunts the future of HTML and XML, which over time have become increasingly fragmented due to competing versions and add-ons by private companies.

Another important issue concerns what some perceive as the gradual “privatization” of standards. While many standards on the Internet have traditionally been “open” (in the sense that their specifications have been available to all, often without a fee), there have been some indications of a move towards fee-based standards. For example, in 2001, the World Wide Web Consortium (W3C), a critical Internet standards body, raised the ire of the Internet community when it proposed endorsing patented standards for which users would have to make royalty payments; the proposal was later withdrawn, but it raised significant concerns that such moves could reduce the openness of the network.

Finally, standards also require governance so that they can be updated to accommodate new technologies or needs of the Internet community. For example, ongoing network security concerns (driven by the rise of viruses, spam, and other forms of unwanted content) have prompted some to call for new specifications for TCP/IP that would include more security mechanisms. Likewise, some feel that the spread of broadband, and the rise of applications relying on voice and rich media like movies, require the introduction of Quality of Service (QOS) standards to prioritize certain packets over others.

Currently, for example, the network does not differentiate between an email and a phone call, which is why Internet telephony remains somewhat unreliable (voice packets can be delayed or dropped along the way). However, the introduction of QOS standards, which could discriminate between packets, could mean a departure from the Internet’s cherished e2e architecture. This difficult dilemma, balancing the competing needs of openness with flexibility and manageability, illustrates the importance of governance mechanisms that are able to reconcile competing values and goals.
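The contrast between today's best-effort forwarding and a QOS scheme can be illustrated with a toy scheduler in Python. This is not how any particular router implements QOS; the two traffic classes and their priority values are hypothetical. Note that the QOS version has to inspect what kind of packet it is handling, which is precisely the departure from e2e neutrality described above.

```python
import heapq
from itertools import count

# Hypothetical traffic classes: a lower number is forwarded first.
PRIORITY = {"voice": 0, "email": 1}


def best_effort_order(packets: list[tuple[str, int]]) -> list[tuple[str, int]]:
    """Best effort: forward packets strictly in arrival order, whatever they carry."""
    return list(packets)


def qos_order(packets: list[tuple[str, int]]) -> list[tuple[str, int]]:
    """Toy QOS: voice packets jump ahead of email; arrival order is kept within a class."""
    heap: list[tuple[int, int, tuple[str, int]]] = []
    arrival = count()  # tie-breaker so equal-priority packets stay in arrival order
    for packet in packets:
        kind = packet[0]  # the scheduler must look at the packet's type
        heapq.heappush(heap, (PRIORITY[kind], next(arrival), packet))
    return [heapq.heappop(heap)[2] for _ in range(len(heap))]


# (kind, sequence number) in the order the packets arrive
arrivals = [("email", 1), ("voice", 2), ("email", 3), ("voice", 4)]
print(best_effort_order(arrivals))  # [('email', 1), ('voice', 2), ('email', 3), ('voice', 4)]
print(qos_order(arrivals))          # [('voice', 2), ('voice', 4), ('email', 1), ('email', 3)]
```

Under congestion, the best-effort order can leave a voice packet waiting behind email; the prioritized order avoids that, at the cost of the network treating packets differently according to their content.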

Actors Involved

Currently, Internet standards are determined in various places, including international multi-sectoral bodies, regional bodies, industry fora and consortiums, and professional organizations. This wide range of venues is emblematic not just of the variety of actors involved in Internet governance (broadly defined), but also of the range of issues and interests that must be accommodated. Industry representatives, for example, are often far more concerned with speed and efficiency in decision-making, while civil society representatives would sacrifice a certain amount of speed in the name of greater consultation and deliberation.

Amidst this variety of actors, three in particular are critical to the development of core Internet standards:

The Internet Engineering Task Force (IETF): IETF, a large body with open participation to all individuals and groups, is the primary standards body for the Internet. Through its various working groups, it sets standards for Internet security, packeting, and routing, among other issues. Probably the most important standards that fall under IETF are the TCP and IP protocols.

In addition to being one of the most important groups, IETF has also been a quintessential Internet decision-making body. Its open participation, consultative decision-making processes, and relative lack of organizational hierarchy have made it a model for an inclusive, yet highly effective, system of governance that is unique to the online world. Until recently, most of IETF’s work took place informally, primarily via face-to-face meetings, mailing lists and other virtual tools. However, as the organization’s membership (and the Internet itself) grew in size and complexity, certain administrative changes were introduced to streamline (and, to a certain extent, centralize) decision-making. The Internet Activities Board (now the Internet Architecture Board, or IAB) made standards decisions until 1992, when this task became the responsibility of the Internet Engineering Steering Group (IESG). Please refer to Appendix 2, ‘Internet Standards’, for more information on IETF and other standards bodies.

International Telecommunication Union-Telecommunication Standardization (ITU-T): ITU-T is the standard-setting wing of the ITU. It operates through study groups whose recommendations must subsequently be approved by member states. Because it is one of the oldest standards bodies, and part of a UN agency with an intergovernmental membership, ITU-T’s standard recommendations have traditionally carried considerable weight. However, during the 1990s, with the rise of the Internet, ITU-T (at that time known as the International Telegraph and Telephone Consultative Committee, or CCITT) found its relevance called into question by the growing importance of new bodies like IETF. Since then, ITU-T has substantially revamped itself and is today a key player in the standards-setting community.

ITU-T and IETF do attempt to work together, but they have a somewhat contentious relationship. While IETF represents the open and free-wheeling culture of the Internet, ITU-T has evolved from the more formal culture of telecommunications. IETF consists primarily of technical experts and practitioners, most of them from the developed world; ITU-T is made up of national governments, and as such can claim the membership (and the resulting legitimacy) of many developing countries. Their disparate cultures, yet similar importance, highlight the necessity (and the challenge) of different groups working together to ensure successful governance.

World Wide Web Consortium (W3C): W3C develops standards and protocols that exist on top of core Internet standards. It was created in 1994 as a body that would enhance the World Wide Web by developing new protocols while at the same time ensuring interoperability. Among other issues, it has developed standards to promote privacy (the P3P platform), and a protocol that allows users to filter content (PICS). W3C also works on standards to facilitate access for disabled people.

The consortium is headed by Tim Berners-Lee, sometimes referred to as the “inventor of the World Wide Web” for his pioneering work in developing key standards like HTML and HTTP. It is a fee-based organization, with a significant portion of its membership made up of industry representatives.

Management of the Domain Name System

The coordination and management of the DNS is another key area requiring governance at the logical layer. In recent years, the DNS has been the focus of some of the most heated (and most interesting) debates over governance, largely due to the central role played by ICANN [21].

Understanding the DNS

In order to understand some of the governance issues surrounding the DNS, it is first necessary to understand what the DNS is and how it functions. Operating as a lookup system, the DNS allows users to identify network services such as World Wide Web and email servers by memorable alphanumeric names. It maps names (e.g., www.undp.org) to numerical IP addresses, which in the current IPv4 format are written as four numbers separated by periods (e.g., 165.65.35.38).
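The name-to-number mapping can be sketched in Python with a hard-coded table standing in for the DNS. This is only a toy: the real DNS is a distributed hierarchy of servers, and the table below simply reuses the example pairing from the text, which may not match what the name resolves to today. The standard `ipaddress` module is used to show that a dotted IPv4 address is just a 32-bit number written in a human-friendly form.

```python
import ipaddress

# Toy stand-in for the DNS; the single entry reuses the example from the text.
TOY_ZONE = {"www.undp.org": "165.65.35.38"}


def resolve(name: str) -> ipaddress.IPv4Address:
    """Look up a name in the toy table (case-insensitive, trailing dot ignored)."""
    key = name.lower().rstrip(".")
    if key not in TOY_ZONE:
        raise LookupError(f"{name!r} is not in the toy zone")
    return ipaddress.IPv4Address(TOY_ZONE[key])


address = resolve("www.undp.org")
print(address)       # 165.65.35.38
print(int(address))  # 2772509478, the same address as one 32-bit number
```

On a networked machine, the standard-library call `socket.gethostbyname("www.undp.org")` asks the system's real resolver instead; its answer depends on current DNS data.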

Until 2000, the Internet had eight top-level domain names: .arpa, .com, .net, .org, .int, .edu, .gov and .mil. These domains are called generic top-level domains, or gTLDs. As the Internet grew, there were increasing calls for more top-level domain names to be added, and, in 2000, ICANN announced seven new gTLDs: .aero, .biz, .coop, .info, .museum, .name, and .pro. Another series of new gTLDs has also been announced recently, although not all of them are operational yet.

In addition to these gTLDs, the DNS also includes another set of top-level domains known as country code top-level domains, or ccTLDs. These were created to represent individual countries, and include two-letter codes such as .au (Australia), .fr (France), .gh (Ghana), and .in (India).
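Because the two families of top-level domains follow different conventions (named generic domains versus two-letter country codes), a name's category can usually be read off its last label. The following is a minimal sketch, assuming only the gTLDs listed above; later additions are deliberately omitted, and treating every two-letter label as a ccTLD is a simplification (a real check would consult the list of assigned country codes). The function name is illustrative.

```python
# Generic TLDs named in the text; later additions are intentionally omitted.
LEGACY_GTLDS = {"arpa", "com", "net", "org", "int", "edu", "gov", "mil"}
GTLDS_2000 = {"aero", "biz", "coop", "info", "museum", "name", "pro"}


def classify_tld(name: str) -> str:
    """Return 'gTLD', 'ccTLD' or 'unknown' for the last label of a domain name."""
    tld = name.rstrip(".").rsplit(".", 1)[-1].lower()
    if tld in LEGACY_GTLDS or tld in GTLDS_2000:
        return "gTLD"
    if len(tld) == 2 and tld.isascii() and tld.isalpha():
        # Simplification: any two-letter label is assumed to be a country code.
        return "ccTLD"
    return "unknown"


for name in ["www.undp.org", "example.gh", "example.museum", "example.xyz"]:
    print(name, classify_tld(name))
# www.undp.org gTLD
# example.gh ccTLD
# example.museum gTLD
# example.xyz unknown
```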

Actors Involved

ICANN, a non-profit corporation formed in 1998 under the auspices of the US government to manage the DNS, is the main body responsible for governance of the DNS. At its founding, ICANN was held up as a new model for governance on the Internet: one that would be international and democratic, and would include a wide variety of stakeholders from all sectors.

Almost from the start, however, ICANN has proven to be controversial, and its many shortcomings (perceived or real) have led some observers to conclude that a more traditional system of governance, modeled after multilateral organizations such as the ITU or the UN, would be more appropriate for Internet governance. Indeed, although not always explicitly mentioned, one sub-text of the WSIS process is, precisely, such a rethink of governance models. Many developing countries, in particular, would like to see a greater role for national governments, and a distancing of core DNS functions from the US government, under whose aegis ICANN still functions.

ICANN’s missteps and mishaps cannot be fully documented here. They include a short-lived attempt to foster online democracy through direct elections to ICANN’s board (the effort was troubled from the start and the elections no longer take place). They also include a variety of decisions that have led many to question the organization’s legitimacy, accountability and representation. To be fair to ICANN, its many troubles are probably indications not so much of a single organization’s shortcomings as of the challenges involved in developing new models of governance for the Internet.

ICANN’s troubles also shed light on the difficulty of distinguishing between technical decision-making and policy decisions which have political, social, and economic ramifications. ICANN’s original mandate is clear: technical management of the DNS. Esther Dyson, ICANN’s first chair, has argued that

- ICANN does not “aspire to address” any Internet governance issues; in effect, it governs the plumbing, not the people. It has a very limited mandate to administer certain (largely technical) aspects of the Internet infrastructure in general and the Domain Name System in particular.[22]

Despite such claims, ICANN’s history shows that even the most narrowly defined technical decisions can have important policy ramifications. For example, the decision on which new top-level domain names to create was a highly charged process that appeared to give special status to some industries (e.g., the airline industry, through .aero). In addition, ICANN’s decisions regarding ccTLDs have proven contentious, touching upon issues of national sovereignty and even the digital divide. Indeed, one of the more difficult issues confronting governance of the DNS is the question of how, and by whom, ccTLDs should be managed. Long before ICANN existed, IANA granted management of many ccTLDs to volunteer entities that were neither located in nor related to the countries in question. As a result, some countries (e.g., South Africa) have taken legal action to reclaim their ccTLDs. To be fair, many of these assignments were made at a time when few governments, let alone developing-country governments, had any interest in or awareness of the Internet: the DNS was created in 1984, long before ICANN was established in 1998. ICANN’s relationship with country operators has also sometimes proven difficult because the organization is overseen by the US government.

This has led many country domain operators to create their own regional organizations. For example, European domain operators have formed the Council of European National TLD Registries (CENTR). Although matters have not yet gone that far, such regional entities raise the worrying prospect of Internet balkanization, in which a fragmented DNS is managed by competing entities and the Internet is no longer a global, seamless network. To remedy this problem, ICANN created a supporting organization for ccTLDs, the Country Code Names Supporting Organization (ccNSO). All ccTLD operators, including all the participants in CENTR, have been invited to join, and significant progress has been made toward bringing them in.

IP Allocation and Numbering

As mentioned, IP addresses are written as four numbers (each ranging from 0 to 255) separated by periods; this is simply a representation of the 32-bit number that constitutes an IPv4 address. In fact, every device on the network requires a number, and decisions about how IP addresses and other numbers are assigned are critical to the smooth functioning of the Internet.
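The relationship between the dotted notation and the underlying 32-bit number can be shown directly: each of the four numbers occupies one byte (8 bits), most significant first. This Python sketch packs and unpacks the example address from the text by hand and cross-checks the result against the standard library's `ipaddress` module; the helper function names are illustrative.

```python
import ipaddress


def dotted_quad_to_int(addr: str) -> int:
    """Pack four 0-255 values into one 32-bit integer, most significant byte first."""
    parts = [int(p) for p in addr.split(".")]
    if len(parts) != 4 or any(not 0 <= p <= 255 for p in parts):
        raise ValueError(f"not an IPv4 address: {addr!r}")
    value = 0
    for part in parts:
        value = (value << 8) | part  # shift left one byte, then add the next number
    return value


def int_to_dotted_quad(value: int) -> str:
    """Unpack a 32-bit integer back into four dot-separated numbers."""
    if not 0 <= value < 2**32:
        raise ValueError("value out of IPv4 range")
    return ".".join(str((value >> shift) & 0xFF) for shift in (24, 16, 8, 0))


n = dotted_quad_to_int("165.65.35.38")
print(n)                      # 2772509478
print(int_to_dotted_quad(n))  # 165.65.35.38
# Cross-check against the standard library's own conversion.
assert n == int(ipaddress.IPv4Address("165.65.35.38"))
```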

Several governance steps have already been taken in the realm of numbering. One of the most important areas of governance concerns recent moves to address a perceived shortage of IP addresses. Under the current protocol, IPv4, there are about 4.3 billion (2^32) possible unique IP addresses. The number may appear large, but the proliferation of Internet-enabled devices like cell phones, digital organizers and home appliances, each of which is assigned a unique IP address, could in theory deplete the available addresses, thereby stunting the spread of the network.
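The size of the IPv4 address space follows directly from its 32-bit address length; the same arithmetic applied to the 128-bit addresses of IPv6 (discussed below) gives the much larger IPv6 figure. A quick check in Python follows. Note that some IPv4 blocks are reserved for private, multicast and other special uses, so fewer addresses are actually available for public assignment.

```python
import ipaddress

ipv4_total = 2 ** 32   # IPv4 addresses are 32 bits long
ipv6_total = 2 ** 128  # IPv6 addresses are 128 bits long

print(f"IPv4: {ipv4_total:,}")    # IPv4: 4,294,967,296
print(f"IPv6: {ipv6_total:.2e}")  # IPv6: 3.40e+38

# The standard library agrees when asked for the size of each whole address space.
assert ipaddress.ip_network("0.0.0.0/0").num_addresses == ipv4_total
assert ipaddress.ip_network("::/0").num_addresses == ipv6_total
```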

In addition, as we shall see below, the shortage of IP space has been a particular concern for developing countries.

To address this potential shortage, two steps have been taken:

- First, the technical community has developed a new protocol known as IPv6. This protocol, which allows for some 340 undecillion (3.4 × 10^38) addresses, essentially solves the shortage problem. IPv6 also introduces a range of additional features not currently supported in IPv4, including better security and the ability to differentiate between different streams of packets (e.g., voice and data).

- Second, the technical community also introduced a process known as “Network Address Translation” (NAT), which allows the use of private addresses. Under NAT, individual computers on a single private network (for example, within a company or university) use non-unique private addresses that are translated into public, unique IP addresses as traffic crosses the private network boundary. Many Internet architects regard this as a serious erosion of the Internet’s end-to-end principle.
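The translation step can be sketched as a table kept by the NAT device. The version below models the common port-translating variant (often called NAPT or PAT), in which many private hosts share one public address and are told apart by port number. The class, the starting port and the addresses (192.168.x.x on the private side, 203.0.113.5 from a documentation range on the public side) are all hypothetical. The sketch also shows why NAT strains the end-to-end principle: a connection arriving from outside with no existing mapping has no way to reach a private host.

```python
import ipaddress
from dataclasses import dataclass, field


@dataclass
class Nat:
    """Minimal port-translating NAT: many private hosts share one public address."""
    public_ip: str
    next_port: int = 40000                         # hypothetical start of the translation range
    outbound: dict = field(default_factory=dict)   # (private_ip, private_port) -> public_port
    inbound: dict = field(default_factory=dict)    # public_port -> (private_ip, private_port)

    def translate_out(self, src_ip: str, src_port: int) -> tuple[str, int]:
        """Rewrite the source of a packet leaving the private network."""
        if not ipaddress.ip_address(src_ip).is_private:
            raise ValueError("source is not a private address")
        key = (src_ip, src_port)
        if key not in self.outbound:               # first packet of a new flow: allocate a port
            self.outbound[key] = self.next_port
            self.inbound[self.next_port] = key
            self.next_port += 1
        return self.public_ip, self.outbound[key]

    def translate_in(self, dst_port: int) -> tuple[str, int]:
        """Map a reply arriving at the public address back to the private host."""
        if dst_port not in self.inbound:
            # No mapping exists, so an unsolicited inbound connection has nowhere to go.
            raise LookupError("no mapping for this port")
        return self.inbound[dst_port]


nat = Nat(public_ip="203.0.113.5")
print(nat.translate_out("192.168.1.10", 51000))  # ('203.0.113.5', 40000)
print(nat.translate_out("192.168.1.11", 51000))  # ('203.0.113.5', 40001)
print(nat.translate_in(40001))                   # ('192.168.1.11', 51000)
```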

An additional example of governance in the realm of numbering can be found in recent efforts to develop a shared platform for the Public Switched Telephone Network (PSTN) and the IP network. These efforts have been led by the IETF, which has developed a standard known as ENUM. Essentially, ENUM translates telephone numbers into domain names, whose DNS records can in turn point to Internet addresses for services such as VoIP, email or the Web; in this sense it “merges” the DNS with the existing telephone numbering system. Although not yet widely deployed, it offers potential in several areas. For example, it should allow telephone users with access only to a 12-key keypad to reach Internet services, and it should make it significantly easier to place telephone calls between the PSTN and the Internet using VoIP.
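The mapping from telephone number to domain name is mechanical. Under the IETF ENUM specifications, a full international (E.164) number is stripped to its digits, the digits are reversed and separated by dots (so that, as in ordinary domain names, the most general part, the country code, ends up on the right), and the suffix e164.arpa is appended. DNS records stored at that name (NAPTR records) can then point to services such as a VoIP (SIP) address. This Python sketch covers only the name-building step; the function name and the telephone number are illustrative.

```python
def e164_to_enum_domain(number: str, suffix: str = "e164.arpa") -> str:
    """Turn an international (E.164) phone number into its ENUM domain name.

    Keeps only the ASCII digits, reverses them, joins them with dots and
    appends the ENUM suffix.
    """
    digits = [ch for ch in number if ch in "0123456789"]  # drop '+', spaces, hyphens
    if not digits:
        raise ValueError("no digits in number")
    return ".".join(reversed(digits)) + "." + suffix


print(e164_to_enum_domain("+44 20 7946 0148"))
# 8.4.1.0.6.4.9.7.0.2.4.4.e164.arpa
```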

Actors Involved

The main body involved in the distribution of IP numbers is IANA. IANA allocates blocks of IP address space to the Regional Internet Registries (RIRs),[23] which in turn allocate IP addresses and numbers to ISPs and large organizations. Currently, the question of how numbers will be allocated under IPv6 has become a matter of some contention. In particular, it appears possible that national governments, which have shown little interest in IP allocation thus far, may henceforth play a greater role. Unless this is done with great care, it could undermine the hierarchical allocation of address blocks that keeps the growth of global routing tables in check.
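The hierarchy (IANA to RIR to ISP to customer) matters for routing because addresses handed out inside one provider's block can be announced to the rest of the Internet as a single route. The Python sketch below uses the standard `ipaddress` module to carve a hypothetical registry pool into provider blocks and one provider block into customer sites, then shows the customer blocks collapsing back into a single routing prefix. The pool is 2001:db8::/32, the IPv6 range reserved for documentation, and the /40 and /48 sizes and the `allocate` helper are illustrative choices rather than any registry's actual policy.

```python
import ipaddress
from itertools import islice

# Hypothetical pool held by a registry (2001:db8::/32 is the documentation prefix).
REGISTRY_POOL = ipaddress.ip_network("2001:db8::/32")


def allocate(pool, new_prefix: int, count: int) -> list:
    """Carve `count` consecutive sub-blocks of size /new_prefix out of `pool`."""
    return list(islice(pool.subnets(new_prefix=new_prefix), count))


isp_blocks = allocate(REGISTRY_POOL, 40, 3)             # three hypothetical ISPs get a /40 each
first_isp = isp_blocks[0]
customer_sites = list(first_isp.subnets(new_prefix=48))  # every /48 inside that /40

# The customer blocks are contiguous and nested inside the ISP's block,
# so they aggregate back into one route announcement.
aggregated = list(ipaddress.collapse_addresses(customer_sites))

print([str(b) for b in isp_blocks])  # ['2001:db8::/40', '2001:db8:100::/40', '2001:db8:200::/40']
print(len(customer_sites))           # 256
print(aggregated)                    # [IPv6Network('2001:db8::/40')]
```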

Although IANA distributes IP space, it was the IETF, in consultation with industry and technical groups, that played the leading role in developing IPv6. As noted, the IETF has also been at the forefront of ENUM development. However, given the bridging role played by ENUM between the Internet and existing telephone systems, organizations more traditionally involved in telecommunications governance have also claimed a role. In particular, as the international authority for telephone codes, the ITU has been involved in the application of ENUM; the IETF design specified a key role for the ITU in validating the topmost levels of delegation for ENUM domain names. In addition, as PSTN numbering still remains largely the domain of national regulators, it seems likely that any widespread deployment of ENUM will by necessity involve governments too.

What are some of the governance issues at the content layer?

For average users, the content layer is their only experience of the Internet: this is where the programs, services and applications they use every day reside. This does not mean that governance at the content layer is the only area relevant to average users. As should be clear by now, the three layers are interdependent, and what happens at the content layer depends heavily on what happens at the other layers. For example, without an effective mechanism for ensuring interconnection, it would be impossible (or at any rate fruitless) to use a web browser at the content layer.

Nonetheless, governance at this layer is a matter of critical (if not singular) importance for users. Here, we examine three issues of particular importance:

Internet Pollution

Pollution is a general term for a variety of harmful and illegal forms of content that clog (or pollute) the Internet. Although the best-known examples of pollution are probably spam (unsolicited email) and viruses, the term also encompasses spyware, phishing attacks (in which an email or other message solicits and then misuses sensitive information, e.g., bank account numbers), and pornography and other harmful content.

Once a minor nuisance, Internet pollution has in just a few years risen to epidemic proportions. By some estimates, 10 out of every 13 emails sent today are spam.[24] Such messages clog often-scarce bandwidth, reduce productivity and impose an economic toll: according to one study, spam causes an estimated €10 billion in annual losses through lost bandwidth alone.[25] Similarly, in the United States, the Federal Trade Commission (FTC) announced in 2003 that up to 27.3 million Americans had been victims of some form of identity theft within the previous five years, and that in 2002 alone such theft cost businesses nearly US$ 48 billion.[26] These losses arise mainly from identity thieves opening new credit card accounts, not necessarily from money stolen directly from the individual victims.

In addition to the economic damage, pollution harms the Internet by eroding the trust that average users place in the network.[27] Trust is critical to the Internet’s continued growth. If users begin to fear the openness that has thus far made the network such a success, the spread of the network would slow, the prospects for e-commerce would suffer, and users might retreat into “walled gardens”, hiding from the wider Internet community.

Actors Involved

One of the reasons for the rapid growth of pollution is the great difficulty in combating it. Spam and viruses exploit the Internet’s e2e architecture and anonymity. With few exceptions, unsolicited mailers and those who spread viruses are extremely difficult to track down using conventional methods. In this sense, pollution represents a classic Internet challenge to traditional governance mechanisms: combating it requires new structures and tools, and new forms of collaboration between sectors.

A variety of actors and bodies are involved in trying to combat spam. They employ a diverse range of approaches, which can be broadly divided into two categories:

Technical approaches: Technical solutions to spam include the widely deployed junk mail filters we find in our email accounts, as well as a host of other detection and prevention mechanisms. Yahoo and Microsoft, for example, have discussed implementing an e-stamp system that would charge a tiny fee for each legitimate email accepted into an inbox. Although such proposals are likely to encounter opposition, they address the underlying economic reality that spam is so prolific in part because it is so cheap (indeed, free) to send unwanted emails.
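Many junk mail filters score each message statistically. One widely used technique is a naive Bayes classifier, which learns how often words appear in known spam and in legitimate mail and combines those frequencies into a spam probability. The minimal Python sketch below is illustrative only (it is not presented as the method of any provider named here) and is trained on a tiny made-up corpus. Its hypothetical `threshold` parameter shows the trade-off behind false positives: raising it lets more spam through but misclassifies fewer valid emails.

```python
import math
from collections import Counter


def tokenize(text: str) -> list[str]:
    return text.lower().split()


class NaiveBayesFilter:
    """Tiny multinomial naive Bayes spam filter with add-one (Laplace) smoothing."""

    def __init__(self):
        self.word_counts = {"spam": Counter(), "ham": Counter()}
        self.message_counts = Counter()

    def train(self, text: str, label: str) -> None:
        self.word_counts[label].update(tokenize(text))
        self.message_counts[label] += 1

    def spam_probability(self, text: str) -> float:
        if not (self.message_counts["spam"] and self.message_counts["ham"]):
            raise ValueError("train on at least one spam and one ham message first")
        vocab = set(self.word_counts["spam"]) | set(self.word_counts["ham"])
        total_msgs = sum(self.message_counts.values())
        log_scores = {}
        for label in ("spam", "ham"):
            counts = self.word_counts[label]
            total_words = sum(counts.values())
            score = math.log(self.message_counts[label] / total_msgs)  # class prior
            for word in tokenize(text):
                # Add-one smoothing so an unseen word does not zero out the product.
                score += math.log((counts[word] + 1) / (total_words + len(vocab)))
            log_scores[label] = score
        # Convert the two log scores into a normalized probability of "spam".
        top = max(log_scores.values())
        spam = math.exp(log_scores["spam"] - top)
        ham = math.exp(log_scores["ham"] - top)
        return spam / (spam + ham)

    def is_spam(self, text: str, threshold: float = 0.9) -> bool:
        # A higher threshold means fewer false positives but more missed spam.
        return self.spam_probability(text) >= threshold


spam_filter = NaiveBayesFilter()
spam_filter.train("cheap pills buy now", "spam")
spam_filter.train("win money now", "spam")
spam_filter.train("meeting agenda for monday", "ham")
spam_filter.train("draft report attached", "ham")
print(round(spam_filter.spam_probability("buy cheap money now"), 2))  # 0.96
print(spam_filter.is_spam("meeting report monday"))                    # False
```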

A number of industry and civil society groups also exist to develop technical solutions to pollution, and to spam in particular. For example, the Messaging Anti-Abuse Working Group (MAAWG) is a coalition of leading ISPs, including Yahoo, Microsoft, Earthlink, America Online, and France Telecom. MAAWG has advocated a set of technical guidelines and best practices to stem the tide of spam. It has also evaluated methods to authenticate email senders using IP addresses and digital content signatures. Similarly, the Spamhaus Project is an international non-profit organization that works with law enforcement agencies to track down the Internet’s spam gangs, and lobbies governments for effective anti-spam legislation.
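Authenticating senders by IP address typically works by having a domain publish which networks are allowed to send its mail; a receiving server then checks the connecting address against that list. Sender Policy Framework (SPF) is one such scheme. The Python sketch below captures only that core check, with the policy held in a dictionary rather than looked up in the DNS. The domain, the addresses (taken from documentation ranges) and the function name are hypothetical, and real SPF has a much richer policy syntax.

```python
import ipaddress
from typing import Optional

# Hypothetical published policies: domain -> networks allowed to send its mail.
SENDER_POLICIES = {
    "example.org": ["192.0.2.0/24", "2001:db8:10::/48"],
}


def sender_authorized(domain: str, client_ip: str) -> Optional[bool]:
    """Return True/False if the domain publishes a policy, None if it does not."""
    networks = SENDER_POLICIES.get(domain.lower())
    if networks is None:
        return None  # no policy published: cannot authenticate either way
    ip = ipaddress.ip_address(client_ip)
    # An IPv4 address is never "in" an IPv6 network (and vice versa), so mixed lists are safe.
    return any(ip in ipaddress.ip_network(net) for net in networks)


print(sender_authorized("example.org", "192.0.2.25"))       # True
print(sender_authorized("example.org", "198.51.100.7"))     # False
print(sender_authorized("unknown.example", "192.0.2.25"))   # None
```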

Legal and regulatory approaches: These joint industry efforts have led to some successes in combating spam. However, technical efforts to thwart spam must always confront the problem of false positives – i.e., the danger that valid emails will wrongly be classified as spam by the program or other technical tool.