Cyberbotics' Robot Curriculum/Novice programming Exercises

This chapter is a series of exercises for novices. We assume that you have completed the previous exercises. BotStudio is still used for the first exercises, in which you will create more complex automata. Then C programming is introduced, and you will discover the e-puck devices in detail.

A train of e-pucks [Novice]

The aim of this exercise is to create a more complex automaton. Moreover, you will manipulate several virtual e-pucks at the same time. Because this simulation uses several e-pucks, a recent computer is needed to avoid lags and glitches.

Open the World File

Open the following world file:

.../worlds/novice_train.wbt

The e-puck which is the closest to the simulation camera is the last of the queue. Each e-puck has its own BotStudio window. The order of the BotStudio windows is the same as the order of the e-pucks, i.e., the first e-puck of the queue is linked to the upper BotStudio window.

Upload the Robot Controller on several e-pucks

Stopping the simulation before uploading is recommended. Choose a BotStudio window (let's say the lowest one). You can modify its automaton as usual. You can use either the same robot controller for every e-puck or a different one for each. If you want to use the same controller for every e-puck, save the desired automaton and load the saved file on the other e-pucks. This approach is recommended.

[P.1] Create an automaton so that the e-pucks form a chain, i.e., the first e-puck goes somewhere, the second e-puck follows the first one, the third one follows the second one, etc. (Hints: Create two automata: one for the first e-puck of the chain (the "locomotive") and one for the others (the "chariots"). The locomotive should go significantly slower than the chariots.)

If you don't succeed, you can open the following automaton and try to improve it:

.../novice_train/novice_train_corr.bsg

Note that there are two automata in a single file. The chariots must have the "chariot init" state as initial state, and the locomotive must have the "locomotive init" state as initial state.

Remain in Shadow [Novice]

The purpose of this exercise is to create a wall-following automaton. You will see that it isn't so easy. This exercise still uses BotStudio, but is more difficult than the previous ones.

Open the world file

Open the following world file:

.../worlds/novice_remain_in_shadow.wbt

Notice that the world has changed. The board is larger, there are more obstacles and there is a double wall. The double wall lets you perform either inner or outer wall following, and the obstacles are there for the e-puck to go around. So, don't hesitate to move the e-puck or the obstacles by shift-clicking.

Wall following Algorithm

Let's think about a wall following algorithm. There are many different ways to approach this problem. The proposed solution can be implemented with an FSM. First of all, the e-puck has to go forward until it meets an obstacle. Then it spins in place to the left or to the right (let's say to the right) to be perpendicular to the wall. Then, it has to follow the wall. Of course, it doesn't know the shape of the wall. So, if it is too close to the wall, it has to correct its trajectory to the left. Conversely, if it is too far from the wall, it has to correct to the right.
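The description above can be sketched as a small state machine in C. This is only an illustrative sketch: the state names, the two readings (`front` and `side`) and the threshold values are assumptions made for this example, not values taken from BotStudio or from the provided solution. Higher readings are assumed to mean a closer obstacle, and "away" and "toward" are used instead of left and right so that the sketch works for a wall on either side.

```c
/* States of the wall-following automaton described above. */
typedef enum { GO_FORWARD, SPIN, FOLLOW, STEER_AWAY, STEER_TOWARD } State;

/* Hypothetical thresholds; real values must be tuned by observing the sensors. */
#define FRONT_OBSTACLE 300
#define SIDE_TOO_CLOSE 400
#define SIDE_TOO_FAR   100

/* Returns the next state from the current state and two proximity readings:
   front = sensor facing forward, side = sensor facing the wall. */
State next_state(State s, int front, int side) {
  switch (s) {
    case GO_FORWARD:
      /* go straight until an obstacle is met */
      return front > FRONT_OBSTACLE ? SPIN : GO_FORWARD;
    case SPIN:
      /* spin until the front is clear and the side sensor sees the wall */
      return (front < FRONT_OBSTACLE && side > SIDE_TOO_FAR) ? FOLLOW : SPIN;
    default: /* FOLLOW, STEER_AWAY, STEER_TOWARD */
      if (side > SIDE_TOO_CLOSE) return STEER_AWAY;
      if (side < SIDE_TOO_FAR) return STEER_TOWARD;
      return FOLLOW;
  }
}
```

Each state would then be associated with a pair of wheel speeds, exactly as in a BotStudio automaton.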

[P.1] Create the automaton which corresponds to the preceding description. Test it only on the virtual e-puck. (Hints: Setting the parameters of the conditions is a difficult task. If you cannot find a solution, run your e-puck close to a wall, observe the sensor values and think about the conditions. Don't hesitate to add more states (like "easy rectification - left" and "hard rectification - left").)

If you cannot find a solution, open the following automaton in BotStudio (it's a good start, but not a perfect solution):

.../controllers/novice_remain_in_shadow/novice_remain_in_shadow_corr.bsg

[P.2] If you tested your automaton on an inner wall, modify it to work on an outer wall, and vice versa.

[P.3] Until now the environment hasn't changed. Add a transition so that the e-puck looks for a wall again if it loses contact with an obstacle. If the obstacle that the e-puck is going around is removed, the e-puck should find another wall.

[P.4] Modify parameters of the automaton in order to reproduce the same behavior on a real e-puck.

Your Progression

With the two previous exercises, you learned:

- How to construct a complex FSM using BotStudio

- What are the limitations of BotStudio

The following exercises will teach you another way to program your e-puck: C language.

Introduction to the C Programming

Until now, you have programmed the robot behavior by using BotStudio. This tool lets you program simple behaviors quickly and intuitively. You have probably also noticed its limitations, in particular that its expressiveness is limited and that complex programs quickly become unreadable. For this reason, a more powerful programming tool is needed: the C programming language. This language is much more expressive. With it and the Webots libraries, you can write the robot controller as a program. The drawback is that you have to know its syntax. Teaching the C language is beyond the scope of this document, so please refer to a book on C programming. There are also very useful tutorials on the web on this subject, such as the Wikibooks on C programming.

You don't need to know every subtlety of this language to successfully go through the following exercises. If you are a complete beginner, focus first on: variables, arrays, functions, control structures (Boolean tests, if ... else ..., switch, for, while, etc.).

In the five following exercises, you will discover the e-puck devices one by one. The exercises are ordered by the difficulty of the device: the LEDs, the stepper motors, the IR sensors, the accelerometer and the camera.

The Structure of a Webots Simulation

To create a simulation in Webots, two kinds of files are required:

- The world file: It defines the virtual environment, i.e., the shape, the physical bounds, the position and the orientation of every object (including the robot), and some other global parameters like the position of the windows, the parameters of the simulation camera, the parameters of the light sources, the gravitational vector, etc. This file is written in the VRML[1] language.

- The controller file: It is the program used by the robot. It defines the behavior of the robot. You already saw that a controller file can be a BotStudio automaton and you will see that it can also be a C program which uses the Webots libraries.

Note that almost all the world files of this document use the same definition of the e-puck located at:

webots_root/project/default/protos/EPuck.proto

This file contains the description of a standard e-puck including notably the names of the devices. This will be useful for getting the device tags.

The simplest Program

The simplest Webots program is shown here. It uses a Webots library (see the include statement) to obtain the basic robot functionality.

    // included libraries
    #include <webots/robot.h>

    // global defines
    #define TIME_STEP 64 // [ms]

    // global variables...

    int main() {
      // Webots init
      wb_robot_init();

      // put here your initialization code

      // main loop
      while (wb_robot_step(TIME_STEP) != -1) {
      }

      // put here your cleanup code

      // Webots cleanup
      wb_robot_cleanup();

      return 0;
    }

[P.1] Read the code carefully.

[Q.1] What is the meaning of the TIME_STEP macro?

[Q.2] What could be defined as a global variable?

[Q.3] What should we put in the main loop? And in the initialization section?

K-2000 [Novice]

This is the first exercise that uses C programming. You will make the LEDs light up in a loop around the robot, as you did before in the exercise The blinking e-puck.

Open the World File and the Text Editor Window

Open the following world file:

.../worlds/curriculum_novice_k2000.wbt

This will open a small board, since playing with the LEDs does not require much room. You will also notice a new window: the text editor window (you can see it in the picture). In this window you can write, load, save and compile[2] C programs (to compile, click on the Compile button).

[P.1] Run the program on the virtual e-puck. (Normally, it should already be running after the world file is opened. If it is not, compile the controller and revert the simulation.) Observe the e-puck behavior.

[P.2] Study the C code of this exercise carefully. Note the second include statement.

[Q.1] Which function changes the state of an LED? In which library can you find this function? Explain the purpose of the global variable i used in the main function.

Simulation, Remote-Control Session and Cross-Compilation

There are three ways to use the e-puck:

- The simulation: By using the Webots libraries, you can write a robot controller, compile it and run it in a virtual 3D environment. It's what you have done in the previous subsection.

- The remote-control session: You can write the same program, compile it as before and run it on the real e-puck through a Bluetooth connection.

- The cross-compilation: You can write the same program, cross-compile it for the e-puck processor and upload it to the real robot. In this case, the old e-puck program (firmware) is replaced by your program, which no longer depends on Webots and survives a reboot of the e-puck.

If you want to create a remote-control session, you just have to select your Bluetooth connection instead of the simulation in the robot window. Your robot must have the right firmware, as explained in the section E-puck prerequisites.

For cross-compilation, first select the Build | Cross-compile... menu in the text editor window (or click on the corresponding icon in the toolbar). This action creates a .hex file which can be executed on the e-puck. When the cross-compilation is done, Webots asks you to upload the generated file (located in the directory of the e-puck). Click on the Yes button and select which Bluetooth connection you want to use. The file should then be uploaded to the e-puck. You can also upload a file by selecting the Tool | Upload to e-puck robot... menu in the simulation window.

To find out which mode the robot is in, you can call the wb_robot_get_mode() function. It returns 0 in a simulation, 1 in a cross-compilation and 2 in a remote-control session.
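As an illustration, the three return values can be given readable names. The enum constants and the `mode_label` helper below are hypothetical names introduced for this sketch; only the numeric values come from the text above.

```c
/* Mode values as stated in the text for wb_robot_get_mode(). */
enum { MODE_SIMULATION = 0, MODE_CROSS_COMPILATION = 1, MODE_REMOTE_CONTROL = 2 };

/* Hypothetical helper: turns a mode value into a readable label. */
const char *mode_label(int mode) {
  switch (mode) {
    case MODE_SIMULATION:        return "simulation";
    case MODE_CROSS_COMPILATION: return "cross-compilation";
    case MODE_REMOTE_CONTROL:    return "remote-control";
    default:                     return "unknown";
  }
}
```

In a controller, one could for example print `mode_label(wb_robot_get_mode())` after the call to `wb_robot_init()`.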

[P.3] Cross-compile the program and upload it to the e-puck.

[P.4] Upload the firmware to the e-puck (see the section E-puck prerequisites). Compile the program and launch a remote-control session.

Modifications

[P.5] Modify the given code in order to change the direction of the LED rotation.

[P.6] Modify the given code in order to blink all the LEDs synchronously.

[P.7] Determine by testing which LED corresponds to each device name. For example, the "led0" device name corresponds to the frontmost LED of the e-puck.

Motors [Novice]

The goal of this exercise is to use some other e-puck devices: the two stepper motors.

Open the World File

Open the following world file:

.../worlds/novice_motors.wbt

The Whirligig

"A stepper motor is an electromechanical device which converts electrical pulses into discrete mechanical movements"[3]. It divides a full rotation into a large number of steps. An e-puck stepper motor has 1000 steps per rotation and a precision of ±1 step. It is directly compatible with digital technologies. You can set the motor speeds by using the wb_differential_wheels_set_speed(...) function. This function takes two arguments: the left motor speed and the right motor speed. The e-puck accepts speed values between -1000 and 1000. The maximum speed corresponds to about one wheel rotation per second.
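The figures above can be turned into two small helpers. This is a sketch, not part of the exercise code: the function names are hypothetical, and the conversion assumes that the speed unit is steps per second, which is what 1000 steps per rotation and about one rotation per second at speed 1000 suggest.

```c
#define MAX_SPEED 1000       /* speed range stated in the text: -1000..1000 */
#define STEPS_PER_TURN 1000  /* steps per full wheel rotation, from the text */

/* Limits a requested speed to the range accepted by the e-puck. */
int clamp_speed(int speed) {
  if (speed > MAX_SPEED) return MAX_SPEED;
  if (speed < -MAX_SPEED) return -MAX_SPEED;
  return speed;
}

/* Wheel rotations per second, assuming the speed unit is steps per second. */
double rotations_per_second(int speed) {
  return (double)clamp_speed(speed) / STEPS_PER_TURN;
}
```

Clamping before calling the speed function avoids passing values outside the accepted range when a speed is computed from sensor readings.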

To know the position of a wheel, the encoder device can be used. Note that, unlike some other robots, an e-puck has no physical encoder that measures the position of the wheel. Instead, a counter is incremented each time a motor step is performed, and decremented if the wheel turns the other way. This is a good approximation of the wheel position. Unfortunately, if the e-puck is blocked and the wheel doesn't slide, the encoder counter can still be incremented even though the wheel doesn't turn.

The encoders are not implemented yet in the remote-control mode. So, if you want to use them on a real e-puck, use cross-compilation.

[Q.1] Without running the simulation, describe what the e-puck will do.

[P.1] Run the simulation on the virtual e-puck.

Modifications

[P.2] Modify the given code to obtain the following behavior: the e-puck goes forward and stops after exactly one full rotation of its wheels. Try your program on both the real and the virtual e-puck.

[P.3] Modify the given code to obtain the following behavior: the e-puck goes forward and stops after exactly 10 cm. (Hint: the radius of an e-puck wheel is 2.1 cm)

[P.4] [Challenge] Modify the given code to obtain the following behavior: the e-puck moves to specific XZ coordinates (relative to the initial position of the e-puck).

The IR Sensors [Novice]

In this exercise you will manipulate the IR sensors. This device is less intuitive than the previous ones, because an IR sensor can be used in different ways. In this exercise, you will see what information this device provides and how to use it. The log window will also be introduced.

The IR Sensors

Eight IR sensors are placed irregularly around the e-puck. There are more sensors at the front of the e-puck than at the back. An IR sensor is composed of two parts: an IR emitter and a photo-sensor. This configuration enables an IR sensor to play two roles.

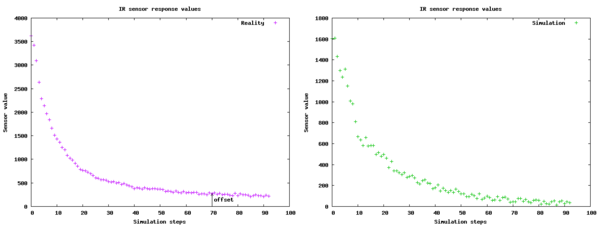

Firstly, they can measure the distance between themselves and an obstacle. The IR emitter emits infrared light which bounces off a potential obstacle, and the photo-sensor measures the light that comes back. The intensity of this light depends directly on the distance to the object. This first use is probably the most interesting one because it lets the e-puck perceive its nearby environment. In Webots, an IR sensor in this mode of operation is modeled by a distance sensor. Note that the values measured by an IR sensor behave in a non-linear way. To illustrate this, the following experiment was performed. An e-puck is placed in front of a wall. When the experiment begins, the e-puck moves backwards. The values of the front right IR sensor are stored in a file and plotted in the figure. Note that the time steps are not identical for the two curves. The distance between the e-puck and the wall grows linearly, but the measurements of the IR sensor are non-linear. Then, observe the offset value. This value depends mainly on the ambient light, so the offset itself is often meaningless. Note also that the distance estimation depends on the obstacle (color, orientation, shape, material) and on the IR sensor (properties and calibration).

Secondly, the photo-sensor can be used alone. In that case, the IR sensors quantify the amount of received infrared light. A typical application is phototaxis, i.e., the robot follows or avoids a light stimulus. This behavior is inspired by biology (particularly by insects). In Webots, an IR sensor in this mode of operation is modeled by a light sensor. Note that only the distance sensors are used in this exercise, but the light sensors are also ready to use.

Open the World File

Open the following world file:

.../worlds/novice_ir_sensors.wbt

You should observe a small board on which there is just one obstacle. To test the IR sensors, you can move either the e-puck or the obstacle. Note also the window depicted in the figure. This window is called the log window. It displays text. Two entities can write in this window: a robot controller or Webots.

Calibrate your IR Sensors

[P.1] Without running the simulation, study the code of this exercise carefully.

[Q.1] Why is an offset subtracted from the distance sensor values? Describe a way to compute this offset.

[Q.2] What is the purpose of the THRESHOLD_DIST variable?

[Q.3] Without running the simulation, describe what the e-puck will do.

[P.2] Run the simulation on both the virtual and the real e-puck, and observe the e-puck behavior when an object approaches it.

[Q.4] Describe the purpose of the calibrate(...) function.

[P.3] Until now, the offset values were defined arbitrarily. The goal of this part is to calibrate the IR sensor offsets of your real e-puck by using the calibrate function. First, in the main function, uncomment the call to the calibrate function and compile the program. Your real e-puck must be in an open space, away from obstacles. Run the program on your real e-puck. The time spent in the calibrate function depends on the number n. Copy the results of the calibrate function from the log window into the ps_offset_real array of your program. Compile again. Your IR sensor offsets are now calibrated to your environment.
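One plausible way to obtain an offset is to average several readings taken with no obstacle nearby, and then to subtract that average from later readings. The sketch below illustrates this idea only. It is not the calibrate function of the exercise, and the function names as well as the interpretation of n as a number of readings are assumptions.

```c
/* Average of n raw readings of one sensor, taken with no obstacle nearby.
   Returns 0 if n is not positive. */
int average_offset(const int *readings, int n) {
  long sum = 0;
  if (n <= 0) return 0;
  for (int k = 0; k < n; k++) sum += readings[k];
  return (int)(sum / n);
}

/* Removes the offset from a raw reading; negative results are clipped to 0. */
int corrected_value(int raw, int offset) {
  int v = raw - offset;
  return v > 0 ? v : 0;
}
```

A larger number of readings gives a steadier offset but takes more time steps to collect.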

[P.4] Determine by testing which IR sensor corresponds to each device name. For example, the "ps2" device name corresponds to the right IR sensor of the e-puck.

Use the Symmetry of the IR Sensors

Fortunately, the IR sensors can be used more simply. To bypass the calibration of the IR sensors and the processing of their returned values, the symmetry of the e-puck can be used. If you want to create a simple collision avoidance algorithm, the difference between the left and the right side (the delta variable of the example) matters more than the absolute values of the IR sensors.
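The idea can be sketched as follows. This is not the code of the exercise: the function names and the base speed are hypothetical, higher readings are assumed to mean a closer obstacle, and the split of the eight sensors into a right half (indices 0 to 3) and a left half (indices 4 to 7) is an assumption that you should check against your own tests.

```c
#define NB_SENSORS 8    /* the e-puck has eight IR sensors */
#define BASE_SPEED 200  /* hypothetical cruising speed */

/* Difference between the left and the right side.
   Assumption: ps[0..3] are on the right and ps[4..7] on the left. */
int side_delta(const int ps[NB_SENSORS]) {
  int right = ps[0] + ps[1] + ps[2] + ps[3];
  int left  = ps[4] + ps[5] + ps[6] + ps[7];
  return left - right;
}

/* A positive delta (obstacle on the left) speeds up the left wheel and
   slows down the right one, so the robot turns right, away from it. */
void avoidance_speeds(int delta, int *left_speed, int *right_speed) {
  *left_speed = BASE_SPEED + delta;
  *right_speed = BASE_SPEED - delta;
}
```

Because only the difference is used, a constant offset common to both sides cancels out. The resulting speeds may still need to be limited to the range accepted by the motors.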

[P.5] Uncomment the last part of the run function and compile the program. Observe the code and the e-puck behavior. Note that only the values returned by the IR sensors are used. Also try this part on the real robot, either in remote-control mode or with cross-compilation.

[P.6] Get the same behavior as before but backwards instead of forwards.

[P.7] Simplify the code as much as possible while keeping the same behavior.

Accelerometer [Novice]

In this exercise, the e-puck accelerometer is used for the first time in this curriculum. You will learn the purpose of this device and how to use it. The explanation of this device refers to some notions that are beyond the scope of this document, such as acceleration and vectors. For more information about these topics, refer to a physics book and to a mathematics book, respectively.

Open the World File

Open the following world file:

.../worlds/novice_accelerometer.wbt

This opens a world containing a springboard. This object lets you observe the accelerometer behavior on an incline and during the fall of an e-puck. For your tests, don't hesitate to move your e-puck (SHIFT + mouse buttons) and to use the Step button. To move the e-puck vertically, use SHIFT + the mouse wheel.

The Accelerometer

Acceleration can be defined as the rate of change of the instantaneous velocity. Its SI unit is m/s². The accelerometer is a device which measures its own acceleration (and therefore the acceleration of the e-puck) as a 3D vector. The axes of the accelerometer are depicted in the figure. At rest, the accelerometer measures at least the gravitational acceleration. Changes in the motor speeds, the rotation of the robot and external forces also influence the acceleration vector. The e-puck accelerometer is mainly used for:

- Measuring the inclination and the orientation of the ground under the robot when it is at rest. These values can be computed by applying trigonometry to the 3 components of the gravitational acceleration vector. In the controller code of this exercise, observe the getInclination(float x,float y, float z) and the getOrientation(float x,float y, float z) functions.

- Detecting a collision with an obstacle. If the norm of the acceleration (see the getAcceleration(float x,float y, float z) function of this exercise) changes abruptly while the motor speeds stay the same, a collision can be assumed.

- Detecting the fall of the robot. If the norm of the acceleration becomes too low, the fall of the robot can be assumed.
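The last use can be sketched with a simple test on the norm of the acceleration vector. The function names and the threshold ratio below are assumptions made for this example, and the components are assumed to be expressed in m/s². Comparing squared values avoids computing a square root.

```c
#define GRAVITY 9.81    /* m/s^2, norm expected when the robot is at rest */
#define FALL_RATIO 0.3  /* hypothetical: below 30% of g counts as a fall */

/* Squared norm of the acceleration vector. */
double squared_norm(double x, double y, double z) {
  return x * x + y * y + z * z;
}

/* Returns 1 if the norm is low enough to assume that the robot is falling. */
int is_falling(double x, double y, double z) {
  double limit = FALL_RATIO * GRAVITY;
  return squared_norm(x, y, z) < limit * limit;
}
```

At rest the norm is close to the gravitational acceleration, so the test returns 0. In free fall the measured norm drops toward zero and the test returns 1.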

[Q.1] What should be the direction of the gravitational acceleration? What is the direction of the vector measured by the accelerometer?

[P.1] Verify your previous answer: in the simulation, when the e-puck is at rest, observe the direction of the gravitational acceleration in the log window. (Hint: use the Step button.)

Practice

[P.2] Get the following behavior: when the e-puck falls, the body LED switches on. If you want to try this in reality, remember that an e-puck is breakable.

[P.3] Get the following behavior: only the lowest LED (with respect to the vertical) switches on.

Camera [Novice]

In the section on line following, you already learned the basics of the e-puck camera. You used the e-puck camera as a linear camera through the BotStudio interface. With this interface, you are more or less limited to following a black line. In this exercise you will see that C programming lets you use the camera differently. Firstly, your e-puck will use a linear camera and will be able to follow a line of a specific color. Secondly, your e-puck will follow a light source, represented by a point of light, using the entire field of view of the camera.

Open the World File

Open the following world file:

.../worlds/novice_linear_camera.wbt

This opens a long board on which three colored lines are drawn.

Linear Camera - Follow a colored Line

On the board, there are a cyan line, a yellow line and a magenta line. These three colors (the subtractive primary colors) were not chosen randomly. Each of them can be isolated by using a red, green or blue filter (the additive primary colors). The four figures depict the simulation first in color (RGB) and then through a red, a green and a blue filter. As shown in the table, cyan has no component in the red channel, so through a red filter it appears black, while magenta does have a red component, so it appears white like the ground.

| Color | Red channel | Green channel | Blue channel |
|---|---|---|---|
| Cyan | 0 | 1 | 1 |
| Magenta | 1 | 0 | 1 |
| Yellow | 1 | 1 | 0 |
| Black | 0 | 0 | 0 |
| White | 1 | 1 | 1 |

In the current configuration, the e-puck camera acquires its image in RGB mode. Webots also uses this mode for rendering. So, it's easy to separate these three channels and to see only one of these lines. In the code, this is done by using the wb_camera_image_get_red(...), wb_camera_image_get_green(...) and wb_camera_image_get_blue(...) functions of the webots/camera.h library.
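The table can be checked with a few lines of plain C that work on the three components of a single pixel. This is a sketch independent of Webots: the type, the function names and the darkness threshold are assumptions, and the components are assumed to range from 0 to 255.

```c
typedef enum { RED, GREEN, BLUE } Channel;

/* Value of one pixel seen through a single-channel filter. */
int filtered(int r, int g, int b, Channel c) {
  return c == RED ? r : (c == GREEN ? g : b);
}

/* Hypothetical threshold on a 0..255 scale. */
#define DARK 128

/* A line stands out through a filter if it looks dark on the white ground. */
int line_visible(int r, int g, int b, Channel c) {
  return filtered(r, g, b, c) < DARK;
}
```

For example, a cyan pixel (0, 255, 255) is dark through the red filter and bright through the green and blue ones, which matches the first row of the table.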

[Q.1] According to the current robot controller, the e-puck follows the yellow line. What do you have to change in the controller code to follow the blue line?

[Q.2] What is the role of the find_middle(...) function? (Note: the same find_middle(...) function is used in BotStudio)

[Q.3] The speed of the motors depends twice on the delta integer. Why?

[Q.4] Explain the difference between the two macros TIME_STEP and TIME_STEP_CAM.

[P.1] Try this robot controller on your real e-puck. To create a real environment, use a large sheet of paper and draw lines with fluorescent pens. (Hint: The results depend a lot on the lighting in your room.)

Open another World File

Open the following world file:

.../worlds/novice_camera.wbt

This opens a dark board with a white ball. To move the ball, use the arrow keys. This ball can also move randomly by pressing the M key.

Follow a white Object - change the Camera Resolution

[P.2] Launch the simulation. Move the ball closer to the e-puck or farther away from it.

[Q.5] What is the behavior of the e-puck?

The rest of this subsection teaches you how to configure the e-puck camera. Depending on your goals, you don't always need the same resolution, and you should use the lowest resolution that solves your problem. Transferring an image from the e-puck to Webots takes time: the bigger the image, the longer the transmission. The e-puck camera has a resolution of 480x640 pixels, but the Bluetooth connection only supports the transmission of 2028 colored pixels. For this reason, a resolution of 52x39 pixels makes full use of the Bluetooth connection while keeping a 4:3 ratio.
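The 52x39 figure can be verified with a short computation. For a 4:3 ratio, the width is 4k and the height is 3k for some integer k, so the image contains 12k² pixels. The largest k with 12k² ≤ 2028 is 13, which gives 52x39 = 2028 pixels. The function name below is hypothetical.

```c
#define MAX_PIXELS 2028  /* Bluetooth limit stated in the text */

/* Finds the largest width x height with a 4:3 ratio whose pixel count
   does not exceed MAX_PIXELS. Width is 4k and height is 3k. */
void largest_4_3(int *width, int *height) {
  int k = 0;
  while (12 * (k + 1) * (k + 1) <= MAX_PIXELS) k++;
  *width = 4 * k;
  *height = 3 * k;
}
```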

The figure with the field of view of the e-puck shows where the physical parameters of the e-puck camera are. They correspond to the following values:

- a: about 6 cm

- b: about 4.5 cm

- c: about 5.5 cm

- α: about 0.47 rad

- β: about 0.7 rad

In the text editor window, open the world file:

.../worlds/novice_camera.wbt

This opens the description of the world of this exercise. At the end of the file, you will find the following node:

    EPuck {
      translation -0.03 0 0.7
      rotation 0 1 0 0
      controller "curriculum_novice_camera"
      camera_fieldOfView 0.7
      camera_width 52
      camera_height 39
    }

This part defines the virtual e-puck. The prototype[4] of the e-puck is stored in an external file (see The Structure of a Webots Simulation), so only some fields can be modified: notably the position of the e-puck, its orientation and its robot controller. The attributes of the camera are also set here. Firstly, you can modify the field of view. This field accepts values greater than 0 and up to 0.7, which is the range of the camera of your real e-puck. It also determines the zoom attribute of the real e-puck camera. Secondly, you can modify the width and the height of the virtual camera. Each can be set from 1 to 127, but their product must not exceed 2028. Once you have modified the world file, save it and revert the simulation.
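
The limits stated above can be summarized as a small check. This is a minimal sketch and not part of Webots or of the curriculum code: the function `camera_settings_are_valid` and the `CAMERA_*` macros are hypothetical names that only restate the constraints from the text.

```c
#include <stdbool.h>

/* Limits taken from the text; the macro names are hypothetical. */
#define CAMERA_MAX_FOV 0.7     /* field of view: greater than 0, up to 0.7 */
#define CAMERA_MAX_SIDE 127    /* width and height: from 1 to 127 */
#define CAMERA_MAX_PIXELS 2028 /* width * height must not exceed 2028 */

/*
 * Hypothetical helper: returns true when the three camera fields of
 * the world file respect the limits described in the text.
 */
bool camera_settings_are_valid(double field_of_view, int width, int height)
{
  if (field_of_view <= 0.0 || field_of_view > CAMERA_MAX_FOV)
    return false;
  if (width < 1 || width > CAMERA_MAX_SIDE)
    return false;
  if (height < 1 || height > CAMERA_MAX_SIDE)
    return false;
  return width * height <= CAMERA_MAX_PIXELS;
}
```

For instance, a field of view of 0.7 with a 52x39 image passes the check, whereas 127x127 is rejected because 16129 pixels exceed the limit of 2028.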

The current version of the e-puck firmware[5] can only return a centered image. Obtaining the last line of the real camera, in order to simulate a linear camera, requires a trick: if the virtual e-puck camera is inclined and its height is 1, Webots calls another routine of the e-puck firmware to obtain the last line. So, if you want to use the linear camera, you have to use another e-puck prototype in which the virtual camera is inclined:

    epuck_linear_camera {
      translation -0.03 0 0.7
      rotation 0 1 0 0
      controller "curriculum_novice_camera"
      camera_fieldOfView 0.7
      camera_width 60
    }

Note that the height of the camera is not defined. This field is implicitly set to 1.

The table compares the values of the world file with the resulting values of the real e-puck camera. Note that the field of view (β) influences the zoom attribute. A zoom of 8 defines a sub-sampling in which one pixel out of every 8 is kept. The x and y columns give the relative position of the real camera window (see the figure of the e-puck camera).

| width (sim) | height (sim) | field of view (sim) | angle[6] | width (real) | height (real) | zoom | x | y |
|---|---|---|---|---|---|---|---|---|
| 52 | 39 | 0.7 | false | 416 | 312 | 8 | 32 | 164 |
| 39 | 52 | 0.7 | false | 468 | 624 | 12 | 6 | 8 |
| 52 | 39 | 0.08 | false | 52 | 39 | 1 | 214 | 300 |
| 39 | 52 | 0.08 | false | 39 | 52 | 1 | 222 | 294 |
| 120 | 1 | 0.7 | true | 480 | 1 | 4 | 0 | 639 |
| 60 | 1 | 0.7 | true | 480 | 1 | 8 | 0 | 639 |
| 40 | 1 | 0.35 | false | 240 | 1 | 6 | 120 | 320 |
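
To illustrate what a zoom factor means, the sketch below sub-samples one line of grey-level pixels by keeping one pixel out of every `zoom`. It is only an illustration under assumptions: the function name `subsample_line` is hypothetical, and picking the first pixel of each group is an assumption, since the text does not say which pixel the e-puck firmware actually keeps.

```c
#include <assert.h>
#include <stddef.h>

/*
 * Hypothetical helper (not the e-puck firmware routine).
 * Keeps one pixel out of every `zoom` pixels of a grey-level line,
 * here the first pixel of each group (an assumption).
 * Returns the number of pixels written to dst, which must be able to
 * hold src_len / zoom values, rounded up.
 */
size_t subsample_line(const unsigned char *src, size_t src_len,
                      size_t zoom, unsigned char *dst)
{
  size_t n = 0;
  assert(src != NULL && dst != NULL && zoom >= 1);
  for (size_t i = 0; i < src_len; i += zoom)
    dst[n++] = src[i];
  return n;
}
```

With a line of 480 pixels, a zoom of 8 yields 60 pixels and a zoom of 4 yields 120 pixels, which matches the two linear camera rows of the table.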

[P.3] Try each configuration of the table. Observe the results both on your virtual and on your real e-puck. (Hint: Copy the values from the left part of the table into the world file)

Your Progression

In the five previous exercises, you saw in detail five devices of the e-puck: the LEDs, the stepper motors, the IR sensors, the accelerometer and the camera. You saw what they are useful for and how to use them with C programming. You now have all the technical knowledge needed to start programming the behavior of the e-puck, which is the main topic of the rest of the curriculum.

Notes

- ↑ The Virtual Reality Modeling Language (VRML) is a standard file format for representing 3D objects.

- ↑ Compiling is the act of transforming source code into object code.

- ↑ Eriksson, Fredrik (1998), Stepper Motor Basics

- ↑ A Webots prototype is the definition of a 3D model which appears frequently. For example, all the virtual e-pucks of this document come from only two prototypes. The advantage is that it simplifies the world file.

- ↑ The firmware is the program which is embedded in the e-puck.

- ↑ The angle field indicates whether the virtual camera is inclined in order to simulate a linear camera. In this configuration, the height of the simulated camera must be 1.