Cognitive Psychology and Cognitive Neuroscience/Behavioural and Neuroscience Methods

Introduction

Behavioural and neuroscientific methods are used to gain insight into how the brain influences the way individuals think, feel, and act. A wide array of methods can be used to analyze the brain and its relationship to behavior. Well-known techniques include EEG (electroencephalography), which records the brain's electrical activity, and fMRI (functional magnetic resonance imaging), which produces detailed images of brain activity. Other methods, such as the lesion method, are less well known but still influential in today's neuroscience research.

Methods can be organized into the following categories: anatomical, physiological, and functional. Other techniques include modulating brain activity, analyzing behavior or computational modeling.

Lesion method

In the lesion method, patients with brain damage are examined to determine which brain structures are damaged and how this damage influences the patient's behavior. Researchers attempt to link a specific brain area to an observed behavior by using reported experiences and research observations. They may conclude that the loss of function in a brain region causes behavioral changes or deficits in task performance. For example, a patient with a lesion in the parietal-temporal-occipital association area may exhibit agraphia, a condition in which the patient is unable to write despite having no deficits in motor ability. If damage to a particular brain region (structure X) is shown to correlate with a specific change in behavior (behavior Y), researchers may infer that structure X is involved in behavior Y.

In humans, lesions are most often caused by tumors or strokes. With current brain imaging technologies, it is possible to determine which area was damaged during a stroke. Loss of function in the stroke patient may then be correlated with the damaged brain area. While lesion studies in humans have provided key insights into brain organization and function, lesion studies in animals offer several advantages.

First, animals used in research are reared in controlled environmental conditions that limit variability between subjects. Second, researchers are able to measure task performance in the same animal before and after a lesion, which allows for within-subject comparison. Third, control groups can be observed that either did not undergo surgery or had surgery in another brain area. These benefits make hypothesis tests more reliable than in human research, where the before-after comparison and the control experiments are usually not available.

To strengthen conclusions regarding a brain area and task performance, researchers may look for a double dissociation. The goal of this method is to show that two functions are independent of each other: damage to one area impairs the first function but spares the second, while damage to another area shows the opposite pattern. By comparing two patients with differing brain damage and complementary patterns of deficits, researchers may localize different behaviors to each brain area. Broca's area is a region of the brain involved in language processing, comprehension and speech production. Patients with a lesion in Broca's area exhibit Broca's aphasia, or non-fluent aphasia. These patients are unable to speak fluently; a sentence produced by a patient with damage to Broca's area may sound like: "I ... er ... wanted ... ah ... well ... I ... wanted to ... er ... go surfing ... and ... er ... well ...". Wernicke's area, on the other hand, is responsible for speech comprehension. A patient with a lesion in this area has Wernicke's aphasia. Such patients may be able to produce fluent speech, but lack the ability to produce meaningful sentences. They may produce 'word salad': "I then did this chingo for some hours after my dazi went through meek and been sharko". Patients with Wernicke's aphasia are often unaware of their speech deficits and may believe that they are speaking properly.
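
The logic of a double dissociation can be made concrete with a small sketch. The patient labels, task names, scores and the 0.5 impairment cut-off below are invented for illustration and are not data from real patients.

```python
def double_dissociation(scores, impaired_below=0.5):
    """Return True if two patients show a double dissociation.

    scores maps patient -> {task: proportion correct} for exactly two
    patients and the same two tasks. The cut-off is an arbitrary assumption.
    """
    (_, s1), (_, s2) = scores.items()
    task_a, task_b = sorted(s1)
    p1_a, p1_b = s1[task_a] < impaired_below, s1[task_b] < impaired_below
    p2_a, p2_b = s2[task_a] < impaired_below, s2[task_b] < impaired_below
    # Each patient is impaired on exactly one task, and on opposite tasks.
    return (p1_a != p1_b) and (p2_a != p2_b) and (p1_a != p2_a)


# Hypothetical scores (proportion correct), for illustration only.
scores = {
    "lesion in Broca's area": {"fluent speech": 0.2, "comprehension": 0.9},
    "lesion in Wernicke's area": {"fluent speech": 0.9, "comprehension": 0.3},
}
print(double_dissociation(scores))
```

If both patients were impaired on the same task only, the function would return False: that pattern is a single dissociation and does not show that the two functions are independent.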

Certainly one of the most famous lesion cases is that of Phineas Gage. On 13 September 1848 Gage, a railroad construction foreman, was using an iron rod to tamp an explosive charge into a body of rock when a premature explosion of the charge blew the rod through his left jaw and out of the top of his head. Miraculously, Gage survived, but he reportedly underwent a dramatic personality change as a result of the destruction of one or both of his frontal lobes. The uniqueness of Gage's case (and the ethical impossibility of repeating the injury in other patients) makes it difficult to draw generalizations from it, but it does illustrate the core idea behind the lesion method. Further problems stem from the persistent distortions in published accounts of Gage (see the Wikipedia article Phineas Gage).

Techniques for Assessing Brain Anatomy / Physiological Function

CAT

CAT scanning was invented in 1972 by the British engineer Godfrey N. Hounsfield and the South African (later American) physicist Allan Cormack.

CAT (computed axial tomography) is an x-ray procedure that combines many x-ray images with the aid of a computer to generate cross-sectional views and, when needed, 3D images of the internal organs and structures of the human body. A large donut-shaped x-ray machine takes x-ray images at many different angles around the body. These images are processed by a computer to produce cross-sectional pictures of the body. Each picture shows an x-ray 'slice' of the body, which is recorded on film. This recorded image is called a tomogram.
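
How a computer can turn projections taken from different angles into a cross-section can be hinted at with a toy example. The sketch below uses unfiltered back projection with only two viewing angles on a 4 x 4 grid. This is a deliberate simplification chosen for illustration: real scanners use many angles and more refined reconstruction methods, and all names and numbers are hypothetical.

```python
def project(image):
    """Return the row sums (view from the side) and column sums (view from above)."""
    rows = [sum(r) for r in image]
    cols = [sum(c) for c in zip(*image)]
    return rows, cols


def back_project(rows, cols):
    """Smear each projection back across the grid and add the two results."""
    return [[r + c for c in cols] for r in rows]


# Hypothetical 'slice' with a single dense object at row 1, column 2.
slice_ = [
    [0, 0, 0, 0],
    [0, 0, 5, 0],
    [0, 0, 0, 0],
    [0, 0, 0, 0],
]
rows, cols = project(slice_)
estimate = back_project(rows, cols)
for line in estimate:
    print(line)
```

The estimate is largest where the two smeared projections cross, which is the position of the dense object. The streaks along row 1 and column 2 are the blur that additional angles and filtering reduce in practice.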

CAT scans are performed to examine, for example, the head, where traumatic injuries (such as blood clots or skull fractures), tumors, and infections can be identified. In the spine, the bony structure of the vertebrae can be accurately defined, as can the anatomy of the spinal cord. CAT scans are also extremely helpful in defining the anatomy of body organs, including the liver, gallbladder, pancreas, spleen, aorta, kidneys, uterus, and ovaries. The amount of radiation a person receives during a CAT scan is small, and in men and non-pregnant women it has not been shown to produce any adverse effects. Nevertheless, a CAT scan carries some risks. If the patient is pregnant, another type of examination may be recommended to reduce the possible risk of exposing the fetus to radiation. Because a CAT scan may require a contrast medium, there is also a slight risk of an allergic reaction to it, so patients with asthma or allergies are advised to avoid this type of scan. Certain medical conditions, such as diabetes, asthma, heart disease, kidney problems or thyroid conditions, further increase the risk of a reaction to the contrast medium.

MRI

Although CAT scanning was a breakthrough, in many cases it has been replaced by magnetic resonance imaging (MRI), a method of looking inside the body without using x-rays, harmful dyes or surgery. Instead, radio waves and a strong magnetic field are used to provide remarkably clear and detailed pictures of internal organs and tissues.

History and Development of MRI

MRI is based on a physical phenomenon called nuclear magnetic resonance (NMR), which was demonstrated in bulk matter in 1946 by Felix Bloch (working at Stanford University) and Edward Purcell (at Harvard University). In this resonance, a magnetic field and radio waves cause atomic nuclei to give off tiny radio signals. In 1971, Raymond Damadian, a medical doctor and research scientist, reported the basis for using magnetic resonance as a tool for medical diagnosis. In 1974 he was granted a patent, the world's first issued in the field of MRI. In 1977, Dr. Damadian completed the construction of the first "whole-body" MRI scanner, which he called the "Indomitable". The medical use of magnetic resonance imaging has developed rapidly. The first MRI equipment in healthcare became available at the beginning of the 1980s. In 2002, approximately 22,000 MRI scanners were in use worldwide, and more than 60 million MRI examinations were performed.

Common Uses of the MRI Procedure

Because of its detailed and clear pictures, MRI is widely used to diagnose sports-related injuries, especially those affecting the knee, elbow, shoulder, hip and wrist. Furthermore, MRI of the heart, aorta and blood vessels is a fast, non-invasive tool for diagnosing artery disease and heart problems. Doctors can even examine the size of the heart chambers and determine the extent of damage caused by heart disease or a heart attack. Organs such as the lungs, liver or spleen can also be examined in high detail with MRI. Because no ionizing radiation is involved, MRI is often the preferred diagnostic tool for examining the male and female reproductive systems, the pelvis and hips, and the bladder.

Risks

An undetected metal implant may be affected by the strong magnetic field. MRI is generally avoided in the first 12 weeks of pregnancy. Clinicians usually use other imaging methods, such as ultrasound, on pregnant women unless there is a strong medical reason to use MRI.

DT-MRI

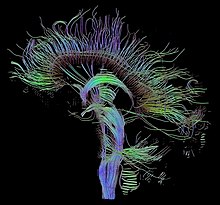

The MRI technique has been developed further: DT-MRI (diffusion tensor magnetic resonance imaging) measures the restricted diffusion of water in tissue and produces a three-dimensional image of it. History: The principle of using a magnetic field to measure diffusion was described as early as 1965 by the chemist Edward O. Stejskal and John E. Tanner. After the development of MRI, Michael Moseley introduced the principle into MR imaging in 1984, and further fundamental work was done by Denis Le Bihan in 1985. In 1994 the engineer Peter J. Basser published optimized mathematical models of an older diffusion-tensor model.[1] This model is commonly used today and is supported by all new MRI devices.

The DT-MRI technique takes advantage of the fact that the mobility of water molecules in brain tissue is restricted by obstacles such as cell membranes. In nerve fibers, water can move freely only along the axons, so measuring the diffusion reveals the course of the main nerve fibers. The data of a single diffusion tensor are too much to show in one image, so different techniques are used to visualize different aspects of the data:

- cross-section images
- tractography (reconstruction of the main nerve fibers)
- tensor glyphs (complete illustration of the diffusion-tensor information)
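
One common way to reduce a diffusion tensor to a single number per voxel is fractional anisotropy (FA). It is not named in the text above and is shown here only as an illustration. The sketch assumes that the three eigenvalues of the tensor (the diffusion strengths along its three principal axes) have already been computed; the example values are hypothetical.

```python
import math


def fractional_anisotropy(l1, l2, l3):
    """FA from the three eigenvalues of a diffusion tensor.

    0 means diffusion is equal in all directions; 1 means diffusion
    along a single axis only.
    """
    mean = (l1 + l2 + l3) / 3
    spread = (l1 - mean) ** 2 + (l2 - mean) ** 2 + (l3 - mean) ** 2
    total = l1 ** 2 + l2 ** 2 + l3 ** 2
    if total == 0:
        return 0.0
    return math.sqrt(1.5 * spread / total)


print(fractional_anisotropy(1.0, 1.0, 1.0))  # unrestricted diffusion, e.g. in fluid
print(fractional_anisotropy(1.7, 0.3, 0.3))  # diffusion mainly along one axis, as in a fiber bundle
```

High values mark voxels where water moves mainly along one direction, which is the situation described above for nerve fibers.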

In patients with certain diseases of the central nervous system, the diffusion pattern changes in a characteristic way, so these diseases can be detected with the diffusion-tensor technique. The main applications are the diagnosis of strokes and medical research on diseases involving changes of the white matter, such as Alzheimer's disease or multiple sclerosis. The disadvantages of DT-MRI are that it is far more time-consuming than ordinary MRI and that it produces large amounts of data, which have to be visualized by the methods listed above before they can be interpreted.

fMRI

fMRI (functional magnetic resonance imaging) is based on nuclear magnetic resonance (NMR). The method works as follows: all atomic nuclei with an odd number of protons or neutrons have a nuclear spin. A strong magnetic field is applied to the object under study, which aligns the spins parallel or antiparallel to it. The nuclei resonate with an oscillating magnetic field at a specific frequency, which can be computed from the type of nucleus and the field strength: the oscillating field disturbs the nuclei's usual spin orientation, which induces a voltage s(t), and afterwards the nuclei return to their equilibrium state. At this level different tissues can be identified, but there is no information about their location. For this reason the strength of the magnetic field is varied gradually across space, so that frequency corresponds to location, and Fourier analysis yields one-dimensional location information. By combining several such steps it is possible to obtain a 3D image.
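
The link between field strength, resonance frequency and location can be sketched numerically. The resonance (Larmor) frequency is proportional to the field strength; for hydrogen nuclei the constant is roughly 42.58 MHz per tesla. That value, the function names and the example field and gradient strengths are assumptions added for illustration and do not come from the text.

```python
GAMMA_BAR_H = 42.58e6  # Hz per tesla for hydrogen nuclei (approximate value)


def larmor_frequency(b_field_tesla, gamma_bar=GAMMA_BAR_H):
    """Resonance frequency in Hz of a nucleus in a field of the given strength."""
    return gamma_bar * b_field_tesla


def position_from_frequency(freq_hz, b0_tesla, gradient_t_per_m, gamma_bar=GAMMA_BAR_H):
    """Invert f = gamma_bar * (B0 + G * x) to recover the position x in metres."""
    return (freq_hz / gamma_bar - b0_tesla) / gradient_t_per_m


# Hypothetical scanner: 3 T main field with a gradient of 10 mT per metre.
b0, gradient = 3.0, 0.01
f = larmor_frequency(b0 + gradient * 0.05)  # nucleus located 5 cm from the centre
print(f)
print(position_from_frequency(f, b0, gradient))
```

Because the field differs slightly at every position along the gradient, each position answers at its own frequency, and sorting the measured signal by frequency (the Fourier step) sorts it by location.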

The central idea of fMRI is to look for areas with increased blood flow. Deoxygenated hemoglobin disturbs the local magnetic field, so changes in blood oxygenation show up in the image; this is called the blood oxygen level dependent (BOLD) signal. Higher BOLD signal intensities arise from decreases in the concentration of deoxygenated hemoglobin. An fMRI experiment usually lasts 1-2 hours. The subject lies in the magnet, a particular form of stimulation is set up, and MRI images of the subject's brain are taken. In the first step, a single high-resolution scan is taken. This is used later as a background for highlighting the brain areas that were activated by the stimulus. In the next step, a series of low-resolution scans is taken over time, for example 150 scans, one every 5 seconds. During some of these scans the stimulus is presented, and during others it is absent. The low-resolution brain images from the two conditions can be compared to see which parts of the brain were activated by the stimulus. The rest of the analysis is done with a series of tools that correct distortions in the images, remove the effect of the subject moving their head during the experiment, and compare the low-resolution images taken when the stimulus was off with those taken when it was on. The final statistical image is bright in those parts of the brain that were activated by the experiment. These activated areas are then shown as coloured blobs on top of the original high-resolution scan. This image can also be rendered in 3D.
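
The comparison of stimulus-on and stimulus-off scans can be sketched as a voxel-by-voxel statistical test. The example below is a minimal illustration, not the procedure of any particular analysis package: it uses Welch's t statistic, an arbitrary threshold of 3.0, and made-up intensities for two voxels over eight scans. Real analyses also correct for head motion, model the delayed blood response and adjust for the large number of voxels tested.

```python
from math import sqrt
from statistics import mean, stdev


def welch_t(on, off):
    """Welch's t statistic comparing two lists of signal values."""
    var_on, var_off = stdev(on) ** 2, stdev(off) ** 2
    return (mean(on) - mean(off)) / sqrt(var_on / len(on) + var_off / len(off))


def activation_map(scans, stimulus_on, threshold=3.0):
    """Return one boolean per voxel: True where 'on' exceeds 'off' reliably.

    scans: list of scans, each a list of voxel intensities.
    stimulus_on: one boolean per scan.
    """
    active = []
    for v in range(len(scans[0])):
        on = [s[v] for s, flag in zip(scans, stimulus_on) if flag]
        off = [s[v] for s, flag in zip(scans, stimulus_on) if not flag]
        active.append(welch_t(on, off) > threshold)
    return active


# Hypothetical data: voxel 0 responds to the stimulus, voxel 1 does not.
scans = [
    [100.0, 50.1], [103.1, 50.0], [99.8, 49.9], [103.0, 50.2],
    [100.1, 50.0], [102.9, 49.8], [100.2, 50.1], [103.2, 50.0],
]
stimulus_on = [False, True] * 4
print(activation_map(scans, stimulus_on))
```

The voxels flagged True correspond to the coloured blobs that are overlaid on the high-resolution scan.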

fMRI has moderately good spatial resolution but poor temporal resolution, since one fMRI frame takes about 2 seconds to acquire. Moreover, the response of the blood supply, which is the basis of fMRI, is slow relative to the electrical signals that define neuronal communication. Some research groups therefore work around this issue by combining fMRI with data collection techniques such as electroencephalography (EEG) or magnetoencephalography (MEG), which have much higher temporal resolution but rather poorer spatial resolution.

PET

Positron emission tomography, also called PET imaging or a PET scan, is a diagnostic examination that involves the acquisition of physiological images based on the detection of radiation from the emission of positrons. It is widely used to check for cancer recurrences. Positrons are tiny particles emitted by a radioactive substance administered to the patient. This radiopharmaceutical is injected into the patient, and its emissions are measured by a PET scanner, which consists of an array of detectors that surround the patient. When an emitted positron meets an electron, the two annihilate and give off gamma rays. Using these gamma ray signals, PET measures the amount of metabolic activity at a site in the body, and a computer reassembles the signals into images. PET's ability to measure metabolism is very useful in diagnosing Alzheimer's disease, Parkinson's disease, epilepsy and other neurological conditions, because it can show precisely where brain activity differs from the norm. It is also one of the most accurate methods available for localizing the areas of the brain that cause epileptic seizures and for determining whether surgery is a treatment option. PET is often used in conjunction with an MRI or CT scan through "fusion" to give a full three-dimensional view of an organ.

Electromagnetic Recording Methods

The methods mentioned so far examine the anatomy or the metabolic activity of the brain. In other cases, however, one wants to measure the electrical activity of the brain or the magnetic fields produced by that activity. The methods discussed so far do a great job of identifying where activity is occurring in the brain, but they do not measure brain activity on a millisecond-by-millisecond basis. This can be done with electromagnetic recording methods, for example single-cell recording or electroencephalography (EEG). These methods sample brain activity very rapidly and over a longer period of time, so they provide very good temporal resolution.

Single cell

In single-cell recording, an electrode is placed into a brain cell on which we want to focus our attention. The experimenter can then record the electrical output of the cell that is contacted by the exposed electrode tip. This is useful for studying the underlying ion currents that are responsible for the cell's resting potential. The researchers' goal is then to determine, for example, whether the cell responds to sensory information from only specific details of the world or from many stimuli. In this way one can determine whether the cell is sensitive to input in only one sensory modality or is multimodal. One can also find out which properties of a stimulus make cells in a given region fire. Furthermore, one can find out whether the animal's attention towards a certain stimulus influences the cell's response.

Single-cell studies are of limited use for studying the human brain, since the method is too invasive to be used routinely. Hence, it is most often used in animals. There are just a few cases in which single-cell recording is also applied in humans. People with epilepsy sometimes have the epileptic tissue surgically removed. About a week before surgery, electrodes are implanted into the brain, or they are placed on the surface of the brain during the surgery, to better isolate the source of seizure activity. In this way one can decrease the chance that useful tissue will be removed. Because of the limitations of this method in humans, other methods are used to measure electrical activity. These are discussed next.

EEG

One of the most famous techniques for studying brain activity is probably electroencephalography (EEG). Most people know it as a technique that is used clinically to detect aberrant activity, such as that seen in epilepsy and other disorders.

An electroencephalogram (EEG) is obtained by electroencephalography, which picks up the weak bioelectric signals produced by the human brain at the scalp, amplifies them and records them. EEG measures voltage fluctuations generated by the flow of ionic currents in the neurons of the brain. EEG is used in the diagnosis of brain-related diseases, but because it is susceptible to interference, it is usually used in combination with other methods.

EEG is most commonly used to diagnose epilepsy, because epilepsy can cause abnormal EEG readings. It is also used to diagnose sleep disorders, coma, cerebrovascular disease and brain death. EEG used to be a first-line method for diagnosing tumors, strokes, and other focal brain diseases, but this use has declined with the advent of high-resolution anatomical imaging techniques such as magnetic resonance imaging (MRI) and computed tomography (CT). Unlike CT and MRI, EEG has a high temporal resolution. Therefore, although the spatial resolution of EEG is limited, it is still a valuable tool for research and diagnostics, especially in studies that require time resolution in the millisecond range.

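| Name | Frequency (Hz) | About |
|---|---|---|
| Delta | about 0.5-4 | Slowest activity; occurs during sleep |
| Alpha | about 8-13 | Slower than beta; seen just before falling asleep |
| Beta | about 13-30 | Relatively fast; seen when a person is awake |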

Experimentally, this technique is used to show brain activity in certain psychological states, such as alertness or drowsiness. To measure brain activity, metal electrodes are placed on the scalp. Each electrode, also known as a lead, makes a recording of its own. In addition, a reference electrode is needed to provide a baseline against which the value of each recording electrode is compared. This electrode must not be placed over muscles, because muscle contractions are induced by electrical signals that would contaminate the baseline. It is usually placed on the mastoid bone, which is located behind the ear.

During an EEG recording, electrodes are placed and labelled as follows. Electrodes over the right hemisphere are labelled with even numbers, and those over the left hemisphere with odd numbers. Electrodes on the midline are labelled with a z. The capital letters stand for the location of the electrode (C = central, F = frontal, Fp = frontal pole, O = occipital, P = parietal and T = temporal).
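
The labelling rules can be written down as a short lookup. This is a minimal sketch with a hypothetical function name; it uses Fp as the abbreviation for the frontal pole, which is the form used in the international 10-20 system, and it assumes every label ends in a number or a z.

```python
REGIONS = {
    "Fp": "frontal pole", "F": "frontal", "C": "central",
    "P": "parietal", "O": "occipital", "T": "temporal",
}


def describe_electrode(label):
    """Split a label such as 'F3' or 'Cz' into (region, side)."""
    letters = label.rstrip("0123456789zZ")  # region letters at the front
    suffix = label[len(letters):]           # number or 'z' at the end
    region = REGIONS[letters]
    if suffix.lower() == "z":
        side = "midline"
    elif int(suffix) % 2 == 0:
        side = "right"  # even numbers: right hemisphere
    else:
        side = "left"   # odd numbers: left hemisphere
    return region, side


for name in ["Fp1", "F3", "Cz", "O2"]:
    print(name, describe_electrode(name))
```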

After each electrode has been placed at the right position, the electrical potential can be measured. This potential has a particular voltage and a particular frequency. Depending on a person's state, the frequency and form of the EEG signal differ. If a person is awake, beta activity can be recognized, which means that the frequency is relatively fast. Just before someone falls asleep one can observe alpha activity, which has a slower frequency. The slowest frequencies are called delta activity, which occurs during sleep. In patients who suffer from epilepsy, the EEG record shows an increase in the amplitude of firing. EEG can also be used to help answer experimental questions. In research on emotion, for example, depression is associated with greater alpha suppression over the right frontal areas than over the left ones. One can conclude from this that depression is accompanied by greater activation of right frontal regions than of left frontal regions.
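
Assigning a measured frequency to one of the named bands is a simple range lookup, sketched below. The cut-off values are conventional approximations that vary between sources, and the theta band is included only to close the gap between delta and alpha; neither comes from the text above.

```python
# (name, lower bound in Hz, upper bound in Hz); approximate, assumed values
BANDS = [
    ("delta", 0.5, 4.0),
    ("theta", 4.0, 8.0),
    ("alpha", 8.0, 13.0),
    ("beta", 13.0, 30.0),
]


def eeg_band(freq_hz):
    """Return the name of the band containing freq_hz, or None if outside all bands."""
    for name, low, high in BANDS:
        if low <= freq_hz < high:
            return name
    return None


for f in [2, 10, 20]:
    print(f, "Hz:", eeg_band(f))
```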

The disadvantage of EEG is that electrical conductivity, and therefore the measured electrical potential, varies widely from person to person and also over time. This is because the different tissues (brain matter, blood, bone etc.) conduct electrical signals differently. That is why it is sometimes not clear from exactly which brain region an electrical signal originates.

ERP

Whereas EEG recordings provide a continuous measure of brain activity, event-related potentials (ERPs) are recordings that are linked to the occurrence of an event, such as the presentation of a stimulus. When a stimulus is presented, the electrodes placed on a person's scalp record changes in the brain generated by the thousands of neurons under the electrodes. By measuring the brain's response to an event we can learn how different types of information are processed. Presenting the word "eat" or "bake", for example, causes a positive potential at about 200 ms. From this one can conclude that the brain processes these words about 200 ms after they are presented. This positive potential is followed by a negative one at about 400 ms, which is called the N400 (N stands for negative and 400 for the time). In general, the letter P or N denotes whether the deflection of the electrical signal is positive or negative, and the number represents, on average, how many milliseconds (or, in short form, hundreds of milliseconds) after stimulus presentation the component appears. ERPs are of special interest to researchers because different components of the response indicate different aspects of cognitive processing. For example, when the sentences "The cats won't eat" and "The cats won't bake" are presented, the N400 response to the word "eat" is smaller than the response to the word "bake". From this one can conclude that the brain needs about 400 ms to register information about a word's meaning. Furthermore, one can estimate where this activity occurs in the brain by looking at the scalp position of the electrodes that pick up the largest response.
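
In practice an ERP is usually obtained by cutting the continuous EEG into short segments that start at each event and averaging them, so that activity unrelated to the event tends to cancel out. The sketch below illustrates this with a made-up signal; the function name, the sample values and the perfectly cancelling "noise" are assumptions chosen to keep the example small.

```python
def average_erp(signal, event_samples, window):
    """Average the `window` samples that follow each event onset."""
    epochs = [signal[s:s + window] for s in event_samples
              if s + window <= len(signal)]
    return [sum(column) / len(epochs) for column in zip(*epochs)]


# Hypothetical response to each event: a positive, then a negative deflection.
response = [0.0, 2.0, -4.0, 0.0]

# Continuous recording: four events, each shifted by unrelated background activity.
signal = []
for background in [1.0, -1.0, 1.0, -1.0]:
    signal += [r + background for r in response]

erp = average_erp(signal, event_samples=[0, 4, 8, 12], window=4)
print(erp)
```

No single segment shows the response cleanly, but the average recovers it because the background activity differs from event to event while the response does not.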

MEG

Magnetoencephalography (MEG) is related to electroencephalography (EEG). However, instead of recording electrical potentials on the scalp, it uses the magnetic fields near the scalp to index brain activity. The magnetic field can be used to locate a dipole, because the pattern of field intensity around the head indicates where the dipole lies. These magnetic fields are recorded with devices called SQUIDs (superconducting quantum interference devices).

MEG is mainly used to localize the source of epileptic activity and to locate primary sensory cortices, so that these can be avoided during neurosurgical intervention. Furthermore, MEG can be used to learn more about the neurophysiology underlying psychiatric disorders such as schizophrenia. MEG can also be used to examine a variety of cognitive processes, such as language, object recognition and spatial processing, in people who are neurologically intact.

MEG has some advantages over EEG. First, magnetic fields are less distorted than electrical currents by conduction through brain tissue, cerebrospinal fluid, the skull and the scalp. Second, the strength of the magnetic field provides information about how deep within the brain the source is located. However, MEG also has some disadvantages. The magnetic field produced by the brain is about 100 million times weaker than that of the earth. For this reason, magnetically shielded rooms, built with materials such as aluminum, are required, which makes MEG more expensive. Another disadvantage is that MEG cannot detect the activity of cells with certain orientations within the brain. For example, magnetic fields created by cells whose long axes are radial to the surface will be invisible.

Techniques for Modulating Brain Activity

TMS

History: Transcranial magnetic stimulation (TMS) is an important technique for modulating brain activity. The first modern TMS device was developed by Anthony Barker in Sheffield in 1985 after 8 years of research. The field has developed rapidly since then, with many researchers using TMS to study a variety of brain functions. Today, researchers are also trying to develop clinical applications of TMS: because it has long-lasting effects on brain activity, it has been considered as a possible alternative to antidepressant medication.

Method: TMS applies the principle of electromagnetic induction to an isolated brain region. A wire-coil electromagnet is held against the fixed head of the subject. The coil induces small, localized, and reversible changes in the living brain tissue; the directly underlying parts of the motor cortex in particular can be affected. By altering the firing patterns of the neurons, the stimulation temporarily disables the affected brain area. Repetitive TMS (rTMS), as the name suggests, is the application of many short stimulation pulses at a high frequency, and it is more common than single-pulse TMS. The effects of this procedure can last up to weeks, and in most cases the method is used in combination with measuring methods in order to study the effects in detail.
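
Electromagnetic induction is summarized by Faraday's law, given here in its standard textbook form (the symbols are not taken from the text above): a changing magnetic flux $\Phi_B$ through a region induces an electromotive force $\mathcal{E}$.

```latex
\mathcal{E} = -\frac{d\Phi_B}{dt}
```

In TMS, a rapidly changing current in the coil produces a rapidly changing magnetic field. It is the rate of change of the field, not its strength alone, that determines the electric field induced in the underlying tissue, which is why the stimulation is delivered as brief pulses.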

Application: The TMS method gives more evidence about the function of certain brain areas than measuring methods on their own. It has been very helpful in mapping the motor cortex. For example, while rTMS is applied to the prefrontal cortex, the subject is not able to build up short-term memory. This indicates that the prefrontal cortex is directly involved in the process of short-term memory. Measuring methods on their own, by contrast, can only establish a correlation between brain activity and behaviour. Since early researchers were already aware that TMS could cause suppression of visual perception, speech arrest, and paraesthesias, TMS has been used to map specific brain functions in areas other than the motor cortex. Several groups have applied TMS to the study of visual information processing, language production, memory, attention, reaction time and even more subtle brain functions such as mood and emotion. However, the long-term effects of TMS on the brain have not yet been investigated properly. For this reason, experiments in humans have not yet targeted deeper brain regions such as the hypothalamus or the hippocampus.

Although the potential utility of TMS as a treatment tool in various neuropsychiatric disorders is rapidly increasing, its use in depression is the most extensively studied clinical application to date. For instance, in 1994 George and Wassermann hypothesized that intermittent stimulation of important prefrontal cortical brain regions might also cause downstream changes in neuronal function that would result in an antidepressant response. Here again, the method's effects are not yet clear enough for it to be used in clinical treatment. Although it is too early at this point to tell whether TMS has long-lasting therapeutic effects, this tool has clearly opened up new hopes for clinical exploration and treatment of various psychiatric conditions. Further work on understanding normal mental phenomena and how TMS affects them appears to be crucial for advancement.

A critically important step that will ultimately guide clinical parameters is to combine TMS with functional imaging in order to monitor the effects of TMS on the brain directly. Since TMS at different frequencies appears to have divergent effects on brain activity, TMS combined with functional brain imaging will help to delineate not only the behavioral neuropsychology of various psychiatric syndromes, but also some of the pathophysiologic circuits in the brain.

tDCS

Transcranial direct current stimulation (tDCS): The principle of tDCS is similar to that of TMS. Like TMS, it is a non-invasive and painless method of stimulation. The excitability of brain regions is modulated by the application of a weak electrical current.

History and development: It was observed early on that electrical current applied to the skull led to an alleviation of pain. Scribonius Largus, the court physician to the Roman emperor Claudius, found that the current released by the electric ray has positive effects on headaches. In the Middle Ages the same property of another fish, the electric catfish, was used to treat epilepsy. Around 1800, so-called galvanism (concerned with what is today called electrophysiology) emerged. Scientists like Giovanni Aldini experimented with electrical effects on the brain. A medical application of his findings was the treatment of melancholy. During the twentieth century, electrical stimulation was a controversial but nevertheless widespread method among neurologists and psychiatrists for the treatment of several kinds of mental disorders (e.g. electroconvulsive therapy, introduced by Ugo Cerletti).

Mechanism: tDCS works by fixing two electrodes on the skull. About 50 percent of the direct current applied to the skull reaches the brain. The current, supplied by a direct current battery, is usually around 1 to 2 mA. The modulation of activity in a brain region depends on the strength of the current, on the duration of stimulation and on the direction of current flow. While the former two mainly affect the strength of the modulation and how long it persists beyond the actual stimulation, the latter determines the kind of modulation. The direction of the current (anodal or cathodal) is defined by the polarity and position of the electrodes. Within tDCS two distinct kinds of stimulation exist. In anodal stimulation the anode is placed near the brain region to be stimulated; analogously, in cathodal stimulation the cathode is placed near the target region. In anodal stimulation the positive charge leads to depolarization of the membrane potential in the affected brain region, whereas in cathodal stimulation the negative charge leads to hyperpolarization. Brain activity is modulated in this way: anodal stimulation leads to generally higher activity in the stimulated brain region. This result can also be verified with MRI scans, where increased blood flow in the target region indicates successful anodal stimulation.
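
The two quantities described here, the share of current that reaches the brain and the effect of electrode polarity, can be combined in a few lines. This is only a bookkeeping sketch of the statements above: the function name is hypothetical, the 50 percent figure is the rough value given in the text, and the description of cathodal stimulation as lowering activity is an assumption inferred from the hyperpolarization it causes.

```python
def tdcs_effect(polarity, applied_ma, fraction_reaching_brain=0.5):
    """Return (current reaching the brain in mA, expected membrane effect)."""
    effects = {
        "anodal": "depolarization (higher activity)",
        "cathodal": "hyperpolarization (lower activity)",
    }
    return applied_ma * fraction_reaching_brain, effects[polarity]


print(tdcs_effect("anodal", 2.0))
print(tdcs_effect("cathodal", 1.0))
```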

Applications: From the description of the TMS method it should be obvious that there are various fields of application. They range from linking brain regions to cognitive functions to the treatment of mental disorders. Compared to TMS, tDCS has the advantage that it can not only decrease brain activity but also increase the activity of a target brain region. The method could therefore provide an even more suitable treatment for mental disorders such as depression. tDCS has also already proven helpful for stroke patients by improving their motor skills.

Behavioural Methods

Besides methods for measuring the brain’s physiology and anatomy, it is also important to have techniques for analyzing behaviour in order to gain better insight into cognition. In contrast to neuroscientific methods, which concentrate on the neuronal activity of brain regions, behavioural methods focus on the overt behaviour of a test person. This can be done with well-defined behavioural methods (e.g. eye tracking), test batteries (e.g. IQ tests) or measurements designed to answer specific questions about human behaviour. Furthermore, behavioural methods are often used in combination with the neuroscientific methods mentioned above. They can be useful whenever there is an overt reaction to a stimulus (e.g. a picture). Another goal of behavioural testing is to examine how damage to the central nervous system influences cognitive abilities.

A Concept of a behavioural test

Behavioural tests are performed to answer specific questions about human behaviour. To answer such a question, a test strategy has to be developed. First, the test has to be designed carefully so that the measurements provide an accurate answer to the initial question. How can the test be conducted so that confounding variables are minimal and the focus really is on the problem? Once an appropriate test arrangement has been found, the next step is to define the test variables. The test is then conducted, and possibly repeated, until a sufficient amount of data has been collected. Next, the resulting data are evaluated with suitable statistical methods. If the test reveals a significant result, further questions may arise about the neuronal activity underlying the behaviour. Neuroscientific methods are then useful for investigating the correlated brain activity. Methods that have proved to provide good evidence on a recurring question about the cognitive abilities of subjects can be brought together in a test battery.

Example: Question: Does a noisy surrounding affect the ability to solve a certain problem?

Possible test design: Have half of the subjects solve a task in a silent environment while the other half solve the same task in a noisy environment. In this example, confounding variables might be differences in the cognitive abilities of the participants. Test variables could include the time needed to solve the problem and the loudness of the noise. If statistical evaluation shows a significant effect, a probable further question is: How does noise affect brain activity on a neuronal level?
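The statistical evaluation step for such a two-group design could be sketched as follows. This is only an illustration: the text does not prescribe a particular test, so Welch's t-test is used here as one common choice for comparing two independent groups, and the solution times are invented for the example rather than measured data.

```python
import math
import statistics


def welch_t(sample_a, sample_b):
    """Return Welch's t statistic and its approximate degrees of freedom."""
    mean_a, mean_b = statistics.mean(sample_a), statistics.mean(sample_b)
    # Squared standard error of each group mean (sample variance / n).
    se_a = statistics.variance(sample_a) / len(sample_a)
    se_b = statistics.variance(sample_b) / len(sample_b)
    t = (mean_a - mean_b) / math.sqrt(se_a + se_b)
    # Welch-Satterthwaite approximation for the degrees of freedom.
    df = (se_a + se_b) ** 2 / (
        se_a ** 2 / (len(sample_a) - 1) + se_b ** 2 / (len(sample_b) - 1)
    )
    return t, df


# Invented solution times in seconds, for illustration only (not real data).
silent_times = [41.0, 38.5, 44.2, 39.9, 42.3, 40.1]
noisy_times = [47.8, 45.1, 50.3, 44.6, 49.0, 46.7]

t_stat, dof = welch_t(noisy_times, silent_times)
print(f"t = {t_stat:.2f}, df = {dof:.1f}")
```

A large positive t value would suggest that the noisy group needed more time; whether it counts as significant depends on the chosen significance level and the corresponding critical value for the computed degrees of freedom.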

If you are interested in taking part in a behavioural test yourself, visit the socialpsychology.org website.[2]

Test batteries

A neuropsychological assessment uses test batteries that give an overview of a person’s cognitive strengths and weaknesses by analyzing various cognitive abilities. A neuropsychological test battery is used by a neuropsychologist to assess brain dysfunctions that can arise from developmental, neurological or psychiatric issues. Such batteries can appraise various mental functions and the overall intelligence of a person.

Firstly, there are test batteries designed to assess whether or not a person suffers from brain damage. They generally work well in discriminating people with brain damage from neurologically intact individuals, but less well in discriminating them from people with psychiatric disorders. The most popular of these, the Halstead-Reitan battery, assesses abilities ranging from basic sensory processing to complex reasoning. Furthermore, the Halstead-Reitan battery provides information on the cause of the damage, the brain areas that were harmed, and the stage the damage has reached. Such information is valuable in developing a rehabilitation program. Another test battery, the Luria-Nebraska battery, is twice as fast to administer as the Halstead-Reitan. Its subtests are ordered according to twelve content scales (e.g. motor functions, reading, memory). These two test batteries do not focus only on the absolute level of performance, but also look at the qualitative manner of performance. This allows for a more comprehensive understanding of the cognitive impairment.

Another type of test battery, the so-called IQ test, aims to measure the overall cognitive performance of an individual. The most commonly used tests for estimating intelligence are the Wechsler family of intelligence tests. Age-appropriate versions exist for small children from the age of 2 years and 6 months, for school-aged children, and for adults. For example, the Wechsler Intelligence Scale for Children, fifth edition (WISC-V) measures various cognitive abilities in children between 6 and 16 years of age. The test consists of multiple subtests that form five main indexes of cognitive performance. These main constructs are verbal reasoning skills, inductive reasoning skills, visuo-spatial processing, processing speed and working memory. Performance is analyzed in two ways: in comparison to a normative sample of similarly aged peers, and within the test subject, to assess personal strengths and weaknesses.
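The two kinds of comparison can be illustrated with a small sketch. It assumes the convention that Wechsler composite scores are scaled to a mean of 100 and a standard deviation of 15; the linear conversion, the function names and the example numbers are simplifications made up for illustration, since published tests use age-specific norm tables rather than a single formula.

```python
def standard_score(raw, norm_mean, norm_sd, scale_mean=100.0, scale_sd=15.0):
    """Express a raw score relative to a normative sample (between-person view)."""
    z = (raw - norm_mean) / norm_sd  # distance from the norm mean in SD units
    return scale_mean + scale_sd * z


def relative_profile(index_scores):
    """Compare each index with the person's own average (within-person view)."""
    personal_mean = sum(index_scores.values()) / len(index_scores)
    return {name: score - personal_mean for name, score in index_scores.items()}


# Hypothetical values, not taken from any real norm table.
print(standard_score(raw=60, norm_mean=50, norm_sd=10))
print(relative_profile({"verbal": 112.0, "working_memory": 94.0, "processing_speed": 100.0}))
```

Positive values in the profile mark relative personal strengths and negative values relative weaknesses, independently of how the person compares with peers.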

The Eye Tracking Procedure

Another important procedure for analyzing behavior and cognition is eye tracking. This is the process of measuring either where we are looking (the point of gaze) or the motion of an eye relative to the head. There are different techniques for measuring the movement of the eyes, and the instrument that does the tracking is called the tracker. The first non-intrusive tracker was invented by Guy Thomas Buswell.

Eye tracking research has a long history, going back to the 1800s. In 1879 Louis Emile Javal noticed that reading does not involve a smooth sweep of the eyes along the text but rather a series of short stops, which are called fixations. This observation was one of the first attempts to examine where the eyes direct their interest. The book that Alfred L. Yarbus published in 1967, following important eye tracking research, is one of the most quoted eye tracking publications ever.

The eye tracking procedure itself is not very complicated. Video-based eye trackers are frequently used: a camera focuses on one or both eyes and records their movements while the viewer looks at a stimulus. Most modern eye trackers use infrared or near-infrared non-collimated light to create corneal reflections and use contrast to locate the center of the pupil.

There are two general types of eye tracking techniques, Bright Pupil and Dark Pupil. The Bright Pupil effect is similar to the red-eye effect and appears when the illumination source is coaxial with the optical path; when the source is offset from the optical path, the pupil appears dark (Dark Pupil). Bright Pupil tracking creates strong contrast between the iris and the pupil, which allows tracking in lighting conditions from dark to very bright, but it is not effective for outdoor tracking.

Eye tracking setups also differ. Some are head-mounted, some require the head to be stable, and some automatically track the head during motion. Most of them sample at a rate of at least 30 Hz. To capture the details of rapid eye movements, for example during reading, the tracker must run at 240, 350 or even 1000-1250 Hz.

Eye movements are divided into fixations and saccades. A fixation occurs when the gaze pauses in a certain position, and a saccade when it moves to another position. The resulting series of fixations and saccades is called a scan path. Interestingly, most information from the eye is received during a fixation and not during a saccade. A fixation lasts about 200 ms when reading text and about 350 ms when viewing a scene, and preparing a saccade towards a new goal takes about 200 ms. Scan paths are used in analyzing cognitive intent, interest and salience.
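How a recording is turned into a scan path can be sketched in a few lines. The text does not say which algorithm trackers use, so the sketch below uses a simple velocity threshold, one common approach: gaps between samples in which the gaze moves faster than a threshold are labelled as saccades, all others as fixations. The coordinates (assumed to be in degrees of visual angle), the 250 Hz sampling rate and the 30 degrees per second threshold are illustrative assumptions.

```python
import math


def classify_samples(gaze, sampling_rate_hz, velocity_threshold=30.0):
    """Label each gap between consecutive gaze samples as fixation or saccade."""
    dt = 1.0 / sampling_rate_hz  # time between two samples in seconds
    labels = []
    for (x0, y0), (x1, y1) in zip(gaze, gaze[1:]):
        velocity = math.hypot(x1 - x0, y1 - y0) / dt  # degrees per second
        labels.append("saccade" if velocity > velocity_threshold else "fixation")
    return labels


def scan_path(labels):
    """Collapse consecutive identical labels into (event, interval_count) runs."""
    runs = []
    for label in labels:
        if runs and runs[-1][0] == label:
            runs[-1][1] += 1
        else:
            runs.append([label, 1])
    return [tuple(run) for run in runs]


# Made-up gaze positions: a pause, a jump to the right, and another pause.
gaze = [(1.0, 1.0), (1.01, 1.0), (1.0, 1.01), (3.0, 1.0),
        (5.0, 1.0), (5.01, 1.0), (5.0, 1.0)]
labels = classify_samples(gaze, sampling_rate_hz=250)
print(scan_path(labels))
```

The sketch also shows why the sampling rate matters: at a low rate a short saccade may fall between two samples and its details are lost.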

Eye tracking has a wide range of applications. It is used to study a variety of cognitive processes, mostly visual perception and language processing, and it is also used in human-computer interaction, marketing and medical research. In recent years eye tracking has generated a great deal of interest in the commercial sector. Commercial eye tracking studies present a target stimulus to consumers while a tracker records their eye movements. Some of the latest applications are in automotive design: eye tracking can be used to analyze a driver’s level of attentiveness while driving and to help prevent drowsiness from causing accidents.

Modeling Brain-Behaviour

Another major method used in cognitive neuroscience is the use of neural networks (computer modelling techniques) to simulate the action of the brain and its processes. These models help researchers to test theories of neuropsychological functioning and to derive principles concerning brain-behaviour relationships.

A variety of computational models can be used to simulate mental functions in humans. The basic component of most such models is a “unit”, which can be thought of as showing neuron-like behaviour. A unit receives inputs from other units, and these inputs are summed to produce a net input. The net input to a unit is then transformed into that unit’s output, usually by means of a sigmoid function. The units are connected together to form layers. Most models consist of an input layer, an output layer and a “hidden” layer, as shown in the figure on the right. The input layer simulates the taking up of information from the outside world, the output layer simulates the response of the system, and the “hidden” layer is responsible for the transformations that are necessary to perform the computation under investigation. The units of different layers are connected via connection weights, which express the degree of influence that a unit in one layer has on a unit in another.
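The computation performed by such units can be written down compactly. The following is a minimal sketch under assumptions: the logistic function is used as the sigmoid, each unit gets an optional bias term that the description above does not mention, and all function names and weight values are made up for illustration.

```python
import math


def sigmoid(x):
    """Logistic function: squashes any net input into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def unit_output(inputs, weights, bias=0.0):
    """Sum the weighted inputs to a net input, then apply the sigmoid."""
    net_input = sum(i * w for i, w in zip(inputs, weights)) + bias
    return sigmoid(net_input)


def layer_output(inputs, weight_rows, biases):
    """Compute the outputs of all units in one layer (one weight row per unit)."""
    return [unit_output(inputs, row, b) for row, b in zip(weight_rows, biases)]


def forward(inputs, hidden_weights, hidden_biases, output_weights, output_biases):
    """Pass the inputs through the hidden layer and then the output layer."""
    hidden = layer_output(inputs, hidden_weights, hidden_biases)
    return layer_output(hidden, output_weights, output_biases)


# Two input values, two hidden units, one output unit; arbitrary example weights.
response = forward(
    inputs=[1.0, 0.0],
    hidden_weights=[[0.5, -0.4], [0.3, 0.8]],
    hidden_biases=[0.0, 0.0],
    output_weights=[[1.2, -0.7]],
    output_biases=[0.0],
)
print(response)
```

Each row of weights belongs to one receiving unit, so changing a single number changes how strongly one unit influences another.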

The most interesting and important property of these models is that they are able to "learn" without being given specific rules. This ability to “learn” can be compared to the human ability to learn, for example, one's native language, where nobody spells out “the rules” to the learner. The computational models learn by extracting the regularities in relationships through repeated exposure. This exposure takes place during “training”, in which input patterns are presented over and over again. Learning within the system results from adjusting the connection weights between units mentioned above. In other words, learning occurs through changes in the interrelationships between units, which is thought to be similar to what happens in the nervous system.
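Learning by weight adjustment can be demonstrated with a single unit. The text does not name a learning rule, so this sketch assumes the delta rule (gradient descent on the squared error of a sigmoid unit), one common choice. The task (logical OR), the learning rate and the number of training passes are arbitrary values chosen for illustration.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def predict(inputs, weights, bias):
    """Output of one sigmoid unit for the given inputs."""
    return sigmoid(sum(i * w for i, w in zip(inputs, weights)) + bias)


def train_unit(patterns, epochs=5000, learning_rate=0.5):
    """Present every pattern repeatedly and nudge the weights after each one."""
    weights = [0.0] * len(patterns[0][0])
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in patterns:
            output = predict(inputs, weights, bias)
            # Delta rule: the error scaled by the slope of the sigmoid.
            delta = (target - output) * output * (1.0 - output)
            weights = [w + learning_rate * delta * i for w, i in zip(weights, inputs)]
            bias += learning_rate * delta
    return weights, bias


# Input patterns with the desired response; no rule for OR is ever stated.
or_patterns = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]
weights, bias = train_unit(or_patterns)
for inputs, target in or_patterns:
    print(inputs, target, round(predict(inputs, weights, bias), 2))
```

The unit is never told what OR means; the regularity is picked up only because the same input patterns and desired responses are presented again and again.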

References

- [1] Filler, AG: The history, development, and impact of computed imaging in neurological diagnosis and neurosurgery: CT, MRI, DTI. Nature Precedings. DOI: 10.1038/npre.2009.3267.4.

- [2] Socialpsychology.org

- Ward, Jamie (2006) The Student's Guide to Cognitive Neuroscience New York: Psychology Press

- Banich, Marie T. (2004). Cognitive Neuroscience and Neuropsychology. Houghton Mifflin Company. ISBN 0618122109

- Gazzaniga, Michael S. (2000). Cognitive Neuroscience. Blackwell Publishers. ISBN 0631216596

- 27.06.07 Sparknotes.com

- (1) 4 April 2001 / Accepted: 12 July 2002 / Published: 26 June 2003 Springer-Verlag 2003. Fumiko Maeda • Alvaro Pascual-Leone. Transcranial magnetic stimulation: studying motor neurophysiology of psychiatric disorders

- (2) a report by Drs Risto J Ilmoniemi and Jari Karhu Director, BioMag Laboratory, Helsinki University Central Hospital, and Managing Director, Nexstim Ltd

- (3) Repetitive Transcranial Magnetic Stimulation as Treatment of Poststroke Depression: A Preliminary Study Ricardo E. Jorge, Robert G. Robinson, Amane Tateno, Kenji Narushima, Laura Acion, David Moser, Stephan Arndt, and Eran Chemerinski

- Moates, Danny R. An Introduction to cognitive psychology. B:HRH 4229-724 0