Advanced Robotics Book

This Wikibook was mostly written around 2012 and may be somewhat out of date, though core concepts should hold true.

The Future of Robots

In today’s world, the technology used for robots is constantly changing and improving. In the past, robots were merely a dream, a “what if” about what the future might hold in terms of technology. Every day, advances bring that dream closer to reality. Humans keep gaining experience because they refuse to be confined by the restrictions placed upon them. If one tells a person that he or she cannot do something, that person will strive to surpass what others think cannot be done. With planes, helicopters, and other aerial inventions, people have escaped the confines of the ground. With rocket ships, humans have shown that they can even leave the planet entirely and look down upon their home world, something that very few have achieved. This kind of progress pushes the mind to want to create something more, because if we can achieve something like space travel, we can do so much more.

Several kinds of robots already exist today: some are used for cleaning, some can dance, and some can be worn by a person almost like a suit of armor. The Roomba is a small, circular robot designed to clean the floors of a house like a vacuum cleaner. Built by a company called iRobot, the Roomba moves around the house automatically, sucking up dirt and grime from floors and carpets, including edges and corners. It is also designed not to get tangled up in chair or table legs. There are also robots, like the Toyota trumpet-playing robot, that can imitate the movement of human lips well enough to play songs on actual instruments, such as trumpets.

With such advancements made every day, the future of robotics is heading toward building full humanoid robots with human-like skeletal and muscular systems. Scientists are also working on building robots using neural networks, which are modeled after the human nervous system. This gives a robot a “brain” that allows it to learn as it goes about its tasks. If a robot runs into a desk, for example, its neural network can teach it not to move in that direction again, so that it avoids running into the desk a second time. Scientists are trying to implement this in android designs, but it is proving difficult. One problem is getting androids to move around on two legs as humans do, because robots on legs are more difficult to get moving than robots on wheels; they have to “learn” to balance themselves. With the rapid pace at which technology is growing, scientists may soon find a way to make these robots more like us.

Programming Concepts

Robot programming consists of the coded commands that tell the robot when to move, where to go, and how fast to move, among many other things. A written program is made up of the instructions that control a robot's actions, and it supplies information about the necessary tasks. A user program is composed of a series of instructions that describe the desired behavior. Programming robots can be a complicated and challenging process. Since people are now trying to create robots that save money and make certain jobs easier, creating user-friendly, easy-to-learn programming languages is essential.

Robot programming is used to manipulate physical objects in the real world while representing some data in a model that is not necessarily part of the real world. Commands based on that model cause robots to alter the conditions of the real world as well as the world model itself. One of the most important concepts in programming a robot is to move the robot the way a human might move; a robot should be able to move in all directions. A more advanced concept is the use of sensors, such as touch, ultrasonic, or light sensors, which allow a robot to interact with the real world around it. Because of these, many robots today have monitors containing built-in programs that communicate with the robot control system and other applications. A monitor can read a specific sensor and then modify the robot’s actions, for example by having the robot follow a particular path or avoid a person who is walking toward it.

In an embedded system, the operating system is often called firmware: software stored in nonvolatile flash memory that provides control over the hardware of the embedded system. The firmware allows a user to write a program that is compiled into a compact file, known as bytecode, suitable for execution on the robot. Bytecode cannot be executed directly; instead, the operating system contains an application that looks at each bytecode and converts it to the appropriate functionality. Based on movement and sensors, a robot can now also use cognition to plan its next move. Depending on how a program is coded, a robot can “see” what is coming next and execute a line or lines of code based on what is going on around it. If the robot senses that it has just run into a chair, say, it can plan to back up and go around the chair. These concepts are what make a robot act as a human would in the real world.
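The way firmware interprets bytecode, as described above, can be illustrated with a minimal Python sketch. The opcodes `FORWARD`, `TURN`, and `STOP` and the function `run_bytecode` are hypothetical names invented for this example; real firmware defines its own instruction set and drives motors rather than building a list of strings.

```python
# Hypothetical opcodes; a real firmware defines its own instruction set.
FORWARD, TURN, STOP = 0, 1, 2


def run_bytecode(program):
    """Interpret (opcode, argument) pairs and return a log of the actions taken."""
    log = []
    for opcode, arg in program:
        if opcode == FORWARD:
            log.append(f"drive forward {arg} cm")
        elif opcode == TURN:
            log.append(f"turn {arg} degrees")
        elif opcode == STOP:
            log.append("stop motors")
            break  # nothing after STOP is executed
        else:
            raise ValueError(f"unknown opcode: {opcode}")
    return log


# A compiled "program" is just a compact sequence of numbers.
program = [(FORWARD, 30), (TURN, 90), (FORWARD, 10), (STOP, 0)]
actions = run_bytecode(program)
```

Each pass through the loop plays the role of the firmware application that looks at one bytecode and converts it to the matching functionality.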

Robot Control

Robot control is the study of how robots are controlled. It refers to the way the robot senses and takes action and to how the sensors and other parts of the robot work together efficiently. There is an almost infinite number of ways to write a control program for a robot, but in practice they fall into a small number of categories. Four basic ways of controlling a robot are in use today: deliberative control, reactive control, hybrid control, and behavior-based control. With deliberative control, the robot thinks hard first and then acts. With reactive control, the robot does not think; it just reacts. With hybrid control, the robot thinks and acts independently, in parallel. Finally, with behavior-based control, the robot thinks the way it acts.

These approaches are worth distinguishing because every robot has its own strengths and weaknesses, so no single approach is best for controlling all robots. There are trade-offs when it comes to controlling robots. Thinking can be slow, but reaction must be fast. Thinking allows a robot to look ahead and plan ahead so that bad actions can be avoided. There is a drawback to thinking, however: thinking too long can be dangerous. A robot that is preoccupied with thinking is not watching its surroundings, so it could be run over or fall off a cliff.

It is because of these trade-offs that robots act differently from each other. Some are made to think little and act little, while others do not think at all. These decisions are made when determining what purpose the robot will have and the kind of environment it will be placed in. If the purpose of the robot is to react and move quickly, there will be almost no time for thinking, and it will not be programmed to think in that manner. Two examples of robots that have to react and move quickly are soccer-playing robots and car robots. The soccer-playing robot has to move around its environment quickly in order to get the ball and try to score a goal. The car robot has to travel at a certain speed and react quickly in order not to hit anything that may enter its path.

On the other hand, robots in an environment that is not always changing have the time and luxury to think and plan ahead. Examples are robots that are made to play chess, robots that build objects, and robots that are given the task of watching a warehouse. The chess-playing robot, for example, needs to think and plan ahead because chess is a mind game; reacting quickly without taking every possible detail into consideration would result in a quick failure on the robot's part.
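To make the idea of reacting without thinking concrete, a purely reactive controller might be sketched in Python as follows. The function name `reactive_step`, the action names, and the 20 cm threshold are assumptions made for this illustration and do not come from any particular robot's API.

```python
def reactive_step(bumper_pressed, distance_cm):
    """Map the current sensor readings straight to an action, with no planning."""
    if bumper_pressed:
        return "reverse"      # already touching something: back away
    if distance_cm < 20:      # hypothetical safety threshold
        return "turn_left"    # something is close: steer away from it
    return "forward"          # nothing in the way: keep going


# Each call looks only at the present readings; nothing is remembered or planned.
readings = [(False, 150), (False, 12), (True, 3)]
actions = [reactive_step(bumper, distance) for bumper, distance in readings]
```

A deliberative controller would instead build a plan before moving, which takes longer but allows the robot to look ahead.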

Robot Hardware

Since robots are meant to work in the real world, they need some sort of hardware to serve as their "body," just as humans need their body and heart to function. The hardware of a robot is anything physical used to help the robot operate in a certain way. The hardware needed to build a robot is diverse and depends on the type of robot being built. If the robot is an automated factory worker, the hardware could involve all sorts of sophisticated, heavy industrial tools. If, on the other hand, one is building a humanoid or space exploration robot, the hardware might include muscle wire or sensitive lenses, for example.

Robots use many different types of hardware to function in a specific way. Robots use motors in order to move. Some may have only one motor, which allows them to move only forward and backward at a particular speed. Robots with more than one motor have the added capability to make turns. By running one motor faster than another, the robot turns in the direction of the slower-moving motor. A programmer can program the motors to run at different speeds, which gives the robot more capacity for work.
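The turning behavior described above can be sketched with the standard differential-drive model for a robot with one motor on each side. This is a minimal Python sketch; the function name `differential_drive_step`, the units, and the example values are assumptions chosen for illustration.

```python
import math


def differential_drive_step(x, y, heading, v_left, v_right, wheel_base, dt):
    """Advance the robot pose by one small time step.

    Speeds are in m/s, wheel_base in m, heading in radians
    (a positive change in heading is a counter-clockwise, i.e. left, turn).
    """
    v = (v_left + v_right) / 2.0               # forward speed of the robot centre
    omega = (v_right - v_left) / wheel_base    # turning rate from the speed difference
    x += v * math.cos(heading) * dt
    y += v * math.sin(heading) * dt
    heading += omega * dt
    return x, y, heading


# The left motor is slower, so the heading increases: the robot turns left,
# toward the slower-moving motor.
turning = differential_drive_step(0.0, 0.0, 0.0, 0.1, 0.2, 0.1, 1.0)
# Equal speeds give straight-line motion with no change in heading.
straight = differential_drive_step(0.0, 0.0, 0.0, 0.2, 0.2, 0.1, 1.0)
```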

Robots also have driving mechanisms, which include gears, chains, pulleys, belts, and gearboxes. The central purpose of gears and chains is to pass rotary movement from one place to another; in the process, the speed or the direction of the movement can also be changed. These driving mechanisms can help the robot transfer pressure from one place to another to keep the weight of the robot uniform. They can also help the motors move smoothly. Pulleys and belts have the same function as gears and chains; a pulley is shaped like a wheel with a groove around its edge.
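How a driving mechanism changes speed and direction can be shown with a small calculation. The Python sketch below assumes a single pair of toothed wheels; the function name `driven_speed_rpm` and the `reverses` flag are hypothetical, and the tooth counts are example values only.

```python
def driven_speed_rpm(driver_rpm, driver_teeth, driven_teeth, reverses=True):
    """Speed of the driven wheel; a negative value means opposite rotation."""
    speed = driver_rpm * driver_teeth / driven_teeth
    # Two meshing gears turn in opposite directions; a chain or belt does not reverse.
    return -speed if reverses else speed


# An 8-tooth gear at 120 rpm driving a 24-tooth gear: slower and reversed.
geared = driven_speed_rpm(120, 8, 24)
# The same ratio on a chain or belt keeps the direction of rotation.
chained = driven_speed_rpm(120, 8, 24, reverses=False)
```

A small wheel driving a large one trades speed for turning force, which is why gearboxes are common between a fast motor and a slow wheel.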

In order to build a more intelligent robot, one can also install sensors to give a robot more life. For example, a robot can have a touch sensor that, when programmed, allows the robot to back away when it runs into an object. Robots can also use sound sensors to hear sounds and respond to them appropriately. Light sensors allow robots to perform some action or task when they enter a space that has a certain amount of light. A simple example is programming a robot to follow a line; by sensing the darkness of the line, the robot can follow it without straying from the path. Robots can also use ultrasonic sensors to sense an object or a person in front of them without having to touch or run into it. This may be especially helpful with fragile robots; being able to avoid touching objects may keep pressure off delicate areas of the build.
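The line-following example above can be sketched as a simple proportional controller. This Python sketch assumes a light sensor that reports 0 (dark) to 100 (bright), a robot tracking the left edge of a dark line, and motor powers on an arbitrary scale; the function name `line_follow_speeds` and all default values are illustrative assumptions.

```python
def line_follow_speeds(light_reading, target=50, base_speed=40, gain=0.5):
    """Return (left, right) motor powers that steer back toward the line edge."""
    error = light_reading - target   # positive: too bright, drifted off the line
    correction = gain * error
    # Speeding up the left motor and slowing the right turns the robot right,
    # back toward the dark line (assuming the line lies to the right).
    return base_speed + correction, base_speed - correction


on_edge = line_follow_speeds(50)      # exactly on the edge: drive straight
too_bright = line_follow_speeds(70)   # drifted onto the bright floor: steer right
```

Which motor should speed up depends on which edge of the line the robot follows, so the signs may need to be swapped on a real build.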

Robots also need some sort of power supply. In a LEGO Mindstorms NXT robot, the power supply is the brick. It holds the batteries as well as all the ports used to plug in motors and sensors. Without the brick, a robot would not be able to move or function in any way. The brick is the main source of energy for the robot.

Robots can also be used along with cameras. Attaching a camera or a microphone to a robot allows a person to move the robot and see where it is going or hear what is going on in the surrounding area. These extra hardware packages can make the robot smarter and more creative.

- An aquatic robot.
- A small rover style robot.
- A military robot.
- A rose clipping robot.
- A humanoid shaped robot.
- An informational robot at an airport.

Mathematics of Robot Control

The mathematics of robot control is what goes on in the brick of a robot that allows it to move and function. A term commonly used when discussing the mathematics of controlling a robot is kinematics. Kinematics is the branch of mechanics that describes the motion of objects and systems; for robots, it describes how the robot moves. A robot can be modeled as a kinematic chain, a system of bodies linked together by mechanical joints that allows an object to carry out motion. One example is a crane; its kinematics describe how it moves up and down and opens and closes in order to scoop up or grab hold of objects.

Because this is the mathematics of robot control, there is science involved that explains how a robot is able to move using its joints or mechanics and what program or code gives it the power to move. In kinematics, the position of a point is key when it comes to movement. To identify the position of a point, one first has to identify three other things: the origin, which is also called the reference point; the distance, or how far away the point is from the origin; and the direction from the reference point.

In mathematics for robotics, there are also forward and inverse kinematics. Forward kinematics also goes by the name “direct kinematics.” In forward kinematics, the only things given are the angle of each joint and the length of each link, and what one has to calculate is the position of any point in the robot’s work volume. In inverse kinematics, one does the opposite: one is given the length of each link and the position of the point in the work volume, and one has to calculate the angle of each joint.
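Forward and inverse kinematics can be illustrated with a planar arm that has two links and two joints. The Python sketch below is a minimal example under that assumption; the function names and the choice of returning only one of the two possible inverse solutions are decisions made for this illustration.

```python
import math


def forward_kinematics(theta1, theta2, l1, l2):
    """Given joint angles (radians) and link lengths, return the tip position (x, y)."""
    x = l1 * math.cos(theta1) + l2 * math.cos(theta1 + theta2)
    y = l1 * math.sin(theta1) + l2 * math.sin(theta1 + theta2)
    return x, y


def inverse_kinematics(x, y, l1, l2):
    """Given a tip position and link lengths, return one pair of joint angles."""
    cos_theta2 = (x * x + y * y - l1 * l1 - l2 * l2) / (2 * l1 * l2)
    if not -1.0 <= cos_theta2 <= 1.0:
        raise ValueError("target is outside the work volume")
    theta2 = math.acos(cos_theta2)  # picks one of the two mirror-image solutions
    theta1 = math.atan2(y, x) - math.atan2(l2 * math.sin(theta2),
                                           l1 + l2 * math.cos(theta2))
    return theta1, theta2


# Round trip: solve for the angles that reach (1, 1), then confirm the position.
theta1, theta2 = inverse_kinematics(1.0, 1.0, 1.0, 1.0)
x, y = forward_kinematics(theta1, theta2, 1.0, 1.0)
```

Forward kinematics always has exactly one answer, while inverse kinematics may have several answers or none, which is part of what makes it the harder problem.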

Robot Programming Languages

Many languages are available for programming a robot, and the choice depends on the knowledge of the programmer. Different robot kits or models may work best with certain programming languages, depending on the difficulty of the program and the build of the robot. Depending on whether a person knows C, C++, Java, or something else, certain languages may be easier to pick up.

ROBOTC is a programming language based on C, and it works well with NXT robots in particular. It was created by the Robotics Academy at Carnegie Mellon University. ROBOTC is easy to learn, and it can be used for simple programs as well as more complex ones. Its development environment includes a debugger that lets programmers see where their programming mistakes are, which makes editing easier. ROBOTC allows a programmer to specify motors, timers, sensors, and variables.

Similar to ROBOTC, Not eXactly C, or NXC, is also a C-based language. NXC programs are written in the Bricx Command Center development environment, which was initially developed for LEGO’s earlier RCX robotics product and has been enhanced to support the NXT. NXC uses the same firmware as NXT-G, the basic graphical programming environment used with LEGO Mindstorms robot kits. This allows programmers to use a graphical environment and a text environment without having to change the firmware that is loaded on the brick of a robot. A person can store both NXT-G and NXC programs on the same brick at the same time. NXC supports integer variables; floating-point variables, however, can cause problems.

Another programming language that is compatible with the NXT is ROBOLAB, which represents a program graphically rather than as lines of text. Developed at Tufts University, ROBOLAB was originally used for the first-generation LEGO Mindstorms RCX microprocessor “brick.” Like the NXC environment, it was extensively improved and modified to function with both the RCX and the second-generation NXT. ROBOLAB is usually useful only if one is already comfortable with the platform. A programmer who is unfamiliar with how it works could choose from much easier languages.

leJOS is Java-based, so if a person is more familiar with Java than with C, leJOS is a good choice for programming robots. As with the other programming languages, leJOS programs are written and compiled on a PC. The compiled programs are then transferred to the NXT to be executed.

pbLua is also a programming language used for robots. Lua is a relatively new text-based language. pbLua allows a person to do virtually anything that can be done with ROBOTC or NXC; it is simply a different programming language that one can learn to broaden one's knowledge of robot programming.

Obstacle Avoidance

Obstacle avoidance is a family of robotic techniques in which a robot moves based on the information provided by its sensors. These methods are a natural alternative when the environment is unpredictable, with unknown behavior, or when the scenario is dynamic. In these situations, the surroundings of a robot may be constantly shifting, so the information that the sensors receive is used to detect the changes, allowing the robot to continue to adapt and move.

Research on the subject has identified two major problems with these techniques. The first concerns vehicles placed in troublesome scenarios, where the technology being used showed limited applicability. The second is understanding the role that the characteristics of the vehicle, namely its dynamics, shape, and kinematics, play within the obstacle avoidance approach.

Most obstacle avoidance techniques do not take into account the kinematic and shape constraints of the vehicle. Those working on the vehicle may assume that it can move in any direction, and they run into trouble when they discover that it cannot do everything they thought it could. One contribution is a framework that considers shape and kinematics together. This is done by abstracting the constraints from the avoidance method.

Task Planning and Navigation

Task planning, the ability to achieve an ultimate goal by performing a series of smaller tasks, involves learning the spatial requirements for a robot to perform basic operations, such as navigation, efficiently and properly. Because of the demands of real-world working environments, task planning in robotics should be executed efficiently.

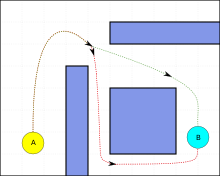

To plan a task appropriately, a mobile robot usually relies exclusively on spatial information, which it learns by combining its sensors to obtain and interpret information from its surroundings, and on trivial domain knowledge, like labels attached to objects and places. Some robots store information about their configuration space and use it to determine an appropriate path around barriers. Some robots depend on sensors or on rules programmed into their code to pick a suitable route. There are also neural network systems that allow a robot to learn by experimentation as it goes. If the robot runs into an object that forces it to turn around, the robot will know from then on not to go that way again, so as to avoid that particular obstacle. There are also algorithms that derive a path for a robot that maximizes its distance from a specific obstacle.
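Planning a path around barriers from stored spatial information can be sketched with breadth-first search over a small grid map. Breadth-first search is only one simple choice, assumed here for illustration; the grid layout, the convention that 1 marks a barrier, and the function name `shortest_path` are likewise assumptions of this Python sketch.

```python
from collections import deque


def shortest_path(grid, start, goal):
    """Return a shortest list of (row, col) cells from start to goal, or None."""
    rows, cols = len(grid), len(grid[0])
    came_from = {start: None}   # remembers how each visited cell was reached
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell is not None:   # walk back from the goal to the start
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] == 0 and (nr, nc) not in came_from:
                came_from[(nr, nc)] = cell
                queue.append((nr, nc))
    return None   # the goal cannot be reached


# 0 is free floor, 1 is a barrier; the robot must go around the wall.
grid = [[0, 0, 0],
        [1, 1, 0],
        [0, 0, 0]]
path = shortest_path(grid, (0, 0), (2, 0))
```

Because the whole route is worked out before the robot moves, this is an example of the deliberative style of control.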

For a robot to successfully perform task planning and navigation, it must have organized communication between all of its parts, such as the sensors. Every component of a robot significantly influences its performance. A robot needs to function without collisions and to know what conditions are safe; this includes making sure the robot does not encounter unsafe temperatures or lights, for example.

For a robot to navigate safely, the robot must become aware of itself in reference to some location. Being able to avoid running into harmful objects or falling down stairs is crucial to the success of a robot in its task planning. A program for a robot should include this in the code, whether it is using sensors to become aware of the world or neural networks. Based on this information, a robot can then plan accordingly in terms of its speed or its turning in different directions. A robot should also be able to create a continuous motion during its task in order to achieve the goal smoothly.

Robot Vision

Robot vision guidance is a field that is developing at a very fast pace. Drawing on high-level technology, it offers savings, reliability, productivity, and safety. Robot vision is used for both identification and navigation. Vision applications are generally designed to find a part and position it for inspection or robotic handling before another operation is performed. In some cases, a single vision-guided robot station can replace multiple mechanical tools.

For a robot to have vision, it takes a combination of calibration, temperature, software, cameras, and algorithms. The calibration of a robot vision system depends on the application. Applications range from simple guidance to more complex tasks that need data from more than one sensor. Algorithms are always improving and allow for higher-level, more complex detection. Some robots now have collision detection, which tells the system when the robot is about to hit something. This ability allows robots to work alongside other robots in areas with obstacles without the fear of a major collision. The robot stops moving for a moment when it detects an object in its way that is restricting its movement.

Robot vision simplifies processes, making them more straightforward, and that in turn cuts costs. Fixturing and hard tooling are both eliminated when robot vision is used. Products can be identified and operations can be performed without the need for securing them. There are savings in both machinery and labor: less labor is needed because the robot now handles its own loading of parts.

There are steps one can take when trying to decide on the right type of robot guidance system. One should work with an integrator and keep some things in mind. One must make sure that the robot vision is able to connect with the robot system and the applications for it. If something were to disconnect, then that could harm the robot or the product and could cause a loss in quality and production. One must also make sure that the environment that the robot is in is a controlled one so that the robot vision remains aware and sharp. Finally, one must consider all of the elements in the environment, like the lighting, the chemicals in the air, and the color changes, as they can affect what a robot sees in its vision.

Knowledge Based Vision Systems

A vision system is used in robotics to organize programs that process images and recognize objects. For this to work properly and allow a robot system to perform at a desired level in its environment, a vision system should adjust to numerous working circumstances by altering and correcting the parameters that govern the system’s several processing phases. A knowledge-based vision system must decide which vision modules to connect together, and it must drive image acquisition. Knowledge-based vision systems that analyze explicitly given knowledge can be used for this task.

For a knowledge-based vision system, one must give the robot the parameters of the object, such as the curves or corners of a specific object. From this information, the robot can produce certain controllers. Based on what the robot was given as well as what it computes on its own, the knowledge-based vision system can create a model showing the objects, which can be revised by the user.

A knowledge-based vision system is responsible for tuning the parameters it comes across, obtaining images (possibly by changing a camera's shutter speed or adjusting the light settings), and observing the system’s configuration to make changes if necessary. It may encounter different types of input data, so it must be able to adjust to changes it comes across while running. Through its design, a knowledge-based vision system has the capability to recognize new information about its surroundings and alter its actions accordingly.

Knowledge-based vision systems, however, can be expensive to use. Collecting knowledge, such as images, can consume both money and time. Knowledge-based vision systems still need further research and refinement to work better in dynamic real-world situations.

Robots and Artificial Intelligence

Artificial Intelligence is one of the fields in robotics that generates the most excitement. When it comes to controversy in the field of robotics, AI is always at the top of the list. While everyone can agree that a robot is capable of working on an assembly line, not everyone agrees that a robot can be intelligent on its own. Artificial Intelligence is as hard a term to define as “robot.” An ultimate AI would be a man-made machine with the intellectual abilities of a human being; it would be a recreation of the human thought process. With these abilities, the robot would be able to learn almost anything; it could create its own ideas, learn to use human language, and reason. Right now, robotics is nowhere near this level of Artificial Intelligence, but it has been making progress in areas of limited AI.

Computers are already able to solve problems to some degree, as the basic idea of Artificial Intelligence problem solving is very simple. It is the execution that is the complicated part. First the computer, or robot, has to gather the facts of a situation through what a human tells it or through its own sensors. The computer then compares the information to stored data and then decides what the information means. The computer then runs through different types of actions that it could take and decides which action is best suited based on the information that it gathered. It is only capable of solving the problem that it is given and does not have any analytical ability.
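The problem-solving steps described above (gather facts, compare them to stored data, weigh the possible actions, and pick the best one) can be sketched in a few lines of Python. Everything in this sketch is hypothetical: the `KNOWLEDGE` table, its scores, the 30 cm threshold, and the function names are invented purely for illustration.

```python
# Hypothetical stored data: for each known situation, a score for every action.
KNOWLEDGE = {
    "obstacle_ahead": {"forward": -10, "turn": 5, "stop": 1},
    "path_clear": {"forward": 5, "turn": 0, "stop": -1},
}


def interpret(distance_cm):
    """Compare the gathered fact (a sensor reading) with stored situations."""
    return "obstacle_ahead" if distance_cm < 30 else "path_clear"


def choose_action(distance_cm):
    """Run through the possible actions and pick the best-scoring one."""
    situation = interpret(distance_cm)
    scores = KNOWLEDGE[situation]
    return max(scores, key=scores.get)


near = choose_action(10)    # something is close in front of the robot
far = choose_action(100)    # nothing nearby
```

The sketch can only handle the situations listed in its table, which mirrors the point that such a system solves the problem it is given without any broader analytical ability.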

The real challenge when it comes to Artificial Intelligence is understanding how natural intelligence works. Since scientists do not have a solid model to work from, developing an AI is not like building an artificial heart. Scientists know that the brain contains billions of neurons and that it is the electrical connections between neurons that allow people to think and learn. What they do not know is how these connections add up to higher reasoning, or even to low-level operations, and it is this complex circuitry that is difficult to understand.