Linguistics/Phonetics


Introduction

If you have ever heard a person learning English as a second language say, "I want to go to the bitch" (meaning "I want to go to the beach"), you might understand the importance of mastering phonetics when learning new languages.

As such an example illustrates, few people in our society give conscious thought to the sounds they produce and the subtle differences they possess. It is unfortunate, but hardly surprising, that few language-learning books use technical terminology to describe foreign sounds. Language learners often hear unhelpful advice such as "It is pronounced more crisply". As scientists, we cannot be satisfied with this state of affairs. If we can classify the sounds of language, we are one step closer to understanding the gestalt of human communication.

The study of the production and perception of speech sounds is a branch of linguistics called phonetics, studied by phoneticians. The study of how languages treat these sounds is called phonology, covered in the next chapter. While these two fields have considerable overlap, it should soon become clear that they differ in important ways.

Phonetics is the systematic study of the human ability to make and hear sounds which use the vocal organs of speech, especially for producing oral language. It is usually divided into the three branches of (1) articulatory, (2) acoustic and (3) auditory phonetics. It is also traditionally differentiated from (though it overlaps with) the field of phonology, which is the formal study of the sound systems (phonologies) of languages, especially the universal properties displayed in all languages, such as the psycholinguistic aspects of phonological processing and acquisition.

One of the most important tools of phonetics and phonology is a special alphabet called the International Phonetic Alphabet or IPA, a standardized representation of the sounds used in human language. In this chapter, you will learn what sounds humans use in their languages, and how linguists represent those sounds in IPA. Reading and writing IPA will help you understand what's really happening when people speak.

Phonetic transcription and the IPA

It is often convenient to split up speech in a language into segments, which are defined as identifiable units in the flow of speech. In many ways this discretization of speech is somewhat fictional, in that both articulation and the acoustic signal of speech are almost entirely continuous. Additionally, attempts to classify segments by nature must ignore some level of detail, as no two segments produced at separate times are ever identical. Even so, segmentation remains a crucial tool in almost all aspects of linguistics.

In phonetics the most basic segments are called phones, which may be defined as units in speech which can be distinguished acoustically or articulatorily. This definition allows for different degrees of wideness.[1] In many contexts phones may be thought of as acoustic or articulatory targets which may or may not be fully reached in actual speech. Another, more commonly used segment is the phoneme, which will be defined more precisely in the next chapter.

It is important to keep in mind that while the segment may (or may not) be a reality of phonology, it is in no way an actual physical part of realized speech in the vocal tract. Realized speech is highly co-articulated, displays movement and spreads aspects of sounds over entire syllables and words. It is convenient to think of speech as a succession of segments (which may or may not coincide closely with ideal segments) in order to capture it for discussion in written discourse, but actual phonetic analysis of speech confounds such a model. It should be pointed out, however, that if we wish to set down a representation of dynamic, complex speech into static writing, segmental constructs are very convenient fictions to indicate what we are trying to set down. Similarly, syllables and words are convenient structures which capture the prosodic structure of a language, and are often notated in written form, but are not physical realities.

The International Phonetic Alphabet (IPA) is a system of phonetic notation which provides a standardized system of transcribing phonetic segments up to a certain degree of detail. It may be represented visually using charts, which may be found in full in Appendix A. We will leave a more detailed description of the IPA to the end of this chapter, but for now just be aware that text in square brackets [] is phonetic transcription in IPA. We will reproduce simplified charts of different subsets of the IPA here as they are explained.

Alternative notations such as the well-established Americanist phonetic notation and a newer, simplified international system called SaypU are available, but the IPA is more comprehensive and so is preferred for educational use, despite its complexity.

To understand the IPA's taxonomy of phones, it is important to consider articulatory, acoustic, and auditory phonetics.

Articulatory phonetics

Articulatory phonetics is concerned with how the sounds of language are physically produced by the vocal apparatus. The units articulatory phonetics deals with are known as gestures, which are abstract characterizations of articulatory events.

Speaking in terms of articulation, the sounds that we utter to make language can be split into two different types: consonants and vowels. For the purposes of articulatory phonetics, consonant sounds are typically characterized as sounds that have constricted or closed configurations of the vocal tract. Vowels, on the other hand, are characterized in articulatory terms as having relatively little constriction; that is, an open configuration of the vocal tract. Vowels carry much of the pitch of speech and can be held for different durations, such as half a beat, one beat, two beats, three beats, etc. of speech rhythm. Consonants, on the other hand, do not carry the prosodic pitch (especially if devoiced and not nasalized) and do not display the potential for the durations that vowels can have. Linguists may also speak of 'semi-vowels' or 'semi-consonants' (often used as synonymous terms). For example, a sound such as [w] phonetically seems more like a vowel (with relative lack of constriction or closure of the vocal tract) but, phonologically speaking, behaves as a consonant in that it always appears before a vowel sound at the beginning (onset) of a syllable.

Consonants

Phoneticians generally characterize consonants as being distinguished by settings of the independent variables place of articulation (POA) and manner of articulation (MOA). In layman's terminology, POA is "where" the consonant is produced, while MOA is "how" the consonant is produced.

The following are descriptions of the different POAs:

- Bilabial segments are produced with the lips held together, for instance the [p] sound of the English pin, or the [b] sound in bin.

- Labiodental segments are produced by holding the upper teeth to the lower lip, like in the [f] sound of English fin.

- Dental consonants have the tongue making contact with the upper teeth (area 3 in the diagram). An example from English is the [θ] sound in the word thin.

- Alveolar consonants have the tongue touching the area of the mouth known as the alveolar ridge (area 4 in the diagram). Examples include the [t] in tin and [s] in sin.

- Postalveolar consonants are similar to alveolars but more retracted (in area 5 in the diagram), like the [ʃ] of shin.

- Palatal consonants are articulated at the hard palate (the middle part of the roof of the mouth, area 7 in the diagram). In English the palatal [j] sound appears in the word young.

- Velar consonants are articulated at the soft palate (the back part of the roof of the mouth, known also as the velum, area 8 in the diagram). English [k] is velar, like in the word kin.

- Glottal consonants are articulated far back in the throat, at the glottis (area 11 in the diagram, effectively the vocal folds). English [h] may be regarded as glottal.[2]

- Doubly articulated consonants have two points of articulation, such as the English labio-velar [w] of wit.

Other POAs are also possible, but will be described in more detail later on.

MOA involves a number of different variables which may vary independently:

- Voicing: Try pronouncing the hissing sound [s] of the English word sip. Elongate the sound until you can produce it continuously for five seconds. Then do the same for the [z] sound in zip. Hold your hand to your throat, observing the difference in tactile sensation between the two. You should notice that [z] creates vibrations, while [s] does not. This rapid vibration is caused by the vocal folds, and it is referred to as voicing. Many different sounds can contrast solely based on a voicing difference: English [b, p] in bin, pin, [d, t] in din, tin, et cetera.

- Nasality: Some sounds are produced with airflow through the nasal cavity. These are known as nasals. Nasal consonants in English include the [n] of not, the [m] of mitt, and the [ŋ] of sing. Nasals may also contrast for voicing in some languages, but this is rare; in most languages, nasals are voiced.

- Obstruency: Consonants involving a total obstruction of airflow are known as stops or plosives. Examples include English [p, b, t, d, k, g]. Fricatives are consonants with a steady stricture causing friction, for example [f, v, s, z, ʃ, ʒ]. Affricates begin with a stop-like closure followed by frication, like the [tʃ, dʒ] of English chip, jeans.

- Sonorancy: Non-obstruents are classed as sonorants. This includes the already-mentioned nasals. Another important type of sonorant found in English is the approximant, in which articulatory organs produce a narrowing of the vocal tract, but leave enough space for air to flow without much audible turbulence. Examples include English [w, j, l, ɹ].

Knowing this information is enough to construct a simplified IPA chart of the consonants of English. As is conventional, MOA is organized in rows and POA in columns. Voicing pairs occur in the same cells; the ones in bold are voiced while the rest are voiceless.

| | Bilabial (or Labio-velar) | Labiodental | Dental | Alveolar | Postalveolar | Palatal | Velar | Glottal |
|---|---|---|---|---|---|---|---|---|
| Plosive | p **b** | | | t **d** | | | k **g** | |
| Fricative | | f **v** | θ[3] **ð**[4] | s **z** | ʃ **ʒ** | | | h |
| Affricate | | | | | tʃ **dʒ** | | | |
| Nasal | **m** | | | **n** | | | **ŋ** | |
| Approximant | **w** | | | **ɹ** **l** | | **j** | | |
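The decomposition of consonants into place, manner and voicing can be sketched in code. The following is a minimal illustration, not a standard tool: the names `Consonant`, `CONSONANTS`, `describe` and `voicing_partner` are hypothetical, and the inventory simply mirrors the simplified English chart in this section.

```python
from typing import NamedTuple, Optional


class Consonant(NamedTuple):
    place: str    # place of articulation (POA)
    manner: str   # manner of articulation (MOA)
    voiced: bool  # True if the vocal folds vibrate


# Simplified English inventory, following the chart in this section.
CONSONANTS = {
    "p": Consonant("bilabial", "plosive", False),
    "b": Consonant("bilabial", "plosive", True),
    "t": Consonant("alveolar", "plosive", False),
    "d": Consonant("alveolar", "plosive", True),
    "k": Consonant("velar", "plosive", False),
    "g": Consonant("velar", "plosive", True),
    "f": Consonant("labiodental", "fricative", False),
    "v": Consonant("labiodental", "fricative", True),
    "θ": Consonant("dental", "fricative", False),
    "ð": Consonant("dental", "fricative", True),
    "s": Consonant("alveolar", "fricative", False),
    "z": Consonant("alveolar", "fricative", True),
    "ʃ": Consonant("postalveolar", "fricative", False),
    "ʒ": Consonant("postalveolar", "fricative", True),
    "h": Consonant("glottal", "fricative", False),
    "tʃ": Consonant("postalveolar", "affricate", False),
    "dʒ": Consonant("postalveolar", "affricate", True),
    "m": Consonant("bilabial", "nasal", True),
    "n": Consonant("alveolar", "nasal", True),
    "ŋ": Consonant("velar", "nasal", True),
    "w": Consonant("labio-velar", "approximant", True),
    "ɹ": Consonant("alveolar", "approximant", True),
    "l": Consonant("alveolar", "approximant", True),
    "j": Consonant("palatal", "approximant", True),
}


def describe(symbol: str) -> str:
    """Build the conventional three-part label: voicing, place, manner."""
    c = CONSONANTS[symbol]
    voicing = "voiced" if c.voiced else "voiceless"
    return f"{voicing} {c.place} {c.manner}"


def voicing_partner(symbol: str) -> Optional[str]:
    """Return the consonant that differs from `symbol` only in voicing, if any."""
    target = CONSONANTS[symbol]
    for other, feats in CONSONANTS.items():
        same_cell = (feats.place, feats.manner) == (target.place, target.manner)
        if same_cell and feats.voiced != target.voiced:
            return other
    return None
```

For instance, `describe("ʃ")` yields the label "voiceless postalveolar fricative", and `voicing_partner("s")` finds [z], the pair contrasted in sip and zip. Nasals and approximants have no voiceless partner in this inventory, so the lookup returns `None` for them.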

Vowels

Vowels are very different from consonants, but our method of decomposing sounds into sets of features works equally well. Vowels can essentially be viewed as being combinations of three variables:

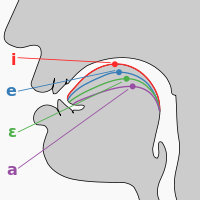

- Height: This measures how close your tongue is to the roof of your mouth. For example, try pronouncing [æ] (as in "cat") and [i] (as in "feet"). Your mouth should be much more open for the former than the latter. Thus [æ] is called either open or low, and [i] either close or high.

- Backness: This is what it sounds like. Try, for example, alternating between pronouncing the vowels [æ] (as in "cat") and [ɑ] (as in "cot"), and get a feel for the position of your tongue in your mouth. It should move forward for [æ] and back for [ɑ], which is why the former is called a front vowel and the latter a back vowel.

- Rounding: Pronounce the vowels [i] and [u], and look at your lips in a mirror. They should look puckered up for [u] and spread out for [i].[5] In general, this "puckering" is referred to in phonetics as rounding.

Back vowels[6] tend to be rounded, and front vowels unrounded, for reasons which will be covered later in this chapter. However, this tendency is not universal. For instance, the vowel in the French word bœuf is what would result from the vowel of the English word bet being pronounced with rounding. Some East and Southeast Asian languages possess unrounded back vowels, which are difficult to describe without a sound sample.[7]

The cardinal vowels are a set of idealized vowels used by phoneticians as a base of reference.

The IPA orders the vowels in a similar way to the consonants, separating the three main distinguishing variables into different dimensions. The vowel trapezoid may be thought of as a rough diagram of the mouth, with the left being the front, the right the back, and the vertical direction representing height in the mouth. Each vowel is thus positioned according to its height and backness. Rounding isn't indicated by location, but when pairs of vowels sharing the same height and backness occur next to each other, the left member is always unrounded, and the right a rounded vowel. Otherwise, just use the general heuristic that rounded vowels are usually back. The following is a simplified version of the IPA vowel chart:

| | Front | Near-front | Central | Near-back | Back |
|---|---|---|---|---|---|
| Close | | | | | |
| Near-close | | | | | |
| Close-mid | | | | | |
| Mid | | | | | |
| Open-mid | | | | | |
| Near-open | | | | | |
| Open | | | | | |

Many of these vowels will be familiar from (General American) English. The following are rough examples:

| [æ] | cat | [ɑ] | cot | [ɐ] | cut |

|---|---|---|---|---|---|

| [ɛ] | kelp | [ə] | carrot | [ɔ] | caught |

| [e] | cake | [o] | coat | [ɪ] | kin |

| [ʊ] | cushion | [i] | keep | [u] | cool |

Note, however, that in phonetics we can describe any segment in arbitrarily fine detail. So when we say that, for instance, the vowel in "cat" is [æ], we are sacrificing some precision.

Some of these vowels have no English equivalent, but may be familiar from foreign languages. [a] represents the sound in Spanish "hablo", the front rounded [œ] vowel is that in French "bœuf", [y] is that of German "hüten", and [ɯ] (perhaps the most exotic to most English speakers) is found as the first vowel of European Portuguese "pegar".
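The three vowel variables described in this section (height, backness and rounding) can be sketched as a small feature table. This is only an illustration: the names `Vowel`, `VOWELS` and `rounding_partner` are hypothetical, and only a handful of the vowels mentioned above are included.

```python
from typing import NamedTuple, Optional


class Vowel(NamedTuple):
    height: str     # close ... open
    backness: str   # front ... back
    rounded: bool   # True if the lips are rounded


# A few of the vowels discussed in this section, with their IPA classifications.
VOWELS = {
    "i": Vowel("close", "front", False),
    "y": Vowel("close", "front", True),
    "ɯ": Vowel("close", "back", False),
    "u": Vowel("close", "back", True),
    "ɛ": Vowel("open-mid", "front", False),
    "œ": Vowel("open-mid", "front", True),
    "æ": Vowel("near-open", "front", False),
    "ɑ": Vowel("open", "back", False),
}


def rounding_partner(symbol: str) -> Optional[str]:
    """Return the vowel with the same height and backness but opposite rounding."""
    target = VOWELS[symbol]
    for other, feats in VOWELS.items():
        same_position = (feats.height, feats.backness) == (target.height, target.backness)
        if same_position and feats.rounded != target.rounded:
            return other
    return None
```

Looking up `rounding_partner("ɛ")` gives [œ], which matches the earlier remark that the vowel of French bœuf is the vowel of English bet pronounced with rounding. Likewise, [u] pairs with the unrounded back vowel [ɯ].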

Other types of phonetics

Acoustic phonetics

Acoustic phonetics deals with the physical medium of speech, that is, how speech manipulates sound waves.

Sound is composed of waves of high- and low-pressure areas which propagate through air. The most basic way to view sound is as a waveform, which plots the pressure measured by the sound-recording device against time and so corresponds closely to the physical nature of sound. Loudness may be estimated from the amplitude of the waveform at a given time.

However, this approach is fairly limited. Humans, in fact, don't process sound using this raw data. The ear analyzes sound by decomposing it into its constituent frequencies, an operation described mathematically by the Fourier transform.
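To make the idea of decomposing a sound into its constituent frequencies concrete, here is a minimal sketch of a naive discrete Fourier transform applied to a synthetic signal. Everything in it is an illustrative assumption: the function name `dft_magnitudes`, the 800 Hz sample rate, and the two test tones at 100 Hz and 250 Hz. Real acoustic analysis software uses much faster algorithms and real recordings.

```python
import cmath
import math


def dft_magnitudes(samples):
    """Return the amplitude found in each frequency bin (naive discrete Fourier transform)."""
    n = len(samples)
    magnitudes = []
    for k in range(n // 2 + 1):  # bins from 0 Hz up to half the sample rate
        total = sum(samples[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        magnitudes.append(abs(total) / n)
    return magnitudes


sample_rate = 800  # samples per second (assumed for this toy example)
n_samples = 80     # 0.1 seconds of signal

# A synthetic "sound": a strong 100 Hz tone plus a weaker 250 Hz tone.
signal = [
    math.sin(2 * math.pi * 100 * t / sample_rate)
    + 0.5 * math.sin(2 * math.pi * 250 * t / sample_rate)
    for t in range(n_samples)
]

mags = dft_magnitudes(signal)
bin_width = sample_rate / n_samples  # each bin spans 10 Hz

# The two strongest bins recover the two tones that were mixed together.
strongest = sorted(range(len(mags)), key=lambda k: mags[k], reverse=True)[:2]
peak_freqs = sorted(k * bin_width for k in strongest)
```

In this example `peak_freqs` comes out as the two tone frequencies, 100 Hz and 250 Hz, even though the waveform itself shows only a single wobbling pressure curve.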

As a sound is produced in the oral tract, the column of air in the tract serves as a harmonic oscillator, oscillating at numerous frequencies simultaneously. Some of the frequencies of oscillation are at higher amplitudes than others, a property called resonance. The resonant frequencies (frequencies with relatively high resonance) of the vocal tract are known in phonetics as formants[8]. The formants in a speech sound are numbered by their frequency: f1 (pronounced eff-one) has the lowest frequency, followed by f2, f3, etc. The analysis of formants turns out to be key to acoustic phonetics, as any change in the shape of the vocal cavity changes which resonances are dominant.
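As a rough illustration of where formant frequencies come from, one common textbook idealization (an assumption added here, not part of the discussion above) treats the vocal tract during a neutral vowel as a uniform tube closed at the glottis and open at the lips. Such a tube resonates at odd multiples of c / 4L, where c is the speed of sound and L the tube length. The function name `tube_resonances`, the 0.175 m tract length and the 343 m/s speed of sound are all assumed values for the sketch.

```python
def tube_resonances(length_m, count=3, speed_of_sound=343.0):
    """Resonant frequencies in Hz of a uniform tube closed at one end and open at the other.

    The n-th resonance is (2n - 1) * c / (4 * L).
    """
    return [(2 * n - 1) * speed_of_sound / (4 * length_m) for n in range(1, count + 1)]


# Assumed adult vocal tract length of 17.5 cm.
formants = tube_resonances(0.175)
```

With these assumed values the first three resonances fall at roughly 490, 1470 and 2450 Hz. Changing the tube length shifts all of them, which gives some intuition for why changing the shape of the vocal cavity changes which resonances are dominant. Real vocal tracts are not uniform tubes, so actual formants vary from vowel to vowel.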

There are two basic ways to analyze the formants of a speech signal. Firstly, at any given time the sound contains a mixture of different frequencies of sound. The relative amplitudes (strengths) of different frequencies at a particular time may be shown as a frequency spectrum. In such a plot, amplitude is plotted against frequency, and formants show up as peaks.

Another way to view formants is by using a spectrogram. This plots time against frequency, with amplitude represented by darkness. Formants show up as dark bands, and their movement may be tracked through time.
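A spectrogram can be thought of as a series of frequency spectra computed over successive short stretches of the signal. The sketch below shows that idea on a synthetic signal whose pitch jumps from 100 Hz to 300 Hz halfway through. It is deliberately simplified: the names `frame_spectrum` and `spectrogram` are hypothetical, the frames do not overlap, and no window function is applied, unlike in real analysis software.

```python
import cmath
import math


def frame_spectrum(frame):
    """Amplitude per frequency bin for one short frame (naive discrete Fourier transform)."""
    n = len(frame)
    return [
        abs(sum(frame[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))) / n
        for k in range(n // 2 + 1)
    ]


def spectrogram(samples, frame_len):
    """Split the signal into consecutive frames and compute a spectrum for each one.

    The result is a list of rows: one row per time frame, one column per frequency bin.
    """
    return [
        frame_spectrum(samples[start:start + frame_len])
        for start in range(0, len(samples) - frame_len + 1, frame_len)
    ]


sample_rate = 800  # samples per second (assumed for this toy example)
frame_len = 40     # 0.05 seconds per frame

# Synthetic signal: 100 Hz for the first 80 samples, then 300 Hz.
signal = [
    math.sin(2 * math.pi * (100 if t < 80 else 300) * t / sample_rate)
    for t in range(160)
]

spec = spectrogram(signal, frame_len)
bin_width = sample_rate / frame_len  # each bin spans 20 Hz

# The strongest frequency in each frame, i.e. the darkest point in each time slice.
dominant = [max(range(len(row)), key=row.__getitem__) * bin_width for row in spec]
```

Here `dominant` traces the change over time: the first two frames peak at 100 Hz and the last two at 300 Hz. Tracking formants on a real spectrogram follows the same principle, with the dark bands marking where the energy is concentrated in each slice.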

Given the development of modern technology, acoustic analysis is now accessible to anyone with a computer and a microphone.[9]

Auditory phonetics

Auditory phonetics is a branch of phonetics concerned with the hearing of speech sounds and with speech perception.

As a learner

Children learn the sounds made in their native language within their first year, and after this it becomes difficult to produce sounds foreign to their native languages. Even familiar segments are produced without reflection on the manner of their production. As such, it is highly recommended that you practice pronouncing isolated segments, and listen repeatedly to examples of those which seem exotic to you. Soon you will notice yourself becoming more observant of the phones you hear in everyday life, and you will be less puzzled by unfamiliar segments in other languages.

Workbook section

Exercise 1: English Places of Articulation

The following English words were tagged with the place of articulation of their first segment, but the tags have been scrambled. Match each word with the correct POA:

| cake | palatal | |

| bleed | alveolar | |

| zip | alveolar | |

| food | dental | |

| shack | bilabial | |

| team | velar | |

| thin | labio-velar | |

| wipe | retroflex | |

| yam | post-alveolar | |

| read | labio-dental |

Exercise 2: Brezhoneg

The following text in the Breton language has been translated into English:

Ar brezhoneg a zo ur yezh predenek eus skourr ar yezhoù keltiek komzet en darn vrasañ eus Enez Vreizh abaoe an Henamzer betek an aloubadegoù Saoz. Tost eo tre d'ar c'herneveureg (Cornwall:Kernow) ha d'ar c'hembraeg (Wales:Cymru) ha nebeutoc'h d'ar yezhoù keltiek all a zo c'hoazh bev : Skoseg, Gouezeleg ha Manaveg (Scotland, Ireland, Isle of Man) (ar skourr gouezelek).

Breton is a Brythonic language belonging to the branch of Celtic spoken over most of Britain from prehistoric times until the Saxon invasions. It is closely related to Cornish (Cornwall:Kernow) and Welsh (Wales:Cymru) and more distantly to the other surviving Celtic languages, Scots, Irish and Manx Gaelic (Scotland, Ireland, Isle of Man) (The Goidelic group).

You have been commissioned to create a phonetic transcription of this text using the following recording of a native speaker:

Write the transcription, being as accurate as you can. (Hint: The Breton rhotic is similar to that of French.)

Notes

- ↑ A narrow transcription of speech is one which records a relatively large amount of phonetic detail, much of it irrelevant to meaning, while a wide (or broad) transcription is closer to a phonemic (see next chapter) transcription.

- ↑ In actuality many argue that [h] has no POA. This will be ignored for our purposes.

- ↑ Say 'theta'.

- ↑ Say 'eth'.

- ↑ [i] and [u] are also distinguished by backness, but in many languages certain vowels may be distinguished only by rounding.

- ↑ [ɑ] is something of an exception.

- ↑ Compare close back rounded [u] and unrounded [ɯ].

- ↑ Resonant frequencies are more commonly known as overtones in general acoustics. Don't confuse these with harmonics, which are a somewhat different concept.

- ↑ A commonly-used acoustic analysis tool ("praat") can be downloaded for free here: http://www.fon.hum.uva.nl/praat/