Circuit Theory/All Chapters


Circuit Theory


Preface

This wikibook is an introductory text about electric circuits. It covers some of the basics of electric circuit theory and circuit analysis, and touches on circuit design. It is meant to serve as a companion reference for the first year of an Electrical Engineering undergraduate curriculum. Topics covered include AC and DC circuits, passive circuit components, phasors, and RLC circuits. The focus is on students of an electrical engineering undergraduate program. Hobbyists would benefit more from reading Electronics instead.

This book is not nearly completed, and could still be improved. People with knowledge of the subject are encouraged to contribute.

The main editable text of this book is located at http://en.wikibooks.org/wiki/Circuit_Theory. The wikibooks version of this text is considered the most up-to-date version, and is the best place to edit this book and contribute to it.

Electric Circuits Introduction

The theory of electrical circuits can be a complex area of study. The chapters in this section will introduce the reader to the world of electric circuits, introduce some of the basic terminology, and provide the first introduction to passive circuit elements.

Introduction

Who is This Book For?

This book is designed for a first course in Circuit Analysis, which is usually accompanied by a set of labs. It is assumed that students are in a Differential Equations class at the same time. Phasors are used to avoid the Laplace transform of driving functions while maintaining a complex impedance transform of the physical circuit that is identical in both. First- and second-order differential equations can be solved using phasors and calculus if the driving functions are sinusoidal. The sinusoid is then replaced by the simpler step function, and the convolution integral is then used to find an analytical solution for any driving function. This leaves time for a more intuitive understanding of poles, zeros, transfer functions, and Bode plot interpretation.

For those who have already had differential equations, the Laplace transform equivalent will be presented as an alternative while focusing on phasors and calculus.

This book will expect the reader to have a firm understanding of Calculus specifically, and will not stop to explain the fundamental topics in Calculus.

For information on Calculus, see the wikibook: Calculus.

What Will This Book Cover?

This book will cover linear circuits, and linear circuit elements.

The goal is to emphasize Kirchhoff's laws and symbolic algebra systems such as MuPAD, Mathematica or Sage, at the expense of analysis methods such as node analysis, mesh analysis, and Norton equivalents. A phasor/calculus based approach starts at the very beginning and ends with the convolution integral to handle all the various types of forcing functions.

The result is a linear analysis experience that is general in nature but skips Laplace and Fourier transforms.

Kirchhoff's laws receive normal focus, but the other circuit analysis/simplification techniques receive less than the usual attention.

The class ends with the application of these concepts in power analysis, filters, and control systems.

The goal is to set the groundwork for a transition to the digital version of these concepts from a firm basis in the physical world. The next course would be one focused on modeling linear systems and analyzing them digitally in preparation for a digital signal processing (DSP) course.

Where to Go From Here

- For a technician version of this course which focuses on the practical rather than the idea, expertise rather than theory, and on algebra rather than calculus, see the Electronics wikibook.

- Take Ordinary Differential Equations now that you have a practical reason to do so. This math class should ideally feel like an "art" class and be enjoyable.

- To begin a course of study in Computer Engineering, see the Digital Circuits wikibook.

- For a traditional "next" course, see the Signals and Systems wikibook. There should be an overlap with this wikibook.

- The intended next course would have a name such as A New Sequence in Signals and Linear Systems or Elements of Discrete Signal Analysis.

Basic Terminology


There are a few key terms that need to be understood at the beginning of this book, before we can continue. This is only a partial list of all terms that will be used throughout this book, but these key words are important to know before we begin the main narrative of this text.

- Time domain

- The time domain is described by graphs of power, voltage and current that depend upon time. This is simply another way of saying that our circuits change with time, and that the major variable used to describe the system is time. Another name is "temporal".

- Frequency domain

- The frequency domain is described by graphs of power, voltage and/or current that depend upon frequency, such as Bode plots. Variable frequencies in wireless communication can represent changing channels or data on a channel. Another name is the "Fourier domain". Other domains that an engineer might encounter are the "Laplace domain" (or the "s domain" or "complex frequency domain"), and the "Z domain". When frequency is combined with time, the resulting graph is called a "spectrogram" or "waterfall" plot.

- Circuit response

- Circuits generally have inputs and outputs. In fact, it is safe to say that a circuit isn't useful if it doesn't have one or the other (usually both). Circuit response is the relationship between the circuit's input and the circuit's output. The circuit response may be a measure of either current or voltage.

- Non-homogeneous

- Circuits are described by equations that capture the component characteristics and how they are wired together. These equations are non-homogeneous in nature. Solving these equations requires splitting the single problem into two problems: a steady-state solution (particular solution) and a transient solution (homogeneous solution).

- Steady-state solution

- The final value, when all circuit elements have a constant or periodic behavior, is also known as the steady-state value of the circuit. The circuit response at steady-state (when voltages and currents have stopped changing due to a disturbance) is also known as the "steady-state response". The steady-state solution to the particular integral is called the particular solution.

- Transient

- A transient event is a short-lived burst of energy in a system caused by a sudden change of state.

- Transient means momentary, or a short period of time. Transient means that the energy in a circuit suddenly changes which causes the energy storage elements to react. The circuit's energy state is forced to change. When a car goes over a bump, it can fly apart, feel like a rock, or cushion the impact in a designed manner. The goal of most circuit design is to plan for transients, whether intended or not.

- Transient solutions are determined by assuming the driving function(s) is zero which creates a homogeneous equation, which has a homogeneous solution.

Summary

When something changes in a circuit, there is a certain transition period before a circuit "settles down", and reaches its final value. The response that a circuit has before settling into its steady-state response is known as the transient response. Using Euler's formula, complex numbers, phasors and the s-plane, a homogeneous solution technique will be developed that captures the transient response by assuming the final state has no energy. In addition, a particular solution technique will be developed that finds the final energy state. Added together, they predict the circuit response.

The related Differential equation development of homogeneous and particular solutions will be avoided.

Variables and Standard Units

Electric Charge (Coulombs)

An electron has a charge of $-1.602 \times 10^{-19}$ C.

Electric charge is a physical property of matter that causes it to experience a force when near other electrically charged matter. Electric Charge (symbol q) is measured in SI units called "Coulombs", which are abbreviated with the letter capital C.

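We know that $q = n e$, where $n$ is the number of electrons and $e = 1.602 \times 10^{-19}$ C is the magnitude of the charge on one electron. Hence one coulomb corresponds to $n = 1/e$ electrons. A Coulomb is the magnitude of the total charge of about $6.2415 \times 10^{18}$ electrons, thus a single electron has a charge of $-1.602 \times 10^{-19}$ C.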
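The arithmetic relating the coulomb to a count of electrons can be checked with a few lines of Python. This is only a sketch: the function names are made up for illustration, and the constant is the elementary charge as fixed by the 2019 SI definition.

```python
# Magnitude of the charge on one electron, in coulombs
# (exact by the 2019 SI definition).
ELEMENTARY_CHARGE = 1.602176634e-19


def electrons_per_coulomb():
    """Number of electrons whose total charge has a magnitude of 1 C."""
    return 1.0 / ELEMENTARY_CHARGE


def charge_of_electrons(n):
    """Total charge in coulombs of n electrons (negative by convention)."""
    return -n * ELEMENTARY_CHARGE


print(f"{electrons_per_coulomb():.4e} electrons per coulomb")
print(f"{charge_of_electrons(1):.4e} C per electron")
```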

It is important to understand that this concept of "charge" is associated with static electricity. Charge, as a concept, has a physical boundary that is related to counting a group of electrons. "Flowing" electricity is an entirely different situation. "Charge" and electrons separate. Charge moves at the speed of light while electrons move at the speed of 1 meter/hour. Thus in most circuit analysis, "charge" is an abstract concept unrelated to energy or an electron and more related to the flow of information.

Electric charge is the subject of many fundamental laws, such as Coulomb's Law and Gauss' Law (static electricity) but is not used much in circuit theory.

Voltage (Volts)

Voltage is a measure of the work required to move a charge from one point to another in an electric field. Thus the unit "volt" is defined as one Joule (J) per Coulomb (C).

$$v = \frac{W}{q}$$

W represents work, q represents an amount of charge. Charge is a static electricity concept. The definition of a volt is shared between static and "flowing" electronics.

Voltage is sometimes called "electric potential", because voltage represents a difference in Electro Motive Force (EMF) that can produce current in a circuit. More voltage means more potential for current. Voltage also can be called "Electric Pressure", although this is far less common.

Voltage is not measured in absolutes but in relative terms. The English language tradition obscures this. For example we say "What is the distance to New York?" Clearly implied is the relative distance from where we are standing to New York. But if we say "What is the voltage at ______?" What is the starting point?

Voltage is defined between two points. Voltage is relative to where 0 is defined. We say "The voltage from point A to B is 5 volts." It is important to understand EMF and voltage are two different things.

When the question is asked "What is the voltage at ______?", look for the ground symbol on a circuit diagram. Measure voltage from ground to _____. If the question is asked "What is the voltage from A to B?" then put the red probe on A and the black probe on B (not ground).

The absolute is referred to as "EMF" or Electro Motive Force. The difference between the two EMF's is a voltage.

Current (Amperes)

Current is a measurement of the flow of electricity. Current is measured in units called Amperes (or "Amps"). An ampere is "charge volume velocity" in the same way water current could be measured in "cubic feet of water per second." But current is a base SI unit, a fundamental dimension of reality like space, time and mass. A coulomb or charge is not. A coulomb is actually defined in terms of the ampere. "Charge or Coulomb" is a derived SI Unit. The coulomb is a fictitious entity left over from the one fluid /two fluid philosophies of the 18th century.

This course is about flowing electrical energy that is found in all modern electronics. Charge volume velocity (defined by current) is a useful concept, but understand it has no practical use in engineering. Do not think of current as a bundle of electrons carrying energy through a wire. Special relativity and quantum mechanics concepts are necessary to understand how electrons move at roughly 1 meter/hour through copper, yet electromagnetic energy moves at near the speed of light.

Amperes are abbreviated with an "A" (upper-case A), and the variable most often associated with current is the letter "i" (lower-case I). In terms of coulombs, an ampere is one coulomb per second:

$$1\,\text{A} = 1\,\frac{\text{C}}{\text{s}}$$

Because of the widespread use of complex numbers in Electrical Engineering, it is common for electrical engineering texts to use the letter "j" (lower-case J) as the imaginary number, instead of the "i" (lower-case I) commonly used in math texts. This wikibook will adopt the "j" as the imaginary number, to avoid confusion.

Energy and Power

Electrical theory is about energy storage and the flow of energy in circuits. Energy is chopped up arbitrarily into something that doesn't exist but can be counted called a coulomb. Energy per coulomb is voltage. The velocity of a coulomb is current. Multiplied together, the units are energy velocity or power ... and the unreal "coulomb" disappears.

Energy

Energy is measured most commonly in Joules, which are abbreviated with a "J" (upper-case J). The variable most commonly used with energy is "w" (lower-case W). The energy symbol is w which stands for work.

From a thermodynamics point of view, all energy consumed by a circuit is work ... all the heat is turned into work. Practically speaking, this can not be true. If it were true, computers would never consume any energy and never heat up.

The reason that all the energy going into a circuit and leaving a circuit is considered "work" is because from a thermodynamic point of view, electrical energy is ideal. All of it can be used. Ideally all of it can be turned into work. Most introduction to thermodynamics courses assume that electrical energy is completely organized (and has entropy of 0).

Power

A corollary to the concept of energy being work, is that all the energy/power of a circuit (ideally) can be accounted for. The sum of all the power entering and leaving a circuit should add up to zero. No energy should be accumulated (theoretically). Of course capacitors will charge up and may hold onto their energy when the circuit is turned off. Inductors will create a magnetic field containing energy that will instantly disappear back into the source through the switch that turns the circuit off.

This course uses what is called the "passive" sign convention for power. Energy put into a circuit by a power supply is negative, energy leaving a circuit is positive.

Power (the flow of energy) computations are an important part of this course. The symbol for power is p, since w is already used for energy (work), and the units are Watts or W.
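The passive sign convention described above can be illustrated with a short Python sketch. The circuit is a hypothetical one chosen for illustration: an ideal 10 V battery wired directly across a 5 Ω resistor. The helper name `element_power` is also an assumption. Power is computed as voltage times the current entering the element's + terminal, so a supply comes out negative and a load comes out positive.

```python
def element_power(voltage, current_into_plus):
    """Power under the passive sign convention: v times the current
    entering the + terminal. Negative means energy is put into the circuit."""
    return voltage * current_into_plus


# Hypothetical circuit: ideal 10 V battery across a 5 ohm resistor.
battery_voltage = 10.0
resistance = 5.0
loop_current = battery_voltage / resistance  # 2 A around the loop

# Current LEAVES the battery's + terminal, so the entering current is negative.
p_battery = element_power(battery_voltage, -loop_current)
# Current ENTERS the resistor's + terminal.
p_resistor = element_power(battery_voltage, loop_current)

print("battery power :", p_battery, "W")
print("resistor power:", p_resistor, "W")
print("sum           :", p_battery + p_resistor, "W")
```

The two powers cancel, which is the accounting check mentioned in the text: all the power entering and leaving the circuit adds up to zero.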

Electric Circuit Basics

Circuits

Circuits (also known as "networks") are collections of circuit elements and wires. Wires are designated on a schematic as being straight lines. Nodes are locations on a schematic where 2 or more wires connect, and are usually marked with a dark black dot. Circuit Elements are "everything else" in a sense. Most basic circuit elements have their own symbols so as to be easily recognizable, although some will be drawn as a simple box image, with the specifications of the box written somewhere that is easy to find. We will discuss several types of basic circuit components in this book.

Ideal Wires

For the purposes of this book, we will assume that an ideal wire has zero total resistance, no capacitance, and no inductance. A consequence of these assumptions is that these ideal wires have infinite bandwidth, are immune to interference, and are — in essence — completely uncomplicated. This is not the case in real wires, because all wires have at least some amount of associated resistance. Also, placing multiple real wires together, or bending real wires in certain patterns will produce small amounts of capacitance and inductance, which can play a role in circuit design and analysis. This book will assume that all wires are ideal.

Ideal Junctions or Nodes

Nodes are also called "junctions" in this book in order to make a distinction between Node analysis, Kirchhoff's current law and discussions about a physical node itself. Here a physical node is discussed.

A junction is a group of wires that share the same electromotive force (not voltage). Wires ideally have no resistance, thus all wires that touch wire to wire somewhere are part of the same node. The diagram on the right shows two big blue nodes, two smaller green nodes and two trivial (one wire touching another) red nodes.

Sometimes a node is described as where two or more wires touch and students circle where wires intersect and call this a node. This only works on simple circuits.

One node has to be labeled ground in any circuit drawn before voltage can be computed or the circuit simulated. Typically this is the node having the most components connected to it. Logically it is normally placed at the bottom of the circuit logic diagram.

Ground is not always needed physically. Some circuits are floated on purpose.

Measuring instruments

Voltmeters and Ammeters are devices that are used to measure the voltage across an element, and the current flowing through a wire, respectively.

Ideal Voltmeters

An ideal voltmeter has an infinite resistance (in reality, several megaohms), and acts like an open circuit. A voltmeter is placed across the terminals of a circuit element, to determine the voltage across that element. In practice the voltmeter siphons enough energy to move a needle, cause thin strips of metal to separate or turn on a transistor so a number is displayed.

Ideal Ammeters

An ideal ammeter has zero resistance and acts like a short circuit. Ammeters require cutting a wire and plugging the two ends into the Ammeter. In practice an ammeter places a tiny resistor in a wire and measures the tiny voltage across it or the ammeter measures the magnetic field strength generated by current flowing through a wire. Ammeters are not used that much because of the wire cutting, or wire disconnecting they require.

Active, Passive & ReActive

The elements which are capable of delivering energy or of amplifying a signal are called "Active elements". All power supplies fit into this category.

The elements which receive energy and dissipate it are called "Passive elements". Resistors model these devices.

Reactive elements store and release energy into a circuit. Ideally they neither consume nor generate energy. Capacitors and inductors fall into this category.

Open and Short Circuits

Open

No current flows through an open. Normally an open is created by a bad connector. Dust, bad solder joints, bad crimping, cracks in circuit board traces, create an open. Capacitors respond to DC by turning into opens after charging up. Uncharged inductors appear as opens immediately after powering up a circuit. The word open can refer to a problem description. The word open can also help develop an intuition about circuits.

Typically the circuit stops working with opens because 99% of all circuits are driven by voltage power sources. Voltage sources respond to an open with no current. Opens are the equivalent of clogs in plumbing, which stop water from flowing.

On one side of the open, EMF will build up, just like water pressure will build up on one side of a clogged pipe. Typically a voltage will appear across the open.

Short

A voltage source responds to a short by delivering as much current as possible. An extreme example of this can be seen in this ball bearing motor video. The motor appears as a short to the battery. Notice he only completes the short for a short time because he is worried about the car battery exploding.

Maximum current flows through a short. Normally a short is created by a wire, a nail, or some loose screw touching parts of the circuit unintentionally. Most component failures start with heat build up. The heat destroys varnish, paint, or thin insulation creating a short. The short causes more current to flow which causes more heat. This cycle repeats faster and faster until there is a puff of smoke and everything breaks creating an open. Most component failures start with a short and end in an open as they burn up. Feel the air temperature above each circuit component after power on. Build a memory of what normal operating temperatures are. Cold can indicate a short that has already turned into an open.

An uncharged capacitor initially appears as a short immediately after powering on a circuit. An inductor appears as a short to DC after charging up. The short concept also helps build our intuition, provides an opportunity to talk about electrical safety and helps describe component failure modes.

A closed switch can be thought of as a short. Switches are surprisingly complicated. It is in a study of switches that the term closed begins to dominate that of short.

Resistors and Resistance

Resistors

Mechanical engineers seem to model everything with a spring. Electrical engineers compare everything to a Resistor. Resistors are circuit elements that resist the flow of current. When this is done a voltage appears across the resistor's two wires.

A pure resistor turns electrical energy into heat. Devices similar to resistors turn this energy into light, motion, heat, and other forms of energy.

Current in the drawing above is shown entering the + side of the resistor. Resistors don't care which leg is connected to positive or negative. The + means where the positive or red probe of the volt meter is to be placed in order to get a positive reading. This is called the "positive charge" flow sign convention. Some circuit theory classes (often within a physics oriented curriculum) are taught with an "electron flow" sign convention.

In this case, current entering the + side of the resistor means that the resistor is removing energy from the circuit. This is good. The goal of most circuits is to send energy out into the world in the form of motion, light, sound, etc.

Resistance

Resistance is measured in terms of units called "Ohms" (volts per ampere), which is commonly abbreviated with the Greek letter Ω ("Omega"). Ohms are also used to measure the quantities of impedance and reactance, as described in a later chapter. The variable most commonly used to represent resistance is "r" or "R".

Resistance is defined as:

$$R = \frac{\rho L}{A}$$

where ρ is the resistivity of the material, L is the length of the resistor, and A is the cross-sectional area of the resistor.
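As a quick numerical illustration of the relation R = ρL/A, the sketch below estimates the resistance of a length of copper wire. The resistivity value is the commonly quoted room-temperature figure for copper, and the wire dimensions and function name are made up for the example.

```python
# Approximate resistivity of copper at room temperature, in ohm-metres
# (assumed textbook value, not taken from this book).
COPPER_RESISTIVITY = 1.68e-8


def wire_resistance(resistivity, length_m, area_m2):
    """R = rho * L / A for a uniform conductor."""
    if area_m2 <= 0:
        raise ValueError("cross-sectional area must be positive")
    return resistivity * length_m / area_m2


# Hypothetical wire: 10 m long with a 1 mm^2 cross section (1e-6 m^2).
r = wire_resistance(COPPER_RESISTIVITY, 10.0, 1e-6)
print(f"resistance: {r:.3f} ohms")
```

Doubling the length doubles the resistance, while doubling the cross-sectional area halves it.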

Conductance

Conductance is the inverse of resistance. Conductance has units of "Siemens" (S), sometimes referred to as mhos (ohms backwards, abbreviated as an upside-down Ω). The associated variable is "G":

$$G = \frac{1}{R}$$

Before calculators and computers, conductance helped reduce the number of hand calculations that had to be done. Now conductance and its related concepts of admittance and susceptance can be skipped with MATLAB, Octave, Wolfram Alpha and other computing tools. Learning one or more of these computing tools is now absolutely necessary in order to get through this text.

Resistor terminal relation

The drawing on the right is of a battery and a resistor. Current is leaving the + terminal of the battery. This means this battery is turning chemical potential energy into electromagnetic potential energy and dumping this energy into the circuit. The flow of this energy or power is negative.

Current is entering the positive side of the resistor even though a + has not been put on the resistor. This means electromagnetic potential energy is being converted into heat, motion, light, or sound depending upon the nature of the resistor. Power flowing out of the circuit is given a positive sign.

The voltage across the resistor V, the current through the resistor I and the value of the resistor R are related by Ohm's law:

$$V = I R$$ [Resistor Terminal Relation]

A resistor, capacitor and inductor all have only two wires attached to them. Sometimes it is hard to tell them apart. In the real world, all three have a bit of resistance, capacitance and inductance in them. In this unknown context, they are called two terminal devices. In more complicated devices, the wires are grouped into ports. A two terminal device that expresses Ohm's law when current and voltage are applied to it, is called a resistor.

Resistor Safety

Resistors come in all forms. Most have a maximum power rating in watts. If you put too much power through them, they can melt, catch fire, etc. A resistance is a passive electrical element that opposes the flow of electricity. Air can be considered a resistance; it is used as one in lightning arrestors. Vacuum is also used as a resistor.

Example

Suppose the voltage across a resistor's two terminals is 10 volts and the measured current through it is 2 amps. What is the resistance?

If $V = I R$ then $R = \frac{V}{I} = \frac{10\,\text{V}}{2\,\text{A}} = 5\,\Omega$
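The same example can be worked in Python. This is a minimal sketch with illustrative function names. It also computes the power the resistor dissipates, which is the number to compare against the power rating mentioned under Resistor Safety.

```python
def resistance(voltage, current):
    """Ohm's law solved for R: R = V / I."""
    if current == 0:
        raise ValueError("current must be non-zero to compute a resistance")
    return voltage / current


def power_dissipated(voltage, current):
    """Power turned into heat by the resistor: P = V * I."""
    return voltage * current


r = resistance(10.0, 2.0)        # 10 V across, 2 A through
p = power_dissipated(10.0, 2.0)
print("R =", r, "ohms")
print("P =", p, "watts")
```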

Resistive Circuits

We've been introduced to basic circuit elements such as resistors, sources, and wires. Now, we are going to explore how complicated circuits using these components can be analyzed.

Resistive Circuit Analysis Techniques


Source Transformations


Independent current sources can be turned into independent voltage sources, and vice-versa, by methods called "Source Transformations." These transformations are useful for solving circuits. We will explain the two most important source transformations, Thevenin's Source, and Norton's Source, and we will explain how to use these conceptual tools for solving circuits.

Black Boxes

A circuit (or any system, for that matter) may be considered a black box if we don't know what is inside the system. For instance, most people treat their computers like a black box because they don't know what is inside the computer (most don't even care), all they know is what goes in to the system (keyboard and mouse input), and what comes out of the system (monitor and printer output).

Black boxes, by definition, are systems whose internals aren't known to an outside observer. The only method that an outside observer has to examine a black box is to send input into the system and gauge the output.

Thevenin's Theorem

Let's start by drawing a general circuit consisting of a source and a load, as a block diagram:

Let's say that the source is a collection of voltage sources, current sources and resistances, while the load is a collection of resistances only. Both the source and the load can be arbitrarily complex, but we can conceptually say that the source is directly equivalent to a single voltage source and resistance (figure (a) below).

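Figure: (a) the source modelled as a single voltage source and resistance; (b) an independent current source attached to the output of the circuit.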

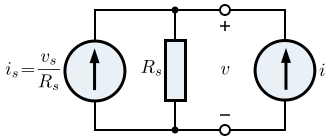

We can determine the value of the resistance Rs and the voltage source, vs by attaching an independent source to the output of the circuit, as in figure (b) above. In this case we are using a current source, but a voltage source could also be used. By varying i and measuring v, both vs and Rs can be found using the following equation, where i is the current the test source drives into the output terminal:

$$v = v_s + i R_s$$

There are two variables, so two values of i will be needed. See Example 1 for more details. We can easily see from this that if the current source is set to zero (equivalent to an open circuit), then v is equal to the voltage source, vs. This is also called the open-circuit voltage, voc.
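The two-measurement procedure can be sketched in Python. The sketch assumes the linear terminal relation v = vs + i·Rs, with i taken as the current the test source drives into the output terminal. The function name and the measurement values are hypothetical.

```python
def thevenin_from_two_points(i1, v1, i2, v2):
    """Recover (v_s, R_s) from two (test current, terminal voltage) pairs,
    assuming the black box obeys v = v_s + i * R_s."""
    if i1 == i2:
        raise ValueError("the two test currents must differ")
    r_s = (v2 - v1) / (i2 - i1)   # slope of the v-i line
    v_s = v1 - i1 * r_s           # intercept at i = 0 (open-circuit voltage)
    return v_s, r_s


# Hypothetical measurements on a black box:
#   test current 0 A   -> 12 V at the terminals (open circuit)
#   test current 1.5 A -> 18 V at the terminals
v_s, r_s = thevenin_from_two_points(0.0, 12.0, 1.5, 18.0)
print("v_s =", v_s, "V")
print("R_s =", r_s, "ohms")
```

Choosing one of the test currents to be zero makes the first measurement the open-circuit voltage directly, so the second measurement only has to pin down the resistance.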

This is an important concept, because it allows us to model what is inside an unknown (linear) circuit, just by knowing what is coming out of the circuit. This concept is known as Thévenin's Theorem after French telegraph engineer Léon Charles Thévenin, and the circuit consisting of the voltage source and resistance is called the Thévenin Equivalent Circuit.

Norton's Theorem

Recall from above that the output voltage, v, of a Thévenin equivalent circuit can be expressed as

$$v = v_s + i R_s$$

Now, let's rearrange it for the output current, i:

$$i = \frac{v}{R_s} - \frac{v_s}{R_s}$$

This is equivalent to a KCL description of the following circuit. We can call the constant term vs/Rs the source current, is, so that $i = \frac{v}{R_s} - i_s$.

The equivalent current source and the equivalent resistance can be found with an independent source as before (see Example 2).

When the above circuit (the Norton Equivalent Circuit, after Bell Labs engineer E.L. Norton) is disconnected from the external load, the current from the source all flows through the resistor, producing the requisite voltage across the terminals, voc. Also, if we were to short the two terminals of our circuit, the current would all flow through the wire, and none of it would flow through the resistor (current divider rule). In this way, the circuit would produce the short-circuit current isc (which is exactly the same as the source current is).

Circuit Transforms

We have just shown that the Thévenin and Norton circuits are just different representations of the same black box circuit, with the same Ohm's Law/KCL equations. This means that we cannot distinguish between a Thévenin source and a Norton source from outside the black box, and that we can directly equate the two as below:

Figure: a Thévenin source (left) and its equivalent Norton source (right).

We can draw up some rules to convert between the two:

- The values of the resistors in each circuit are conceptually identical, and can be called the equivalent resistance, Req: $R_{eq} = R_s$

- The value of a Thévenin voltage source is the value of the Norton current source times the equivalent resistance (Ohm's law): $v_s = i_s R_{eq}$

If these rules are followed, the circuits will behave identically. Using these few rules, we can transform a Norton circuit into a Thévenin circuit, and vice versa. This method is called source transformation. See Example 3.
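The conversion rules are small enough to write as a pair of Python functions. This is only an illustrative sketch. The function names are assumptions, and each source is represented as a plain tuple of (source value, equivalent resistance).

```python
def thevenin_to_norton(v_s, r_eq):
    """Thevenin (v_s in series with r_eq) -> Norton (i_s in parallel with r_eq)."""
    if r_eq == 0:
        raise ValueError("an ideal voltage source (r_eq = 0) has no Norton form")
    return v_s / r_eq, r_eq


def norton_to_thevenin(i_s, r_eq):
    """Norton (i_s in parallel with r_eq) -> Thevenin (v_s in series with r_eq)."""
    return i_s * r_eq, r_eq


# Hypothetical source: 12 V in series with 4 ohms.
i_s, r_eq = thevenin_to_norton(12.0, 4.0)
print("Norton:  ", i_s, "A in parallel with", r_eq, "ohms")

v_s, r_back = norton_to_thevenin(i_s, r_eq)
print("Thevenin:", v_s, "V in series with", r_back, "ohms")
```

Converting there and back returns the original source, which is a useful sanity check when simplifying a circuit step by step.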

Open Circuit Voltage and Short Circuit Current

The open-circuit voltage, voc of a circuit is the voltage across the terminals when the current is zero, and the short-circuit current isc is the current when the voltage across the terminals is zero:

Figure: the open circuit voltage (left) and the short circuit current (right).

We can also observe the following:

- The value of the Thévenin voltage source is the open-circuit voltage: $v_s = v_{oc}$

- The value of the Norton current source is the short-circuit current: $i_s = i_{sc}$

We can say that, generally,

$$R_{eq} = \frac{v_{oc}}{i_{sc}}$$

Why Transform Circuits?

How are Thévenin and Norton transforms useful?

- Describe a black box's characteristics in a way that can predict its reaction to any load.

- Find the current through and voltage across any device by removing the device from the circuit! This can instantly make a complex circuit much simpler to analyze.

- Stepwise simplification of a circuit is possible if voltage sources have a series impedance and current sources have a parallel impedance.

Maximum Power Transfer


Often we would like to transfer as much power as possible from a source to a load placed across its terminals. How can we determine the optimum resistance of the load for this to occur?

Let us consider a source modelled by a Thévenin equivalent (a Norton equivalent will lead to the same result, as the two are directly equivalent), with a load resistance, RL. The source resistance is Rs and the open circuit voltage of the source is vs:

The current in this circuit is found using Ohm's Law:

$$i = \frac{v_s}{R_s + R_L}$$

The voltage across the load resistor, vL, is found using the voltage divider rule:

$$v_L = v_s \frac{R_L}{R_s + R_L}$$

We can now find the power dissipated in the load, PL as follows:

$$P_L = v_L i = \frac{v_s^2 R_L}{(R_s + R_L)^2}$$

We can now rewrite this to get rid of the RL on the top:

$$P_L = \frac{v_s^2}{R_s \left( \frac{R_s}{R_L} + 2 + \frac{R_L}{R_s} \right)}$$

Assuming the source resistance is not changeable, then we obtain maximum power by minimising the bracketed part of the denominator in the above equation. It is an elementary mathematical result that $x + \frac{1}{x}$ (for positive $x$) is at a minimum when x=1. In this case, it is equal to 2. Therefore, with $x = R_L / R_s$, the above expression is minimum under the following condition:

$$\frac{R_L}{R_s} = 1$$

This leads to the condition that:

$$R_L = R_s$$

We will get maximum power out of the source if the load resistance is identical to the internal source resistance. This is the Maximum Power Transfer Theorem.
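The theorem can be checked numerically with a brute-force sweep. The sketch below assumes the load power expression PL = vs²·RL/(Rs + RL)², and uses a hypothetical 10 V source with a 50 Ω source resistance. It tries load values from 0.01·Rs to 5·Rs and keeps the one that dissipates the most power.

```python
def load_power(v_s, r_s, r_l):
    """Power dissipated in the load of a Thevenin source: v_s^2 R_L / (R_s + R_L)^2."""
    return v_s ** 2 * r_l / (r_s + r_l) ** 2


# Hypothetical source values, chosen only for illustration.
v_s, r_s = 10.0, 50.0

# Candidate loads from 0.01*R_s up to 5*R_s in steps of 0.01*R_s.
candidates = [r_s * k / 100 for k in range(1, 501)]
best_r_l = max(candidates, key=lambda r_l: load_power(v_s, r_s, r_l))

print("best load resistance:", best_r_l, "ohms")
print("power at that load  :", load_power(v_s, r_s, best_r_l), "W")
print("v_s^2 / (4 R_s)     :", v_s ** 2 / (4 * r_s), "W")
```

The sweep lands on the load equal to the source resistance, and the power there matches vs²/(4Rs), which is what the load power expression reduces to when RL = Rs.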

Efficiency

The efficiency, η of the circuit is the proportion of all the energy dissipated in the circuit that is dissipated in the load. We can immediately see that at maximum power transfer to the load, the efficiency is 0.5, as the source resistor has half the voltage across it. We can also see that efficiency will increase as the load resistance increases, even though the power transferred will fall.

The efficiency can be calculated using the following equation:

$$\eta = \frac{P_L}{P_L + P_s}$$

where Ps is the power in the source resistor. This can be found using a simple modification to the equation for PL:

$$P_s = \frac{v_s^2 R_s}{(R_s + R_L)^2}$$

The graph below shows the power in the load (as a proportion of the maximum power, Pmax) and the efficiency for values of RL between 0 and 5 times Rs.
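As a numerical stand-in for the two curves, here is a minimal Python sketch. It assumes the standard results for a Thévenin source, $P_L = v_s^2 R_L / (R_s + R_L)^2$ and $P_{max} = v_s^2 / (4 R_s)$, and works with the ratio $x = R_L / R_s$. The function names are illustrative only.

```python
def load_power_ratio(rl_over_rs):
    """P_L / P_max for a Thevenin source, with x = R_L / R_s.

    P_L = v_s^2 R_L / (R_s + R_L)^2 and P_max = v_s^2 / (4 R_s),
    so the ratio simplifies to 4x / (1 + x)^2.
    """
    x = rl_over_rs
    return 4.0 * x / (1.0 + x) ** 2


def efficiency(rl_over_rs):
    """eta = P_L / (P_L + P_s) = R_L / (R_L + R_s) = x / (1 + x)."""
    x = rl_over_rs
    return x / (1.0 + x)


if __name__ == "__main__":
    # Sweep R_L from 0 to 5 times R_s, as in the plot described in the text.
    for x in [0.0, 0.5, 1.0, 2.0, 5.0]:
        print(f"R_L/R_s = {x:3.1f}  "
              f"P_L/P_max = {load_power_ratio(x):.3f}  "
              f"efficiency = {efficiency(x):.3f}")
```

The power ratio peaks at $R_L = R_s$, where the efficiency is exactly 0.5, while the efficiency keeps rising as $R_L$ grows.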

It is important to note that under conditions of maximum power transfer as much power is dissipated in the source as in the load. This is not a desirable condition if, for example, the source is the electricity supply system and the load is your electric heater. This would mean that the electricity supply company would be wasting half the power it generates. In this case, the generators, power lines, etc. are designed to give the lowest source resistance possible, giving high efficiency. The maximum power transfer condition is used in (usually high-frequency) communications systems where the source resistance cannot be made low, the power levels are relatively low, and it is paramount to get as much signal power as possible to the receiving end of the system (the load).

Resistive Circuit Analysis Methods

Analysis Methods

When circuits get large and complicated, it is useful to have various methods for simplifying and analyzing the circuit. There is no single formula that solves every circuit; different methods suit different types of circuit. Some methods may not apply to a given circuit, and others may apply but lead to long calculations. Two of the most important methods for solving circuits are Nodal Analysis and Mesh Current Analysis. These will be explained below.

Superposition

One of the most important principles in the field of circuit analysis is the principle of superposition. It is valid only in linear circuits.

The superposition principle states that the total effect of multiple contributing sources on a linear circuit is equal to the sum of the individual effects of the sources, taken one at a time.

What does this mean? In plain English, it means that if we have a circuit with multiple sources, we can "turn off" all but one source at a time, and then investigate the circuit with only one source active at a time. We do this with every source, in turn, and then add together the effects of each source to get the total effect. Before we put this principle to use, we must be aware of the underlying mathematics.

Necessary Conditions

Superposition can only be applied to linear circuits; that is, all of a circuit's sources hold a linear relationship with the circuit's responses. Using only a few algebraic rules, we can build a mathematical understanding of superposition. If f is taken to be the response to inputs $x_1$ and $x_2$, and a and b are constant, then:

$$f(a x_1 + b x_2) = a f(x_1) + b f(x_2)$$

In terms of a circuit, this explains the concept of superposition: each input can be considered individually, and the individual responses summed to obtain the output. With just a few more algebraic properties, we can see that superposition cannot be applied to non-linear circuits. In this example, the response y is equal to the square of the input x, i.e. $y = x^2$. If a and b are constant, then:

$$(a x_1 + b x_2)^2 = a^2 x_1^2 + 2ab\,x_1 x_2 + b^2 x_2^2 \neq (a x_1)^2 + (b x_2)^2$$

Note that this is only one of an infinite number of counter-examples...

Step by Step

Using superposition to find a given output can be broken down into four steps:

- Isolate a source - Select a source, and set all of the remaining sources to zero. The consequences of "turning off" these sources are explained in Open and Closed Circuits. In summary, turning off a voltage source results in a short circuit, and turning off a current source results in an open circuit. (Reasoning - no current can flow through an open circuit and there can be no voltage drop across a short circuit.)

- Find the output from the isolated source - Once a source has been isolated, the response from the source in question can be found using any of the techniques we've learned thus far.

- Repeat steps 1 and 2 for each source - Continue to choose a source, set the remaining sources to zero, and find the response. Repeat this procedure until every source has been accounted for.

- Sum the Outputs - Once the output due to each source has been found, add them together to find the total response.
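The four steps above can be sketched in Python for a small circuit. The circuit is an assumption made for illustration and does not come from the text: a voltage source `v_src` in series with `r1` feeds a node, a current source `i_src` injects current into the same node, and `r2` connects the node to ground. The function names are illustrative.

```python
def node_voltage_direct(v_src, i_src, r1, r2):
    """Node voltage with both sources active, from one KCL equation.

    KCL at the node: (v - v_src)/r1 + v/r2 = i_src.
    """
    return (v_src / r1 + i_src) / (1.0 / r1 + 1.0 / r2)


def node_voltage_superposition(v_src, i_src, r1, r2):
    """Same node voltage, found one source at a time."""
    # Steps 1-2: keep the voltage source, open-circuit the current source.
    # What remains is a plain voltage divider.
    v_from_voltage_source = v_src * r2 / (r1 + r2)
    # Step 3: keep the current source, short-circuit the voltage source.
    # The current now flows through r1 in parallel with r2.
    v_from_current_source = i_src * (r1 * r2) / (r1 + r2)
    # Step 4: sum the individual outputs.
    return v_from_voltage_source + v_from_current_source


if __name__ == "__main__":
    args = (10.0, 2.0, 2.0, 3.0)  # example values: 10 V, 2 A, 2 ohm, 3 ohm
    print(node_voltage_direct(*args), node_voltage_superposition(*args))
```

Both functions give the same node voltage, as they should for any linear circuit.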

Impulse Response

An impulse response of a circuit can be used to determine the output of the circuit:

The output y is the convolution h * x of the input x and the impulse response h:

$$y(t) = (h * x)(t) = \int_{-\infty}^{\infty} h(t - \tau)\, x(\tau)\, d\tau$$

If the input, x(t), was an impulse ($\delta(t)$), the output y(t) would be equal to h(t).

By knowing the impulse response of a circuit, any source can be plugged-in to the circuit, and the output can be calculated by convolution.
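As a rough numerical illustration, the sketch below approximates the convolution integral with a Riemann sum. The example circuit is an assumption: an RC low-pass with the output taken across the capacitor, whose impulse response is $h(t) = \frac{1}{RC} e^{-t/RC}$ for $t \geq 0$, driven by a unit step. The helper name `convolve` and all numeric values are illustrative.

```python
import math


def convolve(h, x, dt):
    """Riemann-sum approximation of y(t) = integral of h(t - tau) x(tau) dtau.

    h and x are lists of samples taken every dt seconds, both starting at
    t = 0 and assumed to be zero for t < 0.
    """
    n = min(len(h), len(x))
    y = []
    for k in range(n):
        acc = 0.0
        for j in range(k + 1):
            acc += h[k - j] * x[j]
        y.append(acc * dt)
    return y


tau = 1.0   # assumed RC time constant, in seconds
dt = 0.01   # sample spacing, in seconds
n = 500     # number of samples (5 seconds in total)
t = [k * dt for k in range(n)]
h = [math.exp(-tk / tau) / tau for tk in t]  # impulse response of the RC low-pass
x = [1.0] * n                                # unit step input
y = convolve(h, x, dt)

if __name__ == "__main__":
    # Compare with the known step response 1 - exp(-t / tau) at t = 3 s.
    print(y[300], 1.0 - math.exp(-3.0 / tau))
```

The numerical result follows the familiar step response $1 - e^{-t/RC}$ to within the discretisation error, which shrinks as `dt` is reduced.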

Convolution

The convolution operation is an involved operation that combines two functions into a single resulting function. Convolution is defined in terms of a definite integral, and as such, evaluating convolutions requires knowledge of integral calculus. This wikibook will not require prior knowledge of integral calculus, and therefore will not go into more depth on this subject than a simple definition and some light explanation.

Definition

The convolution a * b of two functions a and b is defined as:

$$(a * b)(t) = \int_{-\infty}^{\infty} a(\tau)\, b(t - \tau)\, d\tau$$

Asterisks mean convolution, not multiplication

The asterisk operator is used to denote convolution. Many computer systems, and people who frequently write mathematics on a computer will often use an asterisk to denote simple multiplication (the asterisk is the multiplication operator in many programming languages), however an important distinction must be made here: The asterisk operator means convolution.

Properties

Convolution is commutative, in the sense that $a * b = b * a$. Convolution is also distributive over addition, i.e. $a * (b + c) = a * b + a * c$, and associative, i.e. $(a * b) * c = a * (b * c)$.

Systems, and convolution

Let us say that we have the following block-diagram system:

x(t) → [ h(t) ] → y(t)

Where x(t) is the input to the circuit, h(t) is the circuit's impulse response, and y(t) is the output. Here, we can find the output by convolving the impulse response with the input to the circuit. Hence we see that the impulse response of a circuit is not just the ratio of the output over the input. In the frequency domain, however, the component of the output with frequency ω is the product of the input component with the same frequency and the transfer function at that frequency. The moral of the story is this: the output of a circuit is the input convolved with the impulse response.

Capacitors and Inductors

Resistors and wires are not the only passive circuit elements. Capacitors and inductors are also common passive elements, and they can store and release electrical energy in a circuit. We will use the analysis methods that we learned previously to make sense of these more complicated circuit elements.

Energy Storage Elements

Circuit Theory/Energy Storage Elements

First-Order Circuits

First Order Circuits

First order circuits are circuits that contain only one energy storage element (capacitor or inductor), and that can, therefore, be described using only a first order differential equation. The two possible types of first-order circuits are:

- RC (resistor and capacitor)

- RL (resistor and inductor)

Throughout this chapter, the term RL circuit refers to a circuit containing resistors and inductors, and the term RC circuit to one containing resistors and capacitors.

RL Circuits

An RL circuit has at least one resistor (R) and one inductor (L). These can be arranged in parallel, or in series. Inductors are best analyzed by considering the current flowing through the inductor. Therefore, we will combine the resistive element and the source into a Norton equivalent source, with the inductor as the external load to the circuit. We remember the equation for the inductor:

$$v(t) = L\frac{di}{dt}$$

If we apply KCL at the node that forms the positive terminal of the Norton source, we get the following differential equation, where $R_n$ is the Norton resistance:

$$i_{source} = \frac{L}{R_n}\frac{di_{inductor}}{dt} + i_{inductor}$$

We will show how to solve differential equations in a later chapter.

RC Circuits

An RC circuit is a circuit that has both a resistor (R) and a capacitor (C). As with the RL circuit, we will combine the resistor and the source on one side of the circuit into a Thévenin source. Then if we apply KVL around the resulting loop, we get the following equation:

$$v_{source} = RC\frac{dv_{capacitor}}{dt} + v_{capacitor}$$

First Order Solution

Series RL

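The differential equation of the series RL circuit with no source is

$$L\frac{dI}{dt} + RI = 0$$

whose solution is $I(t) = A e^{-t/\tau}$, where A is the initial current and $\tau = L/R$. The table gives I(t) at whole multiples of $\tau$:

| t (multiples of τ) | I(t) |
|---|---|
| 1 | 37% of A |
| 2 | 14% of A |
| 3 | 5% of A |
| 4 | 2% of A |
| 5 | 0.7% of A |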

Series RC

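The differential equation of the series RC circuit with no source is

$$C\frac{dV}{dt} + \frac{V}{R} = 0$$

whose solution is $V(t) = A e^{-t/\tau}$, where A is the initial voltage and $\tau = RC$. The table gives V(t) at whole multiples of $\tau$:

| t (multiples of τ) | V(t) |
|---|---|
| 1 | 37% of A |
| 2 | 14% of A |
| 3 | 5% of A |
| 4 | 2% of A |
| 5 | 0.7% of A |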

Time Constant

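The series RL and RC circuits each have a time constant $\tau$:

$$\tau = \frac{L}{R} \quad \text{(series RL)}, \qquad \tau = RC \quad \text{(series RC)}$$

In general, from an engineering standpoint, we say that the system is at steady state (the voltage or current has decayed almost to zero) after a time period of five time constants.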
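The five-time-constant rule of thumb can be checked with a few lines of Python. This is a minimal sketch assuming the source-free decay $x(t) = A e^{-t/\tau}$. The component values are arbitrary examples and the function names are illustrative.

```python
import math


def time_constant_rl(inductance, resistance):
    """tau = L / R for a series RL circuit (henries, ohms -> seconds)."""
    return inductance / resistance


def time_constant_rc(resistance, capacitance):
    """tau = R * C for a series RC circuit (ohms, farads -> seconds)."""
    return resistance * capacitance


def remaining_fraction(t, tau):
    """Fraction of the initial value left after time t: exp(-t / tau)."""
    return math.exp(-t / tau)


if __name__ == "__main__":
    tau = time_constant_rc(1e3, 1e-6)  # example: 1 kOhm and 1 uF
    for n in range(1, 6):
        pct = 100.0 * remaining_fraction(n * tau, tau)
        print(f"t = {n} tau: {pct:.1f}% of the initial value remains")
```

After five time constants less than 1% of the initial value remains, which is why the circuit is treated as settled at that point.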

RLC Circuits

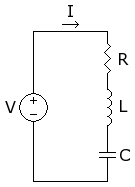

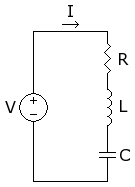

Series RLC Circuit

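Second Order Differential Equation

$$L\frac{d^2 i}{dt^2} + R\frac{di}{dt} + \frac{1}{C}i = 0$$

The characteristic equation is

$$s^2 + \frac{R}{L}s + \frac{1}{LC} = 0, \qquad s = -\alpha \pm \sqrt{\alpha^2 - \omega_0^2}$$

Where

$$\alpha = \frac{R}{2L}, \qquad \omega_0 = \frac{1}{\sqrt{LC}}$$

When $\alpha^2 = \omega_0^2$

- The equation has only one real root, $s = -\alpha$.

- The solution is $i(t) = (A + Bt)\,e^{-\alpha t}$.

- The I - t curve decays to zero without oscillating.

When $\alpha^2 > \omega_0^2$

- The equation has two real roots, $s_{1,2} = -\alpha \pm \sqrt{\alpha^2 - \omega_0^2}$.

- The solution is $i(t) = A e^{s_1 t} + B e^{s_2 t}$.

- The I - t curve decays to zero without oscillating, more slowly than in the single-root case.

When $\alpha^2 < \omega_0^2$

- The equation has two complex roots, $s_{1,2} = -\alpha \pm j\sqrt{\omega_0^2 - \alpha^2}$.

- The solution is $i(t) = e^{-\alpha t}\left(A\cos\omega_d t + B\sin\omega_d t\right)$, with $\omega_d = \sqrt{\omega_0^2 - \alpha^2}$.

- The I - t curve is a sinusoid whose amplitude decays exponentially.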

Damping Factor

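The damping factor is a measure of how quickly the oscillations of a circuit decrease over time. We define the damping factor $\alpha$ and the resonance frequency $\omega_0$ to be:

| Circuit Type | Series RLC | Parallel RLC |
|---|---|---|
| Damping Factor | $\alpha = \frac{R}{2L}$ | $\alpha = \frac{1}{2RC}$ |
| Resonance Frequency | $\omega_0 = \frac{1}{\sqrt{LC}}$ | $\omega_0 = \frac{1}{\sqrt{LC}}$ |

Comparing the damping factor with the resonance frequency gives rise to three types of circuit: Overdamped ($\alpha > \omega_0$), Underdamped ($\alpha < \omega_0$), and Critically Damped ($\alpha = \omega_0$).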

Bandwidth

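$$\Delta\omega = 2\alpha$$

For series RLC circuit:

$$\Delta\omega = 2\alpha = \frac{R}{L}$$

For Parallel RLC circuit:

$$\Delta\omega = 2\alpha = \frac{1}{RC}$$

Quality Factor

$$Q = \frac{\omega_0}{\Delta\omega} = \frac{\omega_0}{2\alpha}$$

For Series RLC circuit:

$$Q = \frac{1}{R}\sqrt{\frac{L}{C}}$$

For Parallel RLC circuit:

$$Q = R\sqrt{\frac{C}{L}}$$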
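The quantities in this section can be collected in a short Python sketch. It assumes the standard definitions $\alpha = R/2L$ (series) or $\alpha = 1/2RC$ (parallel), $\omega_0 = 1/\sqrt{LC}$, $\Delta\omega = 2\alpha$ and $Q = \omega_0/\Delta\omega$, and it requires $R > 0$ so that $\alpha$ is non-zero. The function names and component values are illustrative.

```python
import math


def series_rlc(r, l, c):
    """Return (alpha, omega0) for a series RLC circuit."""
    return r / (2.0 * l), 1.0 / math.sqrt(l * c)


def parallel_rlc(r, l, c):
    """Return (alpha, omega0) for a parallel RLC circuit."""
    return 1.0 / (2.0 * r * c), 1.0 / math.sqrt(l * c)


def describe(alpha, omega0):
    """Bandwidth, quality factor and damping type from alpha and omega0."""
    bandwidth = 2.0 * alpha          # rad/s
    quality = omega0 / bandwidth     # dimensionless; assumes alpha > 0
    if math.isclose(alpha, omega0, rel_tol=1e-9):
        kind = "critically damped"
    elif alpha > omega0:
        kind = "overdamped"
    else:
        kind = "underdamped"
    return {"bandwidth": bandwidth, "Q": quality, "type": kind}


if __name__ == "__main__":
    r, l, c = 10.0, 1e-3, 1e-6  # example: 10 ohm, 1 mH, 1 uF
    print("series  ", describe(*series_rlc(r, l, c)))
    print("parallel", describe(*parallel_rlc(r, l, c)))
```

With these example values the series circuit is underdamped and the parallel circuit is overdamped: the same resistor damps the two topologies in opposite ways.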

Stability

Because inductors and capacitors act differently to different inputs, there is some potential for the circuit response to approach infinity when subjected to certain types and amplitudes of inputs. When the output of a circuit approaches infinity, the circuit is said to be unstable. Unstable circuits can actually be dangerous, as unstable elements overheat, and potentially rupture.

A circuit is considered to be stable when a "well-behaved" input produces a "well-behaved" output response. We use the term "Well-Behaved" differently for each application, but generally, we mean "Well-Behaved" to mean a finite and controllable quantity.

Resonance

With R = 0

When R = 0, the circuit reduces to a series LC circuit. When the circuit is in resonance, it oscillates at the resonant frequency $\omega_0 = \frac{1}{\sqrt{LC}}$.

The circuit oscillates and may produce a standing wave, depending on the frequency of the driver, the wavelength of the oscillating wave and the geometry of the circuit.

With R ≠ 0

When R ≠ 0 and the circuit operates at resonance:

- The frequency-dependent reactances of L and C cancel out, i.e. $X_L - X_C = \omega L - \frac{1}{\omega C} = 0$, so that the total impedance of the circuit is $Z = R$

- The current of the circuit is $I = \frac{V}{R}$

- The operating frequency is $\omega_0 = \frac{1}{\sqrt{LC}}$

If the current is halved by doubling the value of resistance, then $I = \frac{V}{2R}$, and:

- The circuit will be stable over the range of frequencies from $\omega_1$ to $\omega_2$, the lower and upper edges of the band

The circuit can therefore select a band of frequencies over which it is stable, which makes it well suited to use as a tuned band-select filter.

Once L or C has been used to tune the circuit into resonance at the resonance frequency $\omega_0$, the current is at its maximum value $I = \frac{V}{R}$. If the current is kept above $\frac{V}{2R}$, the circuit responds to a bandwidth narrower than $\omega_2 - \omega_1$. If the current is allowed to fall below $\frac{V}{2R}$, the circuit responds to a bandwidth wider than $\omega_2 - \omega_1$.

Conclusion

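| Circuit | General | Series RLC | Parallel RLC |
|---|---|---|---|
| Impedance | $Z$ | $Z = R + j\omega L + \frac{1}{j\omega C}$ | $\frac{1}{Z} = \frac{1}{R} + \frac{1}{j\omega L} + j\omega C$ |
| Roots | $\lambda = -\alpha \pm \sqrt{\alpha^2 - \omega_0^2}$ | $\lambda = -\frac{R}{2L} \pm \sqrt{\left(\frac{R}{2L}\right)^2 - \frac{1}{LC}}$ | $\lambda = -\frac{1}{2RC} \pm \sqrt{\left(\frac{1}{2RC}\right)^2 - \frac{1}{LC}}$ |
| I(t) | $Ae^{\lambda_1 t} + Be^{\lambda_2 t}$ | $Ae^{\lambda_1 t} + Be^{\lambda_2 t}$ | $Ae^{\lambda_1 t} + Be^{\lambda_2 t}$ |
| Damping Factor | $\alpha$ | $\frac{R}{2L}$ | $\frac{1}{2RC}$ |
| Resonant Frequency | $\omega_0$ | $\frac{1}{\sqrt{LC}}$ | $\frac{1}{\sqrt{LC}}$ |
| Band Width | $\Delta\omega = 2\alpha$ | $\frac{R}{L}$ | $\frac{1}{RC}$ |
| Quality factor | $Q = \frac{\omega_0}{\Delta\omega}$ | $\frac{1}{R}\sqrt{\frac{L}{C}}$ | $R\sqrt{\frac{C}{L}}$ |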

The Second-Order Circuit Solution

Second-Order Solution

This page is going to talk about the solutions to a second-order, RLC circuit. The second-order solution is reasonably complicated, and a complete understanding of it will require an understanding of differential equations. This book will not require you to know about differential equations, so we will describe the solutions without showing how to derive them. The derivations may be put into another chapter, eventually.

The aim of this chapter is to develop the complete response of the second-order circuit. There are a number of steps involved in determining the complete response:

- Obtain the differential equations of the circuit

- Determine the resonant frequency and the damping ratio

- Obtain the characteristic equations of the circuit

- Find the roots of the characteristic equation

- Find the natural response

- Find the forced response

- Find the complete response

We will discuss all these steps one at a time.

Finding Differential Equations

A Second-order circuit cannot possibly be solved until we obtain the second-order differential equation that describes the circuit. We will discuss here some of the techniques used for obtaining the second-order differential equation for an RLC Circuit.

- Note

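- Parallel RLC circuits are easier to solve using ordinary differential equations in voltage (the elements share one voltage, and the equation comes from applying Kirchhoff's Current Law at the common node), and Series RLC circuits are easier to solve using ordinary differential equations in current (the elements share one current, and the equation comes from applying Kirchhoff's Voltage Law around the loop).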

The Direct Method

The most direct method for finding the differential equations of a circuit is to perform a nodal analysis, or a mesh current analysis on the circuit, and then solve the equation for the input function. The final equation should contain only derivatives, no integrals.

The Variable Method

If we create two variables, g and h, we can use them to create a second-order differential equation. First, we set g and h to be either inductor currents, capacitor voltages, or both. Next, we create a single first-order differential equation of the form $\frac{dg}{dt} = f(g, h)$. Then, we write another first-order differential equation that has the form:

- $\frac{dh}{dt} = Kg$ or $\frac{1}{K}\frac{dh}{dt} = g$, where K is a constant

Next, we substitute our second equation into our first equation, and we have a second-order equation.

Zero-Input Response

The zero-input response of a circuit is the state of the circuit when there is no forcing function (no current input, and no voltage input). We can set the differential equation as such:

$$\frac{d^2x}{dt^2} + 2\alpha\frac{dx}{dt} + \omega_0^2 x = 0$$

This gives rise to the characteristic equation of the circuit, which is explained below.

Characteristic Equation

The characteristic equation of an RLC circuit is obtained using the "Operator Method" described below, with zero input. The characteristic equation of an RLC circuit (series or parallel) will be:

$$s^2 + 2\alpha s + \omega_0^2 = 0$$

The roots to the characteristic equation are the "solutions" that we are looking for.

Finding the Characteristic Equation

This method of obtaining the characteristic equation requires a little trickery. First, we create an operator s such that:

$$sx = \frac{dx}{dt}$$

Also, we can show higher-order operators as such:

$$s^2 x = \frac{d^2x}{dt^2}$$

Where x is the voltage (in a series circuit) or the current (in a parallel circuit) of the circuit source. We write two first-order differential equations for the inductor currents and/or the capacitor voltages in our circuit. We convert all the differentiations to s, and all the integrations (if any) into (1/s). We can then use Cramer's rule to solve the resulting system.

Solutions

The solutions of the characteristic equation are given in terms of the resonant frequency $\omega_0$ and the damping ratio $\alpha$:

$$s = -\alpha \pm \sqrt{\alpha^2 - \omega_0^2}$$

If either of these two values is used for s in the assumed solution and that solution satisfies the differential equation, then it can be considered a valid solution. We will discuss this more, below.

Damping

The solutions to a circuit are dependent on the type of damping that the circuit exhibits, as determined by the relationship between the damping ratio and the resonant frequency. The different types of damping are Overdamping, Underdamping, and Critical Damping.

Overdamped

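A circuit is called Overdamped when the following condition is true:

$$\alpha > \omega_0$$

In this case, the solutions to the characteristic equation are two distinct, negative real numbers, and are given by the equation:

- $s = -\alpha \pm \sqrt{\alpha^2 - \omega_0^2}$, where $\omega_0 = \frac{1}{\sqrt{LC}}$

In a parallel circuit:

$$\alpha = \frac{1}{2RC}$$

In a series circuit:

$$\alpha = \frac{R}{2L}$$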

Overdamped circuits are characterized as having a very large settling time, and possibly a large steady-state error.

Underdamped

A circuit is called Underdamped when the damping ratio is less than the resonant frequency ($\alpha < \omega_0$).

In this case, the characteristic polynomial's solutions are complex conjugates. This results in oscillations or ringing in the circuit. The solution consists of two conjugate roots:

$$s_1 = -\alpha + j\omega_d$$

and

$$s_2 = -\alpha - j\omega_d$$

where

$$\omega_d = \sqrt{\omega_0^2 - \alpha^2}$$

The solutions are:

$$x(t) = Ae^{(-\alpha + j\omega_d)t} + Be^{(-\alpha - j\omega_d)t}$$

for arbitrary constants A and B. Using Euler's formula, we can simplify the solution as:

$$x(t) = e^{-\alpha t}\left(C\cos\omega_d t + D\sin\omega_d t\right)$$

for arbitrary constants C and D. These solutions are characterized by an exponentially decaying sinusoidal response. The higher the Quality Factor (below), the longer it takes for the oscillations to decay.

Critically Damped

A circuit is called Critically Damped if the damping factor, or the ratio of actual damping to critical damping, is equal to 1:

$$\zeta = \frac{\alpha}{\omega_0} = 1$$

In this case, the solution to the characteristic equation is a double root. The two roots are identical ($s_1 = s_2 = -\alpha$), and the solutions are:

$$x(t) = (A + Bt)\,e^{-\alpha t}$$

for arbitrary constants A and B. Critically damped circuits typically have low overshoot, no oscillations, and quick settling time.

Series RLC

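The differential equation for a simple series circuit with a constant voltage source V, a resistor R, a capacitor C, and an inductor L is:

$$L\frac{di}{dt} + Ri + \frac{1}{C}\int i\,dt = V$$

Differentiating once removes the integral and the constant V. The characteristic equation, then, is as follows:

$$Ls^2 + Rs + \frac{1}{C} = 0$$

With the two roots:

$$s_1 = -\frac{R}{2L} + \sqrt{\left(\frac{R}{2L}\right)^2 - \frac{1}{LC}}$$

and

$$s_2 = -\frac{R}{2L} - \sqrt{\left(\frac{R}{2L}\right)^2 - \frac{1}{LC}}$$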

Parallel RLC

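The differential equation for a parallel RLC circuit with a resistor R, a capacitor C, and an inductor L is as follows:

$$\frac{d^2v}{dt^2} + \frac{1}{RC}\frac{dv}{dt} + \frac{1}{LC}v = 0$$

Where v is the voltage across the circuit. The characteristic equation, then, is as follows:

$$s^2 + \frac{1}{RC}s + \frac{1}{LC} = 0$$

With the two roots:

$$s_1 = -\frac{1}{2RC} + \sqrt{\left(\frac{1}{2RC}\right)^2 - \frac{1}{LC}}$$

and

$$s_2 = -\frac{1}{2RC} - \sqrt{\left(\frac{1}{2RC}\right)^2 - \frac{1}{LC}}$$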
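The roots for both circuit types can be computed with a short Python sketch. It assumes the common form $s = -\alpha \pm \sqrt{\alpha^2 - \omega_0^2}$, with $\alpha = R/2L$ for the series circuit and $\alpha = 1/2RC$ for the parallel circuit. It uses the standard-library `cmath` module so that the underdamped case returns complex roots instead of raising an error. The function names and component values are illustrative.

```python
import cmath


def characteristic_roots(alpha, omega0):
    """Roots of s^2 + 2*alpha*s + omega0^2 = 0, returned as complex numbers."""
    disc = cmath.sqrt(alpha ** 2 - omega0 ** 2)
    return -alpha + disc, -alpha - disc


def series_roots(r, l, c):
    """Series RLC: alpha = R / (2L), omega0 = 1 / sqrt(LC)."""
    return characteristic_roots(r / (2.0 * l), 1.0 / (l * c) ** 0.5)


def parallel_roots(r, l, c):
    """Parallel RLC: alpha = 1 / (2RC), omega0 = 1 / sqrt(LC)."""
    return characteristic_roots(1.0 / (2.0 * r * c), 1.0 / (l * c) ** 0.5)


if __name__ == "__main__":
    print(series_roots(3.0, 1.0, 0.5))   # overdamped example: two real roots
    print(series_roots(2.0, 1.0, 0.2))   # underdamped example: complex pair
```

Real roots come back with a zero (or negligibly small) imaginary part. A non-zero imaginary part signals the underdamped, oscillatory case.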

Circuit Response

Once we have our differential equations, and our characteristic equations, we are ready to assemble the mathematical form of our circuit response. RLC circuits have differential equations in the form:

$$\frac{d^2x}{dt^2} + 2\alpha\frac{dx}{dt} + \omega_0^2 x = f(t)$$

Where f(t) is the forcing function of the RLC circuit.

Natural Response

The natural response of a circuit is the response of a given circuit to zero input (i.e. depending only upon the initial condition values). The natural response of a circuit will be denoted as $x_n(t)$. The natural response of the system must satisfy the unforced differential equation of the circuit:

$$\frac{d^2x_n}{dt^2} + 2\alpha\frac{dx_n}{dt} + \omega_0^2 x_n = 0$$

We remember this equation as being the "zero input response" that we discussed above. We now define the natural response to be an exponential function:

$$x_n(t) = A_1 e^{s_1 t} + A_2 e^{s_2 t}$$

Where $s_1$ and $s_2$ are the roots of the characteristic equation of the circuit. The reason for choosing this specific solution for $x_n$ is based in differential equations theory, and we will just accept it without proof for the time being. We can solve for the constant values by using a system of two equations:

$$x(0) = A_1 + A_2$$

$$\frac{dx}{dt}(0) = s_1 A_1 + s_2 A_2$$

Where x is the voltage (of the elements in a parallel circuit) or the current (through the elements in a series circuit).
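Solving for the two constants is a 2-by-2 linear system, which the sketch below solves in closed form. It assumes a natural response of the form $x_n(t) = A_1 e^{s_1 t} + A_2 e^{s_2 t}$ with distinct roots, and initial conditions $x(0)$ and $\frac{dx}{dt}(0)$. The function names are illustrative.

```python
import cmath


def natural_response_constants(s1, s2, x0, dx0):
    """Solve x(0) = A1 + A2 and x'(0) = s1*A1 + s2*A2 for A1 and A2.

    Only valid for distinct roots. A repeated root needs the
    (A + B t) e^{s t} form instead.
    """
    if s1 == s2:
        raise ValueError("repeated root: use the (A + B t) e^{s t} form")
    a1 = (dx0 - s2 * x0) / (s1 - s2)
    a2 = x0 - a1
    return a1, a2


def natural_response(t, s1, s2, a1, a2):
    """Evaluate A1 e^{s1 t} + A2 e^{s2 t}; the result is a complex number."""
    return a1 * cmath.exp(s1 * t) + a2 * cmath.exp(s2 * t)


if __name__ == "__main__":
    # Example: roots -1 and -2, x(0) = 1, x'(0) = 0.
    a1, a2 = natural_response_constants(-1.0, -2.0, 1.0, 0.0)
    print(a1, a2, natural_response(0.0, -1.0, -2.0, a1, a2).real)
```

For physically meaningful initial conditions the imaginary part of the response is zero, or negligibly small in the complex-root case, so only the real part is of interest.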

Forced Response

The forced response of a circuit is the way the circuit responds to an input forcing function. The Forced response is denoted as xf(t).

Where the forced response must satisfy the forced differential equation:

$$\frac{d^2x_f}{dt^2} + 2\alpha\frac{dx_f}{dt} + \omega_0^2 x_f = f(t)$$

The forced response is based on the input function, so we can't give a general solution to it. However, we can provide a set of solutions for different inputs:

| Input Form | Output Form |
|---|---|
| K (constant) | A (constant) |

Complete Response

The complete response of a circuit is the sum of the forced response and the natural response of the system:

$$x(t) = x_n(t) + x_f(t)$$

Once we have derived the complete response of the circuit, we can say that we have "solved" the circuit, and are finished working.

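When there is no resistance in the circuit, the second-order circuit is an LC circuit and the damping is zero. In a parallel circuit, for example, removing the resistor corresponds to letting R grow without bound, so $\alpha = \frac{1}{2RC} = 0$.

The circuit is therefore in the underdamped case, since $\alpha = 0$ and $\omega_0$ is greater than zero.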

Mutual Inductance

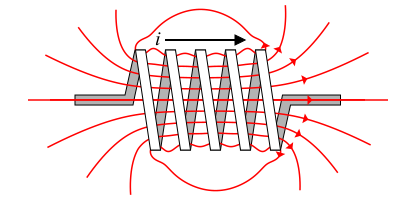

Magnetic Fields

Inductors store energy in the form of a magnetic field. The magnetic field of an inductor actually extends outside of the inductor, and can be affected (or can affect) another inductor close by. The image above shows a magnetic field (red lines) extending around an inductor.

Mutual Inductance

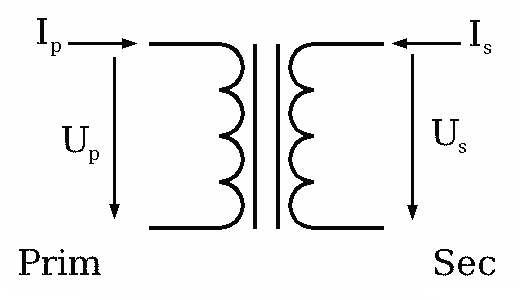

If we accidentally or purposefully put two inductors close together, we can actually transfer voltage and current from one inductor to another. This property is called Mutual Inductance. A device which utilizes mutual inductance to alter the voltage or current output is called a transformer.

The inductor that creates the magnetic field is called the primary coil, and the inductor that picks up the magnetic field is called the secondary coil. Transformers are designed to have the greatest mutual inductance possible by winding both coils on the same core. (In calculations for inductance, we need to know which materials form the path for magnetic flux. Air core coils have low inductance; Cores of iron or other magnetic materials are better 'conductors' of magnetic flux.)

The voltage that appears in the secondary is caused by the change in the shared magnetic field, each time the current through the primary changes. Thus, transformers work on A.C. power, since the voltage and current change continuously.

Ideal Transformers

Modern Inductors

When the coil with $N_p$ turns conducts a current, a magnetic field B exists in the coil. Changes in the magnetic flux $\Phi$ of this field induce a voltage on the turns of both the primary coil ($N_p$ turns) and the secondary coil ($N_s$ turns), as shown:

- $-\xi_p = N_p\frac{d\Phi}{dt}$

- $-\xi_s = N_s\frac{d\Phi}{dt}$

The ratio of $-\xi_p$ over $-\xi_s$ is

- $\frac{-\xi_p}{-\xi_s} = \frac{N_p}{N_s}$

If the input voltage at the coil with $N_p$ turns is $V_i = -\xi_p$, then the output voltage $V_o = -\xi_s$ satisfies

- $\frac{V_o}{V_i} = \frac{-\xi_s}{-\xi_p} = \frac{N_s}{N_p}$

Thus, this device can increase, decrease, or pass on a voltage unchanged just by changing the turns ratio of the coils.

Therefore, the output voltage can be

- Increased (stepped up) by making the number of turns $N_s$ greater than $N_p$

- Decreased (stepped down) by making the number of turns $N_s$ less than $N_p$

- Buffered by setting the number of turns $N_s$ equal to $N_p$
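The turns-ratio relationship can be sketched in a few lines of Python. This assumes an ideal, lossless transformer with $V_o / V_i = N_s / N_p$. The function names and turn counts are illustrative.

```python
def secondary_voltage(v_primary, n_primary, n_secondary):
    """Ideal transformer: V_o = V_i * N_s / N_p."""
    return v_primary * n_secondary / n_primary


def transformer_kind(n_primary, n_secondary):
    """Classify the transformer by comparing the turn counts."""
    if n_secondary > n_primary:
        return "step-up"
    if n_secondary < n_primary:
        return "step-down"
    return "buffer"


if __name__ == "__main__":
    # Example turn counts chosen to give a 5:1 step-down ratio.
    print(secondary_voltage(120.0, 500, 100), transformer_kind(500, 100))
```

With 500 primary turns and 100 secondary turns, a 120 V input gives 24 V at the output.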

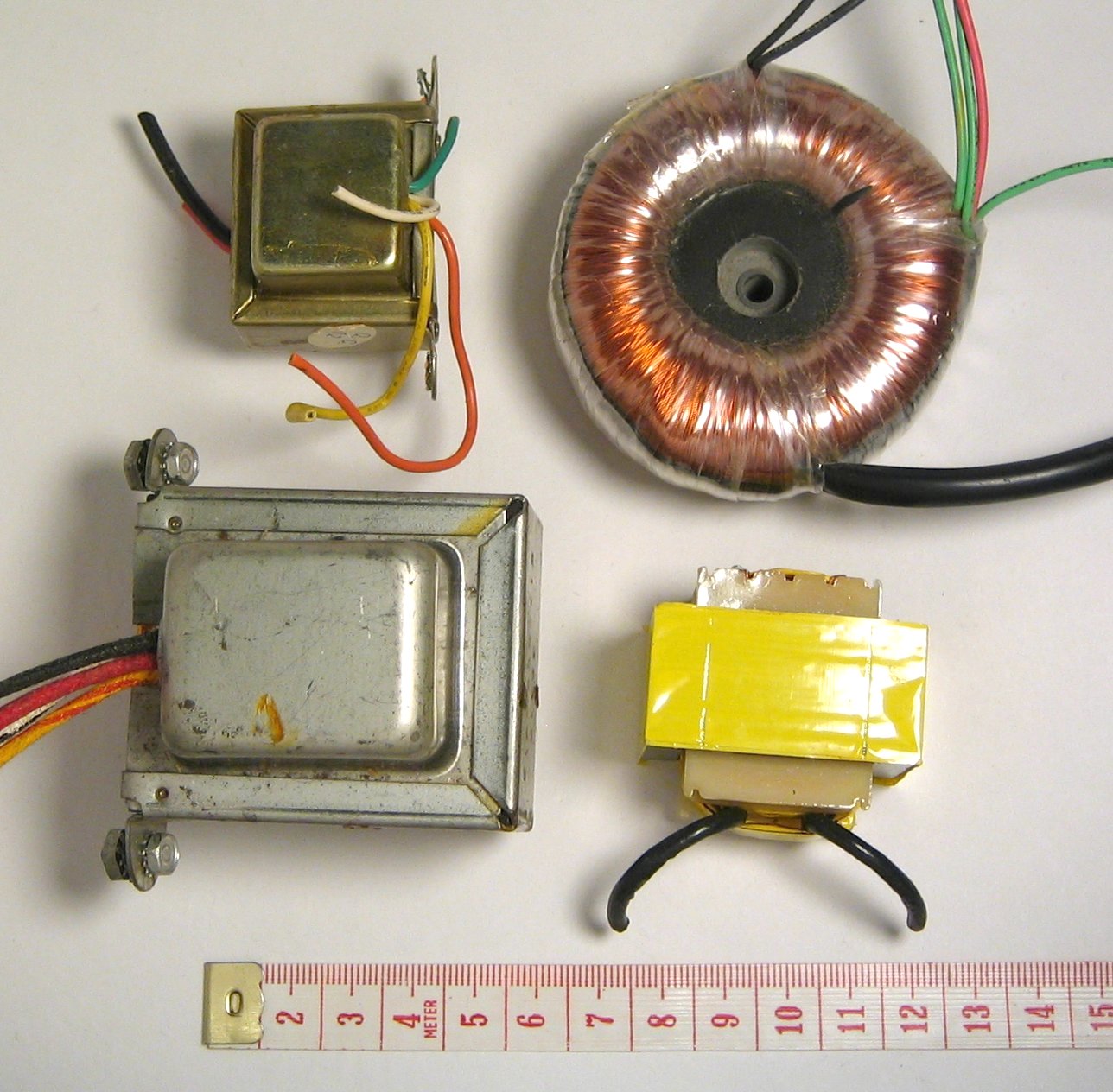

The following photo shows several examples of the construction of inductors and transformers. At the upper right is a toroidal core type (toroid is the mathematical term for a donut shape). This shape very efficiently contains the magnetic flux, so less power (or signal) is lost to heating up the core.

Step Up and Step Down

The terms 'step-up' and 'step-down' are used to compare the secondary (output) voltage to the voltage supplied to the primary.

Many transformers are specially designed to operate exclusively as step-up or step-down. While an ideal transformer could simply be 'turned around', we find that many actual transformers are built to perform best at certain ranges of voltage and current.

For example, a power transformer may be used to step down household AC (about 120 Volts) to 24V for home heating controls, etc. The output current is higher than the primary current in this example, so the transformer is made with a heavier gauge of wire in its secondary windings.

In transformers that deal with very high voltages, special attention is paid to insulation. The windings that deal with thousands of volts must resist arcing and other problems we do not see at home.

Finally, some transformers in electronic equipment are designed for a task known as 'impedance matching', rather than for specific in/out voltages. This function is explained in literature covering audio and radio topics.

State-Variable Approach

New techniques are needed to solve 2nd order and higher circuits:

- Symbolic solutions are so complicated, merely comparing answers is an exercise

- Analytical solution techniques are more fragmented

- The relationship between constants, initial conditions and circuit layout is becoming complicated

A change in strategy is needed if circuit analysis is going to:

- Move beyond the ideal

- Consider more complicated circuits

- Understand limitations/approximations of circuit modeling software

The solution is "State Variables." After a state variable analysis, the exercise of creating symbolic solution can be simplified by eliminating terms that don't have a significant impact on the output.

State Space

The State Space approach to circuit theory abandons the symbolic/analytical approach to circuit analysis. The state variable model involves describing a circuit in matrix form and then solving it numerically using tools like series expansions, Simpson's rule, and Cramer's rule. This was the original starting point of MATLAB.

State

"State" means "condition" or "status" of the energy storage elements of a circuit. Since resistors don't change (ideally) and don't store energy, they don't change the circuit's state. A state is a snap shot in time of the currents and voltages. The goal of "State Space" analysis is to create a notation that describes all possible states.

State Variables

The notation used to describe all states should be as simple as possible. Instead of trying to find a complex, high order differential equation, go back to something like Kirchhoff analysis and just write terminal equations.

State variables are voltages across capacitors and currents through inductors. This means that purely resistive circuit cut sets are collapsed into single resistors that end up in series with an inductor or parallel to capacitor. Rather than using the symbols v and i to represent these unknowns, they are both called x. Kirchhoff's equations are used instead of node or loop equations. Terminal equations are substituted into the Kirchhoff's equations so that remaining resistor's currents and voltages are shared with inductors and capacitors.

State Space Model

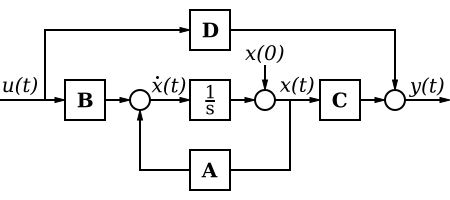

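This State Space Model describes the inputs (step function μ(t), initial conditions X(0)), the output Y(t), and A, B, C and D. A, B, C and D are blocks that combine into the overall transfer function as follows:

$$\frac{Y(s)}{\mu(s)} = C\,(sI - A)^{-1}B + D$$

For a single state variable this reduces to $\frac{CB}{s - A} + D$.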

A control systems class teaches how to build these block diagrams from a desired transfer function. Integrals "remember" or accumulate a history of past states. Derivatives predict future state and both, in addition to the current state can be separately scaled. "A" represents feedback. "D" represents feed forward. There is lots to learn.

Don't try to figure out how a negative sign appeared in the denominator and where the addition term came from. How does the above help us predict voltages and currents in a circuit? Let's start by defining terms and do some examples:

- A is a square matrix representing the circuit components (from Kirchhoff's equations).

- B is a column matrix or vector representing how the source impacts the circuit (from Kirchhoff's equations).

- C is a row matrix or vector representing how the output is computed (could be voltage or current)

- D is a single number that indicates a multiplier of the source .. is usually zero unless the source is directly connected to the output through a resistor.

A and B describe the circuit in general. If X is a column matrix (vector) representing all unknown voltages and currents, then:

$$\frac{dX}{dt} = AX + B\mu$$

Once this equation has been solved, X is known and represents a column of functions of time. The output can be derived from the known X's and the original step function μ using C and D:

$$Y = CX + D\mu$$
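To show what solving the model numerically can look like, here is a minimal Python sketch that integrates $\frac{dX}{dt} = AX + B\mu$ with the forward Euler method for a unit step input. Both the integration method and the example circuit are assumptions made for illustration: a series RLC circuit driven by a step voltage source, with state X = [capacitor voltage, inductor current] and the capacitor voltage as output. The function name `simulate_step` is illustrative.

```python
def simulate_step(a, b, c, d, x0, t_end, dt):
    """Integrate dX/dt = A X + B*mu for a unit step mu(t) = 1.

    a: n x n list of lists, b: length-n list, c: length-n list, d: number.
    Uses forward Euler with step dt and returns the output samples
    Y = C X + D*mu at t = 0, dt, 2*dt, ..., t_end.
    """
    x = list(x0)
    n = len(x)
    mu = 1.0
    outputs = []
    steps = int(round(t_end / dt))
    for _ in range(steps + 1):
        outputs.append(sum(c[i] * x[i] for i in range(n)) + d * mu)
        dx = [sum(a[i][j] * x[j] for j in range(n)) + b[i] * mu
              for i in range(n)]
        x = [x[i] + dt * dx[i] for i in range(n)]
    return outputs


# Assumed example values: R = 3 ohm, L = 1 H, C = 0.5 F.
R, L, C = 3.0, 1.0, 0.5
A = [[0.0, 1.0 / C],        # dv_C/dt = i_L / C
     [-1.0 / L, -R / L]]    # di_L/dt = (mu - v_C - R i_L) / L
B = [0.0, 1.0 / L]
C_row = [1.0, 0.0]          # output is the capacitor voltage
D = 0.0
y = simulate_step(A, B, C_row, D, [0.0, 0.0], t_end=10.0, dt=0.001)

if __name__ == "__main__":
    print(y[1000], y[-1])   # capacitor voltage at t = 1 s and t = 10 s
```

For these values the characteristic roots are -1 and -2, so the capacitor voltage rises without overshoot and settles at the 1 V step level. Forward Euler is the simplest possible choice; a smaller `dt` or a higher-order method gives better accuracy.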

MATLAB Implementation

This would not be a step forward without tools such as MATLAB. These are the relevant MATLAB Control System Toolbox commands:

- step(A,B,C,D) assumes the initial conditions are zero

- initial(A,B,C,D,X(0)) just like step but takes into account the initial conditions X(0)

In addition, there is a Simulink block called "State Space" that can be used the same way.

Further reading

- Control Systems/State-Space Equations

- matlab help links

Sinusoidal Sources

The circuits that we have analyzed previously have been DC, where a constant voltage or current is applied to the circuit. In the following chapters, we will discuss the topic of alternating current (AC), which utilizes sinusoidal forcing functions to stimulate a circuit.

Sinusoidal Sources

Steady State

"Steady State" means that we are not dealing with turning on or turning off circuits in this section. We are assuming that the circuit was turned on a very long time ago and it is behaving in a pattern. We are computing what the pattern will look like. The "complex frequency" section models turning on and off a circuit with an exponential.

Sinusoidal Forcing Functions

Let us consider a general AC forcing function:

$$v(t) = M\cos(\omega t + \phi)$$

In this equation, the term M is called the "Magnitude", and it acts like a scaling factor that allows the peaks of the sinusoid to be higher or lower than +/- 1. The term ω is what is known as the "Radian Frequency". The term φ is an offset parameter known as the "Phase".

Sinusoidal sources can be current sources, but most often they are voltage sources.

Other Terms

There are a few other terms that are going to be used in many of the following sections, so we will introduce them here:

- Period

- The period of a sinusoidal function is the amount of time, in seconds, that the sinusoid takes to make a complete wave. The period of a sinusoid is always denoted with a capital T. This is not to be confused with a lower-case t, which is used as the independent variable for time.

- Frequency

- Frequency is the reciprocal of the period, and is the number of times, per second, that the sinusoid completes an entire cycle. Frequency is measured in Hertz (Hz). The relationship between frequency and the Period is as follows:

$$f = \frac{1}{T}$$

- Where f is the variable most commonly used to express the frequency.

- Radian Frequency

- Radian frequency is the value of the frequency expressed in terms of Radians Per Second, instead of Hertz. Radian Frequency is denoted with the variable $\omega$. The relationship between the Frequency, and the Radian Frequency is as follows:

$$\omega = 2\pi f$$

- Phase

- The phase is a quantity, expressed in radians, of the time shift of a sinusoid. A sinusoid phase-shifted by $2\pi$ radians is moved forward by 1 whole period, and looks exactly the same. An important fact to remember is this:

- $\sin\left(\frac{\pi}{2} - t\right) = \cos(t)$ or $\sin(t) = \cos\left(t - \frac{\pi}{2}\right)$

Phase is often expressed with many different variables, including $\phi$, $\theta$, etc. This wikibook will try to stick with the symbol $\phi$, to prevent confusion.
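The relationships between period, frequency, radian frequency and phase can be checked numerically. The sketch below assumes $f = 1/T$, $\omega = 2\pi f$ and a cosine-form sinusoid $M\cos(\omega t + \phi)$. The function names and sample values are illustrative.

```python
import math


def period_from_frequency(f_hz):
    """T = 1 / f, in seconds."""
    return 1.0 / f_hz


def radian_frequency(f_hz):
    """omega = 2 * pi * f, in radians per second."""
    return 2.0 * math.pi * f_hz


def sinusoid(t, magnitude, omega, phase):
    """M * cos(omega * t + phase)."""
    return magnitude * math.cos(omega * t + phase)


if __name__ == "__main__":
    f = 60.0                        # example frequency in Hz
    T = period_from_frequency(f)
    w = radian_frequency(f)
    print(T, w)
    # Shifting by one whole period leaves the sinusoid unchanged.
    print(sinusoid(0.003, 1.0, w, 0.5), sinusoid(0.003 + T, 1.0, w, 0.5))
    # sin(t) equals cos(t - pi/2).
    print(math.sin(0.7), math.cos(0.7 - math.pi / 2.0))
```

Each printed pair agrees to within floating-point rounding.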

Lead and Lag

A circuit element may have both a voltage across its terminals and a current flowing through it. If one of the two (current or voltage) is a sinusoid, then the other must also be a sinusoid (remember that in an inductor the voltage is proportional to the derivative of the current, in a capacitor the current is proportional to the derivative of the voltage, and the derivative of a sinusoid is always a sinusoid). However, the sinusoids of the voltage and the current may differ in magnitude and phase.

If the current has a lower phase angle than the voltage, the current is said to lag the voltage. If the current has a higher phase angle than the voltage, it is said to lead the voltage. Many circuits can be classified and examined using lag and lead ideas.

Sinusoidal Response

Reactive components (capacitors and inductors) are going to take energy out of a circuit like a resistor and then pump some of it back into the circuit like a source. The result is initially a mess. But after a while (5 time constants), the circuit starts behaving in a pattern. The capacitors and inductors get in a rhythm that reflects the driving sources. If the source is sinusoidal, the currents and voltages will be sinusoidal. This is called the "particular" or "steady state" response. In general, a sinusoidal input produces a steady-state output at the same frequency, but with a different magnitude and phase:

$$M_{in}\cos(\omega t + \phi_{in}) \;\rightarrow\; M_{out}\cos(\omega t + \phi_{out})$$

What happens initially, what happens if the capacitor is initially charged, what happens if sources are switched in and out of a circuit is that there is an energy imbalance. A voltage or current source might be charged by the initial energy in a capacitor. The derivative of the voltage across an Inductor might instantaneously switch polarity. Lots of things are happening. We are going to save this for later. Here we deal with the steady state or "particular" response first.

Sinusoidal Conventions

For the purposes of this book we will generally use cosine functions, as opposed to sine functions. If we absolutely need to use a sine, we can remember the following trigonometric identity:

- $\sin(\omega t) = \cos\left(\omega t - \frac{\pi}{2}\right)$

We can express all sine functions as cosine functions. This way, we don't have to compare apples to oranges. This is simply a convention that this wikibook chooses to use to keep things simple. We could just as easily choose to use all sin( ) functions, but further down the road it is often more convenient to use cosine functions by default.

Sinusoidal Sources

There are two primary sinusoidal sources: wall outlets and oscillators. Oscillators are typically crystals that electrically vibrate and are found in devices that communicate or display video such as TVs, computers, cell phones, and radios. An electrical engineer's or technician's working area will typically include a "function generator" which can produce oscillations at many frequencies and in shapes that are not just sinusoidal.

RMS or Root mean square is a measure of amplitude that compares with DC magnitude in terms of power, strength of motor, brightness of light, etc. The trouble is that there are several types of AC amplitude:

- peak

- peak to peak

- average

- RMS

Wall outlets are called AC or alternating current. Wall outlets are sinusoidal voltage sources that range worldwide from about 100 to 240 volts RMS, at either 50 Hz or 60 Hz. RMS, rather than peak (which makes more sense mathematically), is used to describe magnitude for several reasons:

- historical reasons related to the competition between Edison (DC power) and Tesla (Sinusoidal or AC power)

- effort to compare/relate AC (wall outlets) to DC (cars, batteries): 100 RMS volts delivers approximately the same power to a resistive load as 100 DC volts.

- the average of a sinusoid over a whole period is zero

- meter movements (physical needles moving on measurement devices) were designed to measure both DC and RMS AC

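RMS is a type of average: square the signal, take the mean over one period $T$, then take the square root.

- $V_{RMS} = \sqrt{\frac{1}{T}\int_0^T v(t)^2\,dt}$

For a sinusoid with peak amplitude $V_{peak}$ this works out to $V_{RMS} = V_{peak}/\sqrt{2}$.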
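The idea of RMS as an average can be checked numerically. The sketch below samples one full period of a cosine and compares the ordinary average with the root mean square. The peak value of 170 volts and the sample count are illustrative assumptions, not values taken from this book.

```python
import math

def rms(samples):
    """Root mean square: square each sample, take the mean, then the square root."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))

peak = 170.0     # assumed peak voltage (illustrative only)
n = 10_000       # number of samples spread over exactly one period
wave = [peak * math.cos(2 * math.pi * k / n) for k in range(n)]

v_avg = sum(wave) / n      # plain average of a sinusoid over one period
v_rms = rms(wave)          # RMS value of the same samples
expected = peak / math.sqrt(2)
```

The plain average comes out essentially zero, which is why it is useless as a measure of AC amplitude, while `v_rms` matches the peak divided by the square root of 2.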

Electrical power delivery is a complicated subject that will not be covered in this course. Here we are trying to define terms, design devices that use the power and understand clearly what comes out of wall outlets.

Phasor Representation

Phasors

Variables

Variables are defined the same way as before, but with one difference. Before, variables were either "known" or "unknown." Now there is a sort of in between.

At this point the concept of a constant function (a number) and a variable function (one that varies with time) needs to be reviewed. Knowns are described in terms of functions; unknowns are computed from the knowns and are also functions.

For example:

- $v(t) = M_v\cos(\omega t + \phi_v)$ voltage varying with time

Here $v(t)$ is the symbol for a function. It is assigned a function of the symbols $M_v$, $\omega$, $\phi_v$ and $t$. Typically time is not ever solved for.

Time remains an unknown. Furthermore all power, voltage and current turn into equations of time. Time is not solved for. Because time is everywhere, it can be eliminated from the equations. Integrals and derivatives turn into algebra and the answers can be purely numeric (before time is added back in).

At the last moment, time is put back into voltage, current and power and the final solution is a function of time.

Most of the math in this course has these steps:

- describe knowns and unknowns in the time domain, describe all equations

- change knowns into phasors, eliminate derivatives and integrals in the equations

- solve numerically or symbolically for unknowns in the phasor domain

- transform unknowns back into the time domain
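The four steps above can be sketched for one small circuit. The example assumes a resistor and an inductor in series driven by a cosine voltage source, so the time-domain equation is $v(t) = R\,i(t) + L\,\frac{di}{dt}$. All component values are made up for illustration, and the rule that a derivative becomes multiplication by $j\omega$ is the standard phasor result.

```python
import cmath
import math

# Step 1: knowns and the governing equation in the time domain (assumed values).
R, L = 100.0, 0.1                     # ohms, henries
omega = 1000.0                        # rad/s
Mv, phi_v = 10.0, math.radians(30)    # v(t) = Mv*cos(omega*t + phi_v)
# Governing equation: v(t) = R*i(t) + L*di/dt

# Step 2: change the known into a phasor; d/dt becomes multiplication by j*omega.
V = cmath.rect(Mv, phi_v)

# Step 3: solve with algebra in the phasor domain: V = (R + j*omega*L) * I
I = V / (R + 1j * omega * L)

# Step 4: transform the unknown back: i(t) = Mi*cos(omega*t + phi_i)
Mi, phi_i = cmath.polar(I)

def v(t):
    return Mv * math.cos(omega * t + phi_v)

def i(t):
    return Mi * math.cos(omega * t + phi_i)

def di_dt(t):
    # Analytic derivative of i(t), used only to check the answer.
    return -Mi * omega * math.sin(omega * t + phi_i)

# Residual of the original differential equation at an arbitrary time.
residual = R * i(2.7e-3) + L * di_dt(2.7e-3) - v(2.7e-3)
```

With these values the current has a magnitude of about 0.0707 A and a phase of -15 degrees, so it lags the source by 45 degrees. The residual is zero to within rounding error, which confirms that the phasor answer satisfies the time-domain equation.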

Passive circuit output is similar to input

If the input to a linear circuit is a sinusoid, then the output from the circuit will be a sinusoid. Specifically, if we have a voltage sinusoid as such:

- $v(t) = M_v\cos(\omega t + \phi_v)$

Then the current through the linear circuit will also be a sinusoid, although its magnitude and phase may be different quantities:

- $i(t) = M_i\cos(\omega t + \phi_i)$

Note that both the voltage and the current are sinusoids with the same radial frequency, but different magnitudes, and different phase angles. Passive circuit elements cannot change the frequency of a sinusoid, only the magnitude and the phase. Why then do we need to write $\omega$ in every equation, when it doesn't change? For that matter, why do we need to write out the cos( ) function, if that never changes either? The answer to these questions is that we don't need to write these things every time. Instead, engineers have produced a shorthand way of writing these functions, called "phasors".

Phasor Transform

Phasors are a type of "transform." We are transforming the circuit math so that time disappears. Imagine going to a place where time doesn't exist.

We know that almost any function of practical interest can be written as a series of sine waves of various frequencies and magnitudes added together. (Look up Fourier transform animation.) In that sense the entire world can be constructed from sine waves. Because a sine wave repeats, it doesn't matter at what particular time you look at it; all that matters is where you look relative to the beginning of the period. Here, one sine wave is looked at, and the repeating nature ($\omega t$) is stripped away. What's left is a phasor. A sinusoid in time traces out a circle, so if we consider just one of these circles, we can move to a world where time doesn't exist and circles are "things". Instead of the word "world", use the word "domain" or "plane" as in two dimensions.

Math in the Phasor domain is almost the same as DC circuit analysis. This is handy, because it means that you don't have to solve differential equations every time you want to solve a circuit. What is different is that inductors and capacitors have an impact that needs to be accounted for.

The transform into the phasor plane or domain, and the transform back into time, are based upon Euler's equation. It is the reason you studied imaginary numbers in past math classes.

Euler's Equation

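Euler started at these three series. Obviously there is a relationship:

- $e^x = 1 + x + \frac{x^2}{2!} + \frac{x^3}{3!} + \frac{x^4}{4!} + \cdots$

- $\sin x = x - \frac{x^3}{3!} + \frac{x^5}{5!} - \cdots$

- $\cos x = 1 - \frac{x^2}{2!} + \frac{x^4}{4!} - \cdots$

He did the following, substituting $jx$ for $x$ in the first series (with $j = \sqrt{-1}$):

- $e^{jx} = 1 + jx - \frac{x^2}{2!} - j\frac{x^3}{3!} + \frac{x^4}{4!} + \cdots = \cos x + j\sin x$

Set x = π and:

- $e^{j\pi} + 1 = 0$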

Euler's formula is ubiquitous in mathematics, physics, and engineering. The physicist Richard Feynman called the equation "our jewel" and "one of the most remarkable, almost astounding, formulas in all of mathematics."

A more general version of Euler's equation is:

- $M e^{j(\omega t + \phi)} = M\cos(\omega t + \phi) + jM\sin(\omega t + \phi)$

This equation allows us to view sinusoids as complex exponential functions. A cyclic function represented as a voltage, current or power given in terms of radial frequency and phase angle turns into an arrow having length (magnitude) and angle (phase) in the phasor domain/plane, or a point having both a real ($X$) and imaginary ($Y$) coordinate in the complex domain/plane.
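Euler's equation is easy to check numerically with Python's built-in complex numbers. The sketch below compares the complex exponential with the cosine plus $j$ sine form, sums the first 20 terms of the exponential series, and evaluates the special case x = π. The angle 0.75 radians is an arbitrary choice for illustration.

```python
import cmath
import math

theta = 0.75  # arbitrary angle in radians (illustrative)

lhs = cmath.exp(1j * theta)                       # e^(j*theta)
rhs = complex(math.cos(theta), math.sin(theta))   # cos(theta) + j*sin(theta)

# Partial sum of the exponential series with j*theta substituted for x.
series = sum((1j * theta) ** n / math.factorial(n) for n in range(20))

# Special case x = pi: e^(j*pi) + 1 should be zero up to rounding error.
identity_residual = cmath.exp(1j * math.pi) + 1
```

All three quantities agree to within floating point rounding, and `identity_residual` is on the order of 1e-16 rather than exactly zero because π cannot be represented exactly.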

Generically, the phasor $\mathbb{C}$ (which could be voltage, current or power) can be written:

- $\mathbb{C} = X + jY$ (rectangular coordinates)

- $\mathbb{C} = M\angle\phi$ (polar coordinates)

We can graph the point (X, Y) on the complex plane and draw an arrow to it showing the relationship between $X$, $Y$, $M$ and $\phi$.

Using this fact, we can get the angle from the origin of the complex plane to our point (X, Y) with the function:

[Angle equation] $\phi = \arctan\left(\frac{Y}{X}\right)$ (add 180 degrees when $X$ is negative)

And using the Pythagorean theorem, we can find the magnitude of $\mathbb{C}$, the distance from the origin to the point (X, Y), as:

[Pythagorean Theorem] $M = |\mathbb{C}| = \sqrt{X^2 + Y^2}$
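As a small illustration of the rectangular and polar forms, the sketch below converts one point both ways using Python's standard `math` and `cmath` modules. The point (-3, 4) is an arbitrary example chosen because it sits in the second quadrant, where a plain arctangent of Y/X gives the wrong angle.

```python
import cmath
import math

C = complex(-3.0, 4.0)    # X = -3, Y = 4 (illustrative point, second quadrant)

M = abs(C)                            # magnitude: sqrt(X**2 + Y**2)
phi = math.atan2(C.imag, C.real)      # quadrant-aware angle in radians
naive = math.atan(C.imag / C.real)    # plain arctan(Y/X) loses the quadrant

back = cmath.rect(M, phi)             # polar form converted back to X + jY
```

Here `M` is 5 and `phi` is about 126.87 degrees, while the naive arctangent reports about -53.13 degrees, which is off by 180 degrees. Using `atan2` (or `cmath.polar`) avoids having to correct the quadrant by hand.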

Phasor Symbols

Suppose in the time domain:

- $v(t) = M_v\cos(\omega t + \phi_v)$

In the phasor domain, this voltage is expressed like this:

- $\mathbb{V} = M_v\angle\phi_v$

The radial velocity $\omega$ disappears from known functions (not from the derivative and integral operations) and reappears in the time expression for the unknowns.

Not a Vector?

Some argue that phasors are vectors. Be careful. A phasor diagram is not as rich as vector space or field space in inspiration, math or concepts. A phasor is just a number, in general a complex number. The phasor diagram is used to "explain" what an imaginary number is. Phasors can be divided, multiplied, added, and subtracted. They are one-dimensional things.

The math of phasors is exactly the same as ordinary math, except with imaginary numbers. Vectors demand new mathematical operations such as the dot product and the cross product. For example, can North be divided by East or subtracted from West?

For more details see http://en.wikipedia.org/wiki/Phasor_(electronics) or read about this controversy at https://www.quora.com/What-is-difference-b-w-phasor-diagram-and-vector-diagram

- The dot product of vectors finds the shadow of one vector on another.

- The cross product of vectors combines vectors into a third vector perpendicular to both.

Others counter that these products do apply to phasors: the phasors for the AC currents in a motor's stator and rotor combine to create a torque, and this can be expressed exactly with vector products.

Cosine Convention

It is important to remember which trigonometric function your phasors are mapping to. Since a phasor only includes information on magnitude and phase angle, it is impossible to know whether a given phasor maps to a sin( ) function, or a cos( ) function instead. By convention, this wikibook and most electronic texts/documentation map to the cosine function.

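If you end up with an answer that is sin, convert to cos by subtracting 90 degrees:

- $\sin(\omega t + \phi) = \cos(\omega t + \phi - 90^\circ)$

If your simulator requires the source to be in sin form, but the starting point is cos, then convert to sin by adding 90 degrees:

- $\cos(\omega t + \phi) = \sin(\omega t + \phi + 90^\circ)$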
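Both conversions can be confirmed numerically. The sketch below evaluates each form at a handful of times and records the largest disagreement. The 60 Hz frequency and the 25 degree phase are arbitrary illustrative values.

```python
import math

omega = 2 * math.pi * 60       # rad/s (illustrative)
phi = math.radians(25)         # phase angle (illustrative)

def as_sin(t):
    return math.sin(omega * t + phi)

def sin_written_as_cos(t):
    # Subtract 90 degrees to express a sine as a cosine.
    return math.cos(omega * t + phi - math.pi / 2)

def as_cos(t):
    return math.cos(omega * t + phi)

def cos_written_as_sin(t):
    # Add 90 degrees to express a cosine as a sine.
    return math.sin(omega * t + phi + math.pi / 2)

times = [k * 1e-3 for k in range(20)]
max_err = max(
    max(abs(as_sin(t) - sin_written_as_cos(t)),
        abs(as_cos(t) - cos_written_as_sin(t)))
    for t in times
)
```

The value of `max_err` is at the level of floating point rounding, so the two forms are interchangeable as long as the 90 degree shift is applied in the right direction.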

Phasor Concepts

Inside the phasor domain, concepts appear and are named. Inductors and capacitors can be coupled with their derivative operator transforms and appear as imaginary resistors called "reactance." The combination of resistance and reactance is called "impedance." Impedance can be treated algebraically as a phasor although technically it is not. Power concepts such as real, reactive, apparent and power factor appear in the phasor domain. Numeric math can be done in the phasor domain. Symbols can be manipulated in the phasor domain.

Phasor Math

The Appendix

Phasor math turns into the imaginary number math which is reviewed below.

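Phasor A can be multiplied by phasor B:

[Phasor Multiplication] $\mathbb{A} \times \mathbb{B} = (M_a \times M_b)\angle(\phi_a + \phi_b)$

The phase angles add because in exponential form ($M e^{j\phi}$) they are exponents of two things multiplied together.

Phasor A can be divided by phasor B:

[Phasor Division] $\frac{\mathbb{A}}{\mathbb{B}} = \left(\frac{M_a}{M_b}\right)\angle(\phi_a - \phi_b)$

Again the phase angles are treated like exponents, so they subtract.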

The magnitude and angle form of phasors cannot be used for addition and subtraction. For this, we need to convert the phasors into rectangular notation.

Here is how to convert from polar form (magnitude and angle) to rectangular form (real and imaginary):

- $X = M\cos(\phi)$, $Y = M\sin(\phi)$

Once in rectangular form:

- Real parts add or subtract

- Imaginary parts add or subtract

[Phasor Addition] $\mathbb{A} + \mathbb{B} = (X_a + X_b) + j(Y_a + Y_b)$

Here is how to convert from rectangular form to polar form:

- $M = \sqrt{X^2 + Y^2}$, $\phi = \arctan\left(\frac{Y}{X}\right)$

Once in polar phasor form, conversion back into the time domain is easy:

- $M\angle\phi \Rightarrow M\cos(\omega t + \phi)$
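The phasor math rules can be tried out with Python complex numbers, which store the rectangular form internally. The helper names `phasor` and `to_polar` are hypothetical conveniences, and the two example phasors are arbitrary.

```python
import cmath
import math

def phasor(mag, deg):
    """Build a complex number from a magnitude and an angle in degrees."""
    return cmath.rect(mag, math.radians(deg))

def to_polar(c):
    """Return (magnitude, angle in degrees) for a complex number."""
    mag, rad = cmath.polar(c)
    return mag, math.degrees(rad)

A = phasor(10, 30)     # 10 at 30 degrees (illustrative)
B = phasor(2, -15)     # 2 at -15 degrees (illustrative)

product = to_polar(A * B)     # magnitudes multiply, angles add
quotient = to_polar(A / B)    # magnitudes divide, angles subtract
total = A + B                 # real parts add, imaginary parts add
total_polar = to_polar(total)
```

The product comes out as 20 at 15 degrees and the quotient as 5 at 45 degrees, matching the multiplication and division rules. The sum has to go through the rectangular form, which is what complex addition does automatically.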

Function transformation Derivation

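$g(t)$ represents either voltage, current or power.

- $g(t) = G_m\cos(\omega t + \phi)$ starting point

- $g(t) = G_m\operatorname{Re}\left(e^{j(\omega t + \phi)}\right)$ from Euler's Equation

- $g(t) = G_m\operatorname{Re}\left(e^{j\phi}e^{j\omega t}\right)$ law of exponents

- $g(t) = \operatorname{Re}\left(G_m e^{j\phi}e^{j\omega t}\right)$ .... $G_m$ is a real number so it can be moved inside

- $g(t) = \operatorname{Re}\left(\mathbb{G}e^{j\omega t}\right)$ $\mathbb{G}$ is the definition of a phasor, here it is an expression substituting for $G_m e^{j\phi}$

- $g(t) \Leftrightarrow \mathbb{G}$ where $\mathbb{G} = G_m e^{j\phi}$

What happens to the $e^{j\omega t}$ term? It hangs around until it is time to transform back into the time domain. Because it is an exponent, and all the phasor math is algebra associated with exponents, the final phasor can be multiplied by it. Then the real part of the expression will be the time domain solution.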

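| time domain | transformation | phasor domain |
|---|---|---|
| $A\cos(\omega t)$ | $\Leftrightarrow$ | $A$ |
| $A\sin(\omega t)$ | $\Leftrightarrow$ | $-jA$ |
| $A\cos(\omega t) + B\sin(\omega t)$ | $\Leftrightarrow$ | $A - jB$ |
| $A\cos(\omega t) - B\sin(\omega t)$ | $\Leftrightarrow$ | $A + jB$ |
| $A\cos(\omega t + \phi)$ | $\Leftrightarrow$ | $A\cos(\phi) + jA\sin(\phi)$ |
| $A\sin(\omega t + \phi)$ | $\Leftrightarrow$ | $A\sin(\phi) - jA\cos(\phi)$ |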

In all the cases above, remember that $\phi$ is a constant, a known value in most cases. Thus the phasor is a complex number in most calculations.

There is another transform, associated with derivatives, that is discussed in "phasor calculus."
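The transformation between a time function and its phasor can be tested directly: multiply the phasor by $e^{j\omega t}$ and keep the real part. The sketch below does this for three standard pairs under the cosine convention (a cosine with phase, a plain sine, and a cosine plus a sine). The helper name `to_time` and all numeric values are illustrative assumptions.

```python
import cmath
import math

omega = 1000.0   # rad/s (illustrative)

def to_time(G, t):
    """Multiply the phasor by e^(j*omega*t) and keep the real part."""
    return (G * cmath.exp(1j * omega * t)).real

A, B = 3.0, 2.0
phi = math.radians(40)
t = 1.234e-3     # an arbitrary instant

# Each entry pairs a time-domain value with the phasor that should produce it.
pairs = [
    (A * math.cos(omega * t + phi), cmath.rect(A, phi)),                 # A at angle phi
    (A * math.sin(omega * t), -1j * A),                                  # sine maps to -jA
    (A * math.cos(omega * t) + B * math.sin(omega * t), A - 1j * B),     # A - jB
]
errors = [abs(time_value - to_time(G, t)) for time_value, G in pairs]
```

Every entry in `errors` is at rounding level, which shows that the $e^{j\omega t}$ factor really is all that needs to be restored when returning to the time domain.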

Transforming calculus operators into phasors

When sinusoids are represented as phasors, differential equations become algebra. This result follows from the fact that the complex exponential is the eigenfunction of the derivative operation:

- $\frac{d}{dt}\left(e^{j\omega t}\right) = j\omega e^{j\omega t}$

That is, only the complex amplitude is changed by the derivative operation. Taking the real part of both sides of the above equation gives the familiar result:

- $\frac{d}{dt}\cos(\omega t) = -\omega\sin(\omega t)$

Thus, a time derivative of a sinusoid $g(t)$ with phasor $\mathbb{G}$ becomes, when transformed into the phasor domain, algebra:

- $\frac{d}{dt}g(t) \Leftrightarrow j\omega\mathbb{G}$ (j is the square root of -1, the imaginary unit)

In a similar way the time integral, when transformed into the phasor domain, is:

- $\int g(t)\,dt \Leftrightarrow \frac{1}{j\omega}\mathbb{G}$

There is an integration constant that will have to be dealt with when translating back into the time domain. It doesn't disappear.
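The derivative and integral rules can be checked against ordinary calculus. The sketch below multiplies a phasor by $j\omega$, transforms the result back to the time domain, and compares it with a numerical derivative. It does the same for the integral with the integration constant taken as zero, which is an assumption of this sketch. The frequency, magnitude and phase are illustrative.

```python
import cmath
import math

omega = 500.0                             # rad/s (illustrative)
M, phi = 4.0, math.radians(20)
G = cmath.rect(M, phi)                    # phasor of g(t) = M*cos(omega*t + phi)

def to_time(P, t):
    """Multiply the phasor by e^(j*omega*t) and keep the real part."""
    return (P * cmath.exp(1j * omega * t)).real

dG = 1j * omega * G      # phasor of dg/dt
iG = G / (1j * omega)    # phasor of the integral of g (constant assumed zero)

t, h = 2.0e-3, 1e-7
# Central difference approximation of dg/dt in the time domain.
numeric_derivative = (to_time(G, t + h) - to_time(G, t - h)) / (2 * h)
# Closed form integral of M*cos(omega*t + phi) with zero constant.
analytic_integral = (M / omega) * math.sin(omega * t + phi)
```

`to_time(dG, t)` agrees with `numeric_derivative` to within the accuracy of the finite difference, and `to_time(iG, t)` matches `analytic_integral`. Any nonzero integration constant would have to be added separately after returning to the time domain.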

The above is true of voltage, current, and power.

The question is why does this work? Where is the proof? Let's do this three times: once for a resistor, then an inductor, then a capacitor. The symbols for the current through and the voltage across the terminals are: $i(t) = M_i\cos(\omega t + \phi_i)$ and $v(t) = M_v\cos(\omega t + \phi_v)$

Resistor Terminal Equation

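- $v(t) = R\,i(t)$ . terminal relationship

- $M_v\cos(\omega t + \phi_v) = R\,M_i\cos(\omega t + \phi_i)$ .. substituting example functions

- $\operatorname{Re}\left(M_v e^{j(\omega t + \phi_v)}\right) = R\operatorname{Re}\left(M_i e^{j(\omega t + \phi_i)}\right)$ .. Euler's version of the terminal relationship

- $\operatorname{Re}\left(M_v e^{j\phi_v}e^{j\omega t}\right) = \operatorname{Re}\left(R\,M_i e^{j\phi_i}e^{j\omega t}\right)$ .. law of exponents

- $M_v e^{j\phi_v} = R\,M_i e^{j\phi_i}$ .. do the same thing to both sides of the equal sign (the equality holds for all $t$, so the $e^{j\omega t}$ factor can be divided out)

- $M_v = R\,M_i$, $\phi_v = \phi_i$ .. time domain result

- $\mathbb{V} = R\,\mathbb{I}$ .. phasor expression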